Posted by Mike Bifulco, Developer Relations Engineer

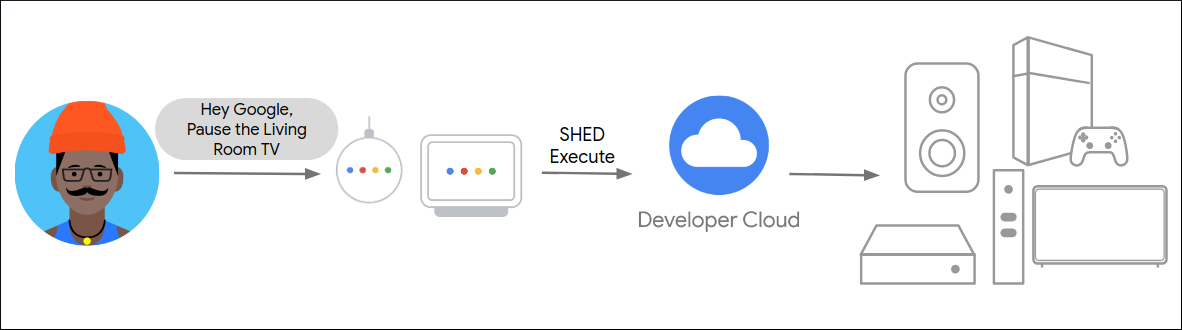

Every day, millions of users ask Google Assistant for help with the things that matter to them: managing a connected home, setting reminders and timers, adding to their shopping list, communicating with friends and family, and countless other imaginative uses. Developers use Assistant APIs and tools to add voice interactivity to their apps for everything from building games, to ordering food, to listening to the news, and much more.

The Google Assistant Developer Relations team works with our community and our engineering teams to help developers build, integrate, and innovate with voice-driven technology on the Assistant platform. We help developers build Conversational Actions, Smart Home hardware and tools, and App Actions integrations with Android. As we continue our mission to bring accessible voice technology to Android devices, smart speakers and screens, we’re excited to announce that we are hiring for several roles!

What Assistant DevRel does

In Developer Relations (DevRel), we wear many hats: our developer ecosystem stretches across several Google products, and we work with our community wherever we can. Our team consists of engineers, technical writers, and content producers who help developers build with Assistant while providing active feedback and validation to the engineering teams to make Google Assistant even better. These are just some of the ways we do our work:

Google I/O and other conferences

Google I/O is Google’s annual developer conference, where Googlers from across the company share the latest product releases, insights from Google experts, and hands-on learning. The Assistant DevRel team is heavily involved in I/O, writing, producing, and delivering a variety of content, including keynotes, technical talks, hands-on workshops, codelabs, and technical demos. We also meet and talk to developers who are building cool things with Assistant.

We also participate in a variety of other conferences, and while most have been virtual for the past year or so, we’re looking forward to traveling to places near and far to deliver technical content to the global community.

Our team members contribute to the creation and presentation of content at events like Google I/O.

Google Developers YouTube channel

One of the best ways to get our content out to the world is via YouTube. Members of our team make frequent appearances on the Google Developers channel, producing segments and episodes for The Developer Show, Assistant On Air, and AoG Pro Tips, as well as tutorials on new features and developer tools.

Open Source Projects

Another exciting part of our work is the creation and maintenance of Open Source libraries used as samples, demos, and starter kits for devs working with Assistant. As a part of the team, you’ll contribute to projects in GitHub organizations including github.com/actions-on-google and github.com/actions-on-google-labs, as well as projects and libraries created outside of Google.

Developer Platform Tools

The Assistant DevRel team also helps build and maintain the Assistant Developer Platform: we contribute to the tools, policies, and features that allow developers to distribute their Assistant apps to Android devices, smart screens, and speakers. This engineering work is a truly unique opportunity to shape the future of a growing developer platform and to support the future of voice-driven and multi-modal technology, all built from the ground up.

Open positions on our team

Our team is headquartered in Mountain View, California, US. If contributing to the next generation of Google Assistant excites you, read below about our openings to find out more.

Developer Relations Engineer

Location: Mountain View, CA, New York, NY, Seattle, WA, or Austin, TX

As a Developer Relations Engineer (or DRE), you’ll work to build developer tools, code samples, and demos for Google Assistant. You’ll work with our community to educate and support developers using our APIs to build their software. You will also be the 0th customer for new features on Assistant: testing, verifying, and giving active feedback to the PM, UX, and Engineering teams that make Assistant come to life. You’ll work with Google Developer Experts to build and scale content to be shared at conferences, events, and hackathons. DREs may also occasionally contribute to blog posts, help write and produce scripts for educational videos on YouTube, and speak at events like conferences, Google Developer Groups, and meetups. Candidates should have experience building native Android apps with Java or Kotlin; experience creating web applications with HTML, JavaScript, and CSS is a plus.

Sound interesting? Learn more and apply to be a Developer Relations Engineer.

Developer Relations Engineering Manager

Location: Mountain View, CA, New York, NY, Seattle, WA, or Austin, TX

Developer Relations Engineering Managers help coordinate and direct teams of engineers to build and update developer tools, APIs, reference documentation, and code samples. As an Engineering Manager, you’ll work with leadership across the company to prioritize new features, goals, and programs for developer relations within Assistant. You’ll manage a variety of roles, including Developer Relations Engineers, Program Managers, and Technical Writers. You’ll be asked to work across a variety of technologies, with a strong focus on building tools and libraries for Android.

Sound interesting? Learn more and apply to be a Developer Relations Engineering Manager.

Thanks for reading! To share your thoughts or questions, join us on Reddit at r/GoogleAssistantDev.

Follow @ActionsOnGoogle on Twitter for more of our team's updates, and tweet using #AoGDevs to share what you’re working on. Can’t wait to see what you build!