Tag Archives: Google Assistant

Changes we’re making to Google Assistant

Google Assistant will no longer support a number of underutilized features to focus on improving quality and reliability.

Source: The Official Google Blog

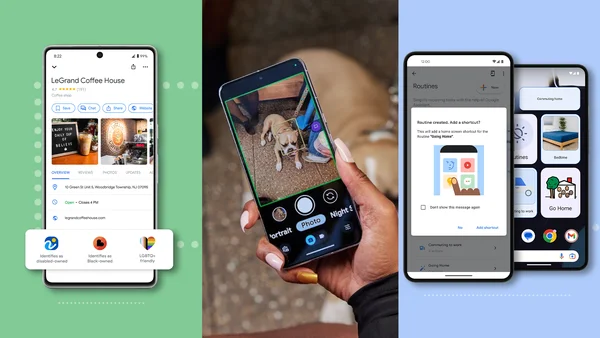

8 ways we’re making daily tasks more accessible

Today, we’re launching new products and features aimed at making daily tasks more accessible for people with disabilities.

Source: Google LatLong

Assistant with Bard: A step toward a more personal assistant

We’re introducing Assistant with Bard, a personal assistant powered by generative AI.

Source: The Official Google Blog

Talk to Google Assistant and Alexa on new JBL Authentics speakers

Set up and use both Google Assistant and Alexa on Harman’s new line of speakers.

Source: The Official Google Blog

8 ways Google tools help me care for my pets

Use Google tools like Maps, Docs and Photos to make caring for your pet easier.

Source: Google LatLong

3 tips to make Google Assistant your own

Google Assistant provides hands-free help throughout the day, tailored to your needs. Here are three tips to personalize your Assistant.

Source: The Official Google Blog

7 ways Google products can help you celebrate the Asian community

How you can use Google products to celebrate Asian and Pacific Islander culture for Asian Pacific American Heritage Month.

Source: The Official Google Blog

7 ways Google products honor the Asian community

Learn more about the ways you can celebrate Asian American and Pacific Islander Heritage Month with Google products and services…

Source: The Official Google Blog

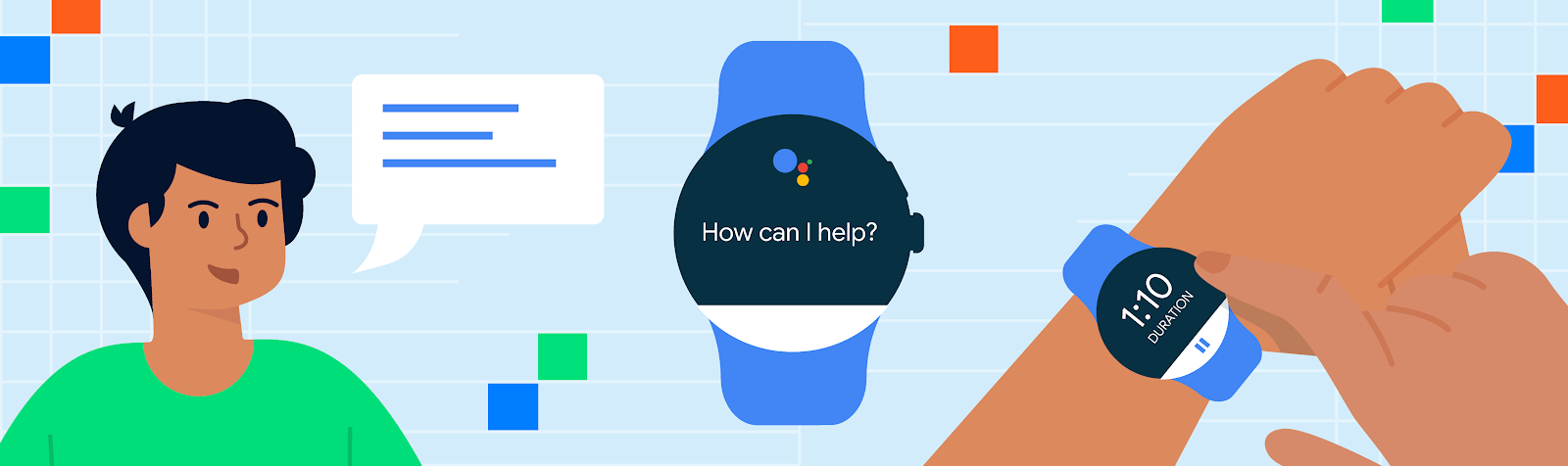

Voice controlled workouts with Google Assistant

The adidas Running app has tens of millions of installs, and users turn to it every day as part of their health and fitness routine. Like many in the industry, adidas recognized that in this ever-evolving market it's important to make it as easy as possible for users to achieve their fitness goals, so making the app available on Wear OS was a natural fit. adidas didn’t stop at bringing its running app to the watch, however. The team also realized that during a workout, being able to use the app hands-free, or even eyes-free, makes it simpler still for users to engage with it.

Integrating Google Assistant

To enable hands-free control, adidas looked to Google Assistant and App Actions, which let users control apps with their voice using built-in intents (BIIs). Users can perform specific tasks by voice, such as starting a run or a swim.

Integrating Health and Fitness BIIs was a simple addition: adidas’ staff Android developer declared `<capability>` tags in the app's `shortcuts.xml` file from the IDE, creating a consistent experience between the mobile app and the watch surface. The process looks like this:

- First, Assistant parses the user’s request using natural language understanding and identifies the appropriate BII. For example, START_EXERCISE to begin a workout.

- Second, Assistant fulfills the user’s intent by launching the application to the specified content or action. Besides START_EXERCISE, users can also stop (STOP_EXERCISE), pause (PAUSE_EXERCISE), or resume (RESUME_EXERCISE) their workouts. Haptic feedback or dings can also be added here to signal whether a user request succeeded.
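As a rough illustration of the capability declarations described above, a `shortcuts.xml` file might look something like the sketch below. This is not adidas' actual file: the package name `com.example.fitness`, the activity class `ExerciseActivity`, and the `exerciseType` extra key are hypothetical placeholders, and mapping the `exercise.name` BII parameter is an assumption about what an app might choose to receive.

```xml
<?xml version="1.0" encoding="utf-8"?>
<!-- Sketch only: package, class, and key names below are placeholders. -->
<shortcuts xmlns:android="http://schemas.android.com/apk/res/android">

  <!-- Matched when the user asks Assistant to begin a workout. -->
  <capability android:name="actions.intent.START_EXERCISE">
    <intent
      android:action="android.intent.action.VIEW"
      android:targetPackage="com.example.fitness"
      android:targetClass="com.example.fitness.ExerciseActivity">
      <!-- Assumed mapping: pass the spoken exercise name to the
           activity as an intent extra keyed "exerciseType". -->
      <parameter
        android:name="exercise.name"
        android:key="exerciseType" />
    </intent>
  </capability>

  <!-- Matched when the user asks Assistant to end the workout. -->
  <capability android:name="actions.intent.STOP_EXERCISE">
    <intent
      android:action="android.intent.action.VIEW"
      android:targetPackage="com.example.fitness"
      android:targetClass="com.example.fitness.ExerciseActivity" />
  </capability>

</shortcuts>
```

PAUSE_EXERCISE and RESUME_EXERCISE would presumably be declared the same way, each as its own `<capability>` element pointing at whichever activity handles that action.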

Because App Actions is built on Android, the development team was able to deploy quickly. Paired with the Health Services and Health Connect APIs, which respectively support real-time sensor data and health data, the integration gives end users a cohesive and secure experience across mobile and Wear OS devices.

“What’s exciting about Assistant and Wear is that the combination really helps our users reach their fitness goals. The ability for a user to leverage their voice to track their workout makes for a unique and very accessible experience,” says Robert Hellwagner, Director of Product Innovation for adidas Runtastic. “We are excited by the possibility of what can be done by enabling voice based interactions and experiences for our users through App Actions.”

Learn more

Enabling voice controls to unlock hands-free and eyes-free contexts is an easy way to create a more seamless app experience for your users. To bring natural, conversational interactions to your app, read our documentation today, explore how to build with one of our codelabs, or subscribe to our App Actions YouTube playlist for more information. You can also sign up to develop for Android Health Connect if you are interested in joining our Google Health and Fitness EAP. To jump right into how this integration was built, learn more about integrating Wear OS and App Actions.

Posted by John Richardson, Partner Engineer
