Today, as part of the Compose August ‘23 Bill of Materials, we’re releasing version 1.5 of Jetpack Compose, Android's modern, native UI toolkit that is used by apps such as Play Store, Dropbox, and Airbnb. This release largely focuses on performance improvements, as major parts of our modifier refactor we began in the October ‘22 release are now merged.

Performance

When we first released Compose 1.0 in 2021, we were focused on getting the API surface right to provide a solid foundation to build on. We wanted a powerful and expressive API that was easy to use and stable so that developers could confidently use it in production. As we continue to improve the API, performance is our top priority, and in the August ‘23 release, we have landed many performance improvements.

Modifier performance

Modifiers see large performance improvements, up to 80% improvement to composition time, in this release. The best part is that, thanks to our work getting the API surface right in the first release, most apps will see these benefits just by upgrading to the August ‘23 release.

We have a suite of benchmarks that are used to monitor for regressions and to inform our investments in improving performance. After the initial 1.0 release of Compose, we began focusing on where we could make improvements. The benchmarks showed that we were spending more time than anticipated materializing modifiers. Modifiers make up the vast majority of a composition tree and, as such, were the largest contributor to initial composition time in Compose. Refactoring modifiers to a more efficient design began under the hood in the October ‘22 release.

The October ‘22 release included new APIs and performance improvements in our lowest level module, Compose UI. Modifiers build on top of each other so we started migrating our low level modifiers in Compose Foundation in the next release, March ‘23. This included graphicsLayer, low level focus modifiers, padding, and offset. These low level modifiers are used by other highly utilized modifiers such as Clickable, and are also utilized by many framework Composables such as Text. Migrating modifiers in the March ‘23 release brought performance improvements to those components, but the real gains would come when we could migrate the higher level modifiers and composables themselves to the new modifier system.

In the August ‘23 release, we have begun migrating the Clickable modifier to the new modifier system, bringing substantial improvements to composition time, in some cases up to 80%. This is especially relevant in lazy lists that contain clickable elements such as buttons. Modifier.indication, used by Clickable, is still in the process of being migrated, so we anticipate further gains to come in future releases.

As part of this work, we identified a use case for composed modifiers that wasn’t covered in the original refactor and added a new API to create Modifier.Node elements that consume CompositionLocal instances.

We are now working on documentation to guide you through migrating your own modifiers to the new Modifier.Node API. To get started right away, you can reference the samples in our repository.

Learn more about the rationale behind the changes in the Compose Modifiers deep dive talk from Android Dev Summit ‘22.

Memory

This release includes a number of improvements in memory usage. We have taken a hard look at allocations happening across different Compose APIs and have reduced the total allocations in a number of areas, especially in the graphics stack and vector resource loading. This not only reduces the memory footprint of Compose, but also directly improves performance, as we spend less time allocating memory and reduce garbage collection.

In addition, we fixed a memory leak when using ComposeView, which will benefit all apps but especially those that use multi-activity architecture or large amounts of View/Compose interop.

Text

BasicText has moved to a new rendering system backed by the modifier work, which has brought an average of gain of 22% to initial composition time and up to a 70% gain in one benchmark of complex layouts involving text.

A number of Text APIs have also been stabilized, including:

- TextMeasurer and related APIs

- LineHeightStyle.Alignment(topRatio)

- Brush

- DrawStyle

- TextMotion

- DrawScope.drawText

- Paragraph.paint (brush, drawStyle, blendMode)

- MultiParagraph.paint (brush, drawStyle, blendMode)

- PlatformTextInput

Improvements and fixes for core features

We have also shipped new features and improvements in our core APIs as well as stabilizing some APIs:

- LazyStaggeredGrid is now stable.

- Added asComposePaint API to replace toComposePaint as the returned object wraps the original android.graphics.Paint.

- Added IntermediateMeasurePolicy to support lookahead in SubcomposeLayout.

- Added onInterceptKeyBeforeSoftKeyboard modifier to intercept key events before the soft keyboard.

Get started!

We’re grateful for all of the bug reports and feature requests submitted to our issue tracker — they help us to improve Compose and build the APIs you need. Continue providing your feedback, and help us make Compose better!

Wondering what’s next? Check out our roadmap to see the features we’re currently thinking about and working on. We can’t wait to see what you build next!

Happy composing!

Posted by

Posted by

Posted by Anna Bernbaum, Product Manager and

Posted by Anna Bernbaum, Product Manager and

.gif)

Posted by Lin Guo, Software Engineer

Posted by Lin Guo, Software Engineer

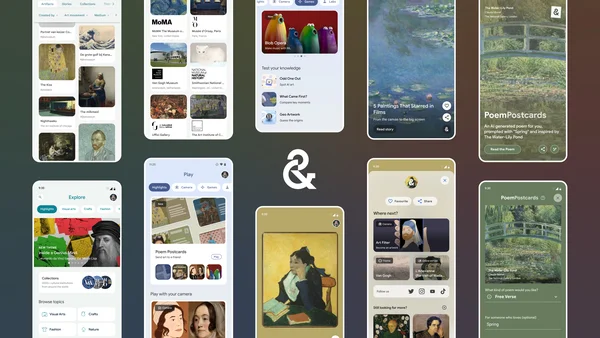

Discover the redesigned Google Arts & Culture app now on Android. Send an AI-generated summer postcard to a friend with Poem Postcards.

Discover the redesigned Google Arts & Culture app now on Android. Send an AI-generated summer postcard to a friend with Poem Postcards.