The Android bot is a beloved mascot for Android users and developers, and previous versions of the bot builder were very popular. This year we decided to rebuild the bot maker from the ground up, using the latest technology backed by Gemini. Today we are releasing a new open source app, Androidify, for learning how to build powerful AI-driven experiences on Android using the latest technologies such as Jetpack Compose, Gemini through Firebase, CameraX, and Navigation 3.

Androidify app demo

Here’s an example of the app running on a device, converting a photo into an Android bot that represents my likeness:

Under the hood

The app combines a variety of different Google technologies, such as:

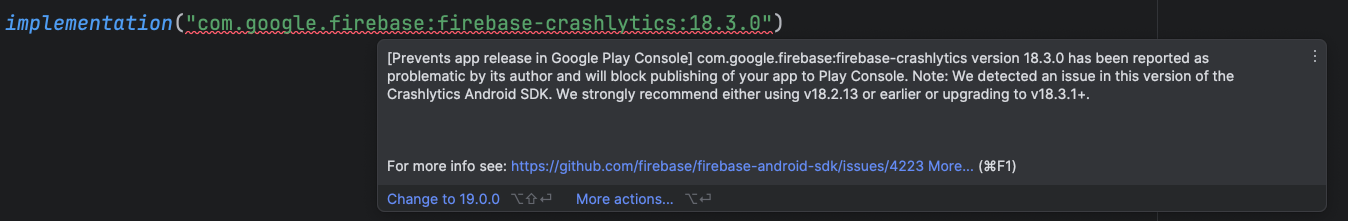

- Gemini API - through Firebase AI Logic SDK, for accessing the underlying Imagen and Gemini models.

- Jetpack Compose - for building the UI with delightful animations and making the app adapt to different screen sizes.

- Navigation 3 - the latest navigation library for building up Navigation graphs with Compose.

- CameraX Compose and Media3 Compose - for building up a custom camera with custom UI controls (rear camera support, zoom support, tap-to-focus) and playing the promotional video.

This sample app is currently using a standard Imagen model, but we've been working on a fine-tuned model that's trained specifically on all of the pieces that make the Android bot cute and fun; we'll share that version later this year. In the meantime, don't be surprised if the sample app puts out some interesting looking examples!

How does the Androidify app work?

The app leverages our best practices for Architecture, Testing, and UI to showcase a real-world, modern AI application on device.

AI in Androidify with Gemini and ML Kit

The Androidify app uses the Gemini models in a multitude of ways to enrich the app experience, all powered by the Firebase AI Logic SDK. The app uses Gemini 2.5 Flash and Imagen 3 under the hood:

- Image validation: We check that the captured image contains sufficient information, such as a clearly focused person, and assess it for safety. This feature uses the multimodal capabilities of the Gemini API by giving it a prompt and an image at the same time:

    val response = generativeModel.generateContent(
        content {
            text(prompt)
            image(image)
        },
    )

- Text prompt validation: If the user opts for text input instead of image, we use Gemini 2.5 Flash to ensure the text contains a sufficiently descriptive prompt to generate a bot.

- Image captioning: Once we’re sure the image has enough information, we use Gemini 2.5 Flash to perform image captioning. We ask Gemini to be as descriptive as possible, focusing on the clothing and its colors.

- “Help me write” feature: Similar to an “I’m feeling lucky” type feature, “Help me write” uses Gemini 2.5 Flash to create a random description of the clothing and hairstyle of a bot.

- Image generation from the generated prompt: As the final step, we pass the prompt and the selected skin tone of the bot to Imagen, which generates the image.
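The steps above can be sketched as a small pipeline. This is a minimal illustration, not the app's actual code: the `BotAi` interface, the `BotInput` and `BotResult` types, and `createBot` are all hypothetical names, and the model calls are shown as plain functions where a real app would make asynchronous calls through the Firebase AI Logic SDK.

```kotlin
/** Hypothetical stand-in for the model calls; names and signatures are assumptions. */
interface BotAi {
    fun validateImage(image: ByteArray): Boolean                 // Gemini: enough detail, and safe?
    fun validatePrompt(text: String): Boolean                    // Gemini: descriptive enough?
    fun describeImage(image: ByteArray): String                  // Gemini: caption clothing and colors
    fun generateBot(prompt: String, skinTone: String): ByteArray // Imagen: render the bot
}

/** The user either takes a photo or types a description. */
sealed interface BotInput {
    class Photo(val bytes: ByteArray) : BotInput
    class Text(val prompt: String) : BotInput
}

sealed interface BotResult {
    class Success(val image: ByteArray) : BotResult
    data class Rejected(val reason: String) : BotResult
}

/** Validate first, turn the input into a text prompt, then generate the image. */
fun createBot(ai: BotAi, input: BotInput, skinTone: String): BotResult {
    val prompt = when (input) {
        is BotInput.Photo -> {
            if (!ai.validateImage(input.bytes)) {
                return BotResult.Rejected("image failed validation")
            }
            ai.describeImage(input.bytes)
        }
        is BotInput.Text -> {
            if (!ai.validatePrompt(input.prompt)) {
                return BotResult.Rejected("prompt not descriptive enough")
            }
            input.prompt
        }
    }
    return BotResult.Success(ai.generateBot(prompt, skinTone))
}
```

Keeping the model calls behind an interface like this also makes the flow easy to exercise in tests with a fake implementation.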

The app also uses ML Kit pose detection to detect a person in the viewfinder. It enables the capture button when a person is detected and adds fun indicators around the content to show the detection.
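As a rough sketch of how a capture button could be gated on pose detection results, the snippet below uses a simplified, hypothetical `Landmark` type rather than ML Kit's own classes. The rule itself (a minimum number of landmarks above a likelihood threshold) and both default values are assumptions chosen for illustration, not the logic the app uses.

```kotlin
/** Hypothetical, simplified landmark: how likely a detected body point is inside the frame (0..1). */
data class Landmark(val name: String, val inFrameLikelihood: Float)

/**
 * Assumed rule, for illustration only: treat a person as detected when at least
 * [minLandmarks] landmarks have an in-frame likelihood of [minLikelihood] or more.
 */
fun isPersonDetected(
    landmarks: List<Landmark>,
    minLikelihood: Float = 0.8f,
    minLandmarks: Int = 3,
): Boolean = landmarks.count { it.inFrameLikelihood >= minLikelihood } >= minLandmarks
```

The result of a check like this could then drive the `enabled` state of the capture button.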

Explore more detailed information about AI usage in Androidify.

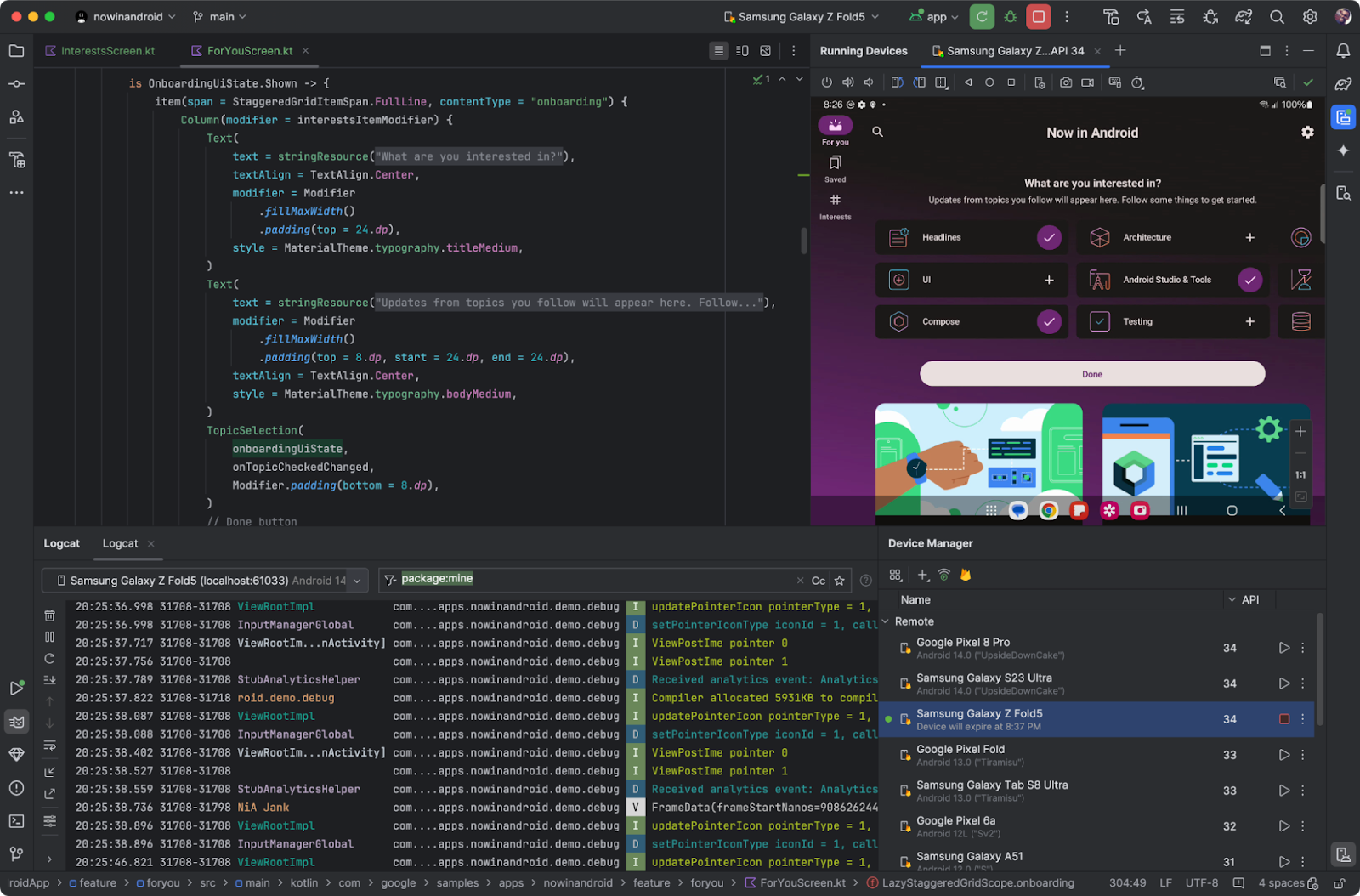

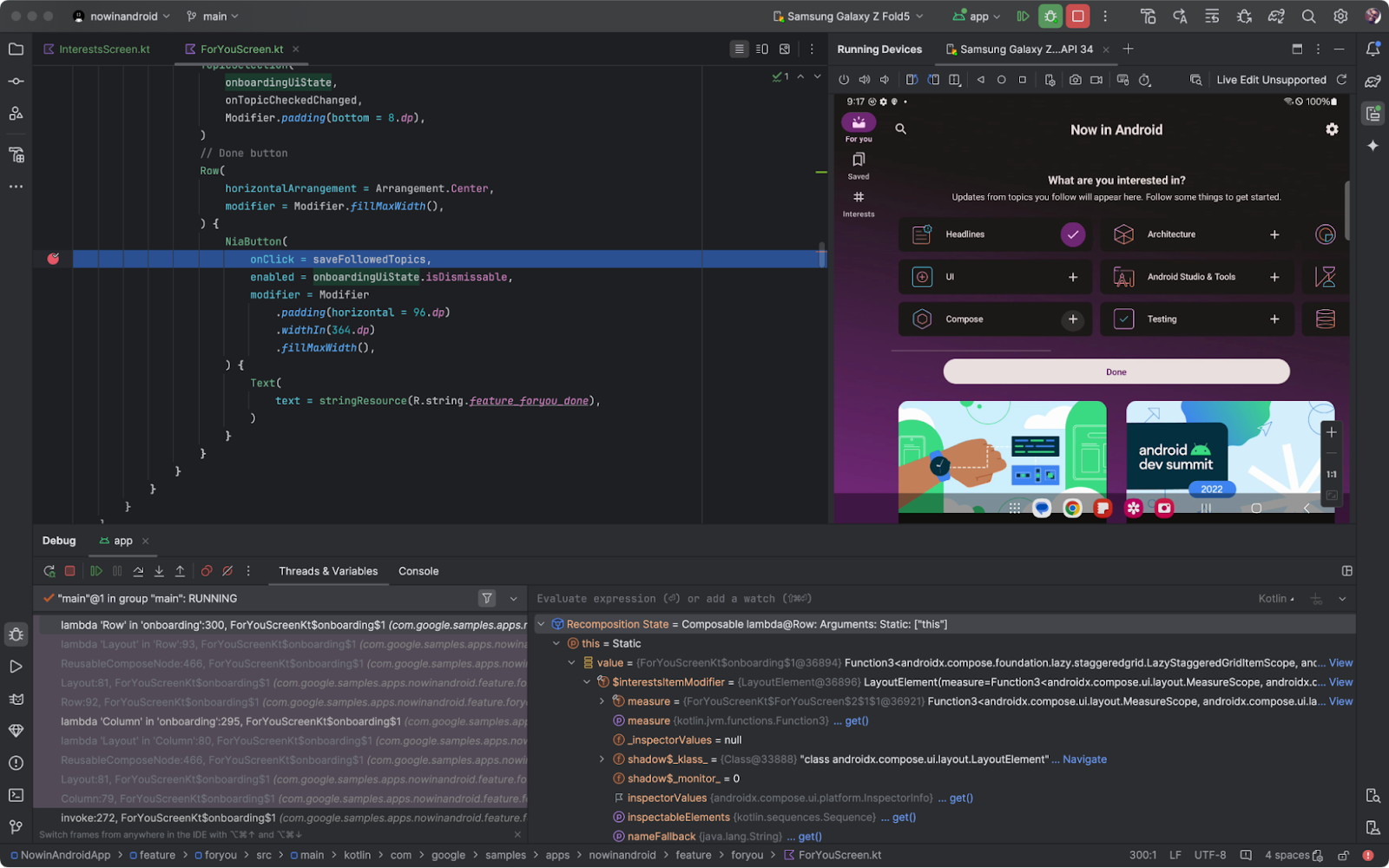

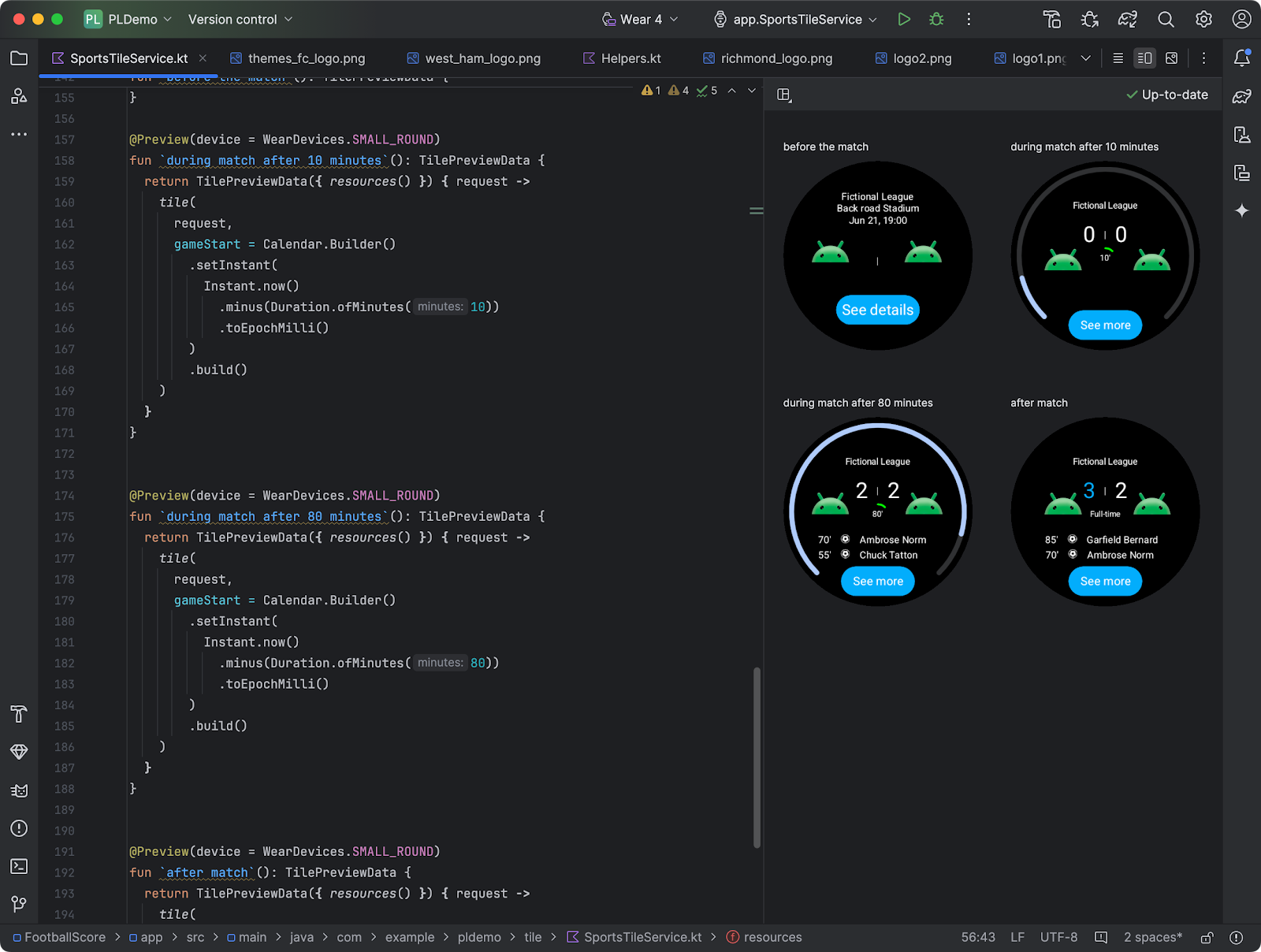

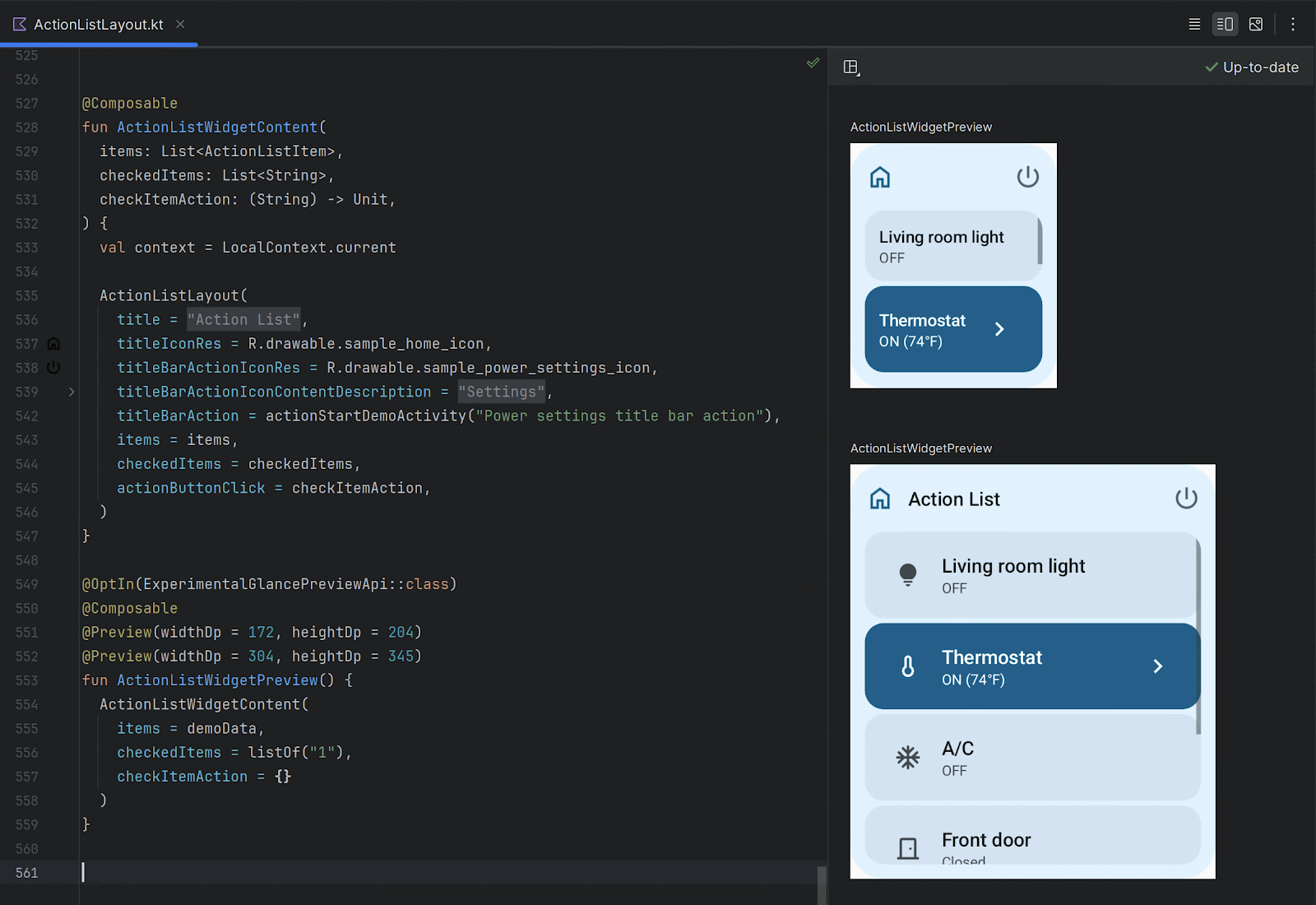

Jetpack Compose

The user interface of Androidify is built using Jetpack Compose, the modern UI toolkit that simplifies and accelerates UI development on Android.

Delightful details with the UI

The app uses Material 3 Expressive, the latest alpha release that makes your apps more premium, desirable, and engaging. It provides delightful bits of UI out of the box, such as new shapes and components, and MotionScheme variables to use wherever a motion spec is needed.

MaterialShapes are used in various locations. These are a preset list of shapes that allow for easy morphing between each other—for example, the cute cookie shape for the camera capture button:

Beyond using the standard Material components, Androidify also features custom composables and delightful transitions tailored to the specific needs of the app:

- There are plenty of shared element transitions across the app—for example, a morphing shape shared element transition is performed between the “take a photo” button and the camera surface.

- Custom enter transitions for the ResultsScreen with the usage of marquee modifiers.

- Fun color splash animation as a transition between screens.

- Animating gradient buttons for the AI-powered actions.

To learn more about the unique details of the UI, read Androidify: Building delightful UIs with Compose

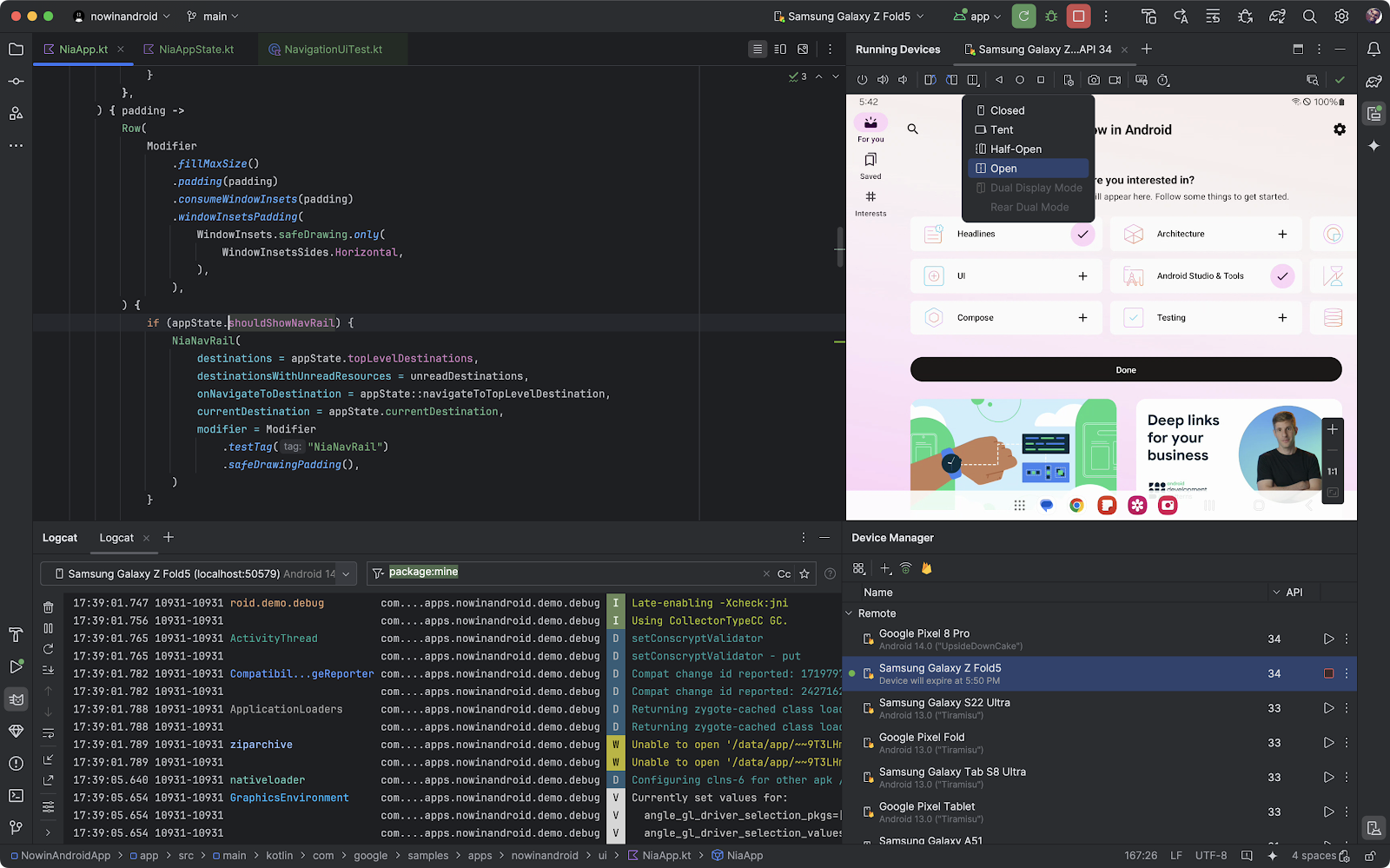

Adapting to different devices

Androidify is designed to look great and function seamlessly across candy bar phones, foldables, and tablets. The general goal of developing adaptive apps is to avoid reimplementing the same app multiple times on each form factor by extracting out reusable composables, and leveraging APIs like WindowSizeClass to determine what kind of layout to display.

For Androidify, we only needed to leverage the width window size class. Combining this with different layout mechanisms, we were able to reuse or extend the composables to cater to the multitude of different device sizes and capabilities.

- Responsive layouts: The CreationScreen demonstrates adaptive design. It uses helper functions like isAtLeastMedium() to detect window size categories and adjust its layout accordingly. On larger windows, the image/prompt area and color picker might sit side-by-side in a Row, while on smaller windows, the color picker is accessed via a ModalBottomSheet. This pattern, called “supporting pane”, highlights the supporting dependencies between the main content and the color picker.

- Foldable support: The app actively checks for foldable device features. The camera screen uses WindowInfoTracker to get FoldingFeature information and adapts the layout accordingly, optimizing it for tabletop posture.

- Rear display: Support for devices with multiple displays is included via the RearCameraUseCase, allowing for the device camera preview to be shown on the external screen when the device is unfolded (so the main content is usually displayed on the internal screen).
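To make the tabletop check concrete, here is a minimal sketch. Tabletop posture is commonly defined as a half-opened fold whose hinge runs horizontally. The `Fold`, `FoldState`, and `FoldOrientation` types below are hypothetical stand-ins that mirror the state and orientation properties of Jetpack WindowManager's `FoldingFeature`, so the example runs without Android dependencies.

```kotlin
// Hypothetical mirrors of the FoldingFeature state and orientation values.
enum class FoldState { FLAT, HALF_OPENED }
enum class FoldOrientation { HORIZONTAL, VERTICAL }

/** Simplified stand-in for a folding feature; null means the device reports no fold. */
data class Fold(val state: FoldState, val orientation: FoldOrientation)

/** Tabletop posture: the device is half opened and the hinge runs horizontally. */
fun isTabletop(fold: Fold?): Boolean =
    fold != null &&
        fold.state == FoldState.HALF_OPENED &&
        fold.orientation == FoldOrientation.HORIZONTAL
```

A camera screen could use a check like this to place the preview on the top half of the display and the controls on the bottom half.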

Using window size classes, coupled with creating a custom @LargeScreensPreview annotation, helps achieve unique and useful UIs across the spectrum of device sizes and window sizes.
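The width-based decision described in this section can be sketched in plain Kotlin. The breakpoints follow the standard width window size classes (compact below 600dp, medium from 600dp, expanded from 840dp). The `WidthClass` enum, this version of `isAtLeastMedium()`, and `ColorPickerPlacement` are hypothetical re-creations for illustration and are not copied from the app.

```kotlin
/** Width window size classes, ordered from smallest to largest. */
enum class WidthClass { COMPACT, MEDIUM, EXPANDED }

/** Maps a window width in dp to a width class using the standard breakpoints. */
fun widthClassFor(widthDp: Int): WidthClass = when {
    widthDp < 600 -> WidthClass.COMPACT
    widthDp < 840 -> WidthClass.MEDIUM
    else -> WidthClass.EXPANDED
}

/** Enum entries compare by declaration order, so MEDIUM and EXPANDED both qualify. */
fun WidthClass.isAtLeastMedium(): Boolean = this >= WidthClass.MEDIUM

/** Where the color picker goes, assuming the two layouts described in this section. */
enum class ColorPickerPlacement { SIDE_BY_SIDE, BOTTOM_SHEET }

fun colorPickerPlacement(widthDp: Int): ColorPickerPlacement =
    if (widthClassFor(widthDp).isAtLeastMedium()) {
        ColorPickerPlacement.SIDE_BY_SIDE
    } else {
        ColorPickerPlacement.BOTTOM_SHEET
    }
```

Because the decision depends only on the window width, the same composables can be reused on a phone, an unfolded foldable, or a resized window on a tablet.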

CameraX and Media3 Compose

To allow users to base their bots on photos, Androidify integrates CameraX, the Jetpack library that makes camera app development easier.

The app uses a custom CameraLayout composable that supports the layout of the typical composables that a camera preview screen would include, for example zoom buttons, a capture button, and a flip camera button. This layout adapts to different device sizes and more advanced use cases, like the tabletop mode and rear-camera display. For the actual rendering of the camera preview, it uses the new CameraXViewfinder that is part of the camerax-compose artifact.

The app also integrates with Media3 APIs to load an instructional video for showing how to get the best bot from a prompt or image. Using the new media3-ui-compose artifact, we can easily add a VideoPlayer into the app:

    @Composable
    private fun VideoPlayer(modifier: Modifier = Modifier) {
        val context = LocalContext.current
        var player by remember { mutableStateOf<Player?>(null) }
        LifecycleStartEffect(Unit) {
            player = ExoPlayer.Builder(context).build().apply {
                setMediaItem(MediaItem.fromUri(Constants.PROMO_VIDEO))
                repeatMode = Player.REPEAT_MODE_ONE
                prepare()
            }
            onStopOrDispose {
                player?.release()
                player = null
            }
        }
        Box(
            modifier
                .background(MaterialTheme.colorScheme.surfaceContainerLowest),
        ) {
            player?.let { currentPlayer ->
                PlayerSurface(currentPlayer, surfaceType = SURFACE_TYPE_TEXTURE_VIEW)
            }
        }
    }

Using the new onLayoutRectChanged modifier, we also listen for whether the composable is completely visible or not, and play or pause the video based on this information:

    var videoFullyOnScreen by remember { mutableStateOf(false) }

    LaunchedEffect(videoFullyOnScreen) {
        if (videoFullyOnScreen) currentPlayer.play() else currentPlayer.pause()
    }

    // We add this onto the player composable to determine if the video composable is visible,
    // and mutate the videoFullyOnScreen variable, that then toggles the player state.
    Modifier.onVisibilityChanged(
        containerWidth = LocalView.current.width,
        containerHeight = LocalView.current.height,
    ) { fullyVisible -> videoFullyOnScreen = fullyVisible }

    // A simple version of visibility changed detection
    fun Modifier.onVisibilityChanged(
        containerWidth: Int,
        containerHeight: Int,
        onChanged: (visible: Boolean) -> Unit,
    ) = this then Modifier.onLayoutRectChanged(100, 0) { layoutBounds ->
        onChanged(
            layoutBounds.boundsInRoot.top > 0 &&
                layoutBounds.boundsInRoot.bottom < containerHeight &&
                layoutBounds.boundsInRoot.left > 0 &&
                layoutBounds.boundsInRoot.right < containerWidth,
        )
    }
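To see how the bounds comparison in the snippet above behaves, the sketch below repeats the same four checks on a plain data class. `Bounds` and `isFullyVisible` are hypothetical names used only to make the logic runnable outside Compose.

```kotlin
/** Hypothetical stand-in for the bounds reported by the layout callback, in root pixels. */
data class Bounds(val left: Int, val top: Int, val right: Int, val bottom: Int)

/** Same comparison as the modifier above: strictly inside the container on all four sides. */
fun isFullyVisible(bounds: Bounds, containerWidth: Int, containerHeight: Int): Boolean =
    bounds.top > 0 &&
        bounds.bottom < containerHeight &&
        bounds.left > 0 &&
        bounds.right < containerWidth
```

For a 1080 x 2400 container, `Bounds(40, 200, 1040, 800)` counts as fully visible, while a video scrolled partly off the top, such as `Bounds(40, -100, 1040, 500)`, does not. Because the comparisons are strict, a composable that sits flush against a container edge is also treated as not fully visible.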

Additionally, using rememberPlayPauseButtonState, we add a layer on top of the player to offer a play/pause button on the video itself:

    val playPauseButtonState = rememberPlayPauseButtonState(currentPlayer)
    OutlinedIconButton(
        onClick = playPauseButtonState::onClick,
        enabled = playPauseButtonState.isEnabled,
    ) {
        val icon =
            if (playPauseButtonState.showPlay) R.drawable.play else R.drawable.pause
        val contentDescription =
            if (playPauseButtonState.showPlay) R.string.play else R.string.pause
        Icon(
            painterResource(icon),
            stringResource(contentDescription),
        )
    }

Check out the code for more details on how CameraX and Media3 were used in Androidify.

Navigation 3

Screen transitions are handled using the new Jetpack Navigation 3 library androidx.navigation3. The MainNavigation composable defines the different destinations (Home, Camera, Creation, About) and displays the content associated with each destination using NavDisplay. You get full control over your back stack, and navigating to and from destinations is as simple as adding and removing items from a list.

    @Composable
    fun MainNavigation() {
        val backStack = rememberMutableStateListOf<NavigationRoute>(Home)
        NavDisplay(
            backStack = backStack,
            onBack = { backStack.removeLastOrNull() },
            entryProvider = entryProvider {
                entry<Home> { entry ->
                    HomeScreen(
                        onAboutClicked = {
                            backStack.add(About)
                        },
                    )
                }
                entry<Camera> {
                    CameraPreviewScreen(
                        onImageCaptured = { uri ->
                            backStack.add(Create(uri.toString()))
                        },
                    )
                }
                // etc
            },
        )
    }
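The idea that the back stack is just a list can be shown without any UI code. The sketch below is a plain Kotlin model under stated assumptions: the `Route` hierarchy and the `BackStack` wrapper are hypothetical, and the choice to always keep the start destination on the stack is a design decision made for this example rather than something Navigation 3 enforces.

```kotlin
/** Hypothetical route types, loosely modeled on the destinations named above. */
sealed interface Route
object Home : Route
object Camera : Route
object About : Route
data class Create(val imageUri: String) : Route

/** A back stack modeled as a plain list: navigating adds an entry, going back removes one. */
class BackStack(start: Route) {
    private val entries = mutableListOf(start)

    /** The destination currently on top of the stack. */
    val current: Route get() = entries.last()

    fun navigateTo(route: Route) {
        entries.add(route)
    }

    /** Pops the top entry; returns false when only the start destination is left. */
    fun goBack(): Boolean {
        if (entries.size <= 1) return false
        entries.removeAt(entries.lastIndex)
        return true
    }
}
```

Starting from `BackStack(Home)`, calling `navigateTo(Camera)` and then `navigateTo(Create("content://photo/1"))` puts the creation screen on top, and two `goBack()` calls return to `Home`.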

Notably, Navigation 3 exposes a new composition local, LocalNavAnimatedContentScope, to easily integrate your shared element transitions without needing to keep track of the scope yourself. By default, Navigation 3 also integrates with predictive back, providing delightful back experiences when navigating between screens, as seen in this prior shared element transition:

Learn more about Jetpack Navigation 3, currently in alpha.

Learn more

By combining the declarative power of Jetpack Compose, the camera capabilities of CameraX, the intelligent features of Gemini, and thoughtful adaptive design, Androidify is a personalized avatar creation experience that feels right at home on any Android device. You can find the full code sample at github.com/android/androidify where you can see the app in action and be inspired to build your own AI-powered app experiences.

Explore this announcement and all Google I/O 2025 updates on io.google starting May 22.

Posted by Rebecca Franks – Developer Relations Engineer


Posted by Thomas Ezan – Developer Relations Engineer, Rebecca Franks – Developer Relations Engineer, and Avneet Singh – Product Manager


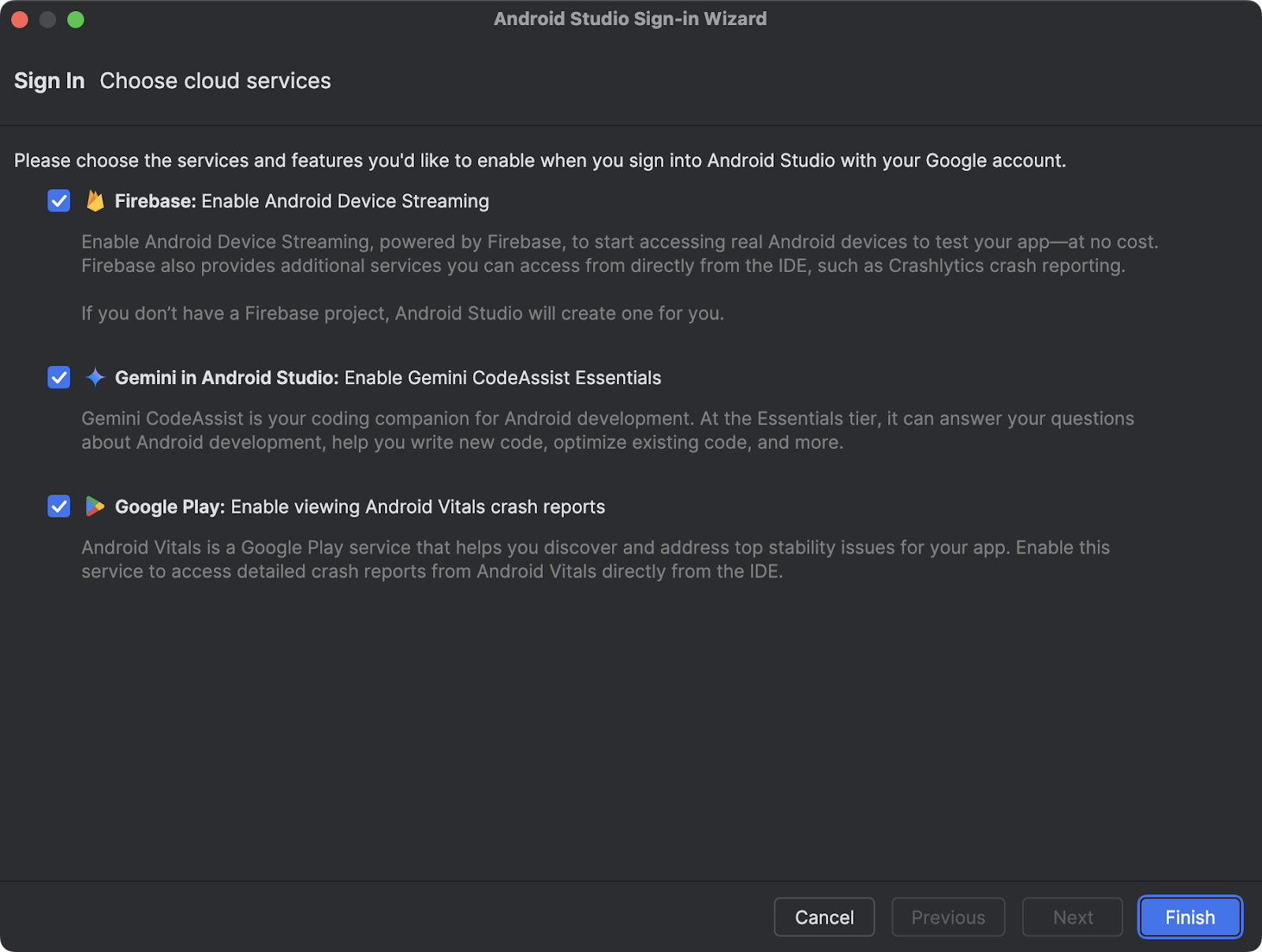

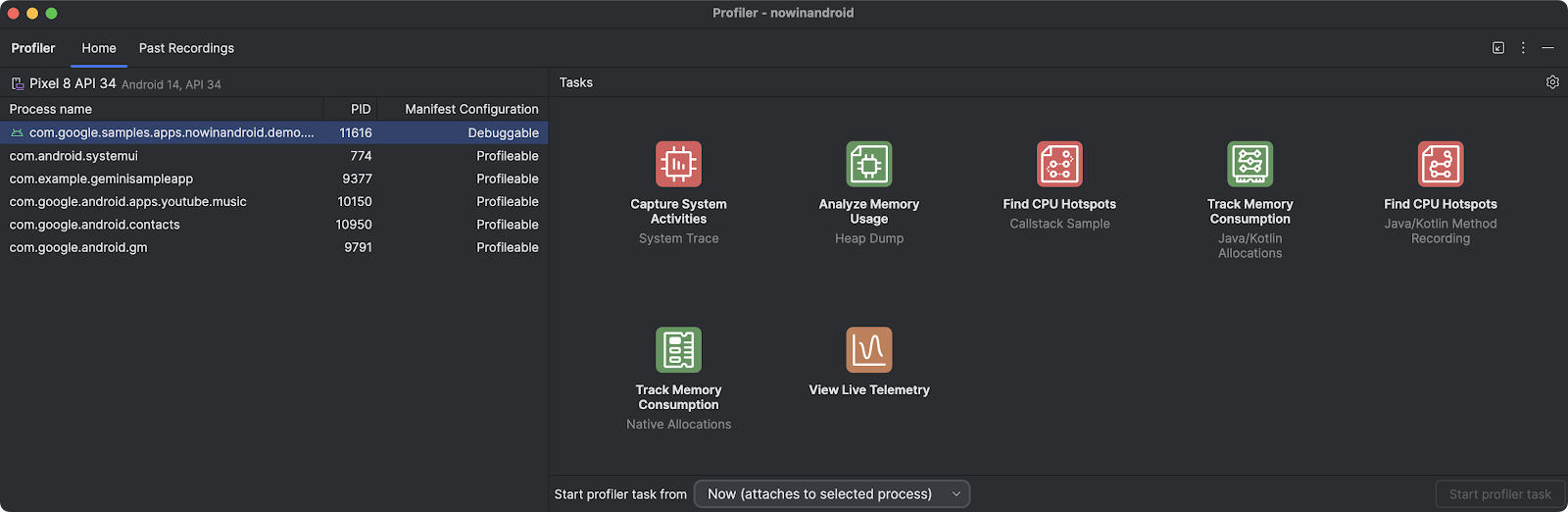

Posted by Adarsh Fernando, Senior Product Manager, Android Developer Tools


Posted by Kaushik Sathupadi, Prakhar Srivastav, and Kristin Bi – Software Engineers; Alex Geboff – Technical Writer
