A guest post by Rouella Mendonca, AI Product Lead and Matt Brown, Machine Learning Engineer at Audere

Please note that the information, uses, and applications expressed in the below post are solely those of our guest authors from Audere.

About HealthPulse AI and its application in the real world

Preventable and treatable diseases like HIV, COVID-19, and malaria infect ~12 million people per year globally, with a disproportionate number of cases impacting already underserved and under-resourced communities¹. Communicable and non-communicable diseases impede human development through their negative impact on education, income, life expectancy, and other health indicators². Lack of access to timely, accurate, and affordable diagnostics and care is a key contributor to high mortality rates.

Due to their low cost and relative ease of use, ~1 billion rapid diagnostic tests (RDTs) are used globally per year, and that number is growing. However, there are challenges with RDT use.

- Where RDT data is reported, results are hard to trust due to inflated case counts, lack of reported expected seasonal fluctuations, and non-adherence to treatment regimens.

- They are used in decentralized care settings by those with limited or no training, increasing the risk of misadministration and misinterpretation of test results.

HealthPulse AI, developed by the digital health nonprofit Audere, leverages MediaPipe to address these issues by providing digital building blocks to increase trust in the world’s most widely used RDTs.

HealthPulse AI is a set of building blocks that can turn any digital solution into a Rapid Diagnostic Test (RDT) reader. These building blocks address prominent global health problems by improving rapid diagnostic test accuracy, reducing misadministration of tests, and expanding the availability of testing for conditions including malaria, COVID, and HIV in decentralized care settings. With just a low-end smartphone, HealthPulse AI improves the accuracy of rapid diagnostic test results while automatically digitizing data for surveillance, program reporting, and test validation. It provides AI-facilitated digital capture and result interpretation; quality, accessible digital use instructions for provider and self-tests; and standards-based real-time reporting of test results.

These capabilities are available to local implementers, global NGOs, governments, and private sector pharmacies via a web service for use with chatbots, apps or server implementations; a mobile SDK for offline use in any mobile application; or directly through native Android and iOS apps.

It enables innovative use cases such as quality-assured virtual care models, which enable stigma-free, convenient HIV home testing with linkage to education, prevention, and treatment options.

HealthPulse AI Use Cases

HealthPulse AI can substantially democratize access to timely, quality care in the private sector (e.g. pharmacies), in the public sector (e.g. clinics), in community programs (e.g. community health workers), and in self-testing use cases. Using only an RDT image captured on a low-end smartphone, HealthPulse AI can power virtual care models by providing valuable decision support and quality control to clinicians, especially in cases where lines may be faint and hard to detect with the human eye. In the private sector, it can automate and scale incentive programs so auditors only need to review automated alerts based on test anomalies, replacing procedures that presently require human review of each incoming image and transaction. In community care programs, HealthPulse AI can be used as a training tool for health workers learning how to correctly administer and interpret tests. In the public sector, it can strengthen surveillance systems with real-time disease tracking and verification of results across all channels where care is delivered, enabling faster response and pandemic preparedness³.

HealthPulse AI algorithms

HealthPulse AI provides a library of AI algorithms for the top RDTs for malaria, HIV, and COVID. Each algorithm is a collection of Computer Vision (CV) models that are trained using machine learning (ML) algorithms. From an image of an RDT, our algorithms can:

- Flag image quality issues common on low-end phones (blurriness, over/underexposure)

- Detect the RDT type

- Interpret the test result

Image Quality Assurance

When capturing an image of an RDT, it is important to ensure that the captured image is interpretable by both humans and AI to power the use cases described above. Image quality issues are common, particularly when images are captured with low-end phones, in settings with poor lighting, or by users with shaky hands. As such, HealthPulse AI provides image quality assurance (IQA) to identify adversarial image conditions. IQA returns the concerns it detects, which can be used to ask users to retake the photo in real time. Without IQA, clients in telehealth use cases, for example, would have to retest when an image turns out to be uninterpretable and the RDT read window has already expired. With just-in-time quality concern flagging, additional cost and treatment delays can be avoided. Examples of some adversarial images that IQA would flag are shown in Figure 1 below.

Figure 1: Images of malaria, HIV and COVID tests that are dark, blurry, too bright, and too small.
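To make the idea of quality flagging concrete, here is a minimal sketch of checks for the four conditions named in Figure 1. It is not Audere's implementation: the function name `quality_concerns`, the concern labels, and every threshold are assumptions made for illustration, and the blur check uses the variance of a Laplacian filter, a common general-purpose sharpness heuristic. Images are plain lists of grayscale rows so the example runs without any imaging library.

```python
from statistics import mean, pvariance

# All thresholds are hypothetical values chosen for illustration only.
MIN_SIDE_PX = 8          # smallest acceptable image side, in pixels
DARK_MEAN = 50           # mean intensity below this counts as "too dark"
BRIGHT_MEAN = 205        # mean intensity above this counts as "too bright"
BLUR_VARIANCE = 100.0    # Laplacian variance below this counts as "blurry"


def laplacian_variance(gray):
    """Variance of a 4-neighbour Laplacian over the interior pixels."""
    height, width = len(gray), len(gray[0])
    responses = [
        gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1]
        - 4 * gray[y][x]
        for y in range(1, height - 1)
        for x in range(1, width - 1)
    ]
    return pvariance(responses)


def quality_concerns(gray):
    """Return concern labels for a grayscale image (rows of 0-255 ints)."""
    height, width = len(gray), len(gray[0])
    if min(height, width) < MIN_SIDE_PX:
        return ["too_small"]  # too few pixels to assess anything else
    concerns = []
    brightness = mean(value for row in gray for value in row)
    if brightness < DARK_MEAN:
        concerns.append("too_dark")
    elif brightness > BRIGHT_MEAN:
        concerns.append("too_bright")
    if laplacian_variance(gray) < BLUR_VARIANCE:
        concerns.append("blurry")
    return concerns


# Synthetic inputs: a sharp checkerboard and featureless frames.
sharp = [[255 if (x + y) % 2 else 0 for x in range(16)] for y in range(16)]
flat_dark = [[10] * 16 for _ in range(16)]
flat_bright = [[250] * 16 for _ in range(16)]
tiny = [[128] * 4 for _ in range(4)]

for name, image in [("sharp", sharp), ("flat_dark", flat_dark),
                    ("flat_bright", flat_bright), ("tiny", tiny)]:
    print(name, quality_concerns(image))
```

Returning a list of labels rather than a single pass/fail flag lets a host application tell the user what to fix before retaking the photo.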

Classification

With just an image captured on a 5MP camera from the low-end smartphones commonly used in Africa, SE Asia, and Latin America, where a disproportionate disease burden exists, HealthPulse AI can identify a specific test (brand, disease) and its individual test lines, and provide an interpretation of the test. Our current library of AI algorithms supports many of the most commonly used WHO-prequalified RDTs for malaria, HIV, and COVID-19. Our AI is condition agnostic and can be easily extended to support any RDT for a range of communicable and non-communicable diseases (diabetes, influenza, tuberculosis, pregnancy, STIs, and more).

HealthPulse AI is able to detect the type of RDT in the image (for supported RDTs that the model was trained for), detect the presence of lines, and return a classification for the particular test (e.g. positive, negative, invalid, uninterpretable). See Figure 2.

Figure 2: Interpretation of a supported lateral flow rapid test.
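The final step, turning detected lines into one of the four classifications above, can be sketched as follows. This is an illustrative assumption, not the HealthPulse AI logic: it models a hypothetical single-analyte cassette with one control line and one test line, uses made-up score cut-offs, and applies the usual lateral flow convention that a test without a control line is invalid.

```python
from dataclasses import dataclass
from enum import Enum


class Result(Enum):
    POSITIVE = "positive"
    NEGATIVE = "negative"
    INVALID = "invalid"
    UNINTERPRETABLE = "uninterpretable"


@dataclass(frozen=True)
class LineScores:
    """Hypothetical per-line model scores in [0, 1]."""
    control: float
    test: float


# Hypothetical cut-offs: scores between the two are treated as ambiguous.
ABSENT_BELOW = 0.2
PRESENT_ABOVE = 0.8


def line_state(score):
    """Map a score to True (present), False (absent), or None (ambiguous)."""
    if score >= PRESENT_ABOVE:
        return True
    if score <= ABSENT_BELOW:
        return False
    return None


def interpret(scores):
    """Combine control and test line states into a single result."""
    control = line_state(scores.control)
    test = line_state(scores.test)
    if control is False:
        return Result.INVALID            # no control line: the test did not run
    if control is None or test is None:
        return Result.UNINTERPRETABLE    # the model is unsure about a line
    return Result.POSITIVE if test else Result.NEGATIVE


print(interpret(LineScores(control=0.95, test=0.90)))
print(interpret(LineScores(control=0.05, test=0.90)))
```

Keeping an explicit ambiguous band, instead of a single threshold, is one way to route faint or borderline lines to a human reviewer rather than forcing a positive or negative call.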

How and why we use MediaPipe

Deploying HealthPulse AI in decentralized care settings with unstable infrastructure comes with a number of challenges. The first challenge is a lack of reliable internet connectivity, often requiring our CV and ML algorithms to run locally. Secondly, phones available in these settings are often very old, lack the latest hardware (< 1 GB of RAM and comparable CPU specs), and run a mix of mobile platforms and versions (iOS, Android, Huawei; including very old versions that may no longer receive OS updates). This necessitates a platform-agnostic, highly efficient inference engine. MediaPipe’s out-of-the-box multi-platform support for image-focused machine learning processes makes it efficient to meet these needs.

As a non-profit operating in cost-recovery mode, it was important that solutions:

- have broad reach globally,

- are low-lift to maintain, and

- meet the needs of our target population for offline, low resource, performant use.

Without needing to write a lot of glue code, HealthPulse AI can support Android, iOS, and cloud devices using the same library built on MediaPipe.

Our pipeline

MediaPipe’s graph definitions allow us to build and iterate our inference pipeline on the fly. After a user submits a picture, the pipeline determines the RDT type, and attempts to classify the test result by passing the detected result-window crop of the RDT image to our classifier.
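The flow described above (detect the RDT type, crop the result window, classify the crop) can be sketched in plain Python. This is not MediaPipe code and not Audere's graph: `run_pipeline`, `PipelineOutput`, and the stub stages are hypothetical names, and the stages are passed in as ordinary functions to mirror the idea of swappable graph nodes.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

Image = List[List[int]]            # grayscale rows; a stand-in for real image data
Box = Tuple[int, int, int, int]    # result window as (top, left, bottom, right)


@dataclass
class PipelineOutput:
    rdt_type: Optional[str]
    result: Optional[str]


def crop(image: Image, box: Box) -> Image:
    """Cut the result window out of the full image."""
    top, left, bottom, right = box
    return [row[left:right] for row in image[top:bottom]]


def run_pipeline(
    image: Image,
    detect: Callable[[Image], Optional[Tuple[str, Box]]],
    classify: Callable[[str, Image], str],
) -> PipelineOutput:
    """Detect the RDT, then classify only the cropped result window."""
    detection = detect(image)
    if detection is None:              # no supported RDT found in the image
        return PipelineOutput(rdt_type=None, result=None)
    rdt_type, window = detection
    return PipelineOutput(rdt_type, classify(rdt_type, crop(image, window)))


# Stub stages standing in for trained models (hypothetical behaviour).
def stub_detect(image: Image) -> Optional[Tuple[str, Box]]:
    return ("example_malaria_rdt", (1, 1, 3, 4)) if image else None


def stub_classify(rdt_type: str, window: Image) -> str:
    has_dark_pixel = any(value < 128 for row in window for value in row)
    return "positive" if has_dark_pixel else "negative"


image = [[255] * 5 for _ in range(4)]
image[2][2] = 40                       # a dark "line" pixel inside the window
print(run_pipeline(image, stub_detect, stub_classify))
```

Because each stage is injected, supporting a new RDT in this sketch means supplying a different detector or classifier without touching the orchestration.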

For good human and AI interpretability, it is important to have good quality images. However, input images to the pipeline have a high level of variability that we have little to no control over. Variability factors include (but are not limited to) varying image quality due to a range of smartphone camera features, megapixel counts, and physical defects; decentralized testing settings with differing and non-ideal lighting conditions; random orientations of the RDT cassettes; blurry and unfocused images; partial RDT images; and many other adversarial conditions that add challenges for the AI. As such, an important part of our solution is image quality assurance. Each image passes through a number of calculators geared towards highlighting quality concerns that may prevent the detector or classifier from doing its job accurately. The pipeline elevates these concerns to the host application, so an end user can be asked in real time to retake a photo when necessary. Since RDT results have a limited validity time (i.e. a time window specified by the RDT manufacturer for how long after processing a result can be accurately read), IQA is essential to ensure timely care and save costs. A high-level flowchart of the pipeline is shown below in Figure 3.

Figure 3: HealthPulse AI pipeline
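On the host application side, the combination of elevated quality concerns and a limited read window suggests a simple decision rule. The sketch below is an assumption about how a host app might act on that information, not a description of the HealthPulse AI SDK: `next_step`, the `NextStep` values, and the 30-minute window are hypothetical, and real read windows come from each RDT manufacturer.

```python
from datetime import datetime, timedelta
from enum import Enum


class NextStep(Enum):
    ACCEPT = "accept"          # image is usable; continue to interpretation
    RETAKE = "retake_photo"    # ask the user for another photo right away
    RETEST = "retest"          # read window has passed; a new test is needed


def next_step(concerns, test_started_at, captured_at, read_window):
    """Decide what to do with a freshly captured image.

    concerns:     quality concern labels elevated by the pipeline
    read_window:  timedelta after test start within which the result is valid
    """
    if captured_at - test_started_at > read_window:
        return NextStep.RETEST         # too late to read this cassette at all
    if concerns:
        return NextStep.RETAKE         # still in time, so a new photo can help
    return NextStep.ACCEPT


started = datetime(2024, 1, 1, 9, 0)
window = timedelta(minutes=30)         # hypothetical value for illustration

print(next_step(["blurry"], started, started + timedelta(minutes=20), window))
print(next_step(["blurry"], started, started + timedelta(minutes=40), window))
```

The second call shows the costly case that real-time flagging is meant to avoid: once the window has passed, a retake no longer helps and the client has to test again.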

Summary

HealthPulse AI is designed to improve the quality and richness of testing programs and data in underserved communities that are disproportionately impacted by preventable communicable and non-communicable diseases.

Towards this mission, MediaPipe plays a critical role by providing a platform that allows Audere to quickly iterate and support new rapid diagnostic tests. This is imperative as new rapid tests come to market regularly, and test availability for community and home use can change frequently. Additionally, the flexibility allows for lower overhead in maintaining the pipeline, which is crucial for cost-effective operations. This, in turn, reduces the cost of use for governments and organizations globally that provide services to people who need them most.

HealthPulse AI offerings allow organizations and governments to benefit from new innovations in the diagnostics space with minimal overhead. This is an essential component of the primary health journey - to ensure that populations in under-resourced communities have access to timely, cost-effective, and efficacious care.

About Audere

Audere is a global digital health nonprofit developing AI-based solutions to address important problems in health delivery by providing innovative, scalable, interconnected tools to advance health equity in underserved communities worldwide. We operate at the unique intersection of global health and high tech, creating advanced, accessible software that revolutionizes the detection, prevention, and treatment of diseases such as malaria, COVID-19, and HIV. Our diverse team of passionate, innovative minds combines human-centered design, smartphone technology, artificial intelligence (AI), open standards, and the best of cloud-based services to empower innovators globally to deliver healthcare in new ways in low- and middle-income settings. Audere operates primarily in Africa with projects in Nigeria, Kenya, Côte d’Ivoire, Benin, Uganda, Zambia, South Africa, and Ethiopia.

1 WHO malaria fact sheets

Circle to Search is now available on select premium Android smartphones — here are some helpful ways to use it.

Circle to Search is now available on select premium Android smartphones — here are some helpful ways to use it.

Circle to Search is now available on select premium Android smartphones — here are some helpful ways to use it.

Circle to Search is now available on select premium Android smartphones — here are some helpful ways to use it.

We’re publishing an AI Opportunity whitepaper to help ASEAN governments tap into AI’s vast potential.

We’re publishing an AI Opportunity whitepaper to help ASEAN governments tap into AI’s vast potential.

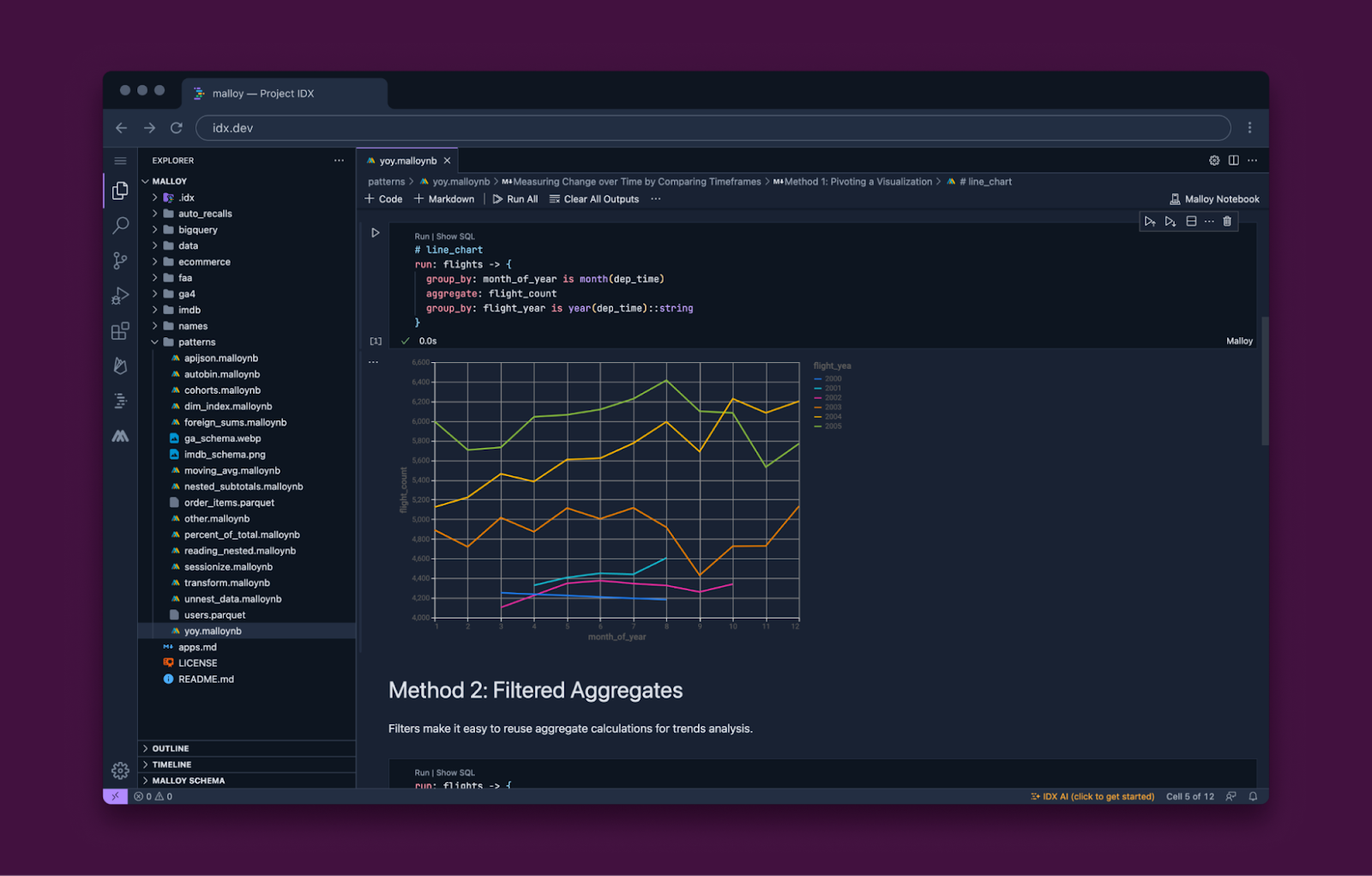

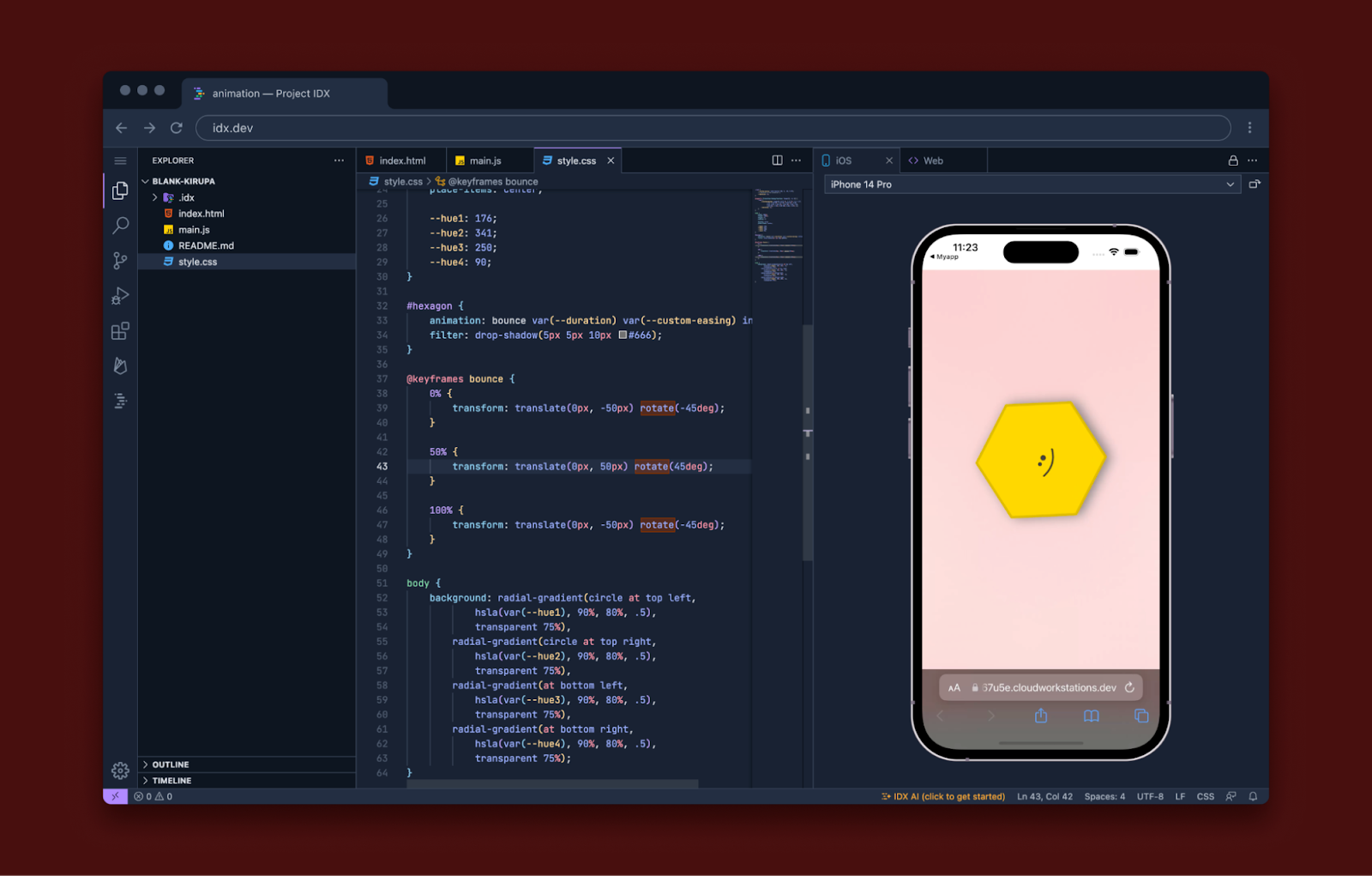

Posted by the IDX team

Posted by the IDX team

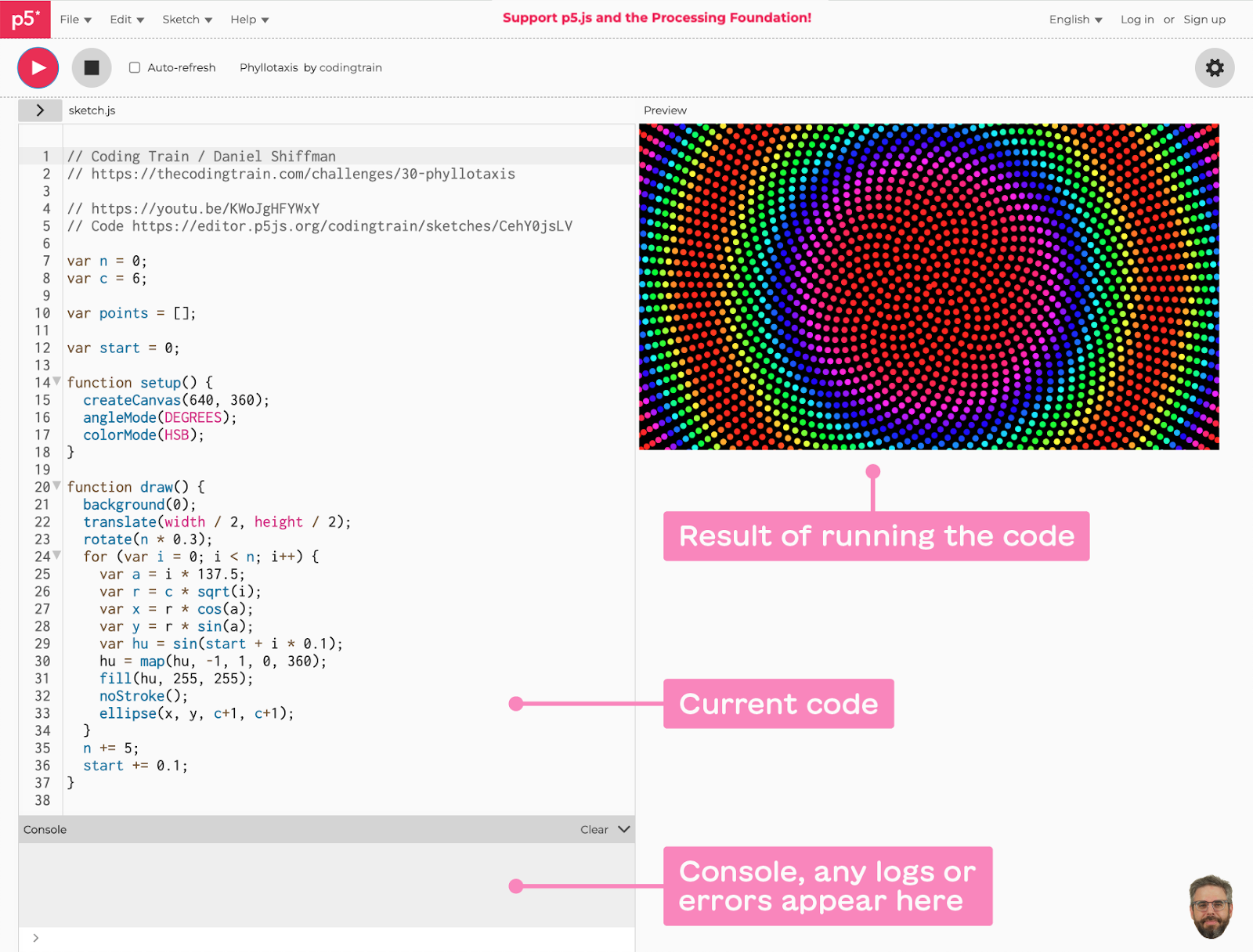

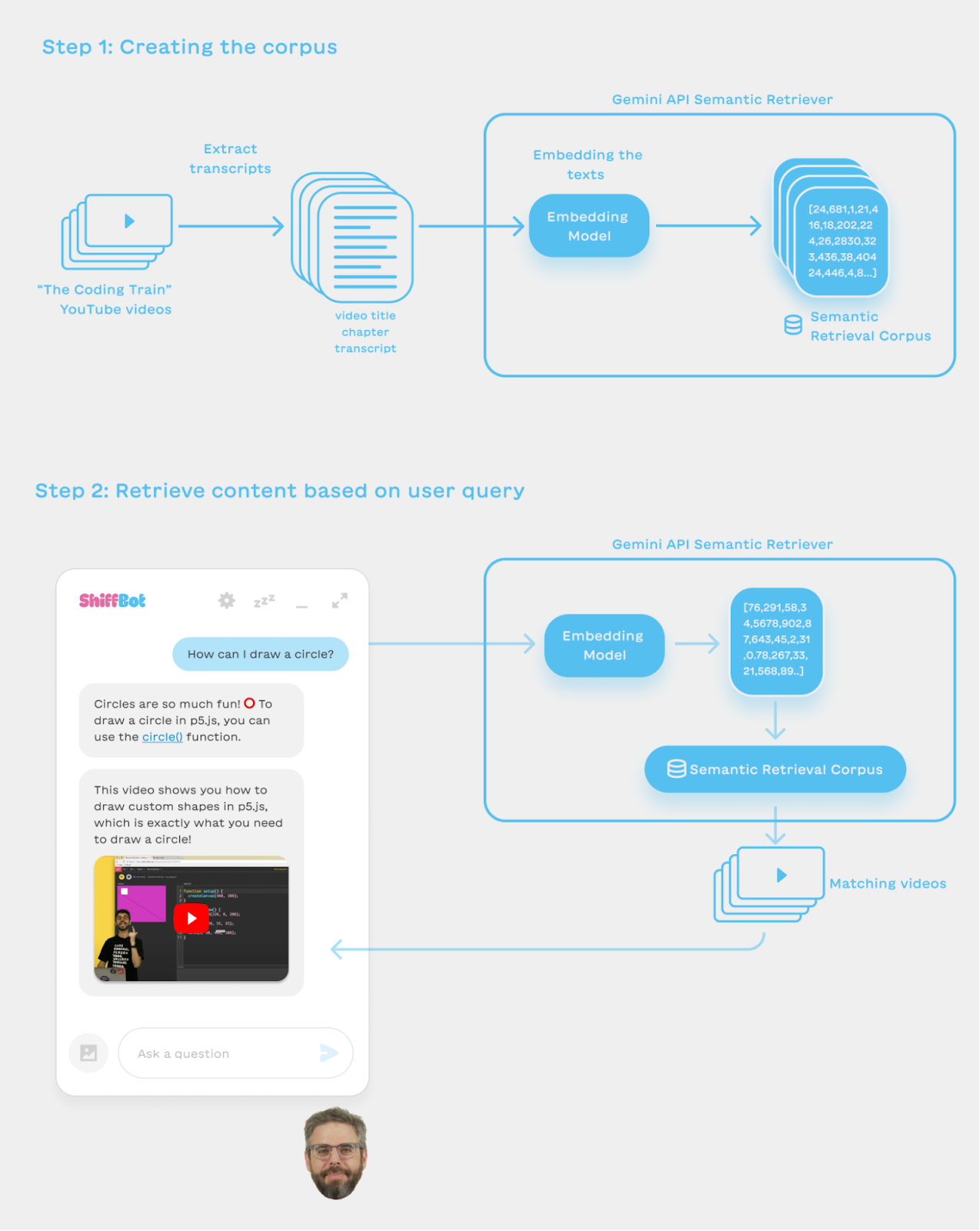

Posted by Jasmin Rubinovitz, AI Researcher

Posted by Jasmin Rubinovitz, AI Researcher

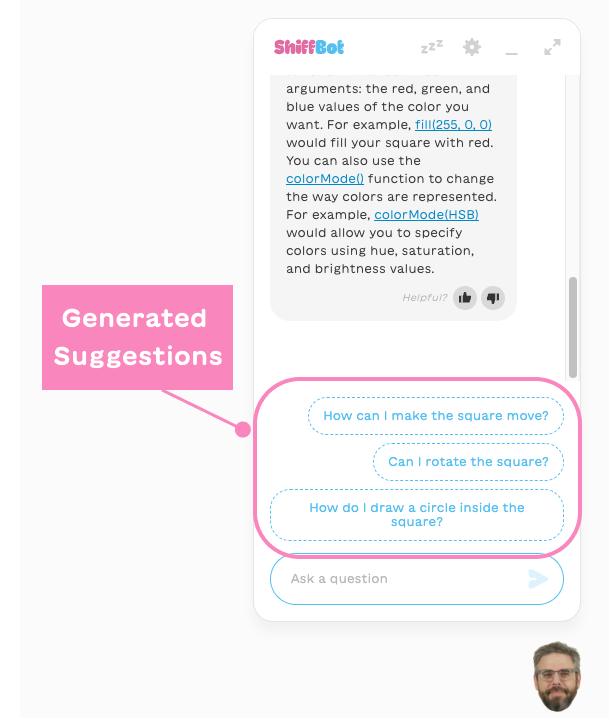

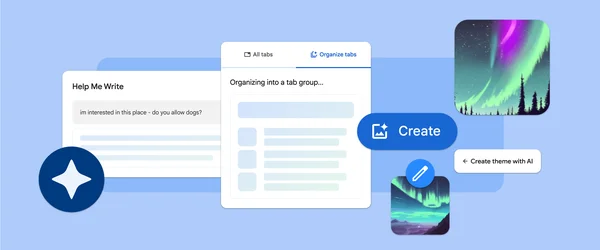

Three experimental generative AI features are coming to Chrome on Macs and Windows PCs to make browsing easier and more personalized.

Three experimental generative AI features are coming to Chrome on Macs and Windows PCs to make browsing easier and more personalized.

A guest post by Rouella Mendonca, AI Product Lead and Matt Brown, Machine Learning Engineer at

A guest post by Rouella Mendonca, AI Product Lead and Matt Brown, Machine Learning Engineer at

Learn more about Google for Startups Accelerator: AI First program for North American startups.

Learn more about Google for Startups Accelerator: AI First program for North American startups.

Check out the ways Google and Samsung are collaborating to bring the latest Google AI innovations to Samsung’s flagship smartphones.

Check out the ways Google and Samsung are collaborating to bring the latest Google AI innovations to Samsung’s flagship smartphones.

Circle to Search is a new way to search what’s on your screen without switching apps, available on select premium Android smartphones.

Circle to Search is a new way to search what’s on your screen without switching apps, available on select premium Android smartphones.