Posted by Wesley Chun (@wescpy), Developer Advocate, Google Cloud

Introduction

Google Cloud offers three distinct ways of running your code or application in a serverless way, each serving different use cases. Google App Engine, our first Cloud product, was created to give users the ability to deploy source-based web applications or mobile backends directly to the cloud without needing to think about servers or scaling. Cloud Functions came later for scenarios where you may not have an entire app, and it is great for one-off utility functions or event-driven microservices. Cloud Run is our latest fully-managed serverless product that gives developers the flexibility of containers along with the convenience of serverless.

As all are serverless compute platforms, users recognize they share some similarities along with clear differences, and often, they ask:

- How different is deploying code to App Engine, Cloud Functions, or Cloud Run?

- Is it challenging to move from one to another if I feel the other may better fit my needs?

We're going to answer these questions today by sharing a unique application with you, one that can be deployed to all three platforms without changing any application code. All of the necessary changes are done in configuration.

More motivation

Another challenge for developers can be trying to learn how to use another Cloud product, such as this request, paraphrased from a user:

- I have a Google App Engine app

- I want to call the Cloud Translation API from that app

Sounds simple enough. This user went straight to the App Engine and Translation API documentation, where they were able to get started with the App Engine Quickstart to get their app up and going, then found the Translation API setup page and started looking into the permissions needed to access the API. However, they got stuck at the Identity and Access Management (IAM) page on roles, overwhelmed by all the options and with no clear path forward. In light of this, let's add a third question to the pair outlined earlier:

- How do you access Cloud APIs from a Cloud serverless platform?

Without knowing what that user was going to build, let's just implement a barebones translator, an "MVP" (minimum viable product) version of a simple "My Google Translate" Python Flask app using the Translation API, one of Google Cloud's AI/ML "building block" APIs. These APIs are backed by pre-trained machine learning models, giving developers with little or no background in AI/ML the ability to leverage the benefits of machine learning with only API calls.

The application

The app consists of a simple web page prompting the user for a phrase to translate from English to Spanish. The translated results are presented with the original phrase, along with an empty form for a follow-up translation if desired. While the majority of this app's deployments are in Python 3, there are still many users working on upgrading from Python 2, so some Python 2 deployments are also available to help with migration planning. Taking this into account, this app can be deployed (at least) eight different ways:

- Local (or hosted) Flask server (Python 2)

- Local (or hosted) Flask server (Python 3)

- Google App Engine (Python 2)

- Google App Engine (Python 3)

- Google Cloud Functions (Python 3)

- Google Cloud Run (Python 2 via Docker)

- Google Cloud Run (Python 3 via Docker)

- Google Cloud Run (Python 3 via Cloud Buildpacks)

The following is a brief glance at the files and which configurations they're for:

Nebulous serverless sample app files

Diving straight into the application, let's look at its primary function, translate():

    @app.route('/', methods=['GET', 'POST'])
    def translate(gcf_request=None):
        # Cloud Functions passes in its own request object; otherwise use Flask's
        local_request = gcf_request if gcf_request else request

        text = translated = None
        if local_request.method == 'POST':
            # form submission: grab the text and call the Translation API
            text = local_request.form['text'].strip()
            if text:
                data = {
                    'contents': [text],
                    'parent': PARENT,
                    'target_language_code': TARGET[0],
                }
                rsp = TRANSLATE.translate_text(request=data)
                translated = rsp.translations[0].translated_text

        # render the template for both GET (empty form) and POST (with results)
        context = {
            'orig': {'text': text, 'lc': SOURCE},
            'trans': {'text': translated, 'lc': TARGET},
        }
        return render_template('index.html', **context)

Core component (translate()) of sample application

Some key app components:

- Upon an initial request (GET), an HTML template is rendered featuring a simple form with an empty text field for the text to translate.

- The form POSTs back to the app, which in this case grabs the text to translate, sends the request to the Translation API, then receives the results and displays them to the user along with an empty form for another translation.

- There is a special "ifdef" for Cloud Functions near the top to receive a request object. You don't use a web framework there as you would with App Engine or Cloud Run, so Cloud Functions provides the request object itself.
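
The request handling described above can be exercised without Flask or the Translation API. The following is only a sketch under stated assumptions: the module-level `request` object, `fake_translate_text()`, the `'projects/example'` parent, and the returned dict are hypothetical stand-ins for Flask's `request`, the real `TRANSLATE.translate_text()` call, `PARENT`, and the rendered template. The point is to show how one function can serve both the framework case and the Cloud Functions case.

```python
from types import SimpleNamespace

# Hypothetical stand-in for Flask's global `request` (a plain GET here).
request = SimpleNamespace(method='GET', form={})


def fake_translate_text(request):
    """Hypothetical stand-in for the API call: uppercases instead of translating."""
    translations = [
        SimpleNamespace(translated_text=text.upper())
        for text in request['contents']
    ]
    return SimpleNamespace(translations=translations)


def translate(gcf_request=None):
    # Use the request object handed in by Cloud Functions if there is one,
    # otherwise fall back to the framework's global request.
    local_request = gcf_request if gcf_request else request

    text = translated = None
    if local_request.method == 'POST':
        text = local_request.form['text'].strip()
        if text:
            data = {
                'contents': [text],
                'parent': 'projects/example',   # assumed placeholder value
                'target_language_code': 'es',
            }
            rsp = fake_translate_text(request=data)
            translated = rsp.translations[0].translated_text

    # The real app renders a template; this sketch just returns the values.
    return {'orig': text, 'trans': translated}
```

Calling `translate()` with no argument takes the framework path and returns empty values, while passing an object with `method='POST'` and a `form` mapping mimics what Cloud Functions would hand to the function.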

The app runs identically whether running locally or deployed to App Engine, Cloud Functions, or Cloud Run. The magic is all in the configuration. The requirements.txt file is used in all configurations, whether to install third-party packages locally, or to direct the Cloud Build system to automatically install those libraries during deployment. Beyond requirements.txt, things start to differ:

- App Engine has an app.yaml file and possibly an appengine_config.py file.

- Cloud Run has either a Dockerfile (Docker) or Procfile (Cloud Buildpacks), and possibly a service.yaml file.

- Cloud Functions, the "simplest" of the three, has no configuration outside of a package requirements file (requirements.txt, package.json, etc.).
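
The per-platform differences listed above can be summarized as a small lookup table. This is only an illustrative sketch: the `PLATFORM_FILES` table and the `candidate_platforms()` helper are hypothetical names invented here, not part of the sample app or any Google Cloud tool. It takes the filenames found in a project folder and reports which platforms that folder appears to be configured for, assuming a Python project with `requirements.txt` as the shared file.

```python
# File shared by every configuration, per the text above (Python case).
COMMON_FILES = {'requirements.txt'}

# Platform-specific config files named in the text (hypothetical table).
PLATFORM_FILES = {
    'App Engine': {
        'required': {'app.yaml'},
        'optional': {'appengine_config.py'},
    },
    'Cloud Run (Docker)': {
        'required': {'Dockerfile'},
        'optional': {'service.yaml'},
    },
    'Cloud Run (Cloud Buildpacks)': {
        'required': {'Procfile'},
        'optional': {'service.yaml'},
    },
    # Nothing needed beyond the package requirements file.
    'Cloud Functions': {
        'required': set(),
        'optional': set(),
    },
}


def candidate_platforms(filenames):
    """Return the platforms whose required config files are all present."""
    present = set(filenames)
    if not COMMON_FILES <= present:
        return []
    return [
        name for name, files in PLATFORM_FILES.items()
        if files['required'] <= present
    ]
```

Because Cloud Functions requires nothing beyond the requirements file, it qualifies whenever `requirements.txt` is present, which mirrors its description as the "simplest" of the three.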

The following is what you should expect to see after completing one translation request:

"My Google Translate" MVP app (Cloud Run edition)

Next steps

The sample app can be run locally or on your own hosting server, but now you also know how to deploy it to each of Cloud's serverless platforms and what those subtle differences are. You also have a sense of the differences between each platform as well as what it takes to switch from one to another. For example, if your organization is moving to implement containerization into your software development workflow, you can migrate your existing App Engine apps to Cloud Run using Docker or using Cloud Buildpacks if you don't want to think about containers or Dockerfiles. Lastly, you now know how to access Cloud APIs from these platforms.

The user described earlier was overwhelmed by all the IAM roles and options available because this type of detail is required to provide the most security options for accessing Cloud services, but when prototyping, the fastest on-ramp is to use the default service account that comes with each Cloud serverless platform. It helps you get that prototype working while allowing you to learn more about IAM roles and required permissions. Once you've progressed far enough to consider deploying to production, you can then follow the best practice of "least privilege" and create your own (user-managed) service accounts with the minimal permissions required for your application to function properly.

To dive in, the code and codelabs (free, self-paced, hands-on tutorials) for each deployment are available in its open source repository. An active Google Cloud billing account is required to deploy this application to each of our serverless platforms, even though you can do all of them without incurring charges. More information can be found in the "Cost" section of the repo's README. We hope this sample app teaches you more about the similarities and differences between our platforms, shows you how you can "shift" applications comfortably between them, and provides a light introduction to another Cloud API. Also check out my colleague's post featuring similar content for Node.js.

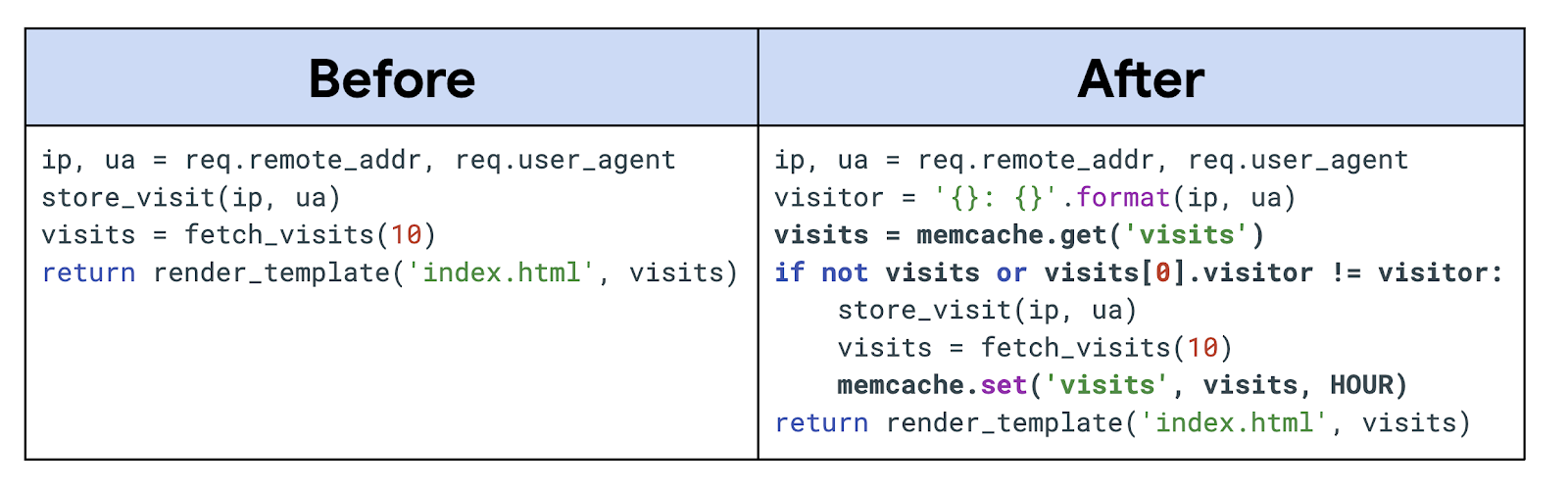

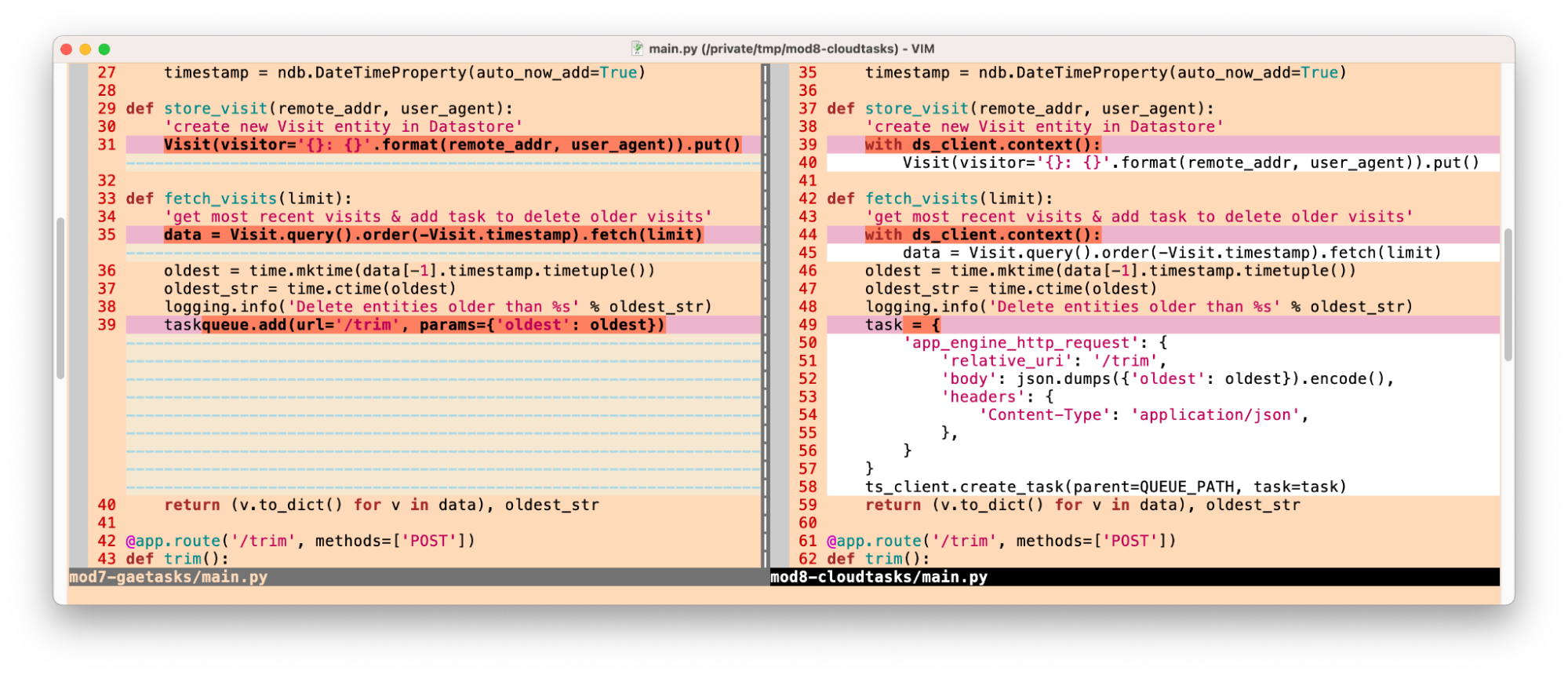

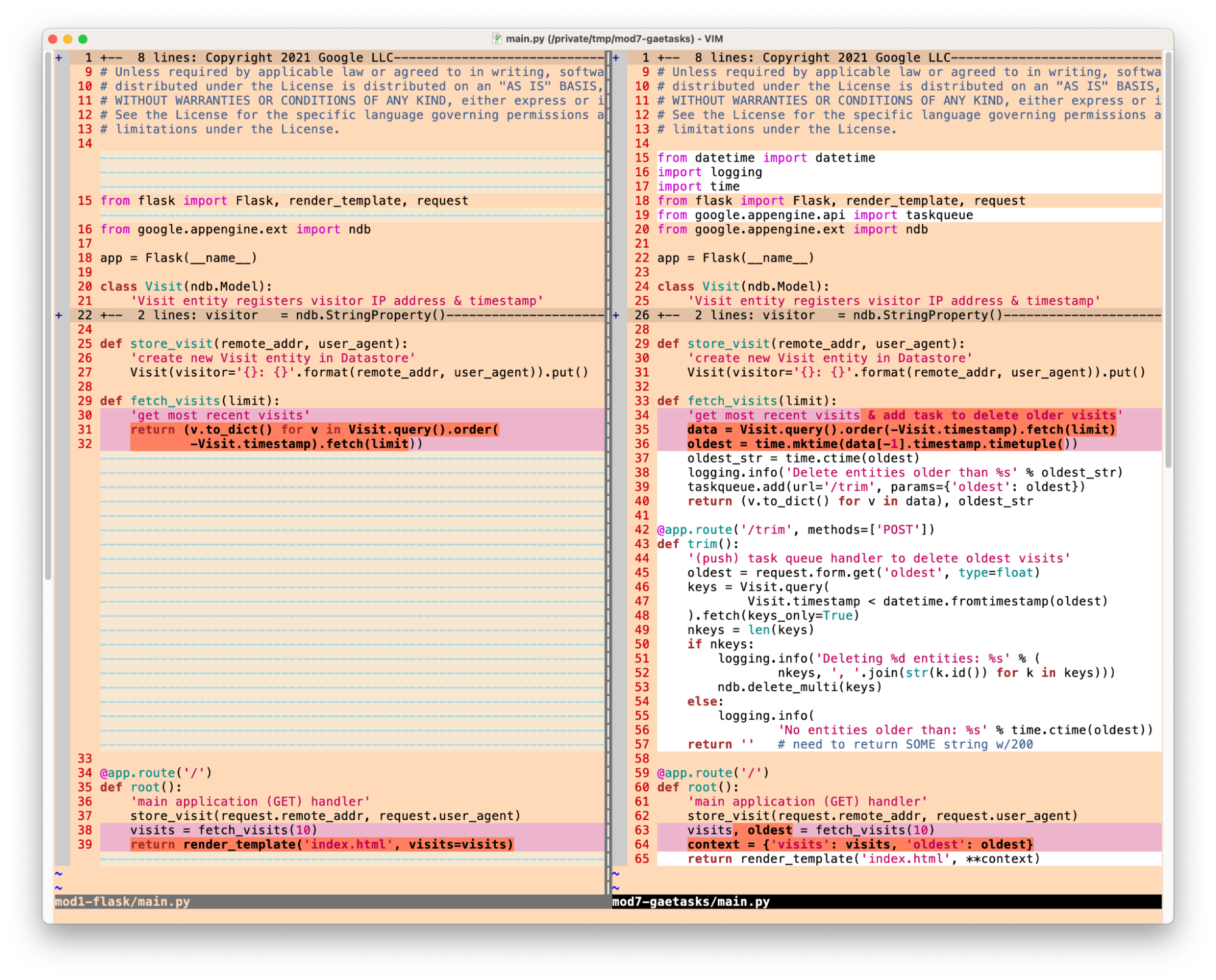
