We’re announcing a new program, the Google ML and Systems Junior Faculty Awards.

A new award from Google for ML and systems pioneers in academia

Free and open source, Gemini CLI brings Gemini directly into developers’ terminals — with unmatched access for individuals.

The latest episode of the Google AI: Release Notes podcast focuses on how the Gemini team built one of the world’s leading AI coding models. Host Logan Kilpatrick chats w…

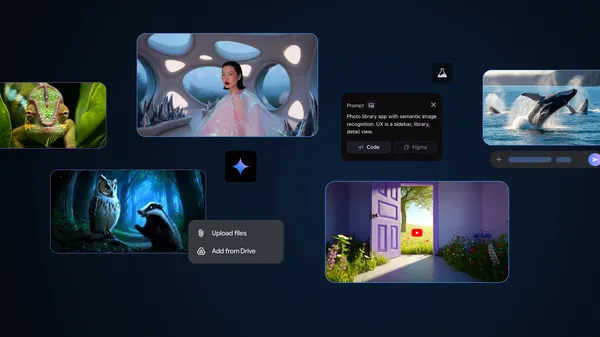

These AI tools from Google I/O 2025 are available globally for your experimentation.

Providing developers with Gemini 2.5 models, personalization capabilities and enhancements to chat.
