Tracking objects in video is a fundamental problem in computer vision, essential to applications such as activity recognition, object interaction, or video stylization. However, teaching a machine to visually track objects is challenging partly because it requires large, labeled tracking datasets for training, which are impractical to annotate at scale.

In “Tracking Emerges by Colorizing Videos”, we introduce a convolutional network that colorizes grayscale videos, but is constrained to copy colors from a single reference frame. In doing so, the network learns to visually track objects automatically without supervision. Importantly, although the model was never trained explicitly for tracking, it can follow multiple objects, track through occlusions, and remain robust over deformations without requiring any labeled training data.


Example tracking predictions on the publicly available academic dataset DAVIS 2017. After learning to colorize videos, a mechanism for tracking automatically emerges without supervision. We specify regions of interest (indicated by different colors) in the first frame, and our model propagates them forward without any additional learning or supervision.

Learning to Recolorize Video

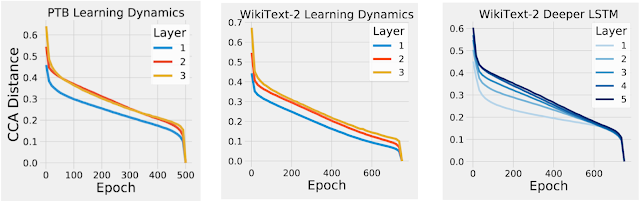

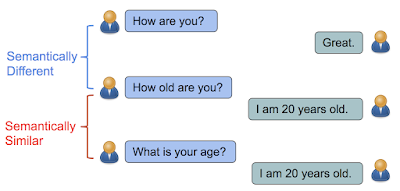

Our hypothesis is that the temporal coherency of color provides excellent large-scale training data for teaching machines to track regions in video. Clearly, there are exceptions when color is not temporally coherent (such as lights turning on suddenly), but in general color is stable over time. Furthermore, most videos contain color, providing a scalable self-supervised learning signal. We remove the color from videos and then add a colorization step: because multiple objects may share the same color, color alone cannot single out an object, but by learning to colorize we can teach machines to track specific objects or regions.

To train our system, we use videos from the Kinetics dataset, which is a large public collection of videos depicting everyday activities. We convert all video frames except the first frame into gray-scale, and train a convolutional network to predict the original colors in the subsequent frames. We expect the model to learn to follow regions in order to accurately recover the original colors. Our main observation is that the need to follow objects for colorization causes a model for object tracking to be learned automatically.
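The text above says only that the network is constrained to copy colors from the reference frame; it does not spell out how. One plausible way to sketch such a copy step, assumed here purely for illustration, is to compare per-pixel embeddings of the two frames and predict each target color as a softmax-weighted mix of reference colors. The function name `copy_colors`, the `temperature` parameter, and the array shapes are hypothetical.

```python
import numpy as np

def copy_colors(ref_emb, tgt_emb, ref_colors, temperature=1.0):
    """Predict target colors as a similarity-weighted mix of reference colors.

    Illustrative sketch only (assumed mechanism, not taken from the post).
    ref_emb:    (N_ref, D) per-pixel embeddings of the color reference frame
    tgt_emb:    (N_tgt, D) per-pixel embeddings of a gray-scale target frame
    ref_colors: (N_ref, C) colors of the reference frame pixels
    """
    # Similarity between every target pixel and every reference pixel.
    logits = tgt_emb @ ref_emb.T / temperature            # (N_tgt, N_ref)
    # Softmax over reference pixels, shifted for numerical stability.
    logits = logits - logits.max(axis=1, keepdims=True)
    weights = np.exp(logits)
    weights /= weights.sum(axis=1, keepdims=True)
    # Each predicted color is a convex combination of reference colors.
    return weights @ ref_colors, weights
```

Because the output can only be built from reference colors, a model trained this way is rewarded for producing embeddings that match each target pixel to the right reference pixel, which is the correspondence a tracker needs.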

We illustrate the video recolorization task using video from the DAVIS 2017 dataset. The model receives as input one color frame and a gray-scale video, and predicts the colors for the rest of the video. The model learns to copy colors from the reference frame, which enables a mechanism for tracking to be learned without human supervision.

Examples of colors predicted by copying from the color reference frame onto the input video, using the publicly available Kinetics dataset.

Although the network is trained without ground-truth identities, our model learns to track any visual region specified in the first frame of a video. We can track outlined objects or a single point in the video. The only change we make is that, instead of propagating colors throughout the video, we now propagate labels representing the regions of interest.
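As a rough sketch of what propagating labels instead of colors could look like, the snippet below assumes that frames are compared through per-pixel embeddings with a softmax over reference pixels. That mechanism, the function name `propagate_labels`, and the array shapes are assumptions for illustration, not details given in the text.

```python
import numpy as np

def propagate_labels(ref_emb, tgt_emb, ref_labels, num_regions):
    """Carry region labels from the first frame to a later frame.

    Illustrative sketch only (assumed mechanism).
    ref_emb:    (N_ref, D) embeddings of first-frame pixels
    tgt_emb:    (N_tgt, D) embeddings of pixels in a later frame
    ref_labels: (N_ref,) integer region id per first-frame pixel
    """
    # One-hot encode the region id of every reference pixel.
    one_hot = np.eye(num_regions)[ref_labels]              # (N_ref, K)
    # Softmax similarity between target and reference pixels.
    logits = tgt_emb @ ref_emb.T
    logits = logits - logits.max(axis=1, keepdims=True)
    weights = np.exp(logits)
    weights /= weights.sum(axis=1, keepdims=True)
    # Mix the one-hot labels exactly as colors would be mixed.
    soft = weights @ one_hot                               # (N_tgt, K)
    # Hard label per target pixel, plus the soft distribution.
    return soft.argmax(axis=1), soft
```

A single tracked point fits the same sketch: it is a region whose mask covers one pixel in the first frame.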

Analyzing the Tracker

Since the model is trained on large amounts of unlabeled video, we want to gain insight into what the model learns. The videos below show a standard trick for visualizing the embeddings learned by our model: projecting them down to three dimensions using Principal Component Analysis (PCA) and plotting the result as an RGB movie. The results show that nearest neighbors in the learned embedding space tend to correspond to object identity, even over deformations and viewpoint changes.
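The PCA-to-RGB projection can be sketched in a few lines of NumPy. The input layout `(T, H, W, D)`, the function name `embeddings_to_rgb`, and the per-channel rescaling to the 0 to 255 range are assumptions made for this example; the sketch also assumes the embedding dimension `D` is at least 3.

```python
import numpy as np

def embeddings_to_rgb(emb):
    """Project per-pixel embeddings to 3 PCA components and render as RGB.

    Illustrative sketch only.
    emb: (T, H, W, D) array of embeddings, with D >= 3
    returns: (T, H, W, 3) uint8 frames
    """
    t, h, w, d = emb.shape
    flat = emb.reshape(-1, d).astype(np.float64)
    # Center the data; PCA directions are the right singular vectors.
    flat = flat - flat.mean(axis=0, keepdims=True)
    _, _, vt = np.linalg.svd(flat, full_matrices=False)
    proj = flat @ vt[:3].T                                 # (T*H*W, 3)
    # Rescale each component to [0, 1] so it can serve as a color channel.
    lo, hi = proj.min(axis=0), proj.max(axis=0)
    proj = (proj - lo) / np.maximum(hi - lo, 1e-12)
    return (proj * 255).astype(np.uint8).reshape(t, h, w, 3)
```

Fitting one projection over all frames, rather than one per frame, keeps the color assigned to a given embedding consistent across the movie.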

Top Row: We show videos from the DAVIS 2017 dataset. Bottom Row: We visualize the internal embeddings from the colorization model. Similar embeddings will have a similar color in this visualization. This suggests the learned embedding is grouping pixels by object identity.

Tracking Pose

We found that the model can also track human poses given key-points in an initial frame. We show results on the publicly available academic dataset JHMDB, where we track a human joint skeleton.


Examples of using the model to track movements of the human skeleton. In this case the input was a human pose for the first frame and subsequent movement is automatically tracked. The model can track human poses even though it was never explicitly trained for this task.

While we do not yet outperform heavily supervised models, the colorization model learns to track video segments and human pose well enough to outperform the latest methods based on optical flow. Breaking down performance by motion type suggests that our model is a more robust tracker than optical flow for many natural complexities, such as dynamic backgrounds, fast motion, and occlusions. Please see the paper for details.

Future Work

Our results show that video colorization provides a signal that can be used for learning to track objects in videos without supervision. Moreover, we found that the failures of our system are correlated with failures to colorize the video, which suggests that further improving the video colorization model can advance progress in self-supervised tracking.

Acknowledgements

This project was only possible thanks to several collaborations at Google. The core team includes Abhinav Shrivastava, Alireza Fathi, Sergio Guadarrama and Kevin Murphy. We also thank David Ross, Bryan Seybold, Chen Sun and Rahul Sukthankar.