At The Check Up, we unveil how we’re using AI to reshape what’s possible in health and help people live healthier lives.

At The Check Up, we unveil how we’re using AI to reshape what’s possible in health and help people live healthier lives.

6 health AI updates we shared at The Check Up

At The Check Up, we unveil how we’re using AI to reshape what’s possible in health and help people live healthier lives.

At The Check Up, we unveil how we’re using AI to reshape what’s possible in health and help people live healthier lives.

Learn how AI is making accurate health information more accessible and personalized and how AI can accelerate scientific discoveries with AI co-scientist.

Learn how AI is making accurate health information more accessible and personalized and how AI can accelerate scientific discoveries with AI co-scientist.

Here are Google’s latest AI updates from February 2025

Here are Google’s latest AI updates from February 2025

Technology like AI is changing the ways we prevent, diagnose and treat diseases to make healthcare more accessible and human, putting people at the heart of innovation.T…

Technology like AI is changing the ways we prevent, diagnose and treat diseases to make healthcare more accessible and human, putting people at the heart of innovation.T…

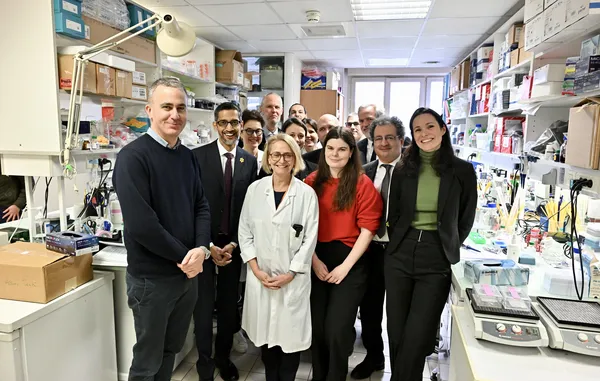

Google and Institut Curie are embarking on a journey together to revolutionize the fight against women’s cancers.

Google and Institut Curie are embarking on a journey together to revolutionize the fight against women’s cancers.

Recap some of Google’s biggest AI news from 2024, including moments from Gemini, NotebookLM, Search and more.

Recap some of Google’s biggest AI news from 2024, including moments from Gemini, NotebookLM, Search and more.

Open Health Stack, a set of open-source tools from Google, allows developers to create digital health solutions for low-resource settings around the world.

Open Health Stack, a set of open-source tools from Google, allows developers to create digital health solutions for low-resource settings around the world.

Like many nations, Singapore faces rising rates of chronic diseases like hypertension and hyperlipidemia — as well as an aging population that increases pressures on the…

Like many nations, Singapore faces rising rates of chronic diseases like hypertension and hyperlipidemia — as well as an aging population that increases pressures on the…

Learn about Google's four-pillar health strategy aimed at improving global health.

Learn about Google's four-pillar health strategy aimed at improving global health.