Last June, we released Motion Stills, an iOS app that uses our video stabilization technology to create easily shareable GIFs from Apple Live Photos. Since then, we integrated Motion Stills into Google Photos for iOS and thought of ways to improve it, taking into account your ideas for new features.

Today, we are happy to announce a major new update to the Motion Stills app that will help you create even more beautiful videos and fun GIFs using motion-tracked text overlays, super-resolution videos, and automatic cinemagraphs.

Motion Text

We’ve added Motion Text so you can create moving text effects, similar to what you might see in movies and TV shows, directly on your phone. With Motion Text, you can easily position text anywhere over your video to get the exact result you want. It only takes a second to initialize while you type, and it tracks at 1000 FPS throughout the whole Live Photo, so the process feels instantaneous.

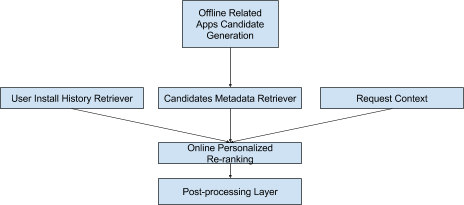

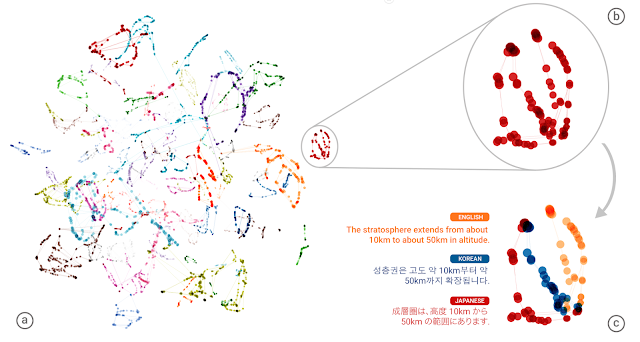

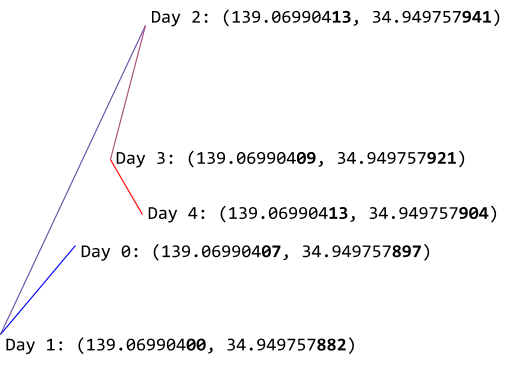

To make this possible, we took the motion tracking technology that we run on YouTube servers for “Privacy Blur” and made it run even faster on your device. How? We first create motion metadata for your video by leveraging machine learning to classify foreground/background features as well as to model temporally coherent camera motion. We then use this metadata as input to an algorithm that can track individual objects while distinguishing each one from the others. The algorithm models each object’s state, which includes its motion in space, an implicit appearance model (described as a set of its moving parts), and its centroid and extent, as shown in the figure below.
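To make the idea of an object state more concrete, here is a minimal Python sketch. It is not the Motion Stills implementation: the `TrackedObject` class, its field names, and the choice to derive motion from the centroid displacement between frames are all assumptions made for illustration. The "moving parts" are represented simply as a list of 2D feature points.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float]


@dataclass
class TrackedObject:
    """Hypothetical per-object state: moving parts, motion, centroid, extent."""

    parts: List[Point]              # feature points that make up the object
    velocity: Point = (0.0, 0.0)    # displacement of the centroid per frame

    @property
    def centroid(self) -> Point:
        # Mean position of all parts.
        n = len(self.parts)
        return (
            sum(x for x, _ in self.parts) / n,
            sum(y for _, y in self.parts) / n,
        )

    @property
    def extent(self) -> Tuple[float, float]:
        # Width and height of the axis-aligned box around the parts.
        xs = [x for x, _ in self.parts]
        ys = [y for _, y in self.parts]
        return (max(xs) - min(xs), max(ys) - min(ys))

    def update(self, new_parts: List[Point]) -> None:
        # Replace the parts with their positions in the next frame and
        # record how far the centroid moved (an assumed motion model).
        old_cx, old_cy = self.centroid
        self.parts = new_parts
        new_cx, new_cy = self.centroid
        self.velocity = (new_cx - old_cx, new_cy - old_cy)
```

In a sketch like this, a text overlay could be anchored at a fixed offset from `centroid`, so that it follows the object as `update` is called once per frame.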

Enhance! your videos with better detail and loops

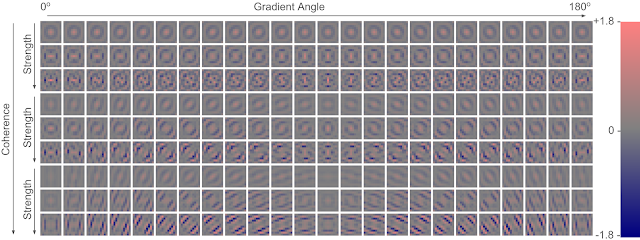

Last month, we published the details of our state-of-the-art RAISR technology, which employs machine learning to create super-resolution detail in images. This technology is now available in Motion Stills, automatically sharpening every video you export.

We are also going beyond stabilization to bring you fully automatic cinemagraphs. After freezing the background into a still photo, we analyze our result to optimize for the perfect loop transition. By considering a range of start and end frames, we build a matrix of transition scores between frame pairs. A significant minimum in this matrix reflects the perfect transition, resulting in an endless loop of motion stillness.
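The loop search described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: frames are flattened lists of grayscale values, the transition score is the mean squared pixel difference between a candidate start frame and a candidate end frame, and the function names (`frame_distance`, `transition_matrix`, `best_loop`) and the `min_length` parameter are hypothetical. The scoring actually used in Motion Stills is not specified here.

```python
from typing import List, Sequence, Tuple

Frame = Sequence[float]  # assumed: one flattened grayscale frame


def frame_distance(a: Frame, b: Frame) -> float:
    """Mean squared difference between two frames of equal size."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def transition_matrix(frames: List[Frame]) -> List[List[float]]:
    """scores[s][e] rates how visible the jump from frame e back to frame s is."""
    n = len(frames)
    return [
        [frame_distance(frames[s], frames[e]) for e in range(n)]
        for s in range(n)
    ]


def best_loop(frames: List[Frame], min_length: int = 2) -> Tuple[int, int]:
    """Return (start, end) with the lowest transition score.

    The loop would play frames start..end-1 and then jump back to start,
    so a low score means frame `end` looks almost like frame `start`.
    """
    scores = transition_matrix(frames)
    best = None
    for s in range(len(frames)):
        for e in range(s + min_length, len(frames)):
            if best is None or scores[s][e] < best[0]:
                best = (scores[s][e], s, e)
    if best is None:
        raise ValueError("not enough frames for a loop of min_length")
    return best[1], best[2]
```

The `min_length` constraint keeps the search from picking a trivially short loop, since neighboring frames nearly always resemble each other.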

Continuing to improve the experience

Thanks to your feedback, we’ve also rebuilt our navigation and added more tutorials. We’ve added support for Apple’s 3D Touch as well, to let you “peek and pop” clips in your stream and movie tray. Lots more is coming to address your top requests, so please download the new release of Motion Stills and keep sending us feedback with #motionstills on your favorite social media.