What’s changing

We’re improving the granularity of Google Meet hardware Admin log events. This upgrade offers a more comprehensive and precise audit trail, enabling you to better track and understand administrative actions related to your Google Meet hardware. This increased visibility will enhance your organization's security and facilitate more effective troubleshooting.

First, the “HANGOUTS DEVICE SETTING” event category is going away and will be replaced with a new event type: “GOOGLE MEET HARDWARE”. This does not apply to “Chromebox for meetings Device Setting Change”, which will move to “APPLICATION SETTING” in a follow-up launch.

The following changes made in the Google Meet hardware Admin console will be logged as an Admin log event under “GOOGLE MEET HARDWARE”:

- Change lifecycle state on Meet device

- Change OU membership of Meet device

- Change properties on Meet device

  - This includes all information found in the Admin console under Devices > Google Meet Hardware > Devices > [Device name] > Device settings.

- Perform bulk action on Meet devices

- Perform command on Meet device

You can also view additional fields related to these new events, including:

- Device ID

- Resource ID(s) for Serial Number

- Device type (will always be ‘meet’)

- Action(s) (if applicable)

- Setting name (if applicable)

- Additional information (if applicable), such as the meeting code

Note that some fields are not visible in the log viewer by default; you can add additional fields using the “Manage columns” button.

In the coming weeks, you will be able to create, change, and delete application settings under “Application Settings”. All changes to settings found in the Admin console under Devices > Google Meet hardware > Settings will be audited here. We will share more details in the coming weeks.

Additional details

In the coming months, we are removing all events under the “HANGOUTS DEVICE SETTINGS” event type because the product name is obsolete and the new events include the same information plus additional data. Until they are removed, you’ll still be able to filter for these events; however, new activity will be captured only under the new “GOOGLE MEET HARDWARE” events.

This table has more details:

| New event name | Associated actions |
| --- | --- |
| Perform command on Meet device | Reboot, Connect to Meeting, Mute, Hangup, Run Diagnostics, Passcode viewed |
| Perform bulk action on Meet devices | *Audit logs will also be created for the individual devices included in a bulk action. |
| Change properties on Meet device | Occupancy detection, noise cancellation, etc. |
| Change lifecycle state on Meet device | Provision or deprovision a Meet device |
| Change OU membership of Meet device | Moving a device from OU to OU |
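
If your scripts classify admin activity by action, the table above can be expressed as a small lookup. The following is a minimal sketch, not an official schema: `EVENT_ACTIONS` and `event_for_action` are hypothetical names, the strings are the display names shown in the table, and the identifiers that log exports or APIs actually return may differ.

```python
from typing import Optional

# Event display names and example actions, copied from the table above.
# Assumption: real log exports may use different identifiers or casing,
# and the "Change properties" list is not exhaustive (the table says "etc.").
EVENT_ACTIONS = {
    "Perform command on Meet device": (
        "Reboot", "Connect to Meeting", "Mute", "Hangup",
        "Run Diagnostics", "Passcode viewed",
    ),
    "Change properties on Meet device": (
        "Occupancy detection", "Noise cancellation",
    ),
    "Change lifecycle state on Meet device": ("Provision", "Deprovision"),
}


def event_for_action(action: str) -> Optional[str]:
    """Return the new event name for an action, or None if it is not listed."""
    wanted = action.strip().lower()
    for event_name, actions in EVENT_ACTIONS.items():
        if any(wanted == known.lower() for known in actions):
            return event_name
    return None
```

For example, `event_for_action("reboot")` returns `"Perform command on Meet device"`, while an action that is not in the table returns `None`, so callers can decide how to handle unknown values.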

Getting started

- Admins: Visit the Help Center to learn more about admin log events.

- End users: There is no end user impact or action required.

Rollout pace

Important note: The new log events will be available in the user interface via the Event filter drop-down under “Google Meet Hardware” beginning July 7, 2025; however, data will remain under the old log events (“Hangouts Device Settings”) until July 21, 2025, when it will start to become available under the new log events. You can use the time in between to update any scripts or rules to align with the new log events.
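
When updating scripts, one option is to choose the event type to filter on from the date range being queried. The sketch below assumes a clean cutover at the start of July 21, 2025 (the actual rollout may be gradual), uses the display names from this post rather than any confirmed API identifiers, and uses a hypothetical helper name, `event_types_for_range`.

```python
from datetime import date
from typing import List

# Date taken from the rollout note above; treated here as a hard cutover,
# which is an assumption.
NEW_DATA_START = date(2025, 7, 21)

# Display names from this post; API or export identifiers may differ.
OLD_EVENT_TYPE = "Hangouts Device Settings"
NEW_EVENT_TYPE = "Google Meet Hardware"


def event_types_for_range(start: date, end: date) -> List[str]:
    """Return the event types to query for activity between start and end (inclusive)."""
    if start > end:
        raise ValueError("start must not be after end")
    types = []
    if start < NEW_DATA_START:
        # Activity before the cutover is recorded under the old event type.
        types.append(OLD_EVENT_TYPE)
    if end >= NEW_DATA_START:
        # Activity from the cutover onward is recorded under the new event type.
        types.append(NEW_EVENT_TYPE)
    return types
```

A range that spans the cutover returns both event types, so a report covering mid-July would query each and merge the results. Because the old events are scheduled for removal in the coming months, historical queries against the old event type may eventually return nothing.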

Availability

- This update impacts all Google Workspace customers with Google Meet hardware devices.

Google Play's Indie Games Fund in Latin America is returning for its fourth year. We're committing $2 million for another 10 indie game studios, bringing our total inves…

Google Play's Indie Games Fund in Latin America is returning for its fourth year. We're committing $2 million for another 10 indie game studios, bringing our total inves…

.png)

.png)

.png)

.png)

Posted by Daniel Trócoli – Google Play Partnerships

Posted by Daniel Trócoli – Google Play Partnerships

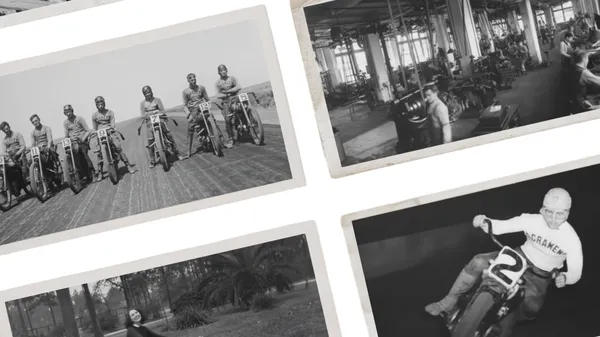

In Moving Archives, we’re bringing the iconic Harley-Davidson Museum archives to life with the help of Veo and Gemini.

In Moving Archives, we’re bringing the iconic Harley-Davidson Museum archives to life with the help of Veo and Gemini.

We’re announcing a new program, the Google ML and Systems Junior Faculty Awards.

We’re announcing a new program, the Google ML and Systems Junior Faculty Awards.

The lack of a reliable standard for safe, effective age checks across sites and apps online has long frustrated parents and companies. Google’s Credential Manager API — …

The lack of a reliable standard for safe, effective age checks across sites and apps online has long frustrated parents and companies. Google’s Credential Manager API — …