Editor’s note: Today is Global Accessibility Awareness Day. We’re also sharing how we’re making education more accessible and launching a new Android accessibility feature.

Over the past nine years, my job has focused on building accessible products and supporting Googlers with disabilities. Along the way, I’ve been constantly reminded of how vast and diverse the disability community is, and how important it is to continue working alongside this community to build technology and solutions that are truly helpful.

Before delving into some of the accessibility features our teams have been building, I want to share how we’re working to be more inclusive of people with disabilities to create more accessible tools overall.

Nothing about us, without us

In the disability community, people often say “nothing about us without us.” It’s a sentiment that I find sums up what disability inclusion means. The types of barriers that people with disabilities face in society vary depending on who they are, where they live and what resources they have access to. No one’s experience is universal. That’s why it’s essential to include a wide array of people with disabilities at every stage of the development process for any of our accessibility products, initiatives or programs.

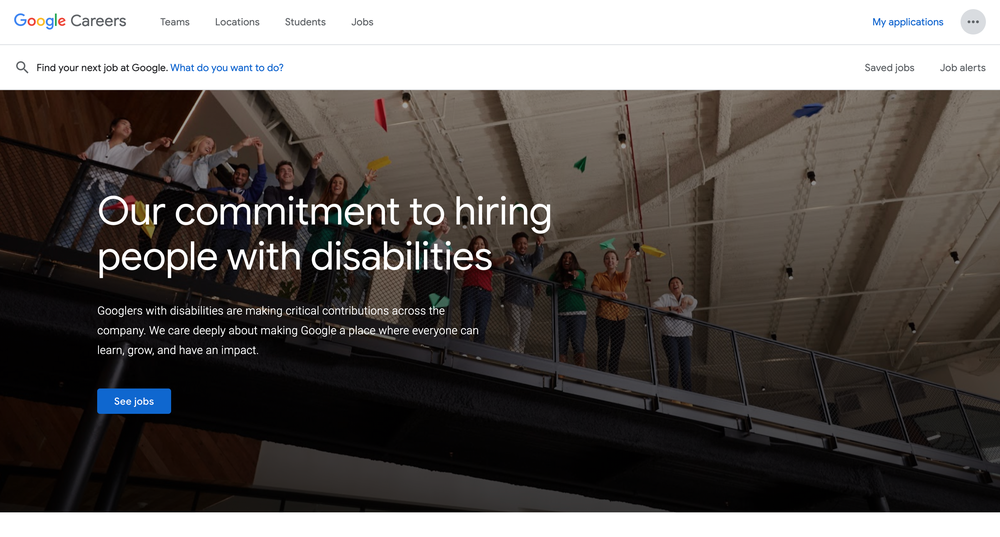

We need to work to make sure our teams at Google are reflective of the people we’re building for. To do so, last year we launched our hiring site geared toward people with disabilities — including our Autism Career Program to further grow and strengthen our autistic community. Most recently, we helped launch the Neurodiversity Career Connector along with other companies to create a job portal that connects neurodiverse candidates to companies that are committed to hiring more inclusively.

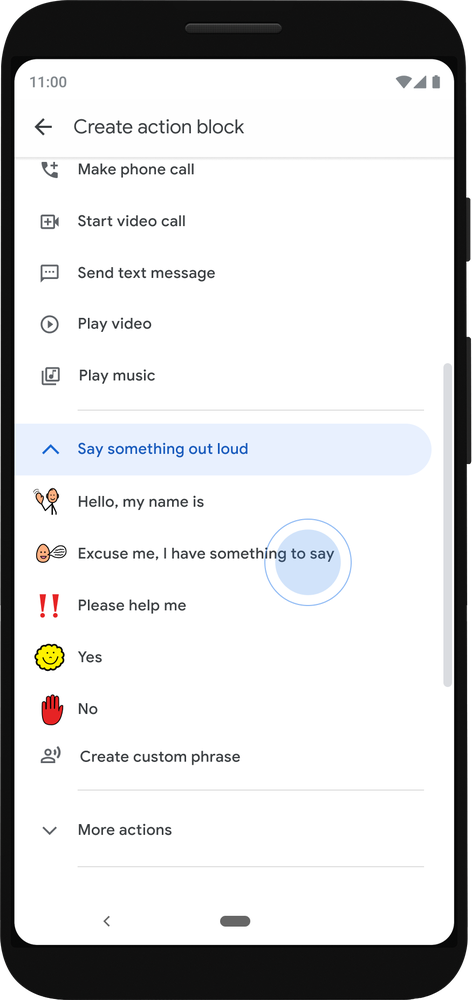

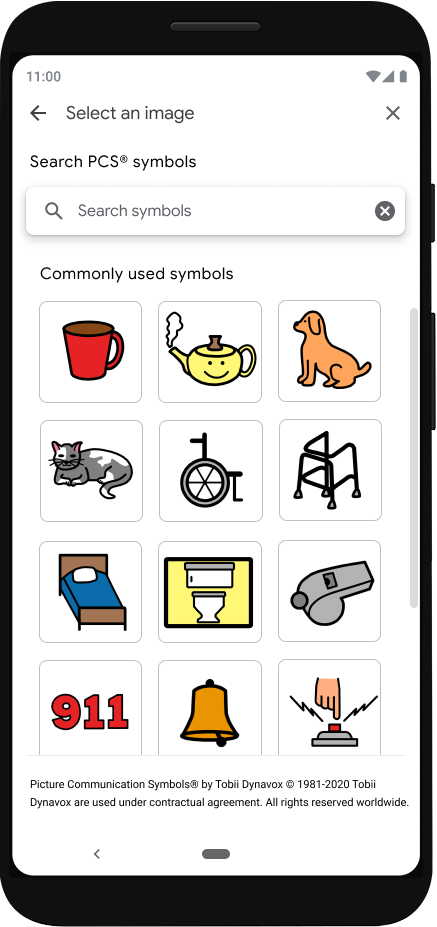

Beyond our internal communities, we also must partner with communities outside of Google so we can learn what is truly useful to different groups and use that understanding to improve current products or create new ones. Those partnerships have resulted in Project Relate, a communication tool for people with speech impairments; a completely new TalkBack, Android’s built-in screen reader; and improvements to Select-to-Speak, a Chromebook tool that lets you hear selected text on your screen spoken out loud.

Equitable experiences for everyone

Engaging and listening to these communities — inside and outside of Google — make it possible to create tools and features like the ones we’re sharing today.

The ability to add alt-text, which is a short description of an image that is read aloud by screen readers, directly to images sent through Gmail starts rolling out today. With this update, people who use screen readers will know what’s being sent to them, whether it’s a GIF celebrating the end of the week or a screenshot of an important graph.

Communication tools that are inclusive of everyone are especially important as teams have shifted to fully virtual or hybrid meetings. Again, everyone experiences these changes differently. We’ve heard from some people who are deaf or hard of hearing that this shift has made it easier to identify who is speaking, something that is often more difficult in person. But people who use ASL have told us that it can be difficult to be in a virtual meeting and simultaneously see both their interpreter and the person speaking to them.

Multi-pin, a new feature in Google Meet, helps solve this. Now you can pin multiple video tiles at once, such as the presenter’s screen and the interpreter’s screen. And like many accessibility features, its usefulness extends beyond people with disabilities. The next time someone is watching a panel and wants to pin multiple people to the screen, this feature makes that possible.

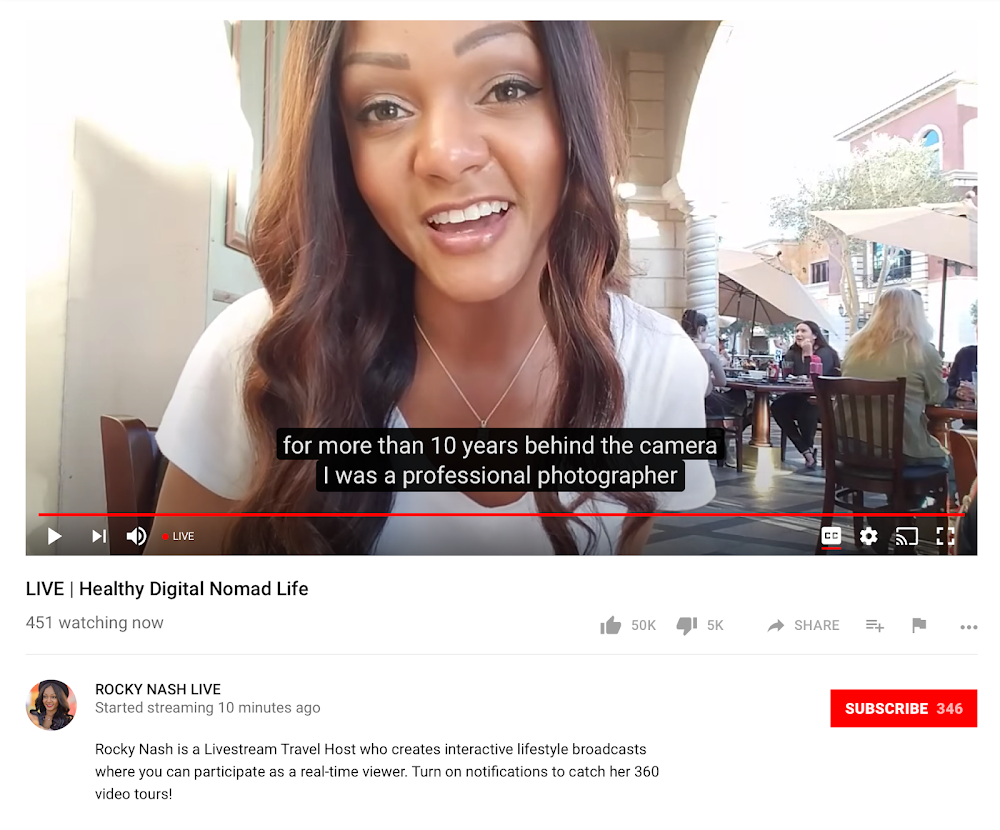

We've also been working to make video content more accessible to people who are blind or low-vision through audio descriptions, which verbally describe what is shown on screen. All of our English-language YouTube Originals content from the past year, and all such content moving forward, will now have English audio descriptions available globally. To turn on the audio description track, click “Settings” at the bottom right of the video player, select “Audio track”, and choose “English descriptive”.

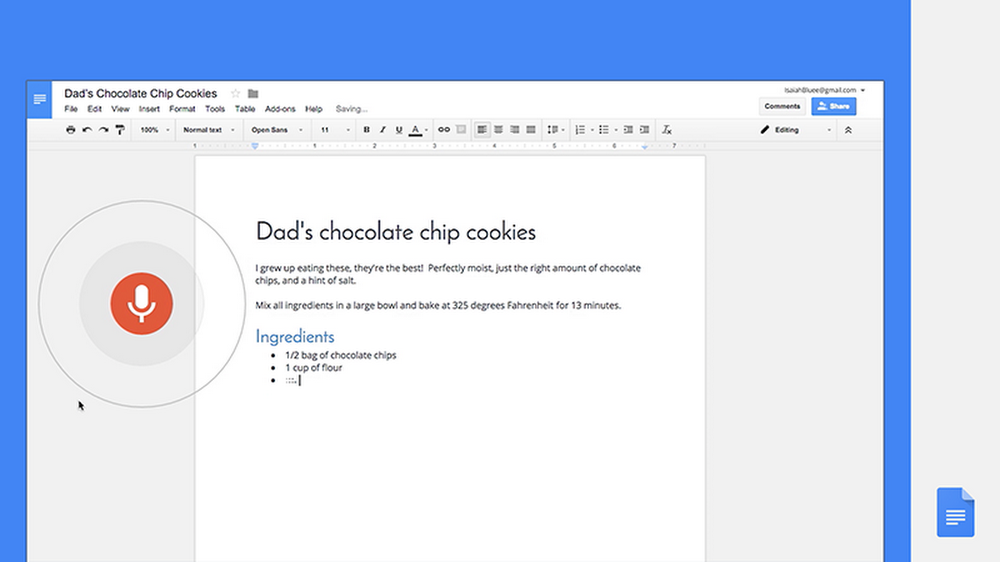

For many people with speech impairments, being understood by the technology that powers tools like voice typing or virtual assistants can be difficult. In 2019, we started work to change that through Project Euphonia, a research initiative that works with community organizations and people with speech impairments to create more inclusive speech recognition models. Today, we’re expanding Project Euphonia’s research to include four more languages: French, Hindi, Japanese and Spanish. With this expansion, we can create even more helpful technology for more people — no matter where they are or what language they speak.

I’ve learned a great deal in my time working in this space, above all the importance of building right alongside the people who will use these tools most. We’ll continue to do that as we work to create a more inclusive and accessible world, both physically and digitally.

Today, we’re launching new products and features aimed at making daily tasks more accessible for people with disabilities.


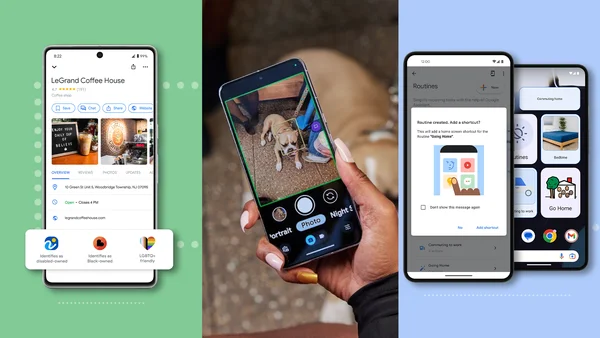

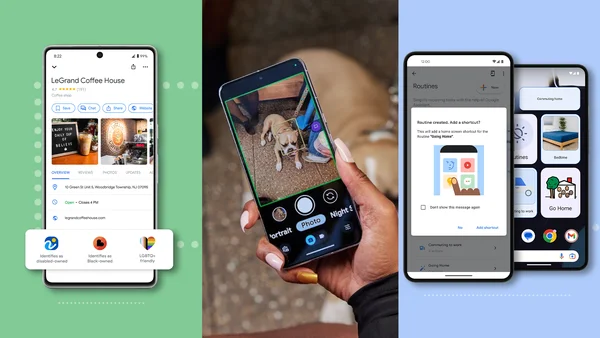

Android is announcing new features rolling out now and in the coming weeks.
