Posted by Jessica Dene Earley-Cha, Mike Bifulco and Toni Klopfenstein, Developer Relations Engineers for Google Assistant

We've reached the end of the year - and what a year it's been! Between all of our live (virtual) events including I/O, developer summits, meetups and more, there are a lot of highlights for App Actions, Smart Home Actions and Conversational Actions. Let's dive in and take a look.

App Actions

App Actions lets developers extend their Android apps to Google Assistant. This year, App Actions began integrating more cleanly with Android by using new Android platform features. With the beta shortcuts.xml configuration resource, expanded support for existing Android features, and our latest Google Assistant plugin for Android Studio, App Actions is moving closer to the Android platform.

App Actions Benefits:

- Display app information on Google surfaces. Provide Android widgets for Assistant to display, offering inline answers, simple confirmations and brief interactions to users without changing context.

- Launch features from Assistant. Connect your app's capabilities to user queries that match predefined semantic patterns, known as built-in intents (BIIs).

- Suggest voice shortcuts from Assistant. Use Assistant to proactively suggest tasks for users to discover or replay, in the right context.

Core Integration

Capabilities is a new Android framework API that lets you declare the types of actions users can take to launch your app and jump directly to a specific task. Assistant provides the first available concrete implementation of the capabilities API. To use capabilities, create a shortcuts.xml resource and define your capabilities in it. Each capability specifies two things: how it is triggered and what to do when it is triggered. To add a capability, you select a built-in intent (BII). BIIs are pre-built language models that provide all the natural language understanding needed to map the user's input to individual fields. When the user's request matches a BII, your capability triggers an Android Intent that delivers the understood BII fields to your app, so you can determine what to show in response.

To support a user query like “Hey Google, find waterfall hikes on ExampleApp,” you can use the GET_THING BII. This BII supports queries that request an “item” and extracts that item from the user query as the parameter thing.name. The GET_THING BII is best suited to searching for things in the app. Below is an example of a capability that uses the GET_THING BII:

    <!-- This is a sample shortcuts.xml -->
    <shortcuts xmlns:android="http://schemas.android.com/apk/res/android">
      <capability android:name="actions.intent.GET_THING">
        <intent
          android:action="android.intent.action.VIEW"
          android:targetPackage="YOUR_UNIQUE_APPLICATION_ID"
          android:targetClass="YOUR_TARGET_CLASS">
          <!-- Eg. name = "waterfall hikes" -->
          <parameter
            android:name="thing.name"
            android:key="name"/>
        </intent>
      </capability>
    </shortcuts>

This framework integration is in the Beta release stage, and will eventually replace the original implementation of App Actions that uses actions.xml. If your app provides both the new shortcuts.xml and old actions.xml, the latter will be disregarded.
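
For a capability to be picked up, the app also has to reference the shortcuts.xml resource from its manifest. The fragment below is a minimal sketch of that step, assuming the file lives at res/xml/shortcuts.xml and is attached to the launcher activity. `.MainActivity` is a hypothetical class name used only for illustration.

```xml
<!-- AndroidManifest.xml (fragment). ".MainActivity" is a placeholder name. -->
<activity
    android:name=".MainActivity"
    android:exported="true">
    <intent-filter>
        <action android:name="android.intent.action.MAIN" />
        <category android:name="android.intent.category.LAUNCHER" />
    </intent-filter>
    <!-- Registers res/xml/shortcuts.xml, where capabilities are declared. -->
    <meta-data
        android:name="android.app.shortcuts"
        android:resource="@xml/shortcuts" />
</activity>
```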

Learn how to add your first capability with this codelab.

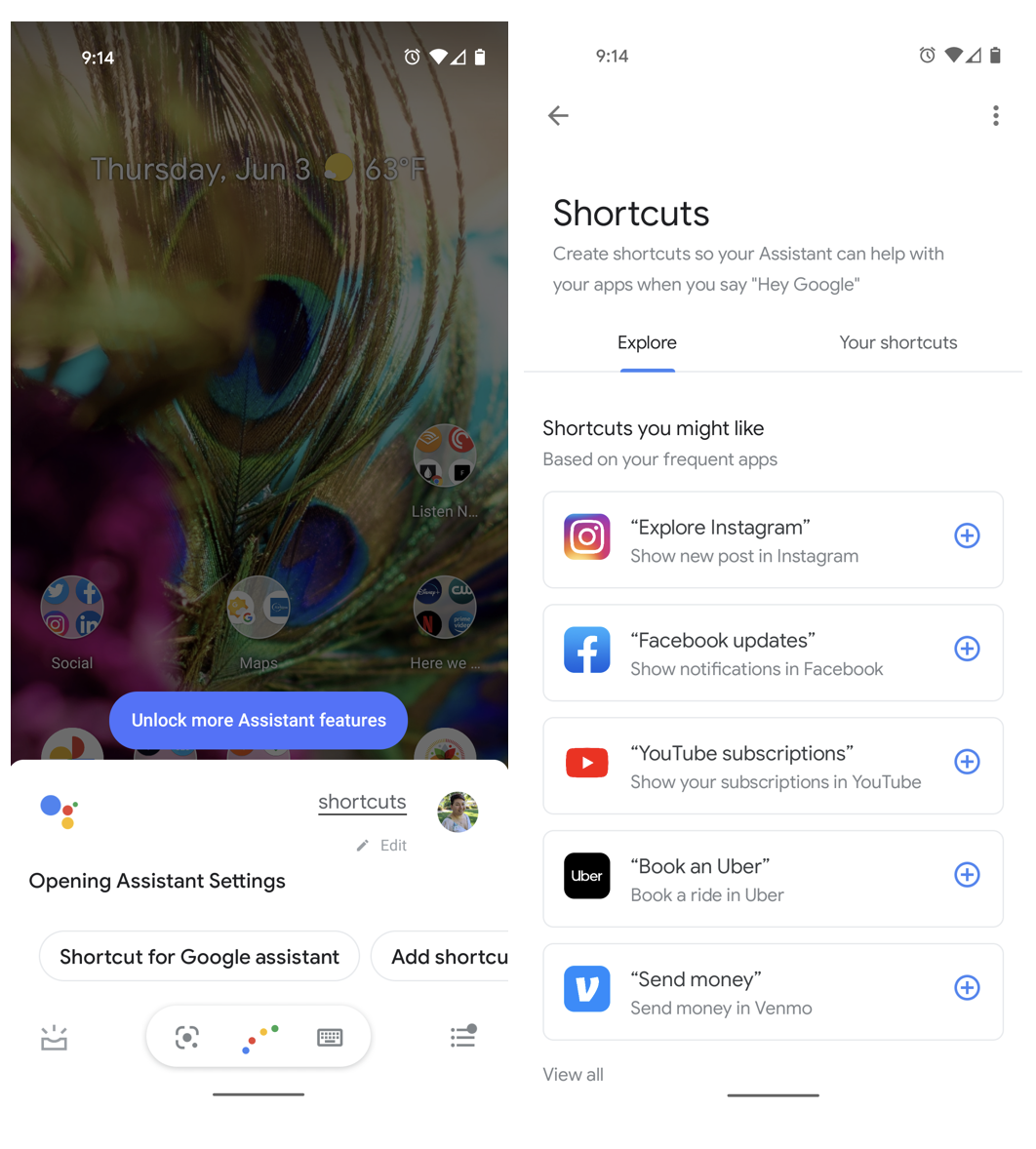

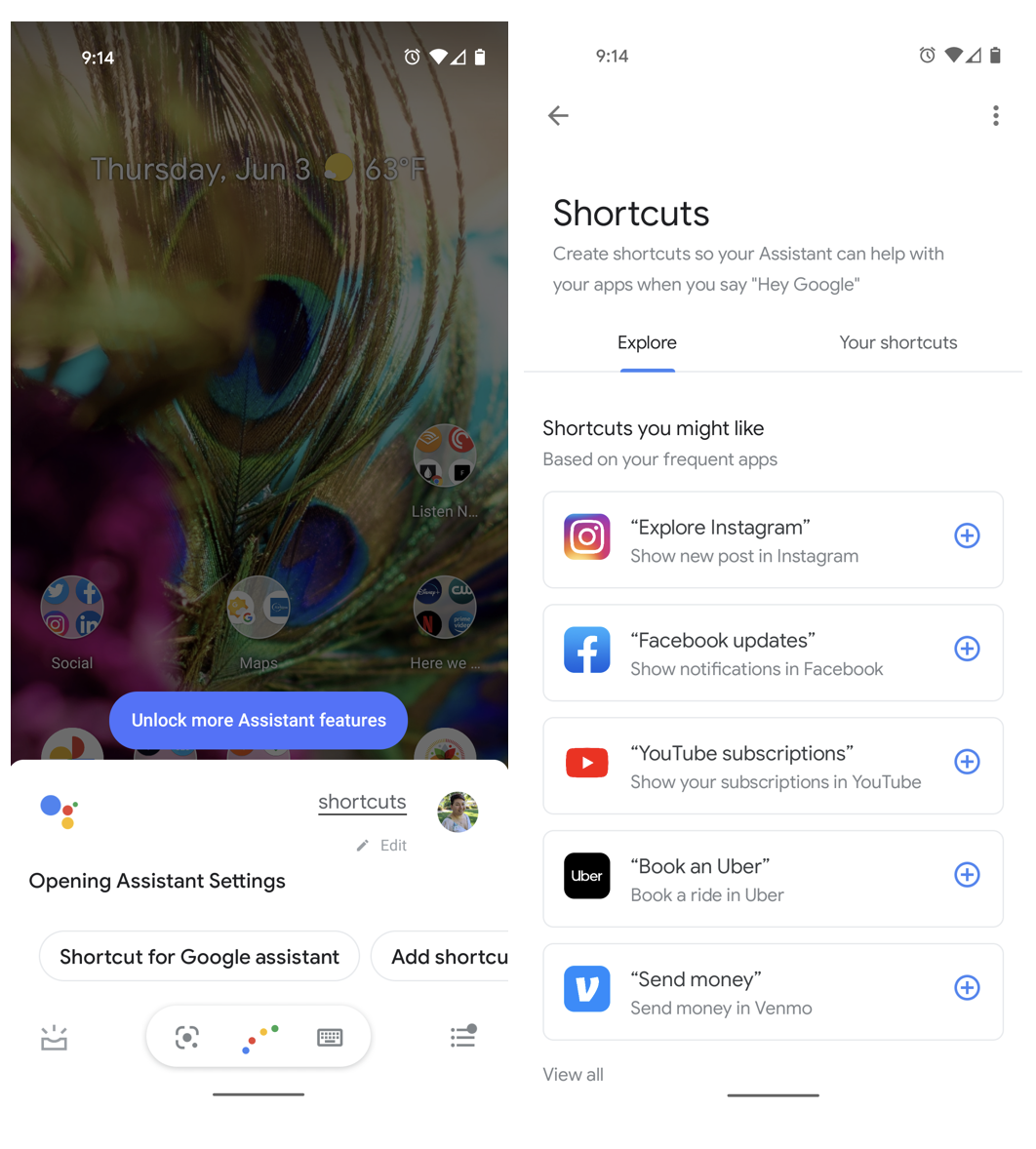

Voice shortcuts

Google Assistant suggests relevant shortcuts to users at contextually relevant moments. Users can see which shortcuts they have by saying “Hey Google, shortcuts.”

Shortcut for Google Assistant

You can use the Google Shortcuts Integration library, currently in beta, to push an unlimited number of dynamic shortcuts to Google so that they are visible to users as voice shortcuts. Assistant can then suggest relevant shortcuts, making it more convenient for users to interact with your Android app.
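
Dynamic shortcuts are pushed from app code through the library. For comparison, a shortcut can also be declared statically in shortcuts.xml and tied to a capability. The fragment below is a rough sketch of that declarative form, assuming a GET_THING capability is declared earlier in the same file. The shortcut ID and the string and array resource names are hypothetical.

```xml
<!-- shortcuts.xml (fragment), placed after the <capability> it binds to. -->
<!-- "WATERFALL_HIKES" and the @string/@array resources are placeholders. -->
<shortcut
    android:shortcutId="WATERFALL_HIKES"
    android:shortcutShortLabel="@string/waterfall_hikes_label">
    <intent
        android:action="android.intent.action.VIEW"
        android:targetPackage="YOUR_UNIQUE_APPLICATION_ID"
        android:targetClass="YOUR_TARGET_CLASS" />
    <!-- Ties this shortcut to the GET_THING capability. -->
    <capability-binding android:key="actions.intent.GET_THING">
        <!-- Values that thing.name should resolve to for this shortcut. -->
        <parameter-binding
            android:key="thing.name"
            android:value="@array/waterfall_hikes_synonyms" />
    </capability-binding>
</shortcut>
```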

Learn how to push your dynamic shortcuts to Assistant with our dynamic shortcuts codelab.

Example of App using Dynamic Shortcuts CodeLab Tool

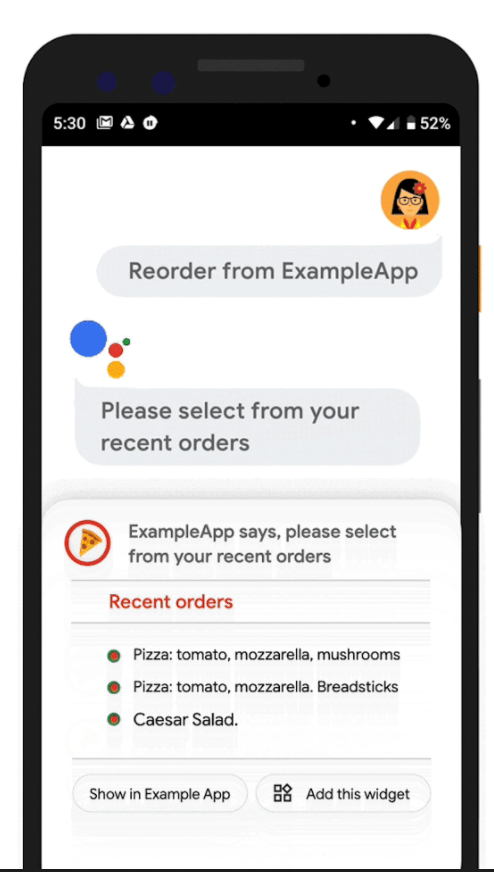

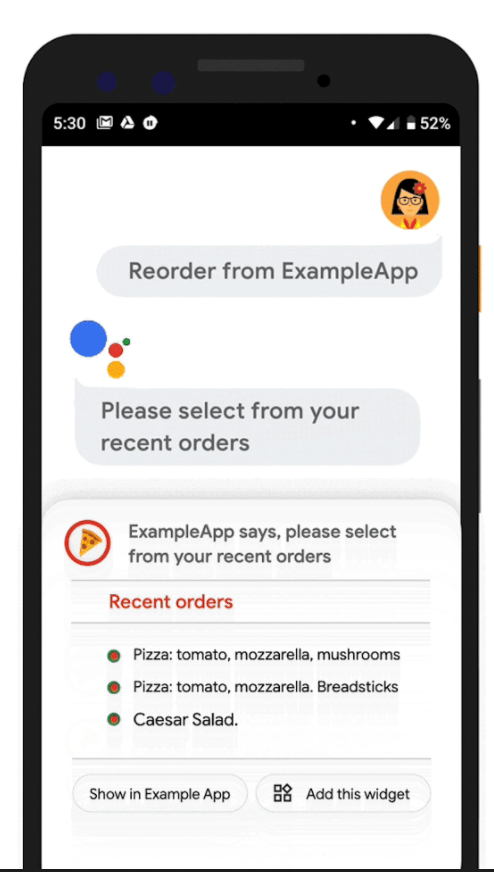

Simple Answers, Hands Free & Android Auto

In situations where users need a hands-free experience, such as on Android Auto, Assistant can display widgets that provide simple answers, brief confirmations and quick interactive experiences in response to a user’s inquiry. These widgets are displayed within the Assistant UI. To implement a fully voice-forward interaction with your app, you can arrange for Assistant to speak a response along with your widget, which is safe and natural for use in automobiles. Widgets are also a great re-engagement feature, because an “Add this widget” chip can be included too!
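
In shortcuts.xml, widget fulfillment is declared with an app-widget element inside a capability. The following is a hedged sketch rather than a complete integration: the BII and parameter follow the fitness examples in the App Actions documentation, while the identifier, the key name and the placeholder package and class values are assumptions. The hasTts extra indicates that the app intends to supply its own spoken response.

```xml
<capability android:name="actions.intent.GET_EXERCISE_OBSERVATION">
    <app-widget
        android:identifier="GET_EXERCISE_OBSERVATION"
        android:targetPackage="YOUR_UNIQUE_APPLICATION_ID"
        android:targetClass="YOUR_WIDGET_PROVIDER_CLASS">
        <!-- Delivers the recognized exercise name to the widget provider. -->
        <parameter
            android:name="exerciseObservation.aboutExercise.name"
            android:key="exerciseName" />
        <!-- Signals that the app provides its own text-to-speech response. -->
        <extra android:name="hasTts" android:value="true" />
    </app-widget>
</capability>
```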

Example of App using Dynamic Shortcuts CodeLab Tool

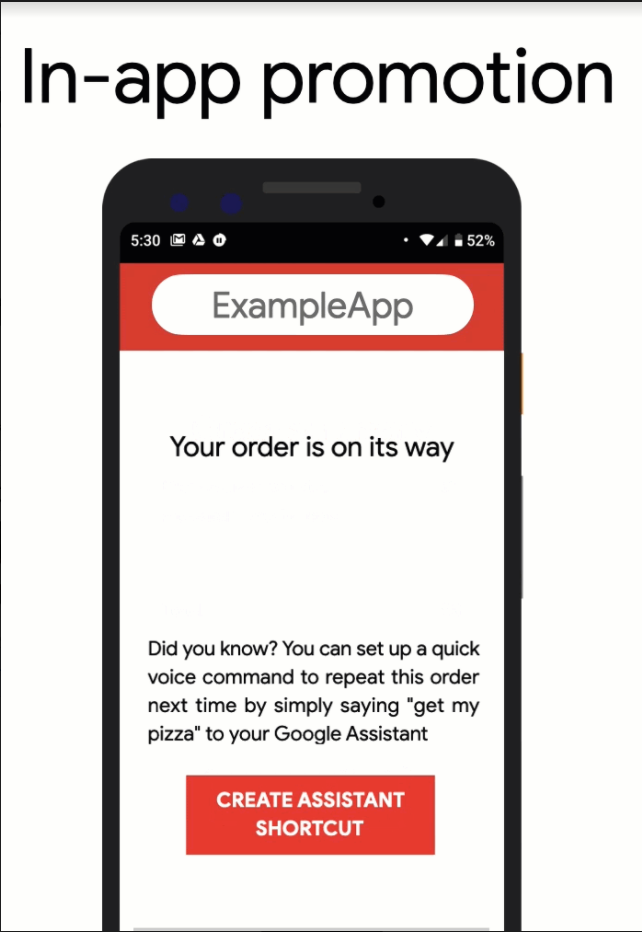

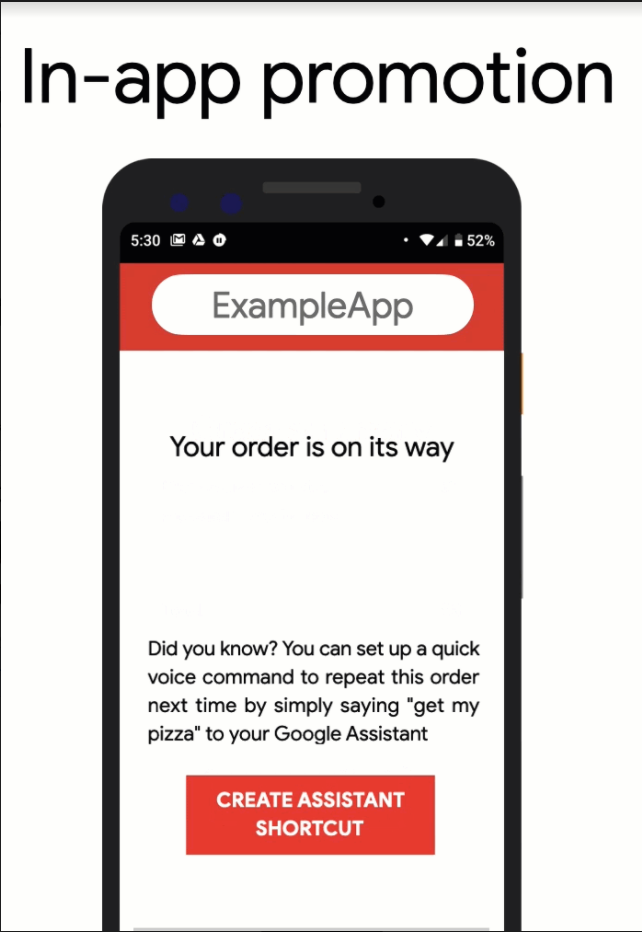

Re-engagement

Another re-engagement tool is the In-App Promo SDK, currently in beta. With it, you can proactively suggest shortcuts in your app for actions that the user can repeat with a voice command to Assistant. The SDK lets you check whether the shortcut you want to suggest already exists for that user, and prompt the user to create the suggested shortcut.

New Tooling

To support testing capabilities, we launched the Google Assistant plugin for Android Studio. It contains an updated App Actions test tool that creates a preview of your App Action, so you can test an integration before publishing it to the Play Store.

New App Actions resources

Learn more with new or updated content:

Smart Home Actions

A big focus of this year's Smart Home launches was new and updated tools. At events like I/O, Works With: SiLabs, and the Google Smart Home Developer Summit, we shared these new resources to help you quickly build a high-quality smart home integration.

New Resources

To make implementing new features even easier for developers, we released many new tools to help you get your Smart Home Action up and running.

To help consumers discover Google-compatible smart home devices and associated routines, we released the smart home directory, accessible on the web and through the Google Home app.

We heard your requests for more ways to localize your integrations, so we added sample utterances in English (en-US), German (de-DE), and French (fr-FR) to several device guides. We also rolled out Chinese (zh-TW) as one of the supported languages for the overall platform. To make our documentation more accessible, we added a Japanese translation of our developer guides.

We also released several new device types and traits, along with new features to support your integrations, including proactive and follow-up responses, app discovery and deep linking.

Quality Improvements

For general onboarding, we've added three new codelabs that let you dive deeper into debugging and monitoring your projects. You can now walk through debugging smart home Actions, debugging local fulfillment Actions, and exploring the log-based metrics for your Actions.

When you're actively developing your integration, the Google Home Playground can simulate a virtual home with configurable device types and traits. Here you can view the types and traits in Home Graph, modify device attributes, and share device configurations.

To help when you discover issues with your configuration, we've continued upgrading the monitoring and logging dashboards to show detailed views of events in your integrations, along with better guidance on how to handle errors and exceptions.

The WebRTC Validator Tool acts as a WebRTC peer to stream to or from, and generally emulates the WebRTC player on smart displays with Google Assistant. If you're specifically working with a smart camera, WebRTC is now supported on the CameraStream trait.

Local Home

To keep improving the quality of responses to user queries, we added support for local queries and responses to the Local Home SDK. Additionally, to help users onboard new devices in their homes quickly and use Google Nest devices as local hubs, we launched BLE Seamless Setup.

Matter

The new Google Home IDE improves your development process by giving you in-IDE access to Google Assistant Simulator, Cloud Logging, and more for each of your projects. This plugin is available for VS Code.

Finally, as we get closer to the official launch of the Matter protocol, we're working hard to unify all of our smart home ecosystem tools under a single name: Google Home. The Google Home Developer Center will enable you to quickly find resources for integrating your Matter-compatible smart devices and platforms with Nest, Android, the Google Home app, and Google Assistant.

Conversational Actions

Way back in January of 2021, we rolled out an updated Actions for Families program, which provides guidelines for teams building Actions meant for kids. Conversational Actions that are approved for the Actions for Families program get a special badge in the Assistant Directory, which lets parents know that your Action is family-friendly.

During the What's New in Google Assistant keynote at Google I/O, Rebecca Nathenson, Director of Product for the Google Assistant Developer Platform, mentioned several coming updates and changes for Conversational Actions. These included the launch of a Developer Preview for a new client-side fulfillment model for Interactive Canvas. Client-side fulfillment changes the implementation strategy for Interactive Canvas apps, removing the need for a webhook to relay information between the Assistant NLU and the web application. This simplifies the infrastructure needed to deploy an Action that uses Interactive Canvas. Since the release of this Developer Preview, we’ve been listening closely to developers to get feedback on client-side fulfillment.

Interactive Canvas Developer Tools

We also released Interactive Canvas Developer tools - a Chrome extension which can help dev teams mock and debug the web app side of Interactive Canvas apps and games. Best of all, it’s open source! You can install the dev tools from the Chrome Web Store, or compile them from source yourself on GitHub at actions-on-google/interactive-canvas-dev-tools.

Updates to SSML

Earlier this year we announced support for new SSML features in Conversational Actions. This expanded support lets you build more detailed and nuanced features using text to speech. We produced a short demonstration of SSML features on YouTube, and you can find more in our docs on SSML if you’re ready to dive in and start building.
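
As a small illustration of what SSML markup looks like, the snippet below uses long-standing elements from the SSML specification (break, emphasis and prosody). It is only a sketch with made-up prompt text, and it does not show which features were newly added this year; check the SSML docs for the elements supported in Conversational Actions.

```xml
<speak>
  Welcome back.
  <!-- Pause for half a second before the next sentence. -->
  <break time="500ms"/>
  Your order is <emphasis level="strong">ready</emphasis>.
  <!-- Slow down and lower the pitch for the closing line. -->
  <prosody rate="slow" pitch="-2st">Take your time picking it up.</prosody>
</speak>
```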

Updates to Transaction UX for Smart Displays

Also at I/O, we announced an updated workflow for completing transactions on smart displays in Conversational Actions. The new transaction process lets users complete transactions from their smart displays by confirming the CVC code of their chosen payment method, rather than using a phone to enter a CVC code. If you’d like to get an idea of what the new process looks like, check out our demo video showing new transaction features on smart devices.

Tips on Launching your Conversational Action

“Driving a successful launch for Conversational Actions” contains information to help you think through strategies for putting together a marketing team and a go-to-market plan for releasing your Conversational Action.

Looking forward to 2022

We're looking forward to another exciting year in 2022. To stay connected, sign up for our new App Actions email series or Google Home newsletter, or for the general Assistant newsletter.

As always, you can also join us on Reddit or follow us on Twitter. Happy Holidays!

Posted by John Richardson, Partner Engineer
