Author Archives: Prabhakar Raghavan

Search outside the box: How we’re making Search more natural and intuitive

For over two decades, we've dedicated ourselves to our mission: to organize the world’s information and make it universally accessible and useful. We started with text search, but over time, we've continued to create more natural and intuitive ways to find information — you can now search what you see with your camera, or ask a question aloud with your voice.

At Search On today, we showed how advancements in artificial intelligence are enabling us to transform our information products yet again. We're going far beyond the search box to create search experiences that work more like our minds, and that are as multidimensional as we are as people.

We envision a world in which you’ll be able to find exactly what you’re looking for by combining images, sounds, text and speech, just like people do naturally. You’ll be able to ask questions, with fewer words — or even none at all — and we’ll still understand exactly what you mean. And you’ll be able to explore information organized in a way that makes sense to you.

We call this making search more natural and intuitive, and we’re on a long-term path to bring this vision to life for people everywhere. To give you an idea of how we’re evolving the future of our information products, here are three highlights from what we showed today at Search On.

Making visual search work more naturally

Cameras have been around for hundreds of years, and they’re usually thought of as a way to preserve memories, or these days, create content. But a camera is also a powerful way to access information and understand the world around you — so much so that your camera is your next keyboard. That’s why in 2017 we introduced Lens, so you can search what you see using your camera or an image. Now, the age of visual search is here — in fact, people use Lens to answer 8 billion questions every month.

We’re making visual search even more natural with multisearch, a completely new way to search using images and text simultaneously, similar to how you might point at something and ask a friend a question about it. We introduced multisearch earlier this year as a beta in the U.S., and at Search On, we announced we’re expanding it to more than 70 languages in the coming months. We’re taking this capability even further with “multisearch near me,” enabling you to take a picture of an unfamiliar item, such as a dish or plant, then find it at a local place nearby, like a restaurant or gardening shop. We will start rolling “multisearch near me” out in English in the U.S. this fall.

Multisearch enables a completely new way to search using images and text simultaneously.

Translating the world around you

One of the most powerful aspects of visual understanding is its ability to break down language barriers. With advancements in AI, we’ve gone beyond translating text to translating pictures. People already use Google to translate text in images over 1 billion times a month, across more than 100 languages — so they can instantly read storefronts, menus, signs and more.

But often, it’s the combination of words plus context, like background images, that brings meaning. We’re now able to blend translated text into the background image thanks to a machine learning technology called Generative Adversarial Networks (GANs). So if you point your camera at a magazine in another language, for example, you’ll now see translated text realistically overlaid onto the pictures underneath.

With the new Lens translation update, you’ll now see translated text realistically overlaid onto the pictures underneath.

Exploring the world with immersive view

Our quest to create more natural and intuitive experiences also extends to helping you explore the real world. Thanks to advancements in computer vision and predictive models, we're completely reimagining what a map can be. This means you’ll see our 2D map evolve into a multi-dimensional view of the real world, one that allows you to experience a place as if you are there.

Just as live traffic in navigation made Google Maps dramatically more helpful, we’re making another significant advancement in mapping by bringing helpful insights, like weather and how busy a place is, to life with immersive view in Google Maps. With this new experience, you can get a feel for a place before you even set foot inside, so you can confidently decide when and where to go.

Say you’re interested in meeting a friend at a restaurant. You can zoom into the neighborhood and restaurant to get a feel for what it might be like at the date and time you plan to meet up, visualizing things like the weather and learning how busy it might be. By fusing our advanced imagery of the world with our predictive models, we can give you a feel for what a place will be like tomorrow, next week, or even next month. We’re expanding the first iteration of this with aerial views of 250 landmarks today, and immersive view will come to five major cities in the coming months, with more on the way.

Immersive view in Google Maps helps you get a feel for a place before you even visit.

These announcements, along with many others introduced at Search On, are just the start of how we’re transforming our products to help you go beyond the traditional search box. We’re steadfast in our pursuit to create technology that adapts to you and your life — to help you make sense of information in ways that are most natural to you.

Source: The Official Google Blog

Search your world, any way and anywhere

People have always gathered information in a variety of ways — from talking to others, to observing the world around them, to, of course, searching online. Though typing words into a search box has become second nature for many of us, it’s far from the most natural way to express what we need. For example, if I’m walking down the street and see an interesting tree, I might point to it and ask a friend what species it is and if they know of any nearby nurseries that might sell seeds. If I were to express that question to a search engine just a few years ago… well, it would have taken a lot of queries.

But we’ve been working hard to change that. We've already started on a journey to make searching more natural. Whether you're humming the tune that's been stuck in your head, or using Google Lens to search visually (which now happens more than 8 billion times per month!), there are more ways to search and explore information than ever before.

Today, we're redefining Google Search yet again, combining our understanding of all types of information — text, voice, visual and more — so you can find helpful information about whatever you see, hear and experience, in whichever ways are most intuitive to you. We envision a future where you can search your whole world, any way and anywhere.

Find local information with multisearch

The recent launch of multisearch, one of our most significant updates to Search in several years, is a milestone on this path. In the Google app, you can search with images and text at the same time — similar to how you might point at something and ask a friend about it.

Now we’re adding a way to find local information with multisearch, so you can uncover what you need from the millions of local businesses on Google. You’ll be able to use a picture or screenshot and add “near me” to see options for local restaurants or retailers that have the apparel, home goods and food you’re looking for.

Later this year, you’ll be able to find local information with multisearch.

For example, say you see a colorful dish online you’d like to try – but you don’t know what’s in it, or what it’s called. When you use multisearch to find it near you, Google scans millions of images and reviews posted on web pages, and from our community of Maps contributors, to find results about nearby spots that offer the dish so you can go enjoy it for yourself.

Local information in multisearch will be available globally later this year in English, and will expand to more languages over time.

Get a more complete picture with scene exploration

Today, when you search visually with Google, we’re able to recognize objects captured in a single frame. But sometimes, you might want information about a whole scene in front of you.

In the future, with an advancement called “scene exploration,” you’ll be able to use multisearch to pan your camera and instantly glean insights about multiple objects in a wider scene.

In the future, “scene exploration” will help you uncover insights across multiple objects in a scene at the same time.

Imagine you’re trying to pick out the perfect candy bar for your friend who's a bit of a chocolate connoisseur. You know they love dark chocolate but dislike nuts, and you want to get them something of quality. With scene exploration, you’ll be able to scan the entire shelf with your phone’s camera and see helpful insights overlaid in front of you. Scene exploration is a powerful breakthrough in our devices’ ability to understand the world the way we do – so you can easily find what you’re looking for – and we look forward to bringing it to multisearch in the future.

These are some of the latest steps we’re taking to help you search any way and anywhere. But there’s more we’re doing, beyond Search. AI advancements are helping bridge the physical and digital worlds in Google Maps, and making it possible to interact with the Google Assistant more naturally and intuitively. To ensure information is truly useful for people from all communities, it’s also critical for people to see themselves represented in the results they find. Underpinning all these efforts is our commitment to helping you search safely, with new ways to control your online presence and information.

Source: The Official Google Blog

How AI is making information more useful

Today, there’s more information accessible at people’s fingertips than at any point in human history. And advances in artificial intelligence will radically transform the way we use that information, with the ability to uncover new insights that can help us both in our daily lives and in the ways we are able to tackle complex global challenges.

At our Search On livestream event today, we shared how we’re bringing the latest in AI to Google’s products, giving people new ways to search and explore information in more natural and intuitive ways.

Making multimodal search possible with MUM

Earlier this year at Google I/O, we announced we’ve reached a critical milestone for understanding information with Multitask Unified Model, or MUM for short.

We’ve been experimenting with using MUM’s capabilities to make our products more helpful and enable entirely new ways to search. Today, we’re sharing an early look at what will be possible with MUM.

In the coming months, we’ll introduce a new way to search visually, with the ability to ask questions about what you see. Here are a couple of examples of what will be possible with MUM.

With this new capability, you can tap on the Lens icon when you’re looking at a picture of a shirt, and ask Google to find you the same pattern — but on another article of clothing, like socks. This helps when you’re looking for something that might be difficult to describe accurately with words alone. You could type “white floral Victorian socks,” but you might not find the exact pattern you’re looking for. By combining images and text into a single query, we’re making it easier to search visually and express your questions in more natural ways.

Some questions are even trickier: Your bike has a broken thingamajig, and you need some guidance on how to fix it. Instead of poring over catalogs of parts and then looking for a tutorial, the point-and-ask mode of searching will make it easier to find the exact moment in a video that can help.

Helping you explore with a redesigned Search page

We’re also announcing how we’re applying AI advances like MUM to redesign Google Search. These new features are the latest steps we’re taking to make searching more natural and intuitive.

First, we’re making it easier to explore and understand new topics with “Things to know.” Let’s say you want to decorate your apartment, and you’re interested in learning more about creating acrylic paintings.

If you search for “acrylic painting,” Google understands how people typically explore this topic, and shows the aspects people are likely to look at first. For example, we can identify more than 350 topics related to acrylic painting, and help you find the right path to take.

We’ll be launching this feature in the coming months. In the future, MUM will unlock deeper insights you might not have known to search for — like “how to make acrylic paintings with household items” — and connect you with content on the web that you wouldn’t have otherwise found.

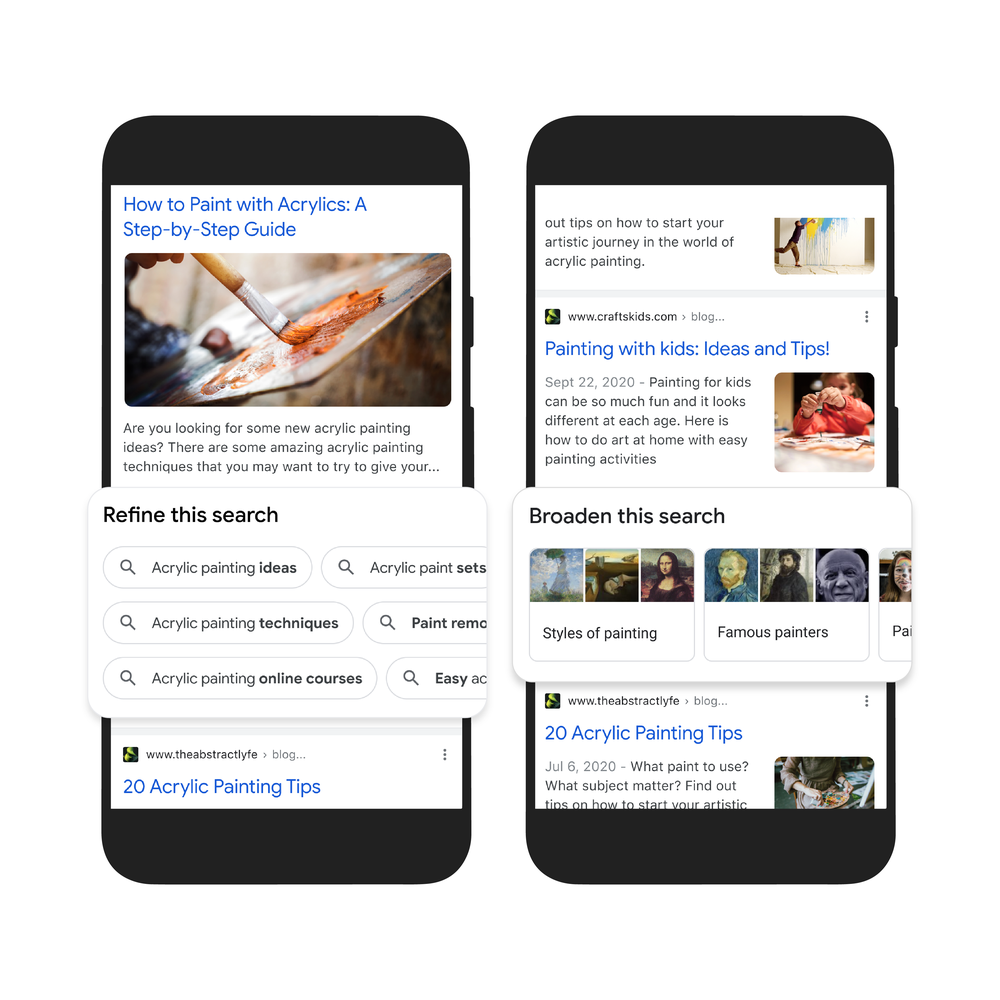

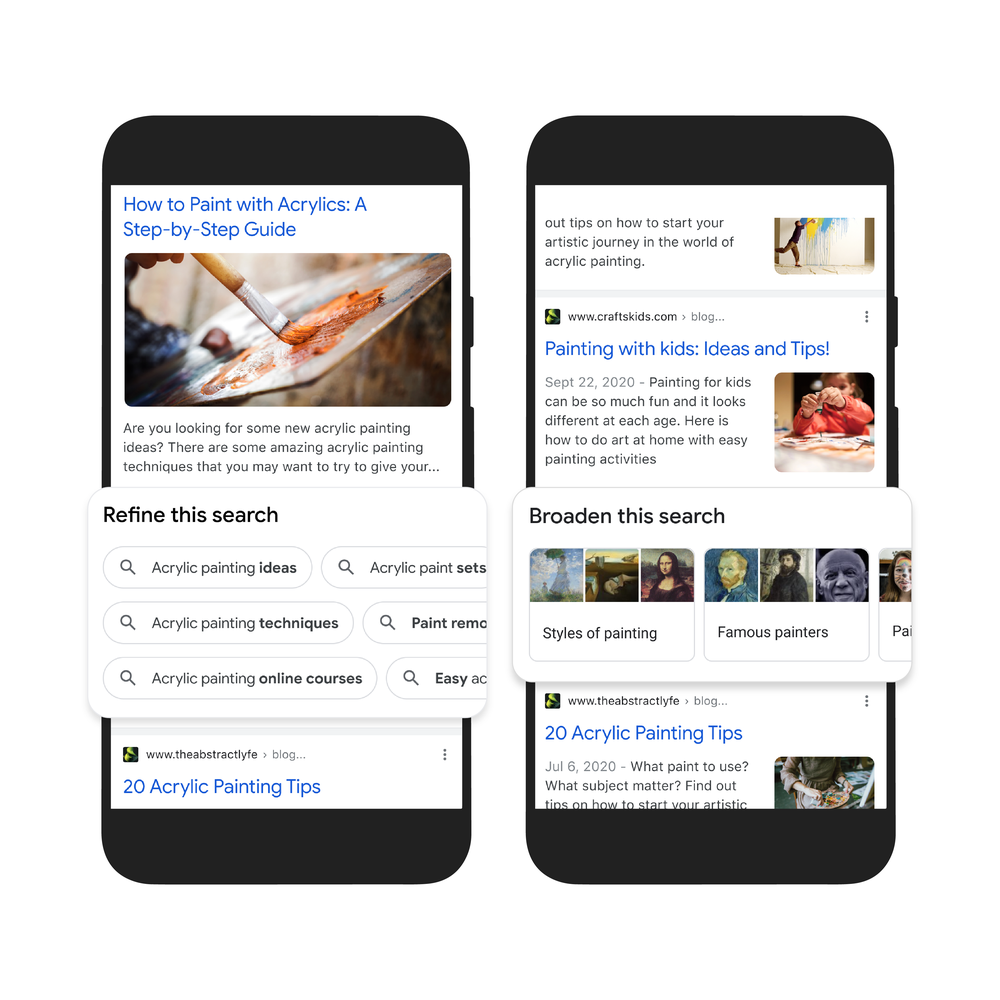

Second, to help you further explore ideas, we’re making it easy to zoom in and out of a topic with new features to refine and broaden searches.

In this case, you can learn more about specific techniques, like puddle pouring, or art classes you can take. You can also broaden your search to see other related topics, like other painting methods and famous painters. These features will launch in the coming months.

Third, we’re making it easier to find visual inspiration with a newly designed, browsable results page. If puddle pouring caught your eye, just search for “pour painting ideas” to see a visually rich page full of ideas from across the web, with articles, images, videos and more that you can easily scroll through.

This new visual results page is designed for searches where you’re looking for inspiration, like “Halloween decorating ideas” or “indoor vertical garden ideas,” and you can try it today.

Get more from videos

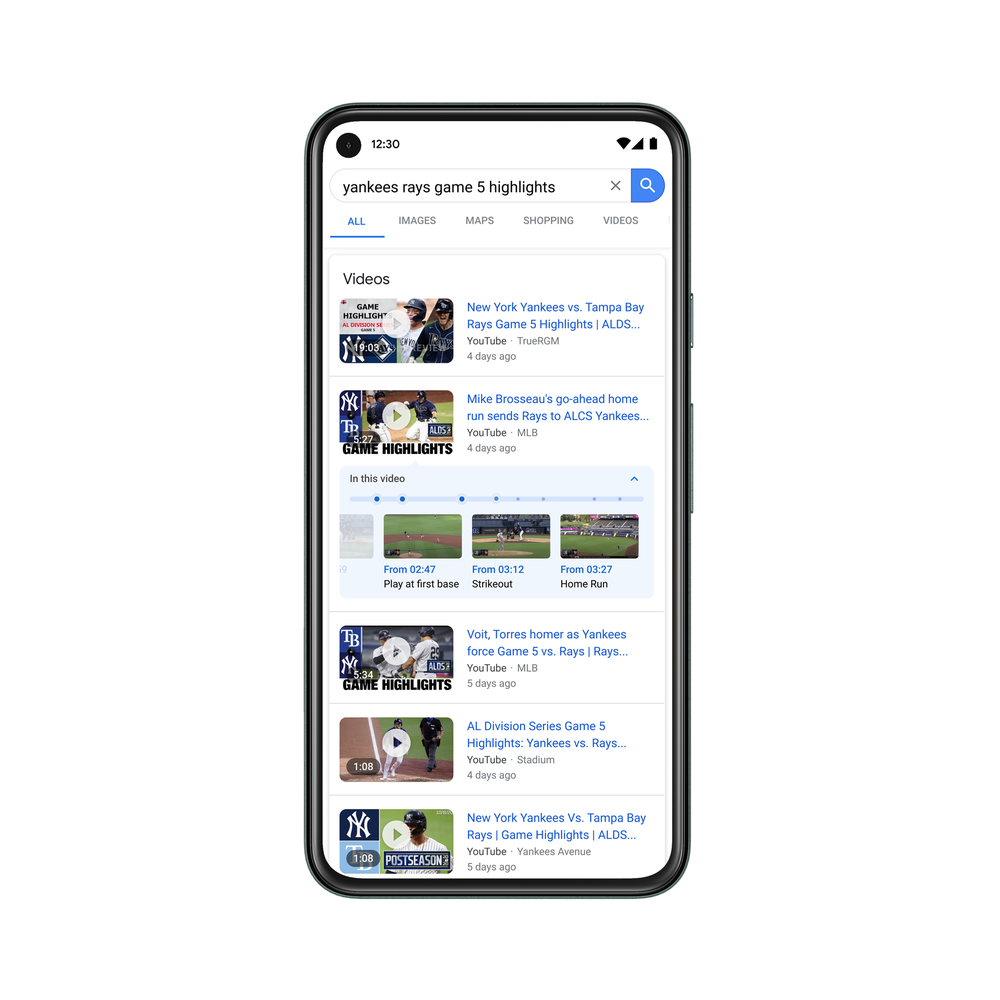

We already use advanced AI systems to identify key moments in videos, like the winning shot in a basketball game, or steps in a recipe. Today, we’re taking this a step further, introducing a new experience that identifies related topics in a video, with links to easily dig deeper and learn more.

Using MUM, we can even show related topics that aren’t explicitly mentioned in the video, based on our advanced understanding of information in the video. In this example, while the video doesn’t say the words “macaroni penguin’s life story,” our systems understand that topics contained in the video relate to this topic, like how macaroni penguins find their family members and navigate predators. The first version of this feature will roll out in the coming weeks, and we’ll add more visual enhancements in the coming months.

Across all these MUM experiences, we look forward to helping people discover more web pages, videos, images and ideas that they may not have come across or otherwise searched for.

A more helpful Google

The updates we’re announcing today don’t end with MUM, though. We’re also making it easier to shop from the widest range of merchants, big and small, no matter what you’re looking for. And we’re helping people better evaluate the credibility of information they find online. Plus, for the moments that matter most, we’re finding new ways to help people get access to information and insights.

All this work not only helps people around the world, but creators, publishers and businesses as well. Every day, we send visitors to well over 100 million different websites, and every month, Google connects people with more than 120 million businesses that don't have websites, by enabling phone calls, driving directions and local foot traffic.

As we continue to build more useful products and push the boundaries of what it means to search, we look forward to helping people find the answers they’re looking for, and inspiring more questions along the way.

Source: The Official Google Blog

Source: Google Ads & Commerce

Search, explore and shop the world’s information, powered by AI

AI advancements push the boundaries of what Google products can do. Nowhere is this clearer than at the core of our mission to make information more accessible and useful for everyone.

We've spent more than two decades developing not just a better understanding of information on the web, but a better understanding of the world. Because when we understand information, we can make it more helpful — whether you’re a remote student learning a complex new subject, a caregiver looking for trusted information on COVID vaccines or a parent searching for the best route home.

Deeper understanding with MUM

One of the hardest problems for search engines today is helping you with complex tasks — like planning what to do on a family outing. These often require multiple searches to get the information you need. In fact, we find that it takes people eight searches on average to complete complex tasks.

With a new technology called Multitask Unified Model, or MUM, we're able to better understand much more complex questions and needs, so in the future, it will require fewer searches to get things done. Like BERT, MUM is built on a Transformer architecture, but it’s 1,000 times more powerful and can multitask in order to unlock information in new ways. MUM not only understands language, but also generates it. It’s trained across 75 different languages and many different tasks at once, allowing it to develop a more comprehensive understanding of information and world knowledge than previous models. And MUM is multimodal, so it understands information across text and images and, in the future, can expand to more modalities like video and audio.

Imagine a question like: “I’ve hiked Mt. Adams and now want to hike Mt. Fuji next fall, what should I do differently to prepare?” This would stump search engines today, but in the future, MUM could understand this complex task and generate a response, pointing to highly relevant results to dive deeper. We’ve already started internal pilots with MUM and are excited about its potential for improving Google products.

Information comes to life with Lens and AR

People come to Google to learn new things, and visuals can make all the difference. Google Lens lets you search what you see — from your camera, your photos or even your search bar. Today we’re seeing more than 3 billion searches with Lens every month, and an increasingly popular use case is learning. For example, many students might have schoolwork in a language they aren't very familiar with. That’s why we’re updating the Translate filter in Lens so it’s easy to copy, listen to or search translated text, helping students access education content from the web in over 100 languages.

AR is also a powerful tool for visual learning. With the new AR athletes in Search, you can see signature moves from some of your favorite athletes in AR — like Simone Biles’s famous balance beam routine.

Evaluate information with About This Result

Helpful information should be credible and reliable, and especially during moments like the pandemic or elections, people turn to Google for trustworthy information.

Our ranking systems are designed to prioritize high-quality information, but we also help you evaluate the credibility of sources, right in Google Search. Our About This Result feature provides details about a website before you visit it, including its description, when it was first indexed and whether your connection to the site is secure.

This month, we’ll start rolling out About This Result to all English results worldwide, with more languages to come. Later this year, we’ll add even more detail, like how a site describes itself, what other sources are saying about it and related articles to check out.

Exploring the real world with Maps

Google Maps transformed how people navigate, explore and get things done in the world, and we continue to push the boundaries of what a map can be with industry-first features like AR navigation in Live View at scale. We recently announced we’re on track to launch over 100 AI-powered improvements to Google Maps by the end of the year, and today, we’re introducing a few of the newest ones. Our new routing updates are designed to reduce the likelihood of hard braking on your drive using machine learning and historical navigation information, which we believe could eliminate over 100 million hard-braking events in routes driven with Google Maps each year.

If you’re looking for things to do, our more tailored map will spotlight relevant places based on time of day and whether or not you’re traveling. Enhancements to Live View and detailed street maps will help you explore and get a deep understanding of an area as quickly as possible. And if you want to see how busy neighborhoods and parts of town are, you’ll be able to do this at a glance as soon as you open Maps.

More ways to shop with Google

People are shopping across Google more than a billion times per day, and our AI-enhanced Shopping Graph — our deep understanding of products, sellers, brands, reviews, product information and inventory data — powers many features that help you find exactly what you’re looking for.

Because shopping isn’t always a linear experience, we’re introducing new ways to explore and keep track of products. Now, when you take a screenshot, Google Photos will prompt you to search the photo with Lens, so you can immediately shop for that item if you want. And on Chrome, we’ll help you keep track of shopping carts you’ve begun to fill, so you can easily resume your virtual shopping trip. We're also working with retailers to surface loyalty benefits for customers earlier, to help inform their decisions.

Last year we made it free for merchants to sell their products on Google. Now, we’re introducing a new, simplified process that helps Shopify’s 1.7 million merchants make their products discoverable across Google in just a few clicks.

Whether we’re understanding the world’s information, or helping you understand it too, we’re dedicated to making our products more useful every day. And with the power of AI, no matter how complex your task, we’ll be able to bring you the highest quality, most relevant results.

Source: Google LatLong

How AI is powering a more helpful Google

When I first came across the web as a computer scientist in the mid-90s, I was struck by the sheer volume of information online, in contrast with how hard it was to find what you were looking for. It was then that I first started thinking about search, and I’ve been fascinated by the problem ever since.

We’ve made tremendous progress over the past 22 years, making Google Search work better for you every day. With recent advancements in AI, we’re making bigger leaps forward in improvements to Google than we’ve seen over the last decade, so it’s even easier for you to find just what you’re looking for. Today during our Search On livestream, we shared how we're bringing the most advanced AI into our products to further our mission to organize the world’s information and make it universally accessible and useful.

Helping you find exactly what you’re looking for

At the heart of Google Search is our ability to understand your query and rank relevant results for that query. We’ve invested deeply in language understanding research, and last year we introduced how BERT language understanding systems are helping to deliver more relevant results in Google Search. Today we’re excited to share that BERT is now used in almost every query in English, helping you get higher quality results for your questions. We’re also sharing several new advancements to search ranking, made possible through our latest research in AI:

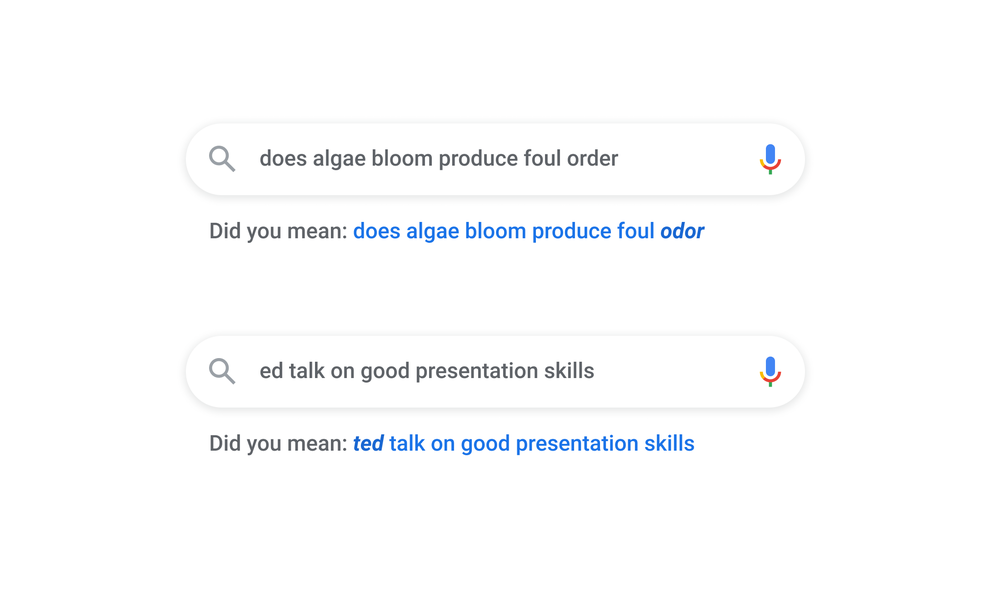

Spelling

We’ve continued to improve our ability to understand misspelled words, and for good reason: one in 10 queries every day is misspelled. Today, we’re introducing a new spelling algorithm that uses a deep neural net to significantly improve our ability to decipher misspellings. In fact, this single change makes a greater improvement to spelling than all of our improvements over the last five years.

A new spelling algorithm helps us understand the context of misspelled words, so we can help you find the right results, all in under 3 milliseconds.

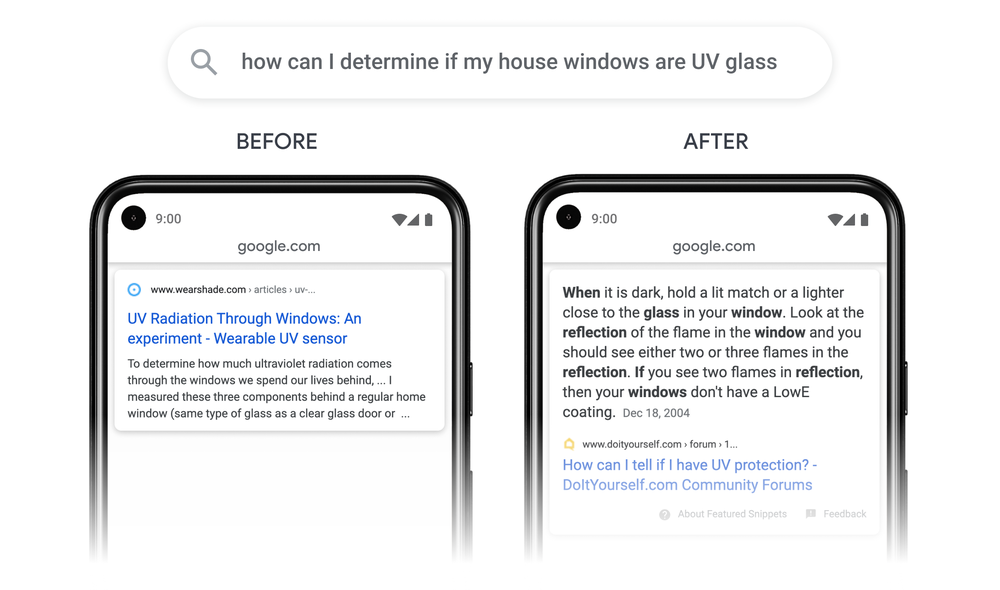

Passages

Very specific searches can be the hardest to get right, since sometimes the single sentence that answers your question might be buried deep in a web page. We’ve recently made a breakthrough in ranking and are now able to not just index web pages, but individual passages from the pages. By better understanding the relevancy of specific passages, not just the overall page, we can find that needle-in-a-haystack information you’re looking for. This technology will improve 7 percent of search queries across all languages as we roll it out globally.

With new passage understanding capabilities, Google can understand that the specific passage (R) is a lot more relevant to a specific query than a broader page on that topic (L).
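As a rough illustration of why scoring passages separately can surface an answer that a whole-page score dilutes, here is a minimal sketch. It is not Google's ranking system: the term-density score, the sentence-level passages, and the names `density`, `split_passages`, `best_passage`, `PAGE` and `QUERY` are all assumptions made up for this example.

```python
import re

def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def density(query, text):
    """Share of tokens in `text` that are query terms (a toy relevance score)."""
    terms = set(tokenize(query))
    tokens = tokenize(text)
    if not tokens:
        return 0.0
    return sum(1 for token in tokens if token in terms) / len(tokens)

def split_passages(page):
    """Treat each sentence as one passage (an assumption for this sketch)."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", page) if s.strip()]

def best_passage(query, page):
    """Return the passage with the highest query-term density."""
    return max(split_passages(page), key=lambda passage: density(query, passage))

# Hypothetical page: broad on the topic, with one sentence that answers the query.
PAGE = (
    "Glass comes in many types. "
    "Windows in a house face sun and weather. "
    "To check if your windows are UV glass, hold a UV flashlight up to the window. "
    "Clean glass regularly with a soft cloth."
)
QUERY = "how to check uv glass"

if __name__ == "__main__":
    print(best_passage(QUERY, PAGE))
    print(density(QUERY, PAGE), density(QUERY, best_passage(QUERY, PAGE)))
```

In this toy setup the answering sentence scores higher on its own than the page does as a whole, which is the needle-in-a-haystack effect the passage-level approach is meant to address.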

Subtopics

We’ve applied neural nets to understand subtopics around an interest, which helps deliver a greater diversity of content when you search for something broad. As an example, if you search for “home exercise equipment,” we can now understand relevant subtopics, such as budget equipment, premium picks, or small space ideas, and show a wider range of content for you on the search results page. We’ll start rolling this out by the end of this year.

Access to high quality information during COVID-19

We’re making several new improvements to help you navigate your world and get things done more safely and efficiently. Live busyness updates show you how busy a place is right now so you can more easily social distance, and we’ve added a new feature to Live View to help you get essential information about a business before you even step inside. We’re also adding COVID-19 safety information front and center on Business Profiles across Google Search and Maps. This will help you know if a business requires you to wear a mask, if you need to make an advance reservation, or if the staff is taking extra safety precautions, like temperature checks. And we’ve used our Duplex conversational technology to help local businesses keep their information up-to-date online, such as opening hours and store inventory.

Understanding key moments in videos

Using a new AI-driven approach, we’re now able to understand the deep semantics of a video and automatically identify key moments. This lets us tag those moments in the video, so you can navigate them like chapters in a book. Whether you’re looking for that one step in a recipe tutorial, or the game-winning home run in a highlights reel, you can easily find those moments. We’ve started testing this technology this year, and by the end of 2020 we expect that 10 percent of searches on Google will use this new technology.

Deepening understanding through data

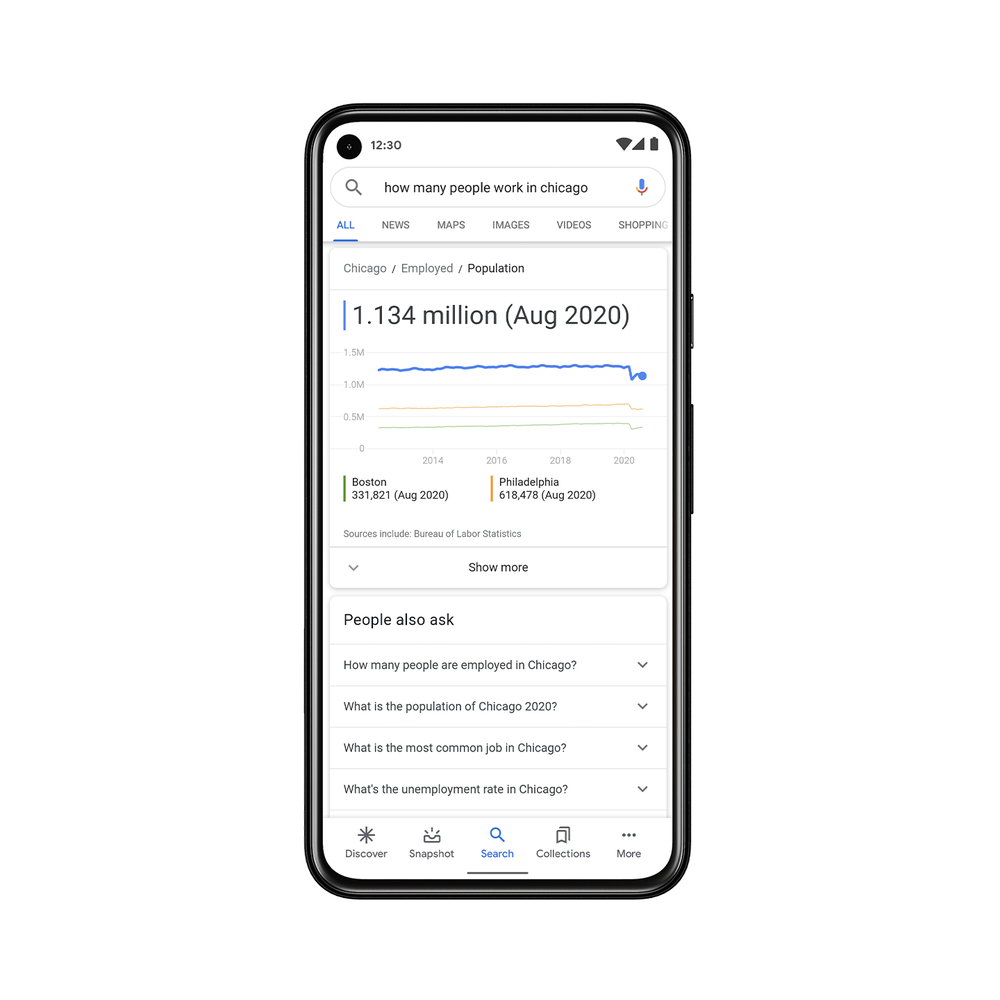

Sometimes the best search result is a statistic. But often stats are buried in large datasets and not easily comprehensible or accessible online. Since 2018, we’ve been working on the Data Commons Project, an open knowledge database of statistical data started in collaboration with the U.S. Census, Bureau of Labor Statistics, World Bank and many others. Bringing these datasets together was a first step, and now we’re making this information more accessible and useful through Google Search.

Now when you ask a question like “how many people work in Chicago,” we use natural language processing to map your search to one specific set of the billions of data points in Data Commons to provide the right stat in a visual, easy-to-understand format. You’ll also find other relevant data points and context, like stats for other cities, to help you easily explore the topic in more depth.

Helping quality journalism through advanced search

Quality journalism often comes from long-term investigative projects, requiring time consuming work sifting through giant collections of documents, images and audio recordings. As part of Journalist Studio, our new suite of tools to help reporters do their work more efficiently, securely, and creatively through technology, we’re launching Pinpoint, a new tool that brings the power of Google Search to journalists. Pinpoint helps reporters quickly sift through hundreds of thousands of documents by automatically identifying and organizing the most frequently mentioned people, organizations and locations. Reporters can sign up to request access to Pinpoint starting this week.
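To make the idea of surfacing the most frequently mentioned people, organizations and locations concrete, here is a minimal sketch. It is not how Pinpoint works: a real system would rely on entity recognition rather than a fixed lookup table, and `KNOWN_ENTITIES`, `count_mentions` and `top_mentions` are hypothetical names invented for this example.

```python
from collections import Counter

# Hypothetical lookup table standing in for a real entity recognizer.
KNOWN_ENTITIES = {
    "Jane Doe": "person",
    "Acme Corp": "organization",
    "Springfield": "location",
}

def count_mentions(documents, known_entities=KNOWN_ENTITIES):
    """Count exact-string mentions of each known entity, grouped by entity type."""
    counts = {kind: Counter() for kind in set(known_entities.values())}
    for doc in documents:
        for name, kind in known_entities.items():
            hits = doc.count(name)
            if hits:
                counts[kind][name] += hits
    return counts

def top_mentions(documents, n=3, known_entities=KNOWN_ENTITIES):
    """Return the n most frequently mentioned entities for each entity type."""
    counts = count_mentions(documents, known_entities)
    return {kind: counter.most_common(n) for kind, counter in counts.items()}

if __name__ == "__main__":
    docs = [
        "Jane Doe met Acme Corp in Springfield.",
        "Acme Corp hired Jane Doe. Jane Doe accepted.",
    ]
    for kind, ranked in sorted(top_mentions(docs).items()):
        print(kind, ranked)
```

Grouping the counts by entity type mirrors the people, organizations and locations split described above, so a reporter could scan each list separately.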

Search what you see, and explore information in 3D

For many topics, seeing is key to understanding. Several new features in Lens and AR in Google Search help you learn, shop, and discover the world in new ways. Many of us are dealing with the challenges of learning from home, and with Lens, you can now get step-by-step homework help on math, chemistry, biology and physics problems. Social distancing has also dramatically changed how we shop, so we’re making it easier to visually shop for what you’re looking for online, whether you’re looking for a sweater or want a closer look at a new car but can’t visit a showroom.

If you don’t know how to search it, sing it

We’ve all had that experience of having a tune stuck in our head, but can’t quite remember the lyrics. Now, when those moments arise, you just have to hum to search, and our AI models can match the melody to the right song.

What sets Google Search apart

There has never been more choice in the ways people access information, and we need to constantly develop cutting-edge technology to ensure that Google remains the most useful and most trusted way to search. Four key elements form the foundation for all our work to improve Search and answer trillions of queries every year. These elements are what make Google helpful and reliable for the people who come to us each day to find information.

Understanding all the world’s information

We’re focused on deeply understanding all the world’s information, whether that information is contained in words on web pages, in images or videos, or even in the places and objects around us. With investments in AI, we’re able to analyze and understand all types of information in the world, just as we did by indexing web pages 22 years ago. We’re pushing the boundaries of what it means to understand the world, so before you even type in a query, we’re ready to help you explore new forms of information and insights never before available.

The highest quality information

People rely on Search for the highest quality information available, and our commitment to quality has always been what set Google apart from day one. Every year we launch thousands of improvements to make Search better, and rigorously test each of these changes to ensure people find them helpful. Our ranking factors and policies are applied fairly to all websites, and this has led to widespread access to a diversity of information, ideas and viewpoints.

World class privacy and security

To keep people and their data safe, we invest in world class privacy and security. We’ve led the industry in keeping you safe while searching with Safe Browsing and spam protection. We believe that privacy is a universal right and are committed to giving every user the tools they need to be in control.

Open access for everyone

Last—but certainly not least—we are committed to open access for everyone. We aim to help the open web thrive, sending more traffic to the open web every year since Google was created. Google is free for everyone, accessible on any device, in more than 150 languages around the world, and we continue to expand our ability to serve people everywhere.

So wherever you are, whatever you’re looking for, however you’re able to sing, spell, say, or visualize it, you can search on with Google.

Source: Search

Work reimagined: new ways to collaborate safer, smarter and simpler with G Suite

Over the last decade we’ve witnessed the maturation of G Suite—from the introduction of Gmail and Google Docs to more recent advancements in AI and machine learning that are powering, and protecting, the world's email. Now, more than 4 million paying businesses are using our suite to reimagine how they work, and companies like Whirlpool, Nielsen, BBVA and Broadcom are among the many who choose G Suite to move faster, better connect their teams and advance their competitive edge.

In the past year, our team has worked hard to offer nearly 300 new capabilities for G Suite users. Today, we’re excited to share some of the new ways organizations can use G Suite to focus on creative work and move their business forward—keep an eye out for additional announcements to come tomorrow as well.

Here’s what we’re announcing today:

Security center investigation tool (available in an Early Adopter Program* for G Suite Enterprise customers)

Data regions (available now for G Suite Business and Enterprise customers)

Smart Reply in Hangouts Chat (coming soon to G Suite customers)

Smart Compose (coming soon to G Suite customers)

Grammar Suggestions in Google Docs (available in an Early Adopter Program for G Suite customers today)

Voice commands in Hangouts Meet hardware (coming to select Hangouts Meet hardware customers later this year)

Nothing matters more than security

Businesses need a way to simplify their security management, which is why earlier this year we introduced the security center for G Suite. The security center brings together security analytics, actionable insights and best practice recommendations from Google to help you protect your organization, data and users.

Today, we’re announcing our new investigation tool in security center, which adds integrated remediation to the prevention and detection capabilities of the security center. Admins can identify which users are potentially infected, see if anything’s been shared externally and remove access to Drive files or delete malicious emails. Because the investigation tool has a simple UI and lets you review your data security in one place, it makes it easier to take action against threats without having to analyze logs, which can be time-consuming and require complex scripting. The investigation tool is available today as part of our Early Adopter Program (EAP) for G Suite Enterprise customers. Learn more.

In addition to giving admins a simpler way to keep data secure, we’re constantly working to ensure that they have the transparency and control they need. That’s why we’re adding support for data regions to G Suite. For organizations with data control requirements, G Suite will now let customers choose where to store primary data for select G Suite apps—globally distributed, U.S. or Europe. We’re also making it simple to manage your data regions on an ongoing basis. For example, when a file’s owner changes or moves to another organizational unit, we automatically move the data—with no impact on the file’s availability to collaborators. Plus, users continue to get full edit rights on content while data is being moved.

Rob Tollerton, Director of IT at PricewaterhouseCoopers International Limited (PwCIL), and his team are using G Suite to manage global data policies: “Given PwC is a global network with operations in 158 countries, I am very happy to see Google investing in data regions for G Suite and thrilled by how easy and intuitive it will be to set up and manage multi-region policies for our domain.”

Data regions for G Suite is generally available to all G Suite Business and Enterprise customers today at no additional cost. We're continually investing in the offering and will expand it further over time. Learn more.

Let machines do the mundane work

We’ve spent many years as a company investing in AI and machine learning, and we’re dedicated to a simple idea: rather than replacing human skills, we think AI has endless potential to enhance them. Google AI is already helping millions of people around the world navigate, communicate and get things done in our consumer products. In G Suite, we’re using AI to help businesses and their employees do their best work.

Many of you use Smart Reply in Gmail. It processes hundreds of millions of messages daily and already drives more than 10 percent of email replies. Today we’re announcing that Smart Reply is coming to Hangouts Chat to help you respond to messages quicker so you can free up time to focus on creative work.

Our technology recognizes which messages most likely need responses, and proposes three different replies that sound like how you typically respond. The proposed responses are casual enough for chat and yet appropriate in a workplace. Smart Reply in Hangouts Chat will be available to G Suite customers in the coming weeks.

Smart Reply makes sending short replies easy, especially on the go. But we know that the most time-consuming emails require longer, more complex thoughts. That’s why we built Smart Compose, which you may have heard Sundar talk about at Google I/O this year. Smart Compose intelligently autocompletes your emails; it can fill in greetings, sign offs and common phrases so you can collaborate efficiently. We first launched Smart Compose to consumers in May, and now Smart Compose in Gmail is ready for G Suite customers.

In addition to autocompleting common phrases, Smart Compose can insert personalized information like your office or home address, so you don’t need to spend time in repetitive tasks. And best of all, it will get smarter with time—for example, learning how you prefer to greet certain people in emails to ensure that when you use Smart Compose you sound like yourself.

Smart Compose in Gmail will be available to G Suite customers in the coming weeks.

We’re also using AI to help people write more clearly and effectively. It can be tricky at times to catch things like spelling and grammatical errors that inadvertently change the meaning of a sentence. That’s why we’re introducing grammar suggestions in Docs. To tackle grammar correction, we use a unique machine translation-based approach to recognize errors and suggest corrections on the fly. Our AI can catch several different types of errors, from simple grammatical rules like how to use articles in a sentence (like “a” versus “an”), to more complicated grammatical concepts such as how to use subordinate clauses correctly. Machine learning will help improve this capability over time to detect trickier grammar issues. And because it’s built natively in Docs, it’s highly secure and reliable. Grammar suggestions in Docs is available today in our Early Adopter Program.

Beyond writing, we’re also working to improve meetings. Last fall, G Suite launched Hangouts Meet hardware, enabling organizations to have reliable, effective video meetings at scale. Many people still view connecting to video meetings as daunting, which is why we’re using Google AI to create a more inviting experience.

We're excited to see so many people actively engaged with Google Assistant through voice, managing their smart home and entertainment, and today, we’re bringing some of that same magic to conference rooms with voice commands for Hangouts Meet hardware so that teams can connect to a video meeting in seconds. We plan to roll this out to select Meet hardware customers later this year.

Simplify work with G Suite

One of the reasons why G Suite is able to deliver real transformation to businesses is that it’s simple to use and adopt. G Suite was born in the cloud and built for the cloud, which means real-time collaboration is effortless. This is why more than a billion people rely on G Suite apps like Gmail, Docs, Drive and more in their personal lives. Instead of defaulting to old habits—like saving content on your desktop—G Suite saves your work securely in the cloud and provides a means for teams to push the boundaries of what they create.

In fact, 74 percent of all time spent in Docs, Sheets and Slides is on collaborative work—that is, multiple people creating and editing content together. This is a stark difference from what businesses see with legacy tools, where the work is often done individually on a desktop client.

So that’s how we’re reimagining work. Learn more about these announcements by visiting the G Suite website, or stay tuned for more updates in G Suite tomorrow.

*The G Suite Trusted Tester and Early Adopter Programs will soon be renamed as Alpha and Beta, respectively. More details to come.

Source: Gmail Blog
