Learn more about updates for Google Search, Maps, Gemini and more that can help with summer travel.

5 tips for summer travel prep

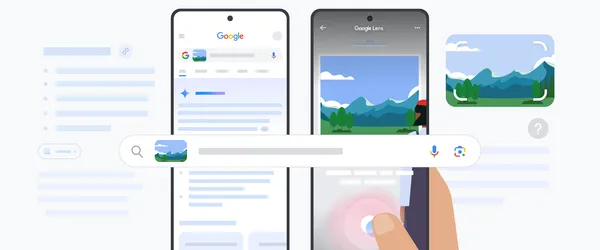

Use Google Lens to search your screen within the Google app or Chrome on iOS. Plus, AI Overviews are coming to more Lens queries.

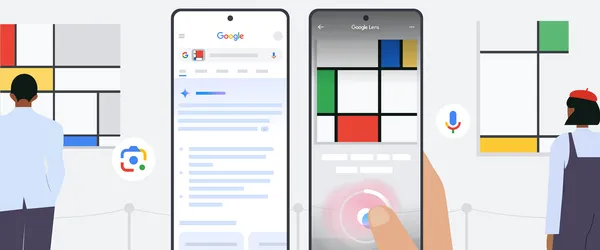

Google Lens uses AI to help you search the world around you. Here’s how to make the most of it.

From a powerful new AI flood-forecasting initiative to help from AI in advancing quantum computers.

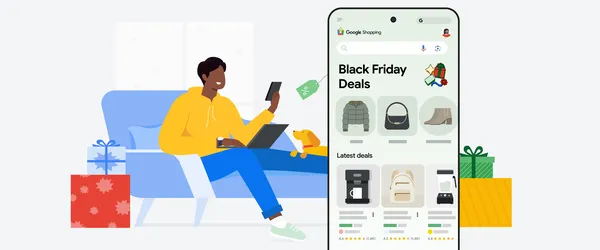

Google Shopping shares tips to help you maximize savings and minimize stress while shopping in store and online this Cyber Five weekend.

Voice input in Google Lens lets you make your voice heard as you search. Here’s how to use this new feature.

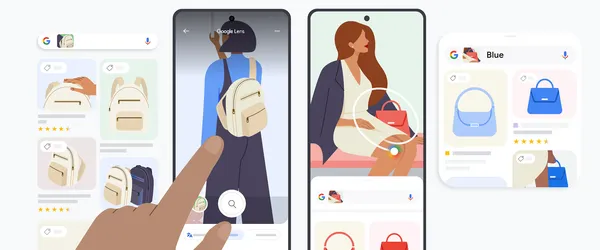

Check out Google Lens’ latest shopping edit, which offers product details with one snap, plus more tips for shopping what you see.

With the help of Google’s latest AI models, you can now more easily search an image or video, find a website and compare different products when shopping in Chrome deskt…

Learn more about Google's AI-powered tools that can help you explore the outdoors more.

We’re sharing a few helpful tools from Search, Maps and Shopping ahead of the summer travel season.
