At Google, we're actively exploring how people can use creativity tools powered by machine learning and computational methods when producing multimedia content, from creating music and reframing videos, to drawing and more. One creative process in particular, video production, can especially benefit from such tools, as it requires a series of decisions about what content is best suited to a target audience, how to position the available assets within the field of view, and what temporal arrangement will yield the most compelling narrative. But what if one could leverage existing assets, such as a website, to get a jump-start on video creation? Businesses commonly host websites that contain rich visual representations about their services or products, all of which could be repurposed for other multimedia formats, such as videos, potentially enabling those without extensive resources the ability to reach a broader audience.

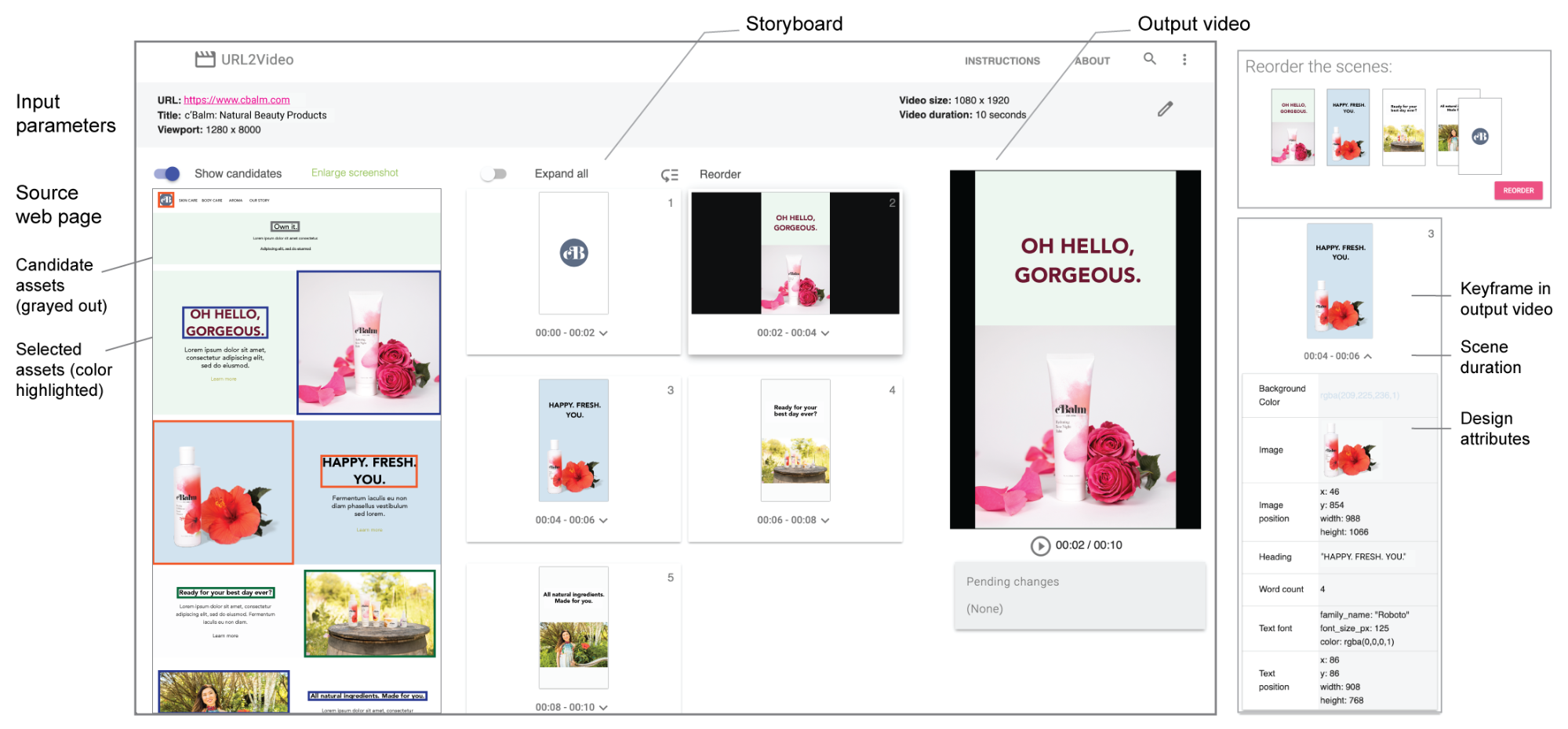

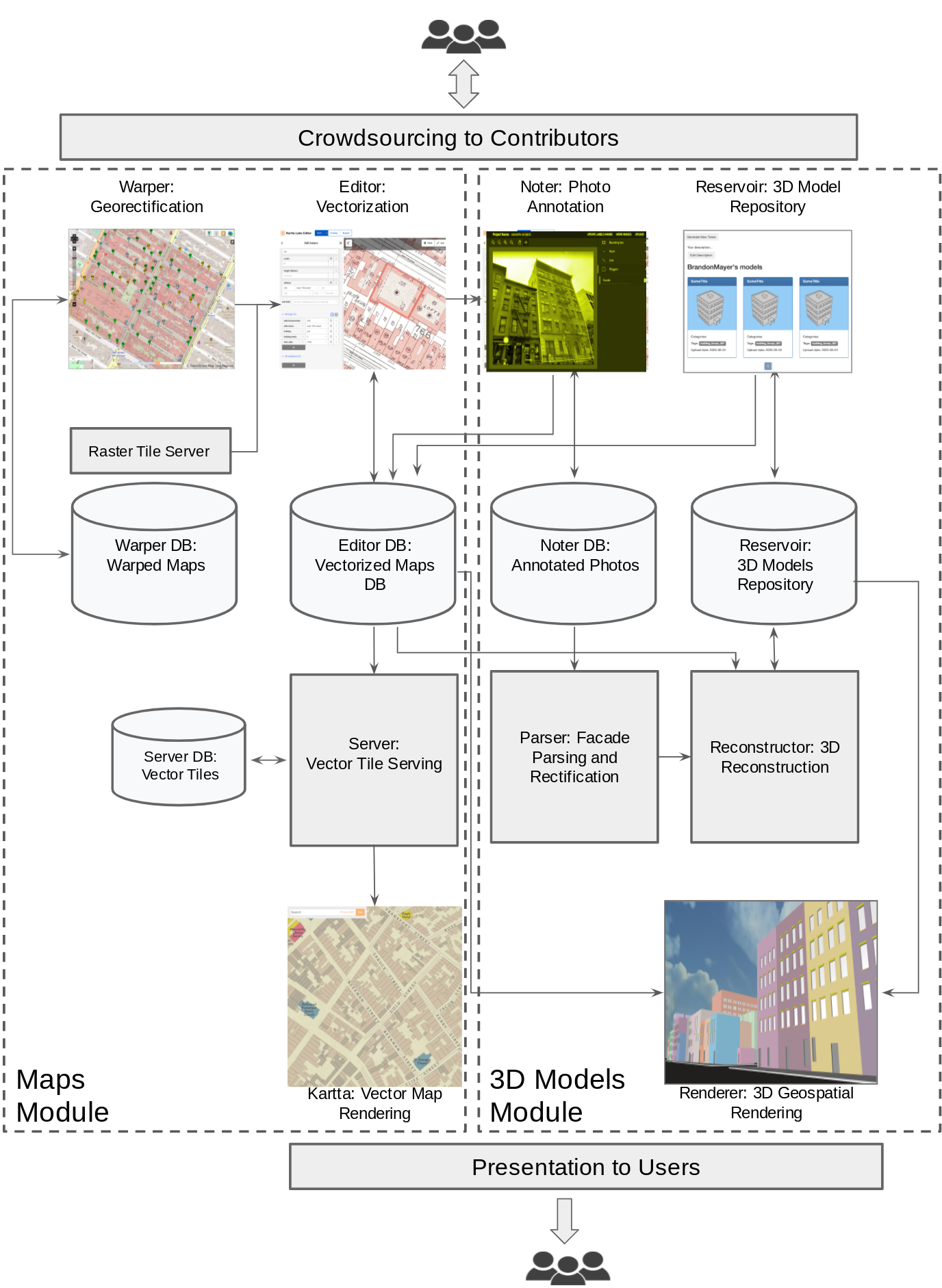

In “Automatic Video Creation From a Web Page”, published at UIST 2020, we introduce URL2Video, a research prototype pipeline to automatically convert a web page into a short video, given temporal and visual constraints provided by the content owner. URL2Video extracts assets (text, images, or videos) and their design styles (including fonts, colors, graphical layouts, and hierarchy) from HTML sources and organizes the visual assets into a sequence of shots, while maintaining a look-and-feel similar to the source page. Given a user-specified aspect ratio and duration, it then renders the repurposed materials into a video that is ideal for product and service advertising.

URL2Video Overview

Assume a user provides an URL to a web page that illustrates their business. The URL2Video pipeline automatically selects key content from the page and decides the temporal and visual presentation of each asset, based on a set of heuristics derived from an interview study with designers who were familiar with web design and video ad creation. These designer-informed heuristics capture common video editing styles, including content hierarchy, constraining the amount of information in a shot and its time duration, providing consistent color and style for branding, and more. Using this information, the URL2Video pipeline parses a web page, analyzing the content and selecting visually salient text or images while preserving their design styles, which it organizes according to the video specifications provided by the user.

Webpage Analysis

Given a webpage URL, URL2Video extracts document object model (DOM) information and multimedia materials. For the purposes of our research prototype, we limited the domain to static web pages that contain salient assets and headings preserved in an HTML hierarchy that follows recent web design principles, which encourage the use of prominent elements, distinct sections, and an order of visual focus that guides readers in perceiving information. URL2Video identifies such visually-distinguishable elements as a candidate list of asset groups, each of which may contain a heading, a product image, detailed descriptions, and call-to-action buttons, and captures both the raw assets (text and multimedia files) and detailed design specifications (HTML tags, CSS styles, and rendered locations) for each element. It then ranks the asset groups by assigning each a priority score based on their visual appearance and annotations, including their HTML tags, rendered sizes, and ordering shown on the page. In this way, an asset group that occupies a larger area at the top of the page receives a higher score.

Constraints-Based Asset Selection

We consider two goals when composing a video: (1) each video shot should provide concise information, and (2) the visual design should be consistent with the source page. Based on these goals and the video constraints provided by the user, including the intended video duration (in seconds) and aspect ratio (commonly 16:9, 4:3, 1:1, etc.), URL2Video automatically selects and orders the asset groups to optimize the total priority score. To make the content concise, it presents only dominant elements from a page, such as a headline and a few multimedia assets. It constrains the duration of each visual element for viewers to perceive the content. In this way, a short video highlights the most salient information from the top of the page, and a longer video contains more campaigns or products.

Scene Composition & Video Rendering

Given an ordered list of assets based on the DOM hierarchy, URL2Video follows the design heuristics obtained from interview studies to make decisions about both the temporal and spatial arrangement to present the assets in individual shots. It transfers the graphical layout of elements into the video’s aspect ratio, and applies the style choices including fonts and colors. To make a video more dynamic and engaging, it adjusts the presentation timing of assets. Finally, it renders the content into a video in the MPEG-4 container format.

User Control

The interface to the research prototype allows the user to review the design attributes in each video shot extracted from the source page, reorder the materials, change the detailed design, such as colors and fonts, and adjust the constraints to generate a new video.

URL2Video Use Cases

We demonstrate the performance of the end-to-end URL2Video pipeline on a variety of existing web pages. Below we highlight an example result where URL2Video converts a page that embeds multiple short video clips into a 12-second output video. Note how the pipeline makes automatic editing decisions on font and color choices, timing, and content ordering in a video captured from the source page.

|

| URL2Video identifies key content from our Google Search introduction page (top), including headings and video assets. It converts them into a video by considering the presentation flow, the source design and the output constraints (a 12-second landscape video; bottom). |

The video below provides further demonstration:

To evaluate the automatically-generated videos, we conducted a user study with designers at Google. Our results show that URL2Video effectively extracted design elements from a web page and supported designers by bootstrapping the video creation process.

Next steps

While this current research focuses on the visual presentation, we are developing new techniques that support the audio track and a voiceover in video editing. All in all, we envision a future where creators focus on making high-level decisions and an ML model interactively suggests detailed temporal and graphical edits for a final video creation on multiple platforms.

Acknowledgments

We greatly thank our paper co-authors, Zheng Sun (Research) and Katrina Panovich (YouTube). We would also like to thank our colleagues who contributed to URL2Video, (in alphabetical order of last name) Jordan Canedy, Brian Curless, Nathan Frey, Madison Le, Alireza Mahdian, Justin Parra, Emily Ryan, Mogan Shieh, Sandor Szego, and Weilong Yang. We are grateful to receive the support from our leadership, Tomas Izo, Rahul Sukthankar, and Jay Yagnik.