Natural language processing (NLP) has seen significant progress over the past several years, with pre-trained models like BERT, ALBERT, ELECTRA, and XLNet achieving remarkable accuracy across a variety of tasks. In pre-training, representations are learned from a large text corpus, e.g., Wikipedia, by repeatedly masking out words and trying to predict them (this is called masked language modeling). The resulting representations encode rich information about language and correlations between concepts, such as surgeons and scalpels. There is then a second training stage, fine-tuning, in which the model uses task-specific training data to learn how to use the general pre-trained representations to do a concrete task, like classification. Given the broad adoption of these representations in many NLP tasks, it is crucial to understand the information encoded in them and how any learned correlations affect performance downstream, to ensure the application of these models aligns with our AI Principles.
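To make the masking step concrete, here is a minimal sketch of how training examples for masked language modeling could be constructed. It is a simplification for illustration, not the pre-training code of any of the models named above: the function name `mask_tokens`, the whitespace tokenization, and the masking probability are all assumptions, and real pipelines such as BERT's add further details (for example, sometimes substituting a random token instead of the mask symbol).

```python
import random

MASK_TOKEN = "[MASK]"  # placeholder symbol; the exact token depends on the vocabulary


def mask_tokens(tokens, mask_prob=0.15, seed=None):
    """Hide a random subset of tokens.

    Returns (masked_tokens, targets), where targets maps each masked
    position to the original token the model would be asked to predict.
    """
    rng = random.Random(seed)
    masked = list(tokens)
    targets = {}
    for position, token in enumerate(tokens):
        if rng.random() < mask_prob:
            masked[position] = MASK_TOKEN
            targets[position] = token
    return masked, targets


# Hypothetical example sentence, split on whitespace for simplicity.
sentence = "the surgeon picked up the scalpel".split()
masked, targets = mask_tokens(sentence, mask_prob=0.3, seed=0)
print(masked)   # input the model sees
print(targets)  # positions and words it must recover
```

Because the model only sees the surrounding context, recovering a hidden word like "scalpel" rewards it for learning which words tend to appear together, which is also how unintended correlations can enter the representations.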

In “Measuring and Reducing Gendered Correlations in Pre-trained Models” we perform a case study on BERT and its low-memory counterpart ALBERT, looking at correlations related to gender, and formulate a series of best practices for using pre-trained language models. We present experimental results over public model checkpoints and an academic task dataset to illustrate how the best practices apply, providing a foundation for exploring settings beyond the scope of this case study. We will soon release a series of checkpoints, Zari¹, which reduce gendered correlations while maintaining state-of-the-art accuracy on standard NLP task metrics.

Measuring Correlations

To understand how correlations in pre-trained representations can affect downstream task performance, we apply a diverse set of evaluation metrics for studying the representation of gender. Here, we’ll discuss results from one of these tests, based on coreference resolution, which is the capability that allows models to identify the correct antecedent of a given pronoun in a sentence. For example, in the sentence that follows, the model should recognize that “his” refers to the nurse, and not to the patient.

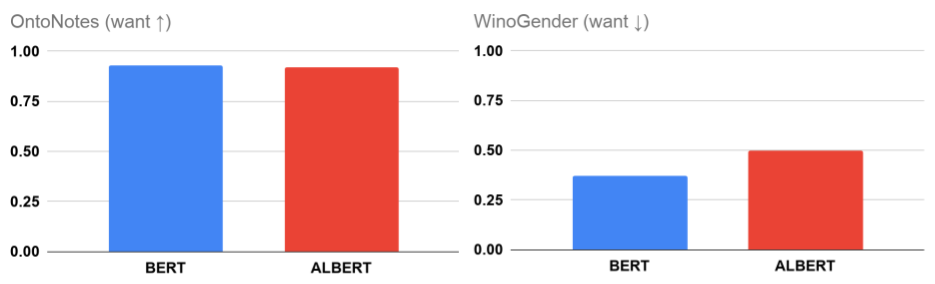

The standard academic formulation of the task is the OntoNotes test (Hovy et al., 2006), and we measure how accurate a model is at coreference resolution in a general setting using an F1 score over this data (as in Tenney et al., 2019). Since OntoNotes represents only one data distribution, we also consider the WinoGender benchmark, which provides additional, balanced data designed to identify when model associations between gender and profession incorrectly influence coreference resolution. High values of the WinoGender metric (close to one) indicate a model is basing decisions on normative associations between gender and profession (e.g., associating nurse with the female gender and not male). When model decisions have no consistent association between gender and profession, the score is zero, which suggests that decisions are based on some other information, such as sentence structure or semantics.
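The two kinds of measurement described above can be sketched in a few lines. This is an illustrative sketch under stated assumptions, not the evaluation code used in this study: it assumes the gendered-correlation score is a Pearson correlation between a model's per-profession preference for one gender and some reference measure of how gendered each profession is, which is consistent with a score near one for normative associations and zero for no consistent association. The function names and all numbers are hypothetical placeholders.

```python
from math import sqrt


def f1_score(true_pos, false_pos, false_neg):
    """Harmonic mean of precision and recall, computed from raw counts."""
    if true_pos == 0:
        return 0.0
    precision = true_pos / (true_pos + false_pos)
    recall = true_pos / (true_pos + false_neg)
    return 2 * precision * recall / (precision + recall)


def pearson(xs, ys):
    """Pearson correlation coefficient; returns 0.0 if either input is constant."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def gendered_correlation(model_pref, reference):
    """Correlate model preferences with a reference, over shared professions."""
    professions = sorted(set(model_pref) & set(reference))
    return pearson([model_pref[p] for p in professions],
                   [reference[p] for p in professions])


# Made-up placeholder values, NOT results from the study.
# model_pref: how often a model resolves a female pronoun to the profession.
# reference: an assumed external measure of how female-associated it is.
model_pref = {"profession_a": 0.8, "profession_b": 0.3, "profession_c": 0.6}
reference = {"profession_a": 0.9, "profession_b": 0.1, "profession_c": 0.5}

print(f1_score(true_pos=80, false_pos=10, false_neg=10))
print(gendered_correlation(model_pref, reference))
```

Reporting both numbers side by side is the point: a model can score well on the first while still showing a high value on the second.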

In this study, we see that neither the (Large) BERT nor the ALBERT public model achieves a zero score on the WinoGender examples, despite achieving impressive accuracy on OntoNotes (close to 100%). At least some of this is due to models preferentially using gendered correlations in reasoning. This isn’t completely surprising: there is a range of cues available to understand text, and it is possible for a general model to pick up on any or all of these. However, there is reason for caution, as it is undesirable for a model to make predictions primarily based on gendered correlations learned as priors rather than the evidence available in the input.

Best Practices

Given that it is possible for unintended correlations in pre-trained model representations to affect downstream task reasoning, we now ask: what can one do to mitigate any risk this poses when developing new NLP models?

- It is important to measure for unintended correlations: Model quality may be assessed using accuracy metrics, but these only measure one dimension of performance, especially if the test data is drawn from the same distribution as the training data. For example, the BERT and ALBERT checkpoints have accuracy within 1% of each other, but differ by 26% (relative) in the degree to which they use gendered correlations for coreference resolution. This difference might be important for some tasks; selecting a model with low WinoGender score could be desirable in an application featuring texts about people in professions that may not conform to historical social norms, e.g., male nurses.

- Be careful even when making seemingly innocuous configuration changes: Neural network model training is controlled by many hyperparameters that are usually selected to maximize some training objective. While configuration choices often seem innocuous, we find they can cause significant changes for gendered correlations, both for better and for worse. For example, dropout regularization is used to reduce overfitting by large models. When we increase the dropout rate used for pre-training BERT and ALBERT, we see a significant reduction in gendered correlations even after fine-tuning. This is promising since a simple configuration change allows us to train models with reduced risk of harm, but it also shows that we should be mindful and evaluate carefully when making any change in model configuration.

Impact of increasing dropout regularization in BERT and ALBERT.

- There are opportunities for general mitigations: A further corollary from the perhaps unexpected impact of dropout on gendered correlations is that it opens the possibility to use general-purpose methods for reducing unintended correlations: by increasing dropout in our study, we improve how the models reason about WinoGender examples without manually specifying anything about the task or changing the fine-tuning stage at all. Unfortunately, OntoNotes accuracy does start to decline as the dropout rate increases (which we can see in the BERT results), but we are excited about the potential to mitigate this in pre-training, where changes can lead to model improvements without the need for task-specific updates. We explore counterfactual data augmentation as another mitigation strategy with different tradeoffs in our paper.
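For readers less familiar with dropout, the sketch below shows what the regularizer does and what "increasing the dropout rate" might look like as a configuration change. It is a minimal illustration, not the training setup from this study. The keys `hidden_dropout_prob` and `attention_probs_dropout_prob` follow the naming in BERT's public configuration files; the helper `with_dropout` and the increased rate of 0.2 are hypothetical choices made only for this example.

```python
import random


def dropout(values, rate, rng, training=True):
    """Inverted dropout: zero each value with probability `rate` and scale
    the survivors by 1 / (1 - rate) so the expected activation is unchanged."""
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        return list(values)  # dropout is disabled at inference time
    keep = 1.0 - rate
    return [v / keep if rng.random() >= rate else 0.0 for v in values]


# Dropout-related fields as named in BERT's public config (values are the
# commonly published defaults; treat them as an assumption for your checkpoint).
BASE_CONFIG = {
    "hidden_dropout_prob": 0.1,
    "attention_probs_dropout_prob": 0.1,
}


def with_dropout(config, rate):
    """Return a copy of `config` with both dropout rates set to `rate`."""
    return {**config,
            "hidden_dropout_prob": rate,
            "attention_probs_dropout_prob": rate}


# Hypothetical higher-dropout variant for a pre-training run.
higher_dropout_config = with_dropout(BASE_CONFIG, 0.2)
print(higher_dropout_config)
print(dropout([1.0, 1.0, 1.0, 1.0], rate=0.5, rng=random.Random(0)))
```

Since the change touches only a configuration value, the practical cost lies in re-running pre-training and then re-evaluating both accuracy and the correlation metrics, rather than in writing any task-specific code.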

What’s Next

We believe these best practices provide a starting point for developing robust NLP systems that perform well across the broadest possible range of linguistic settings and applications. Of course, these techniques on their own are not sufficient to capture and remove all potential issues. Any model deployed in a real-world setting should undergo rigorous testing that considers the many ways it will be used, and should implement safeguards to ensure alignment with ethical norms, such as Google's AI Principles. We look forward to developments in evaluation frameworks and data that are more expansive and inclusive to cover the many uses of language models and the breadth of people they aim to serve.

Acknowledgements

This is joint work with Xuezhi Wang, Ian Tenney, Ellie Pavlick, Alex Beutel, Jilin Chen, Emily Pitler, and Slav Petrov. We benefited greatly throughout the project from discussions with Fernando Pereira, Ed Chi, Dipanjan Das, Vera Axelrod, Jacob Eisenstein, Tulsee Doshi, and James Wexler.

¹ Zari is an Afghan Muppet designed to show that ‘a little girl could do as much as everybody else’.