ARCore brings augmented reality capabilities to millions of Android phones. It’s available as an SDK preview, and developers can start experimenting with it right now. We’ve already seen some really fun, useful and delightful experiences come through; check out thisisarcore.com for some of our favorites.

Daydream Labs has been in on the fun and experimentation, too. We’re exploring new interactions, including unique ways to learn about the world around you, different ways to navigate, and new ways to socialize and play with friends.

Here’s some of what we’ve made so far!

Using AR as a magic window into Street View

We built a prototype that lets you zoom into The British Museum and see Street View panoramas from the front of Great Russell Street.

Helping you see the future

With AR, we prototyped a way for architects to overlay models on top of construction in the real world to show how a finished home would look.

Skills training with ARCore

We brought our VR version of the Espresso Trainer into AR. You can use your phone to learn each step of making a perfect espresso. People who had never used the machine before made their first espresso from scratch, with perfect crema to boot!

Controlling virtual position through reality

We built a way to explore Street View without having to click arrows—just walk forward in physical space to adjust your virtual position.
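For readers curious how walking could drive panorama selection, here is a minimal sketch. It is not the Daydream Labs implementation: the function names, the `scale` factor, and the list of panorama offsets are all hypothetical, and the device position is assumed to come from whatever motion tracking the app uses.

```python
import math
from bisect import bisect_right


def forward_distance(start, current, heading_rad):
    """Project the walked displacement onto the initial heading (metres).

    start, current: (x, z) positions on the ground plane (assumed inputs).
    heading_rad: direction the user faced when the session began.
    """
    dx = current[0] - start[0]
    dz = current[1] - start[1]
    return dx * math.cos(heading_rad) + dz * math.sin(heading_rad)


def pick_panorama(pano_offsets, walked_m, scale=10.0):
    """Return the index of the panorama to display.

    pano_offsets: sorted distances (metres) of each panorama along the street.
    scale: virtual metres travelled per physical metre (hypothetical value).
    """
    virtual_m = max(0.0, walked_m * scale)
    # Last panorama whose offset is at or before the virtual position.
    i = bisect_right(pano_offsets, virtual_m) - 1
    return max(0, min(i, len(pano_offsets) - 1))


offsets = [0, 10, 20, 30]
walked = forward_distance((0.0, 0.0), (1.5, 0.0), 0.0)
index = pick_panorama(offsets, walked)
```

With these made-up numbers, a 1.5 m step forward maps to 15 virtual metres and selects the second panorama. Clamping keeps the index valid if the user steps backward or walks past the last panorama.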

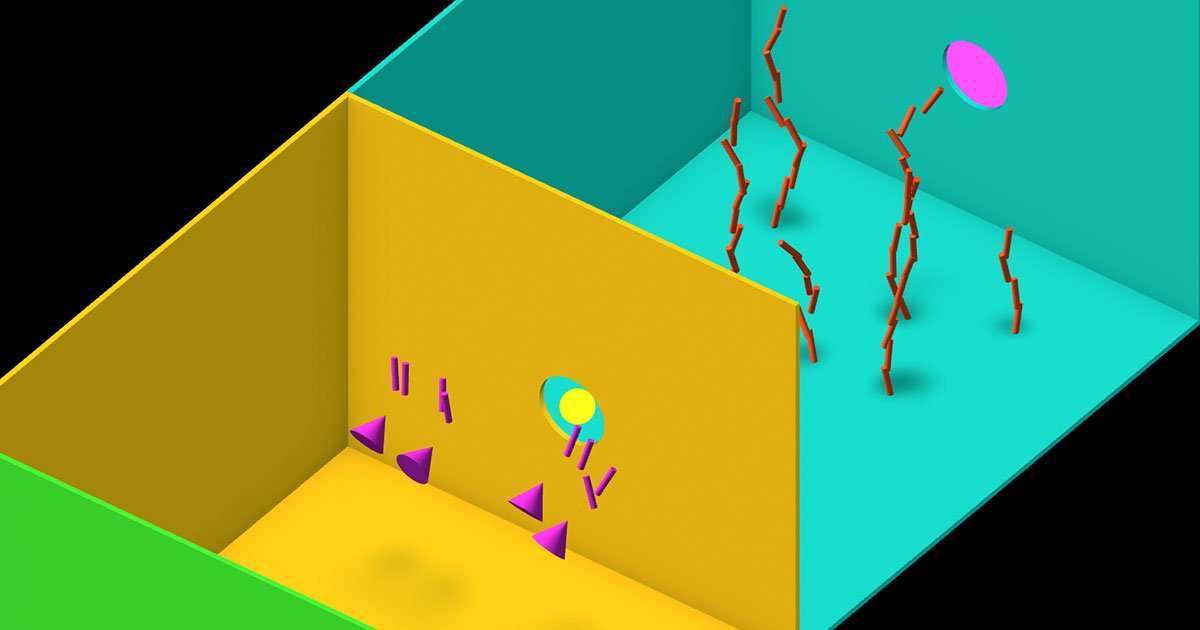

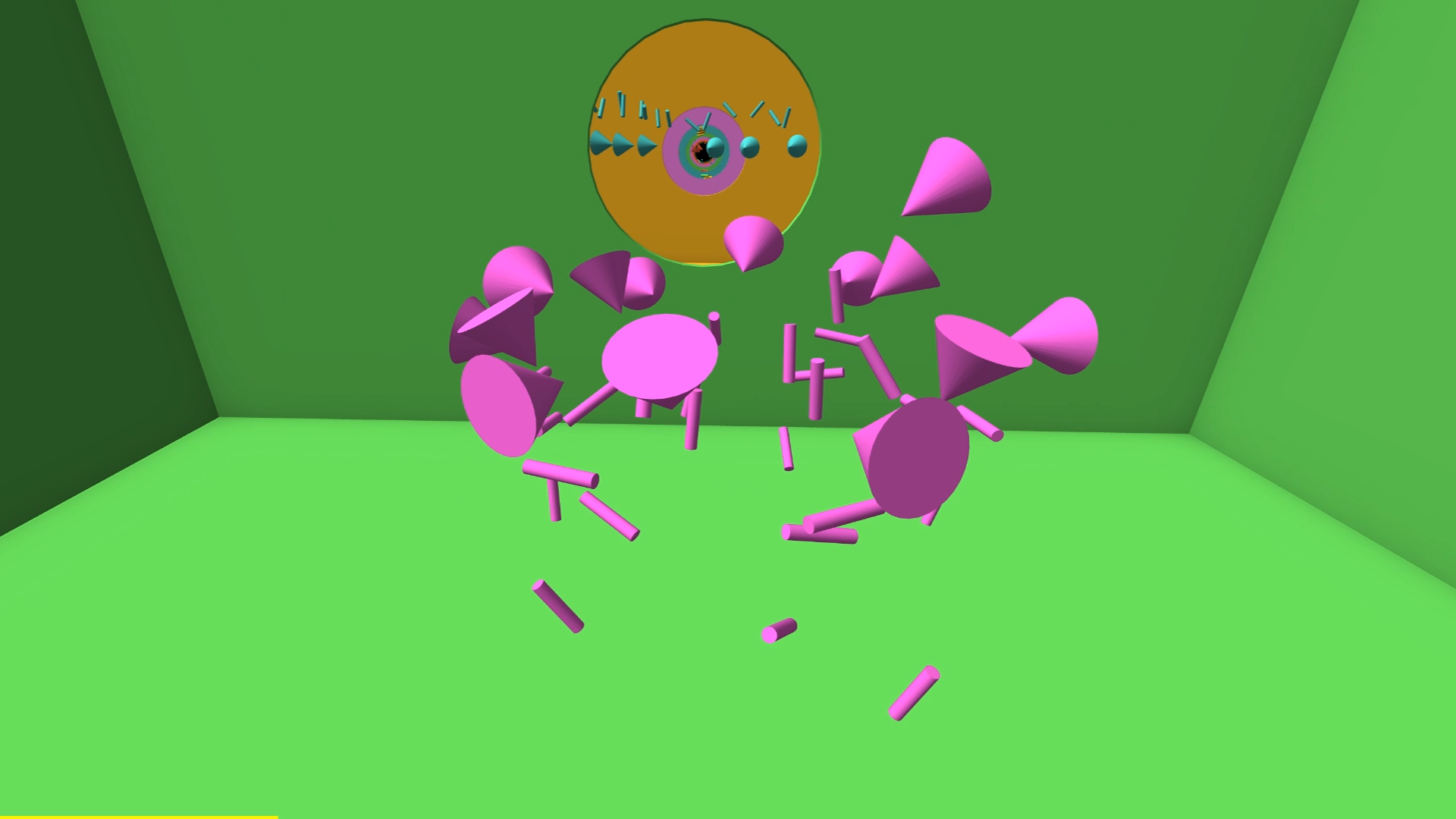

Highlight AR content

We played around with the idea of putting floating AR content in front of the user, and controlling depth of field and desaturation of the camera feed based on user motion. This experiment makes digital assets “pop,” drawing people’s attention to them and encouraging them to explore and interact.
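As a rough illustration of tying desaturation to motion, the sketch below maps device speed to an effect strength and blends a pixel toward gray. The post does not say how motion maps to the effect, so the linear mapping, the `max_speed` threshold, and the smoothing factor are assumptions. In a real app this would more likely run in a shader.

```python
def effect_strength(speed_m_s, max_speed=1.0):
    """Map device speed to a 0..1 strength (assumed linear, clamped)."""
    return max(0.0, min(speed_m_s / max_speed, 1.0))


def smooth(previous, target, alpha=0.2):
    """Exponential smoothing so the effect does not flicker between frames."""
    return previous + alpha * (target - previous)


def desaturate(rgb, strength):
    """Blend an RGB pixel (floats in 0..1) toward its Rec. 709 luma."""
    r, g, b = rgb
    luma = 0.2126 * r + 0.7152 * g + 0.0722 * b
    return tuple(c + strength * (luma - c) for c in rgb)


# One frame of the loop: measure speed, ease toward the target, apply.
strength = smooth(0.0, effect_strength(0.5))
pixel = desaturate((1.0, 0.0, 0.0), strength)
```

Smoothing the strength rather than the pixels keeps the per-pixel work trivial and avoids abrupt jumps when the speed estimate is noisy.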

Share your position with VPS

We’ve been experimenting with the beta of Google’s Visual Positioning Service (VPS), announced at Google I/O in May. VPS enables shared world-scale AR experiences well beyond tabletops. For example, this prototype lets you share your position with a friend, and they’ll be guided right to you with VPS. We’ve played quite a few games of hide-and-seek with it!
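Once two devices know their positions in a shared coordinate frame, guiding one person to the other comes down to simple geometry. The sketch below assumes those positions are already available as (x, z) ground-plane coordinates. It does not call any VPS API, and the `guidance` function is a hypothetical name used only for illustration.

```python
import math


def guidance(me, my_heading_rad, friend):
    """Return (distance in metres, signed turn in radians) toward a friend.

    me, friend: (x, z) positions in a shared frame (assumed inputs).
    my_heading_rad: current facing direction, measured like atan2(z, x).
    The turn is wrapped to the range [-pi, pi).
    """
    dx = friend[0] - me[0]
    dz = friend[1] - me[1]
    distance = math.hypot(dx, dz)
    bearing = math.atan2(dz, dx)
    turn = (bearing - my_heading_rad + math.pi) % (2 * math.pi) - math.pi
    return distance, turn


distance, turn = guidance((0.0, 0.0), 0.0, (3.0, 4.0))
```

Wrapping the turn angle means an on-screen arrow always points the short way around instead of asking for nearly a full rotation.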