6 ways to get a more customized Search experience

See more content from your favorite sites, pick up where you left off and more with these tips for customizing Google Search.

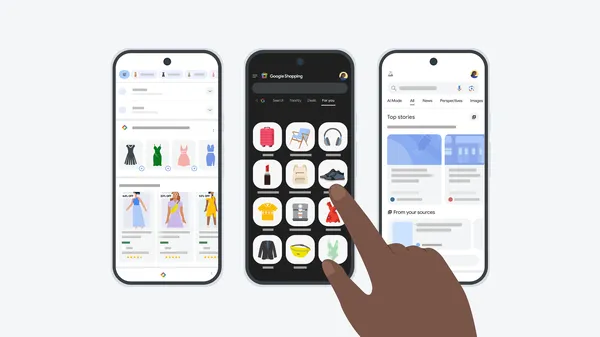

Search Live with voice facilitates back-and-forth conversations in AI Mode.

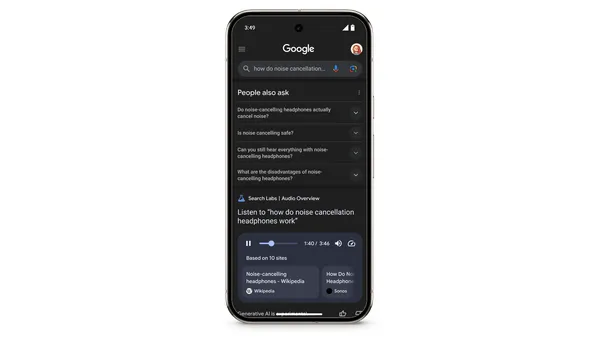

Today, we’re launching a new Search experiment in Labs – Audio Overviews, which uses our latest Gemini models to generate quick, conversational audio overviews for certa…

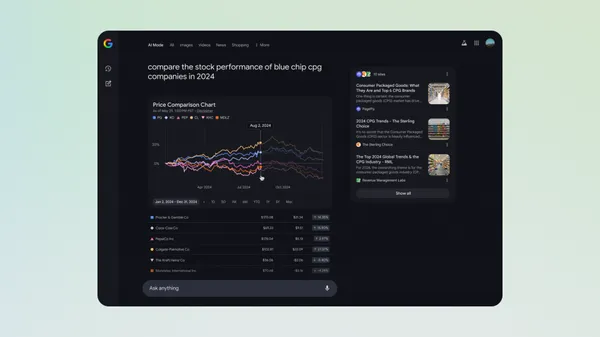

Today, we’re starting to roll out interactive chart visualizations in AI Mode in Labs to help bring financial data to life for questions on stocks and mutual funds. Now, …

Here are Google’s latest AI updates from May 2025

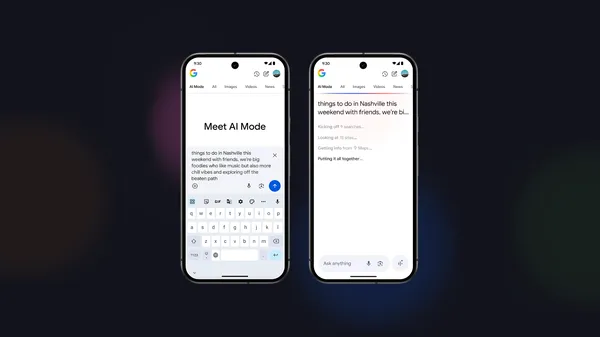

AI Mode is our most powerful AI search, which we’re rolling out to everyone in the U.S. Here’s how we brought it to life (and to your fingertips).

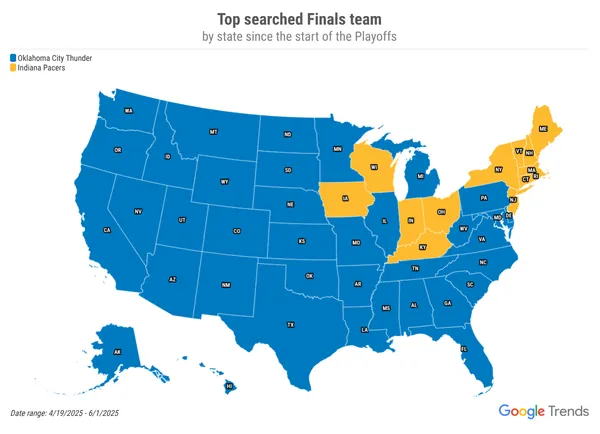

Search interest for the “NBA Finals” is spiking as the basketball finals begin and the women’s season picks up! Here’s a look at what's trending on Search, including sta…

Take this quiz to see how well you know what we announced at Google I/O 2025.

Learn more about the biggest announcements and launches from Google’s 2025 I/O developer conference.

Today at I/O, we showed how we’re enhancing Search with our latest Gemini models via AI Mode.
