Posted by Catherine Armato, Program Manager, Google

The Eleventh International Conference on Learning Representations (ICLR 2023) is being held this week as a hybrid event in Kigali, Rwanda. We are proud to be a Diamond Sponsor of ICLR 2023, a premier conference on deep learning, where Google researchers contribute at all levels. This year we are presenting over 100 papers and are actively involved in organizing and hosting a number of events, including workshops and interactive sessions.

If you’re registered for ICLR 2023, we hope you’ll visit the Google booth to learn more about the exciting work we’re doing across topics spanning representation and reinforcement learning, theory and optimization, social impact, safety and privacy, and applications from generative AI to speech and robotics. Continue below to find the many ways in which Google researchers are engaged at ICLR 2023, including workshops, papers, posters and talks (Google affiliations in bold).

Board and Organizing Committee

Board Members include: Shakir Mohamed, Tara Sainath

Senior Program Chairs include: Been Kim

Workshop Chairs include: Aisha Walcott-Bryant, Rose Yu

Diversity, Equity & Inclusion Chairs include: Rosanne Liu

Outstanding Paper awards

Emergence of Maps in the Memories of Blind Navigation Agents

Erik Wijmans, Manolis Savva, Irfan Essa, Stefan Lee, Ari S. Morcos, Dhruv Batra

DreamFusion: Text-to-3D Using 2D Diffusion

Ben Poole, Ajay Jain, Jonathan T. Barron, Ben Mildenhall

Keynote speaker

Learned Optimizers: Why They’re the Future, Why They’re Hard, and What They Can Do Now

Jascha Sohl-Dickstein

Workshops

Kaggle@ICLR 2023: ML Solutions in Africa

Organizers include: Julia Elliott, Phil Culliton, Ray Harvey

Facilitators: Julia Elliott, Walter Reade

Reincarnating Reinforcement Learning (Reincarnating RL)

Organizers include: Rishabh Agarwal, Ted Xiao, Max Schwarzer

Speakers include: Sergey Levine

Panelists include: Marc G. Bellemare, Sergey Levine

Trustworthy and Reliable Large-Scale Machine Learning Models

Organizers include: Sanmi Koyejo

Speakers include: Nicholas Carlini

Physics for Machine Learning (Physics4ML)

Speakers include: Yasaman Bahri

AI for Agent-Based Modelling Community (AI4ABM)

Organizers include: Pablo Samuel Castro

Mathematical and Empirical Understanding of Foundation Models (ME-FoMo)

Organizers include: Mathilde Caron, Tengyu Ma, Hanie Sedghi

Speakers include: Yasaman Bahri, Yann Dauphin

Neurosymbolic Generative Models 2023 (NeSy-GeMs)

Organizers include: Kevin Ellis

Speakers include: Daniel Tarlow, Tuan Anh Le

What Do We Need for Successful Domain Generalization?

Panelists include: Boqing Gong

The 4th Workshop on Practical ML for Developing Countries: Learning Under Limited/Low Resource Settings

Keynote Speaker: Adji Bousso Dieng

Machine Learning for Remote Sensing

Speakers include: Abigail Annkah

Multimodal Representation Learning (MRL): Perks and Pitfalls

Organizers include: Petra Poklukar

Speakers include: Arsha Nagrani

Pitfalls of Limited Data and Computation for Trustworthy ML

Organizers include: Prateek Jain

Speakers include: Nicholas Carlini, Praneeth Netrapalli

Sparsity in Neural Networks: On Practical Limitations and Tradeoffs Between Sustainability and Efficiency

Organizers include: Trevor Gale, Utku Evci

Speakers include: Aakanksha Chowdhery, Jeff Dean

Time Series Representation Learning for Health

Speakers include: Katherine Heller

Deep Learning for Code (DL4C)

Organizers include: Gabriel Orlanski

Speakers include: Alex Polozov, Daniel Tarlow

Affinity Workshops

Tiny Papers Showcase Day (a DEI initiative)

Organizers include: Rosanne Liu

Papers

Evolve Smoothly, Fit Consistently: Learning Smooth Latent Dynamics for Advection-Dominated Systems

Zhong Yi Wan, Leonardo Zepeda-Nunez, Anudhyan Boral, Fei Sha

Quantifying Memorization Across Neural Language Models

Nicholas Carlini, Daphne Ippolito, Matthew Jagielski, Katherine Lee, Florian Tramer, Chiyuan Zhang

Emergence of Maps in the Memories of Blind Navigation Agents (Outstanding Paper Award)

Erik Wijmans, Manolis Savva, Irfan Essa, Stefan Lee, Ari S. Morcos, Dhruv Batra

Offline Q-Learning on Diverse Multi-task Data Both Scales and Generalizes (see blog post)

Aviral Kumar, Rishabh Agarwal, Xingyang Geng, George Tucker, Sergey Levine

ReAct: Synergizing Reasoning and Acting in Language Models (see blog post)

Shunyu Yao*, Jeffrey Zhao, Dian Yu, Nan Du, Izhak Shafran, Karthik R. Narasimhan, Yuan Cao

Prompt-to-Prompt Image Editing with Cross-Attention Control

Amir Hertz, Ron Mokady, Jay Tenenbaum, Kfir Aberman, Yael Pritch, Daniel Cohen-Or

DreamFusion: Text-to-3D Using 2D Diffusion (Outstanding Paper Award)

Ben Poole, Ajay Jain, Jonathan T. Barron, Ben Mildenhall

A System for Morphology-Task Generalization via Unified Representation and Behavior Distillation

Hiroki Furuta, Yusuke Iwasawa, Yutaka Matsuo, Shixiang Shane Gu

Sample-Efficient Reinforcement Learning by Breaking the Replay Ratio Barrier

Pierluca D'Oro, Max Schwarzer, Evgenii Nikishin, Pierre-Luc Bacon, Marc G. Bellemare, Aaron Courville

Dichotomy of Control: Separating What You Can Control from What You Cannot

Sherry Yang, Dale Schuurmans, Pieter Abbeel, Ofir Nachum

Fast and Precise: Adjusting Planning Horizon with Adaptive Subgoal Search

Michał Zawalski, Michał Tyrolski, Konrad Czechowski, Tomasz Odrzygóźdź, Damian Stachura, Piotr Piekos, Yuhuai Wu, Łukasz Kucinski, Piotr Miłos

The Trade-Off Between Universality and Label Efficiency of Representations from Contrastive Learning

Zhenmei Shi, Jiefeng Chen, Kunyang Li, Jayaram Raghuram, Xi Wu, Yingyu Liang, Somesh Jha

Sparsity-Constrained Optimal Transport

Tianlin Liu*, Joan Puigcerver, Mathieu Blondel

Unmasking the Lottery Ticket Hypothesis: What's Encoded in a Winning Ticket's Mask?

Mansheej Paul, Feng Chen, Brett W. Larsen, Jonathan Frankle, Surya Ganguli, Gintare Karolina Dziugaite

Extreme Q-Learning: MaxEnt RL without Entropy

Divyansh Garg, Joey Hejna, Matthieu Geist, Stefano Ermon

Draft, Sketch, and Prove: Guiding Formal Theorem Provers with Informal Proofs

Albert Qiaochu Jiang, Sean Welleck, Jin Peng Zhou, Timothee Lacroix, Jiacheng Liu, Wenda Li, Mateja Jamnik, Guillaume Lample, Yuhuai Wu

SimPer: Simple Self-Supervised Learning of Periodic Targets

Yuzhe Yang, Xin Liu, Jiang Wu, Silviu Borac, Dina Katabi, Ming-Zher Poh, Daniel McDuff

Socratic Models: Composing Zero-Shot Multimodal Reasoning with Language

Andy Zeng, Maria Attarian, Brian Ichter, Krzysztof Marcin Choromanski, Adrian Wong, Stefan Welker, Federico Tombari, Aveek Purohit, Michael S. Ryoo, Vikas Sindhwani, Johnny Lee, Vincent Vanhoucke, Pete Florence

What Learning Algorithm Is In-Context Learning? Investigations with Linear Models

Ekin Akyurek*, Dale Schuurmans, Jacob Andreas, Tengyu Ma*, Denny Zhou

Preference Transformer: Modeling Human Preferences Using Transformers for RL

Changyeon Kim, Jongjin Park, Jinwoo Shin, Honglak Lee, Pieter Abbeel, Kimin Lee

Iterative Patch Selection for High-Resolution Image Recognition

Benjamin Bergner, Christoph Lippert, Aravindh Mahendran

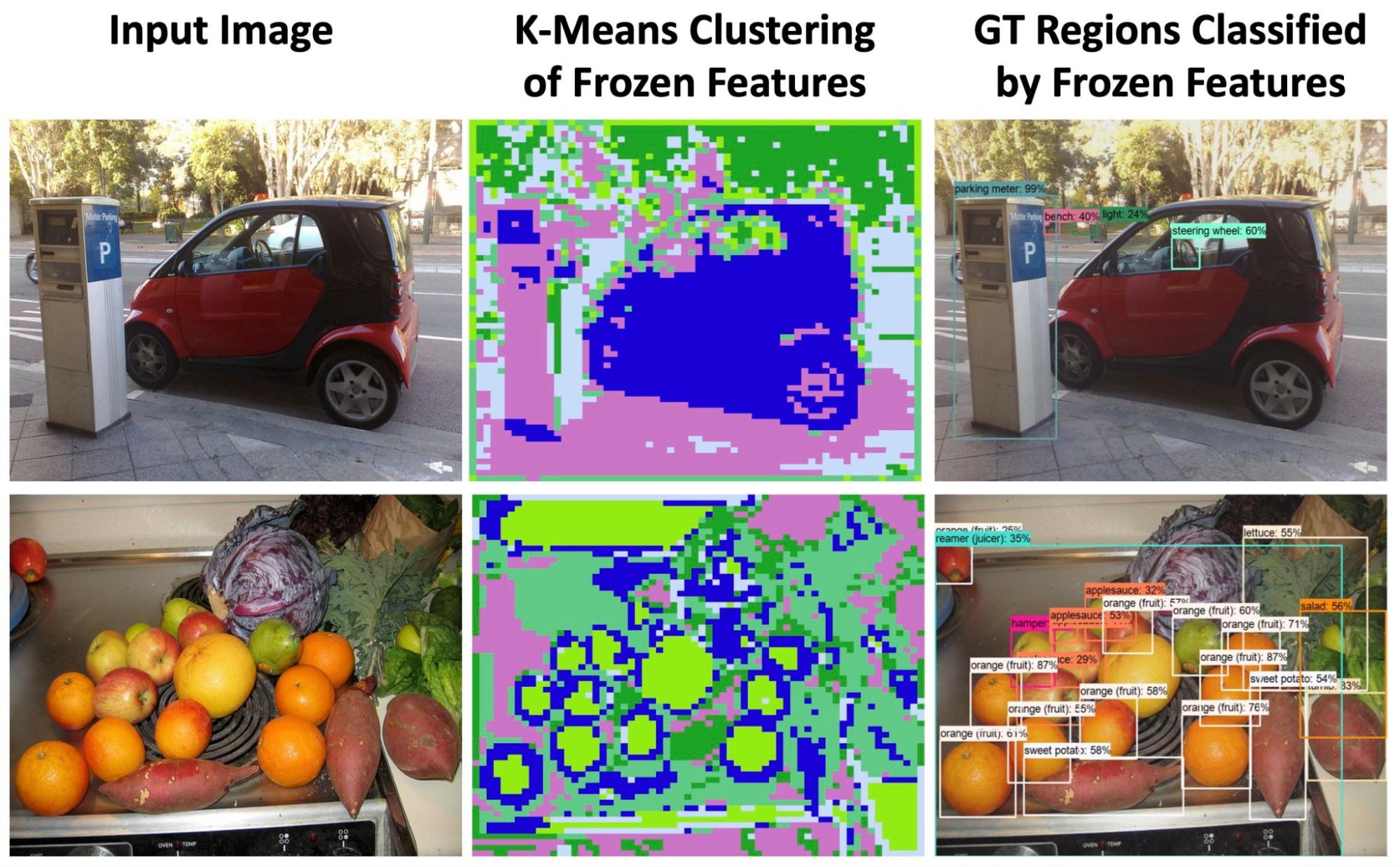

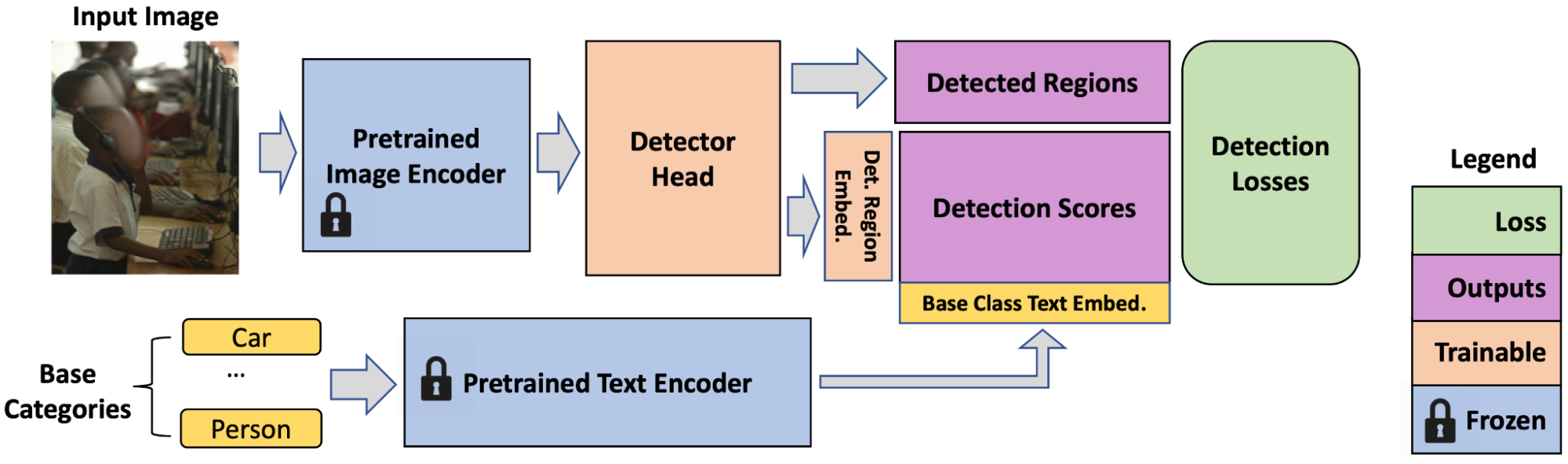

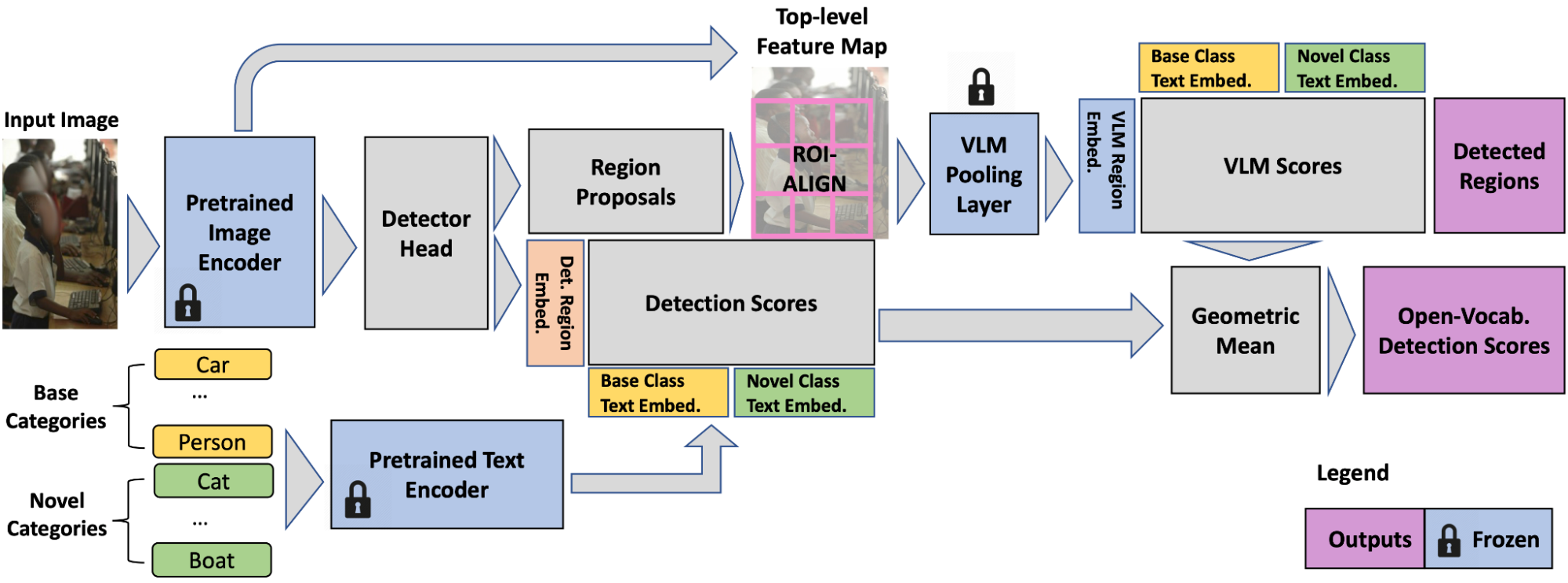

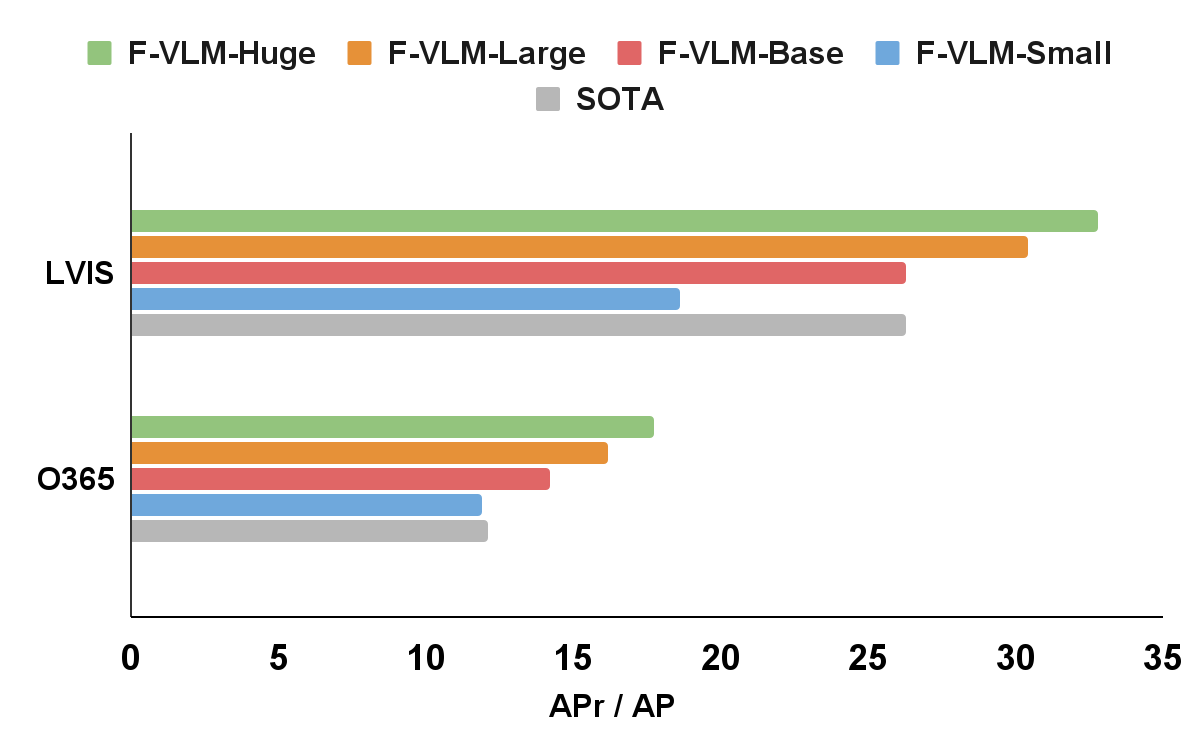

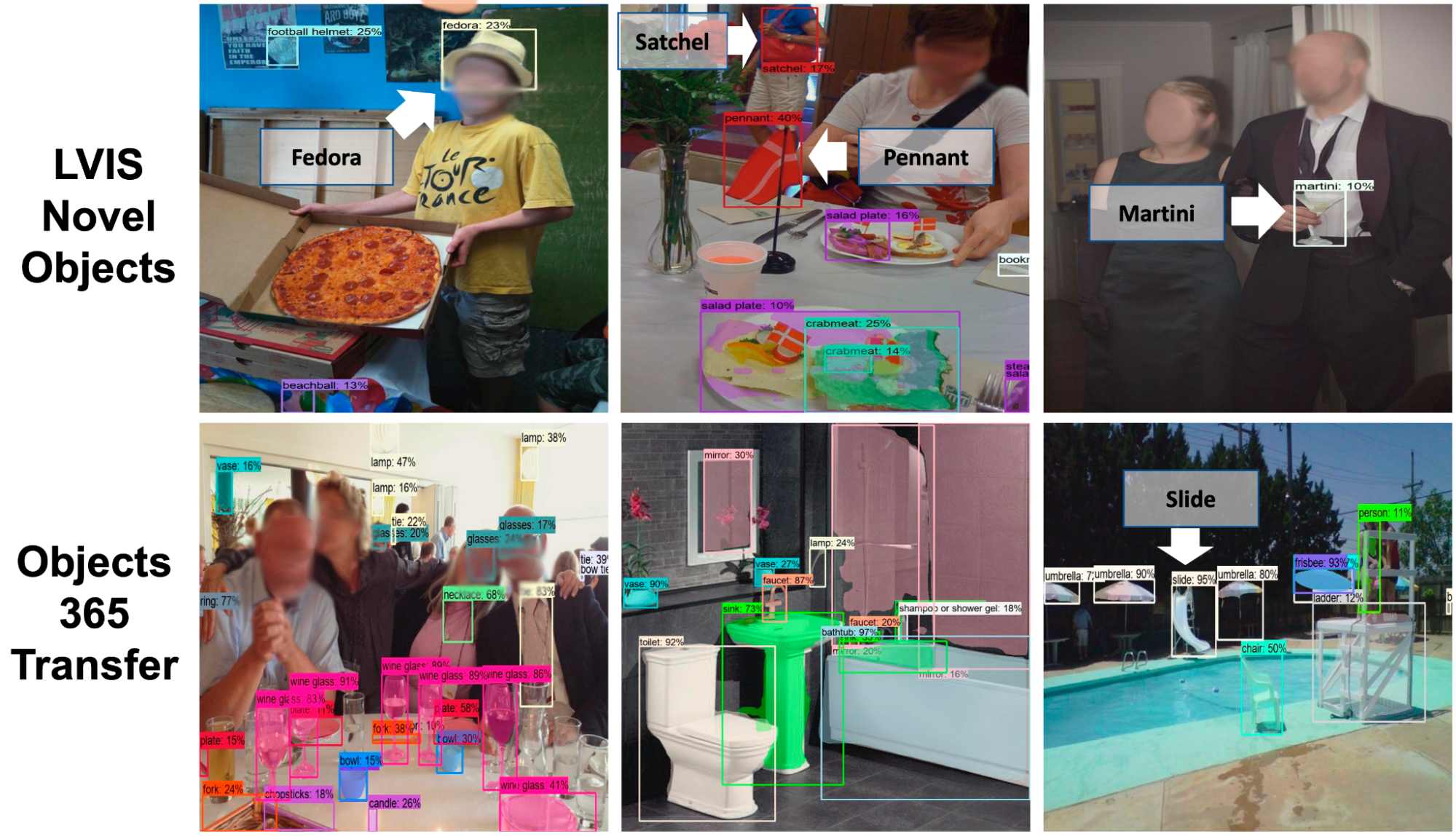

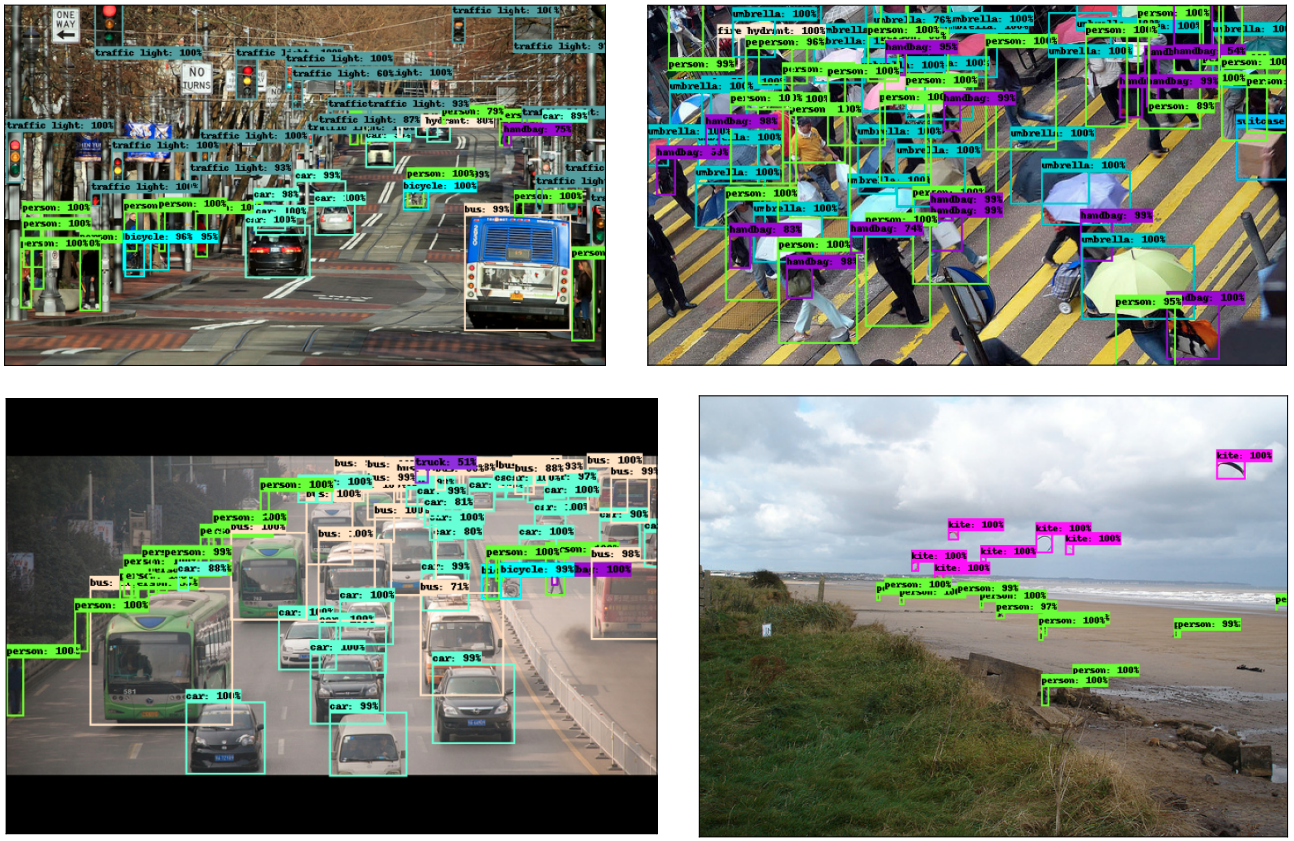

Open-Vocabulary Object Detection upon Frozen Vision and Language Models

Weicheng Kuo, Yin Cui, Xiuye Gu, AJ Piergiovanni, Anelia Angelova

(Certified!!) Adversarial Robustness for Free!

Nicholas Carlini, Florian Tramér, Krishnamurthy (Dj) Dvijotham, Leslie Rice, Mingjie Sun, J. Zico Kolter

REPAIR: REnormalizing Permuted Activations for Interpolation Repair

Keller Jordan, Hanie Sedghi, Olga Saukh, Rahim Entezari, Behnam Neyshabur

Discrete Predictor-Corrector Diffusion Models for Image Synthesis

José Lezama, Tim Salimans, Lu Jiang, Huiwen Chang, Jonathan Ho, Irfan Essa

Feature Reconstruction From Outputs Can Mitigate Simplicity Bias in Neural Networks

Sravanti Addepalli, Anshul Nasery, Praneeth Netrapalli, Venkatesh Babu R., Prateek Jain

An Exact Poly-time Membership-Queries Algorithm for Extracting a Three-Layer ReLU Network

Amit Daniely, Elad Granot

Language Models Are Multilingual Chain-of-Thought Reasoners

Freda Shi, Mirac Suzgun, Markus Freitag, Xuezhi Wang, Suraj Srivats, Soroush Vosoughi, Hyung Won Chung, Yi Tay, Sebastian Ruder, Denny Zhou, Dipanjan Das, Jason Wei

Scaling Forward Gradient with Local Losses

Mengye Ren*, Simon Kornblith, Renjie Liao, Geoffrey Hinton

Treeformer: Dense Gradient Trees for Efficient Attention Computation

Lovish Madaan, Srinadh Bhojanapalli, Himanshu Jain, Prateek Jain

LilNetX: Lightweight Networks with EXtreme Model Compression and Structured Sparsification

Sharath Girish, Kamal Gupta, Saurabh Singh, Abhinav Shrivastava

DiffusER: Diffusion via Edit-Based Reconstruction

Machel Reid, Vincent J. Hellendoorn, Graham Neubig

Leveraging Unlabeled Data to Track Memorization

Mahsa Forouzesh, Hanie Sedghi, Patrick Thiran

A Mixture-of-Expert Approach to RL-Based Dialogue Management

Yinlam Chow, Aza Tulepbergenov, Ofir Nachum, Dhawal Gupta, Moonkyung Ryu, Mohammad Ghavamzadeh, Craig Boutilier

Easy Differentially Private Linear Regression

Kareem Amin, Matthew Joseph, Monica Ribero, Sergei Vassilvitskii

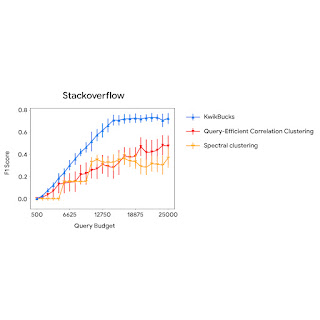

KwikBucks: Correlation Clustering with Cheap-Weak and Expensive-Strong Signals

Sandeep Silwal*, Sara Ahmadian, Andrew Nystrom, Andrew McCallum, Deepak Ramachandran, Mehran Kazemi

Massively Scaling Heteroscedastic Classifiers

Mark Collier, Rodolphe Jenatton, Basil Mustafa, Neil Houlsby, Jesse Berent, Effrosyni Kokiopoulou

The Lazy Neuron Phenomenon: On Emergence of Activation Sparsity in Transformers

Zonglin Li, Chong You, Srinadh Bhojanapalli, Daliang Li, Ankit Singh Rawat, Sashank J. Reddi, Ke Ye, Felix Chern, Felix Yu, Ruiqi Guo, Sanjiv Kumar

Compositional Semantic Parsing with Large Language Models

Andrew Drozdov, Nathanael Scharli, Ekin Akyurek, Nathan Scales, Xinying Song, Xinyun Chen, Olivier Bousquet, Denny Zhou

Extremely Simple Activation Shaping for Out-of-Distribution Detection

Andrija Djurisic, Nebojsa Bozanic, Arjun Ashok, Rosanne Liu

Long Range Language Modeling via Gated State Spaces

Harsh Mehta, Ankit Gupta, Ashok Cutkosky, Behnam Neyshabur

Investigating Multi-task Pretraining and Generalization in Reinforcement Learning

Adrien Ali Taiga, Rishabh Agarwal, Jesse Farebrother, Aaron Courville, Marc G. Bellemare

Learning Low Dimensional State Spaces with Overparameterized Recurrent Neural Nets

Edo Cohen-Karlik, Itamar Menuhin-Gruman, Raja Giryes, Nadav Cohen, Amir Globerson

Weighted Ensemble Self-Supervised Learning

Yangjun Ruan*, Saurabh Singh, Warren Morningstar, Alexander A. Alemi, Sergey Ioffe, Ian Fischer, Joshua V. Dillon

Calibrating Sequence Likelihood Improves Conditional Language Generation

Yao Zhao, Misha Khalman, Rishabh Joshi, Shashi Narayan, Mohammad Saleh, Peter J. Liu

SMART: Sentences as Basic Units for Text Evaluation

Reinald Kim Amplayo, Peter J. Liu, Yao Zhao, Shashi Narayan

Leveraging Importance Weights in Subset Selection

Gui Citovsky, Giulia DeSalvo, Sanjiv Kumar, Srikumar Ramalingam, Afshin Rostamizadeh, Yunjuan Wang*

Proto-Value Networks: Scaling Representation Learning with Auxiliary Tasks

Jesse Farebrother, Joshua Greaves, Rishabh Agarwal, Charline Le Lan, Ross Goroshin, Pablo Samuel Castro, Marc G. Bellemare

An Extensible Multi-modal Multi-task Object Dataset with Materials

Trevor Standley, Ruohan Gao, Dawn Chen, Jiajun Wu, Silvio Savarese

Measuring Forgetting of Memorized Training Examples

Matthew Jagielski, Om Thakkar, Florian Tramér, Daphne Ippolito, Katherine Lee, Nicholas Carlini, Eric Wallace, Shuang Song, Abhradeep Thakurta, Nicolas Papernot, Chiyuan Zhang

Bidirectional Language Models Are Also Few-Shot Learners

Ajay Patel, Bryan Li, Mohammad Sadegh Rasooli, Noah Constant, Colin Raffel, Chris Callison-Burch

Is Attention All That NeRF Needs?

Mukund Varma T., Peihao Wang, Xuxi Chen, Tianlong Chen, Subhashini Venugopalan, Zhangyang Wang

Automating Nearest Neighbor Search Configuration with Constrained Optimization

Philip Sun, Ruiqi Guo, Sanjiv Kumar

Static Prediction of Runtime Errors by Learning to Execute Programs with External Resource Descriptions

David Bieber, Rishab Goel, Daniel Zheng, Hugo Larochelle, Daniel Tarlow

Composing Ensembles of Pre-trained Models via Iterative Consensus

Shuang Li, Yilun Du, Joshua B. Tenenbaum, Antonio Torralba, Igor Mordatch

Λ-DARTS: Mitigating Performance Collapse by Harmonizing Operation Selection Among Cells

Sajad Movahedi, Melika Adabinejad, Ayyoob Imani, Arezou Keshavarz, Mostafa Dehghani, Azadeh Shakery, Babak N. Araabi

Blurring Diffusion Models

Emiel Hoogeboom, Tim Salimans

Part-Based Models Improve Adversarial Robustness

Chawin Sitawarin, Kornrapat Pongmala, Yizheng Chen, Nicholas Carlini, David Wagner

Learning in Temporally Structured Environments

Matt Jones, Tyler R. Scott, Mengye Ren, Gamaleldin ElSayed, Katherine Hermann, David Mayo, Michael C. Mozer

SlotFormer: Unsupervised Visual Dynamics Simulation with Object-Centric Models

Ziyi Wu, Nikita Dvornik, Klaus Greff, Thomas Kipf, Animesh Garg

Robust Algorithms on Adaptive Inputs from Bounded Adversaries

Yeshwanth Cherapanamjeri, Sandeep Silwal, David P. Woodruff, Fred Zhang, Qiuyi (Richard) Zhang, Samson Zhou

Agnostic Learning of General ReLU Activation Using Gradient Descent

Pranjal Awasthi, Alex Tang, Aravindan Vijayaraghavan

Analog Bits: Generating Discrete Data Using Diffusion Models with Self-Conditioning

Ting Chen, Ruixiang Zhang, Geoffrey Hinton

Any-Scale Balanced Samplers for Discrete Space

Haoran Sun*, Bo Dai, Charles Sutton, Dale Schuurmans, Hanjun Dai

Augmentation with Projection: Towards an Effective and Efficient Data Augmentation Paradigm for Distillation

Ziqi Wang*, Yuexin Wu, Frederick Liu, Daogao Liu, Le Hou, Hongkun Yu, Jing Li, Heng Ji

Beyond Lipschitz: Sharp Generalization and Excess Risk Bounds for Full-Batch GD

Konstantinos E. Nikolakakis, Farzin Haddadpour, Amin Karbasi, Dionysios S. Kalogerias

Causal Estimation for Text Data with (Apparent) Overlap Violations

Lin Gui, Victor Veitch

Contrastive Learning Can Find an Optimal Basis for Approximately View-Invariant Functions

Daniel D. Johnson, Ayoub El Hanchi, Chris J. Maddison

Differentially Private Adaptive Optimization with Delayed Preconditioners

Tian Li, Manzil Zaheer, Ziyu Liu, Sashank Reddi, Brendan McMahan, Virginia Smith

Distributionally Robust Post-hoc Classifiers Under Prior Shifts

Jiaheng Wei*, Harikrishna Narasimhan, Ehsan Amid, Wen-Sheng Chu, Yang Liu, Abhishek Kumar

Human Alignment of Neural Network Representations

Lukas Muttenthaler, Jonas Dippel, Lorenz Linhardt, Robert A. Vandermeulen, Simon Kornblith

Implicit Bias in Leaky ReLU Networks Trained on High-Dimensional Data

Spencer Frei, Gal Vardi, Peter Bartlett, Nathan Srebro, Wei Hu

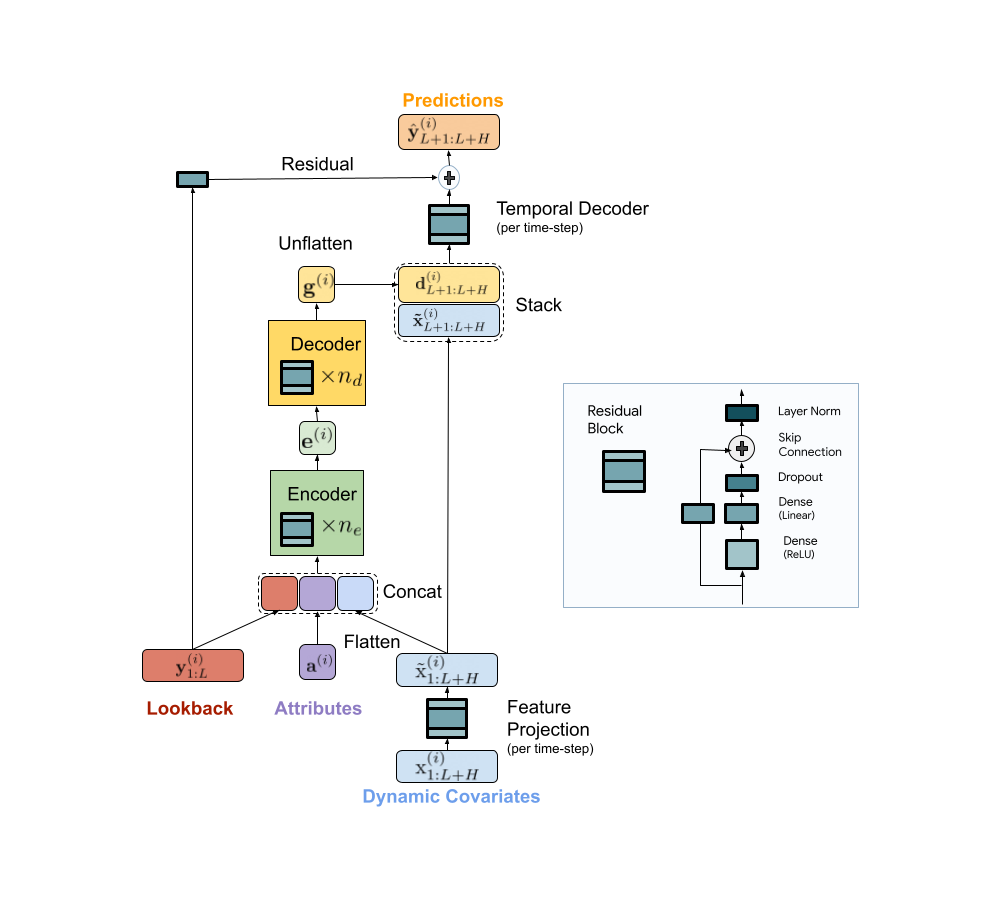

Koopman Neural Operator Forecaster for Time-Series with Temporal Distributional Shifts

Rui Wang*, Yihe Dong, Sercan Ö. Arik, Rose Yu

Latent Variable Representation for Reinforcement Learning

Tongzheng Ren, Chenjun Xiao, Tianjun Zhang, Na Li, Zhaoran Wang, Sujay Sanghavi, Dale Schuurmans, Bo Dai

Least-to-Most Prompting Enables Complex Reasoning in Large Language Models

Denny Zhou, Nathanael Scharli, Le Hou, Jason Wei, Nathan Scales, Xuezhi Wang, Dale Schuurmans, Claire Cui, Olivier Bousquet, Quoc Le, Ed Chi

Mind's Eye: Grounded Language Model Reasoning Through Simulation

Ruibo Liu, Jason Wei, Shixiang Shane Gu, Te-Yen Wu, Soroush Vosoughi, Claire Cui, Denny Zhou, Andrew M. Dai

MOAT: Alternating Mobile Convolution and Attention Brings Strong Vision Models

Chenglin Yang*, Siyuan Qiao, Qihang Yu, Xiaoding Yuan, Yukun Zhu, Alan Yuille, Hartwig Adam, Liang-Chieh Chen

Novel View Synthesis with Diffusion Models

Daniel Watson, William Chan, Ricardo Martin-Brualla, Jonathan Ho, Andrea Tagliasacchi, Mohammad Norouzi

On Accelerated Perceptrons and Beyond

Guanghui Wang, Rafael Hanashiro, Etash Guha, Jacob Abernethy

On Compositional Uncertainty Quantification for Seq2seq Graph Parsing

Zi Lin*, Du Phan, Panupong Pasupat, Jeremiah Liu, Jingbo Shang

On the Robustness of Safe Reinforcement Learning Under Observational Perturbations

Zuxin Liu, Zijian Guo, Zhepeng Cen, Huan Zhang, Jie Tan, Bo Li, Ding Zhao

Online Low Rank Matrix Completion

Prateek Jain, Soumyabrata Pal

Out-of-Distribution Detection and Selective Generation for Conditional Language Models

Jie Ren, Jiaming Luo, Yao Zhao, Kundan Krishna*, Mohammad Saleh, Balaji Lakshminarayanan, Peter J. Liu

PaLI: A Jointly-Scaled Multilingual Language-Image Model

Xi Chen, Xiao Wang, Soravit Changpinyo, AJ Piergiovanni, Piotr Padlewski, Daniel Salz, Sebastian Goodman, Adam Grycner, Basil Mustafa, Lucas Beyer, Alexander Kolesnikov, Joan Puigcerver, Nan Ding, Keran Rong, Hassan Akbari, Gaurav Mishra, Linting Xue, Ashish V. Thapliyal, James Bradbury, Weicheng Kuo, Mojtaba Seyedhosseini, Chao Jia, Burcu Karagol Ayan, Carlos Riquelme Ruiz, Andreas Peter Steiner, Anelia Angelova, Xiaohua Zhai, Neil Houlsby, Radu Soricut

Phenaki: Variable Length Video Generation from Open Domain Textual Descriptions

Ruben Villegas, Mohammad Babaeizadeh, Pieter-Jan Kindermans, Hernan Moraldo, Han Zhang, Mohammad Taghi Saffar, Santiago Castro*, Julius Kunze*, Dumitru Erhan

Promptagator: Few-Shot Dense Retrieval from 8 Examples

Zhuyun Dai, Vincent Y. Zhao, Ji Ma, Yi Luan, Jianmo Ni, Jing Lu, Anton Bakalov, Kelvin Guu, Keith B. Hall, Ming-Wei Chang

Pushing the Accuracy-Group Robustness Frontier with Introspective Self-Play

Jeremiah Zhe Liu, Krishnamurthy (Dj) Dvijotham, Jihyeon Lee, Quan Yuan, Balaji Lakshminarayanan, Deepak Ramachandran

Re-Imagen: Retrieval-Augmented Text-to-Image Generator

Wenhu Chen, Hexiang Hu, Chitwan Saharia, William W. Cohen

Recitation-Augmented Language Models

Zhiqing Sun, Xuezhi Wang, Yi Tay, Yiming Yang, Denny Zhou

Regression with Label Differential Privacy

Badih Ghazi, Pritish Kamath, Ravi Kumar, Ethan Leeman, Pasin Manurangsi, Avinash Varadarajan, Chiyuan Zhang

Revisiting the Entropy Semiring for Neural Speech Recognition

Oscar Chang, Dongseong Hwang, Olivier Siohan

Robust Active Distillation

Cenk Baykal, Khoa Trinh, Fotis Iliopoulos, Gaurav Menghani, Erik Vee

Score-Based Continuous-Time Discrete Diffusion Models

Haoran Sun*, Lijun Yu, Bo Dai, Dale Schuurmans, Hanjun Dai

Self-Consistency Improves Chain of Thought Reasoning in Language Models

Xuezhi Wang, Jason Wei, Dale Schuurmans, Quoc Le, Ed H. Chi, Sharan Narang, Aakanksha Chowdhery, Denny Zhou

Self-Supervision Through Random Segments with Autoregressive Coding (RandSAC)

Tianyu Hua, Yonglong Tian, Sucheng Ren, Michalis Raptis, Hang Zhao, Leonid Sigal

Serving Graph Compression for Graph Neural Networks

Si Si, Felix Yu, Ankit Singh Rawat, Cho-Jui Hsieh, Sanjiv Kumar

Sequential Attention for Feature Selection

Taisuke Yasuda*, MohammadHossein Bateni, Lin Chen, Matthew Fahrbach, Gang Fu, Vahab Mirrokni

Sparse Upcycling: Training Mixture-of-Experts from Dense Checkpoints

Aran Komatsuzaki*, Joan Puigcerver, James Lee-Thorp, Carlos Riquelme, Basil Mustafa, Joshua Ainslie, Yi Tay, Mostafa Dehghani, Neil Houlsby

Spectral Decomposition Representation for Reinforcement Learning

Tongzheng Ren, Tianjun Zhang, Lisa Lee, Joseph Gonzalez, Dale Schuurmans, Bo Dai

Spotlight: Mobile UI Understanding Using Vision-Language Models with a Focus (see blog post)

Gang Li, Yang Li

Supervision Complexity and Its Role in Knowledge Distillation

Hrayr Harutyunyan*, Ankit Singh Rawat, Aditya Krishna Menon, Seungyeon Kim, Sanjiv Kumar

Teacher Guided Training: An Efficient Framework for Knowledge Transfer

Manzil Zaheer, Ankit Singh Rawat, Seungyeon Kim, Chong You, Himanshu Jain, Andreas Veit, Rob Fergus, Sanjiv Kumar

TEMPERA: Test-Time Prompt Editing via Reinforcement Learning

Tianjun Zhang, Xuezhi Wang, Denny Zhou, Dale Schuurmans, Joseph E. Gonzalez

UL2: Unifying Language Learning Paradigms

Yi Tay, Mostafa Dehghani, Vinh Q. Tran, Xavier Garcia, Jason Wei, Xuezhi Wang, Hyung Won Chung, Dara Bahri, Tal Schuster, Steven Zheng, Denny Zhou, Neil Houlsby, Donald Metzler

* Work done while at Google