On June 2nd, 2021, Major League Baseball in the United States celebrated Lou Gehrig Day, commemorating both the day in 1925 that Lou Gehrig became the Yankees’ starting first baseman, and the day in 1941 that he passed away from amyotrophic lateral sclerosis (ALS, also known as Lou Gehrig’s disease) at the age of 37. ALS is a progressive neurodegenerative disease that affects motor neurons, which connect the brain with the muscles throughout the body, and govern muscle control and voluntary movements. When voluntary muscle control is affected, people may lose their ability to speak, eat, move and breathe.

In honor of Lou Gehrig, former NFL player and ALS advocate Steve Gleason, who lost his ability to speak due to ALS, recited Gehrig’s famous “Luckiest Man” speech at the June 2nd event using a recreation of his voice generated by a machine learning (ML) model. Gleason’s voice recreation was developed in collaboration with Google’s Project Euphonia, which aims to empower people who have impaired speaking ability due to ALS to better communicate using their own voices.

| Steve Gleason, who lost his voice to ALS, worked with Google’s Project Euphonia to generate a speech in his own voice in honor of Lou Gehrig. A portion of Gleason’s speech was broadcast in ballparks across the country during the 4th inning on June 2nd, 2021. |

Today we describe PnG NAT, the model adopted by Project Euphonia to recreate Steve Gleason’s voice. PnG NAT is a new text-to-speech synthesis (TTS) model that merges two state-of-the-art technologies, PnG BERT and Non-Attentive Tacotron (NAT), into a single model. It demonstrates significantly better quality and fluency than previous technologies, and represents a promising approach that can be extended to a wider array of users.

Recreating a Voice

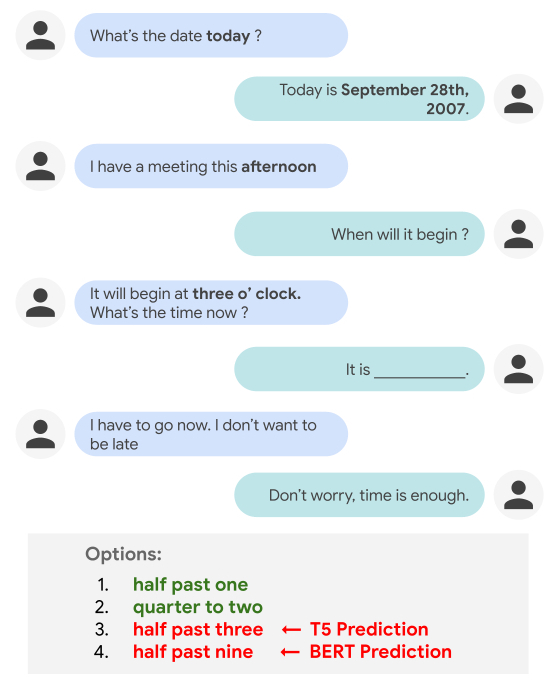

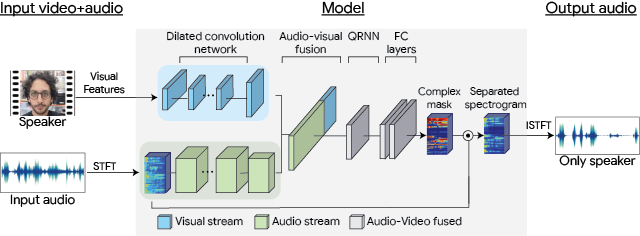

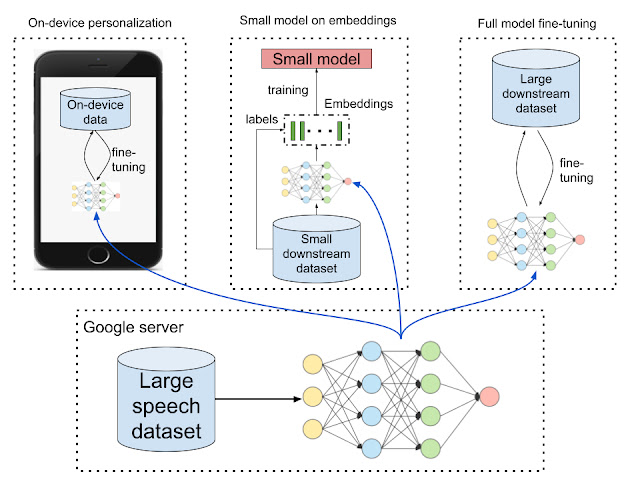

Non-Attentive Tacotron (NAT) is the successor to Tacotron 2, a sequence-to-sequence neural TTS model proposed in 2017. Tacotron 2 used an attention module to connect the input text sequence and the output speech spectrogram frame sequence, so that the model knew which part of the text to attend to when generating each time step of the synthesized speech spectrogram. Tacotron 2 was the first TTS model able to synthesize speech that sounds as natural as a person speaking. However, through extensive experimentation we discovered that, with a small probability, the model can suffer from robustness issues (such as babbling, repeating, or skipping part of the text) due to the inherent flexibility of the attention mechanism.

NAT improves upon Tacotron 2 by replacing the attention module with a duration-based upsampler, which predicts a duration for each input phoneme and upsamples the encoded phoneme representation so that the output length corresponds to the length of the predicted speech spectrogram. This change both resolves the robustness issue and improves the naturalness of the synthesized speech. It also enables precise control of the speech duration for each phoneme of the input text while still maintaining highly natural synthesis quality. Because recordings of people with ALS often exhibit disfluent speech, this per-phoneme control is key to achieving fluency in the recreated voice.


| Non-Attentive Tacotron (NAT) model. |
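To make the duration-based upsampling idea concrete, here is a minimal sketch in Python. It is not the NAT implementation: it assumes durations are already given as whole numbers of spectrogram frames and uses plain repetition as a stand-in for whatever upsampling the real model performs. The function name `upsample_by_duration` and all values are hypothetical.

```python
import numpy as np

def upsample_by_duration(encoded, durations_in_frames):
    """Repeat each phoneme's encoding for its predicted number of frames.

    encoded: array of shape (num_phonemes, hidden_dim)
    durations_in_frames: integer array of shape (num_phonemes,)
    Returns an array of shape (sum(durations_in_frames), hidden_dim).
    """
    encoded = np.asarray(encoded)
    durations = np.asarray(durations_in_frames, dtype=int)
    if encoded.shape[0] != durations.shape[0]:
        raise ValueError("one duration is required per phoneme")
    if np.any(durations < 0):
        raise ValueError("durations must be non-negative")
    # Simplifying assumption: hard repetition instead of a learned,
    # smoother upsampling scheme.
    return np.repeat(encoded, durations, axis=0)

# Toy example: 3 phonemes with 2-dimensional encodings (made-up values).
encoded = np.array([[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]])
durations = np.array([2, 1, 3])
frames = upsample_by_duration(encoded, durations)
print(frames.shape)  # (6, 2)
```

Because the output length is fixed entirely by the durations, changing one phoneme's duration lengthens or shortens exactly that phoneme, which is the kind of per-phoneme control described above.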

While NAT addresses the robustness issue and enables precise duration control in neural TTS, we build upon it to further improve the natural language understanding of the TTS input. For this, we apply PnG BERT, which uses an approach similar to BERT, but is specifically designed for TTS. It is pre-trained with self-supervision on both the phoneme representation and the grapheme representation of the same content from a large text corpus, and then is used as the encoder of the TTS model. This results in a significant improvement of the prosody and pronunciation of the synthesized speech, especially in difficult cases.

Take, for example, the following audio, which was synthesized from a regular NAT model that takes only phonemes as input:

In comparison, the audio synthesized from PnG NAT on the same input text includes an additional pause that makes the meaning clearer.

The input text to both models is, “To cancel the payment, press one; or to continue, two.” Notice the different pause lengths before the ending “two” in the two versions. The word “two” in the version output by the regular NAT model could be confused with “too”. Because “too” and “two” have identical pronunciation (and thus the same phoneme representation), the regular NAT model cannot tell which of the two is intended, and assumes it to be the word that more frequently follows a comma, “too”. In contrast, the PnG NAT model can more easily tell the difference, because it takes graphemes in addition to phonemes as input, and thus inserts a more appropriate pause.
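The homophone problem can be illustrated with a small Python sketch. The mini-lexicon, the phoneme symbols, and both function names are hypothetical, and the dictionary returned here is only a stand-in for the model's real input format. The point is simply that a phoneme-only input cannot distinguish “two” from “too”, while an input that also carries graphemes can.

```python
# Hypothetical mini-lexicon; the phoneme symbols are illustrative only.
TOY_LEXICON = {
    "two": ["T", "UW"],
    "too": ["T", "UW"],
    "continue": ["K", "AH", "N", "T", "IH", "N", "Y", "UW"],
}

def phoneme_only_input(words):
    """Flatten the words into a single phoneme sequence."""
    return [p for w in words for p in TOY_LEXICON[w]]

def phoneme_and_grapheme_input(words):
    """Pair the phoneme sequence with the spelled-out characters."""
    return {
        "phonemes": phoneme_only_input(words),
        "graphemes": list(" ".join(words)),
    }

with_two = ["continue", "two"]
with_too = ["continue", "too"]

# Phoneme-only inputs are identical, so the spelling is lost.
print(phoneme_only_input(with_two) == phoneme_only_input(with_too))  # True

# Adding graphemes makes the two inputs distinguishable again.
print(phoneme_and_grapheme_input(with_two)
      == phoneme_and_grapheme_input(with_too))  # False
```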

The PnG NAT model integrates the pre-trained PnG BERT model as the encoder of the NAT model. The hidden representations output from the encoder are used by NAT to predict the duration of each phoneme, and are then upsampled to match the length of the audio spectrogram, as outlined above. In the final step, a non-attentive decoder converts the upsampled hidden representations into speech spectrograms, which are then converted into audio waveforms by a neural vocoder.


| PnG BERT and the pre-training objectives. Yellow boxes represent phonemes, and pink boxes represent graphemes. |


| PnG NAT: PnG BERT replaces the original encoder in the NAT model. The random masking for the Masked Language Model (MLM) pre-training is removed. |

To recreate Steve Gleason’s voice, we first trained a PnG NAT model with recordings from 31 professional speakers, and then fine-tuned it with 30 minutes of Gleason’s recordings. Because these latter recordings were made after he was diagnosed with ALS, they exhibit signs of slurring. The fine-tuned model was able to synthesize speech that sounds very similar to these recordings. However, because the symptoms of ALS were already present in the recordings, the synthesized speech exhibited some similar disfluencies.

To mitigate this, we leveraged the phoneme duration control of NAT as well as the model trained with professional speakers. We first predicted the duration of each phoneme for both a professional speaker and Gleason, and then used the geometric mean of the two durations for each phoneme to guide the NAT output. As a result, the model is able to speak in Gleason’s voice, but more fluently than in the original recordings.
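As a rough illustration of the duration blending, the per-phoneme geometric mean can be sketched in a few lines of Python. The function name `blend_durations`, the millisecond unit, and all duration values below are made up for the example; only the use of the geometric mean comes from the description above.

```python
import math

def blend_durations(professional, target):
    """Per-phoneme geometric mean of two duration sequences."""
    if len(professional) != len(target):
        raise ValueError("both sequences need one duration per phoneme")
    return [math.sqrt(p * t) for p, t in zip(professional, target)]

# Hypothetical predicted durations in milliseconds for three phonemes.
professional_ms = [80.0, 50.0, 120.0]
target_ms = [180.0, 50.0, 270.0]

blended_ms = blend_durations(professional_ms, target_ms)
print(blended_ms)  # [120.0, 50.0, 180.0]
```

Note that the geometric mean never exceeds the arithmetic mean, so where the two predictions differ, the blended duration sits somewhat closer to the shorter one than a simple average would.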

Here is the full version of the synthesized Lou Gehrig speech in Gleason’s voice:

Besides recreating voices for people with ALS, PnG NAT is also powering voices for a variety of customers through Google Cloud Custom Voice.

Project Euphonia

Of the millions of people around the world who have neurologic conditions that may impact their speech, such as ALS, cerebral palsy or Down syndrome, many may find it difficult to be understood, which can make face-to-face communication challenging. Using voice-activated technologies can be frustrating too, as they don’t always work reliably. Project Euphonia is a Google Research initiative focused on helping people with impaired speech be better understood. The team is researching ways to improve speech recognition for individuals with speech impairments (see recent blog post and segment on the TODAY show), as well as customized text-to-speech technology (see the Age of AI documentary featuring former NFL player Tim Shaw).

Acknowledgements

Many people across Google Research, Google Cloud and Consumer Apps, and Google Accessibility teams contributed to this project and the event, including Michael Brenner, Bob MacDonald, Heiga Zen, Yu Zhang, Jonathan Shen, Isaac Elias, Yonghui Wu, Anne Keck, Danielle Notaro, Kevin Hogan, Zack Kaplan, KR Liu, Kyndra Price, and Zoe Ortiz.