Robust algorithm design is the backbone of systems across Google, particularly for our ML and AI models. Hence, developing algorithms with improved efficiency, performance and speed remains a high priority as it empowers services ranging from Search and Ads to Maps and YouTube. Google Research has been at the forefront of this effort, developing many innovations from privacy-safe recommendation systems to scalable solutions for large-scale ML. In 2022, we continued this journey, and advanced the state-of-the-art in several related areas. Here we highlight our progress in a subset of these, including scalability, privacy, market algorithms, and algorithmic foundations.

| · Scalable algorithms: Graphs, clustering, and optimization |

| · Privacy and federated learning |

| · Market algorithms and causal inference |

| · Algorithmic foundations and theory |

Scalable algorithms: Graphs, clustering, and optimization

As the need to handle large-scale datasets increases, scalability and reliability of complex algorithms that also exhibit improved explainability, robustness, and speed remain a high priority. We continued our efforts in developing new algorithms for handling large datasets in various areas, including unsupervised and semi-supervised learning, graph-based learning, clustering, and large-scale optimization.

An important component of such systems is to build a similarity graph, i.e., a nearest-neighbor graph that represents similarities between objects. For scalability and speed, this graph should be sparse without compromising quality. We proposed a 2-hop spanner technique, called STAR, as an efficient and distributed graph building strategy, and showed how it significantly decreases the number of similarity computations in theory and practice, building much sparser graphs while producing high-quality graph learning or clustering outputs. As an example, for graphs with 10T edges, we demonstrate ~100-fold improvements in pairwise similarity comparisons and significant running time speedups with negligible quality loss. We had previously applied this idea to develop massively parallel algorithms for metric and minimum-size clustering. More broadly in the context of clustering, we developed the first linear-time algorithms for hierarchical agglomerative clustering (HAC) and DBSCAN, as well as the first parallel algorithm for HAC with polylogarithmic depth, which achieves a 50x speedup on 100B-edge graphs. We also designed improved sublinear algorithms for different flavors of clustering problems such as geometric linkage clustering, constant-round correlation clustering, and fully dynamic k-clustering.
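To make the 2-hop idea concrete, here is a minimal sketch. It is not the STAR algorithm itself: it simply samples a few leader points and connects every point only to the leaders it is similar to, so two points that share a leader are within two hops of each other without ever being compared directly. The function names, the use of cosine similarity, and the threshold are illustrative assumptions.

```python
import math
import random


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def build_star_graph(points, num_leaders, threshold, seed=0):
    """Connect each point to the sampled leaders it is similar to.

    Hypothetical helper for illustration. Returns (edges, comparisons):
    edges is a set of (leader, point) index pairs, and comparisons counts
    how many similarity evaluations were performed.
    """
    rng = random.Random(seed)
    leaders = rng.sample(range(len(points)), num_leaders)
    edges = set()
    comparisons = 0
    for leader in leaders:
        for i, p in enumerate(points):
            if i == leader:
                continue
            comparisons += 1
            if cosine(points[leader], p) >= threshold:
                edges.add((leader, i))
    return edges, comparisons
```

With n points and a small number of leaders, this performs num_leaders * (n - 1) similarity computations instead of the n * (n - 1) / 2 needed for all pairs, which is the kind of saving the paragraph above describes.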

Inspired by the success of multi-core processing (e.g., GBBS), we embarked on a mission to develop graph mining algorithms that can handle graphs with 100B edges on a single multi-core machine. The big challenge here is to achieve fast (e.g., sublinear) parallel running time (i.e., depth). Following our previous work for community detection and correlation clustering, we developed an algorithm for HAC, called ParHAC, which has provable polylogarithmic depth and near-linear work and achieves a 50x speedup. As an example, it took ParHAC only ~10 minutes to find an approximate affinity hierarchy over a graph of over 100B edges, and ~3 hours to find the full HAC on a single machine. Following our previous work on distributed HAC, we use these multi-core algorithms as a subroutine within our distributed algorithms in order to handle tera-scale graphs.

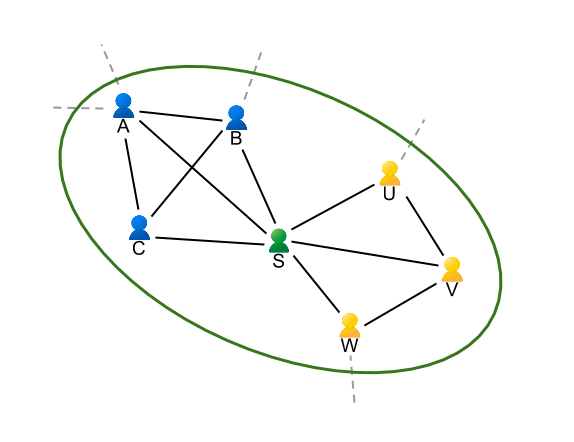

We also had a number of interesting results on graph neural networks (GNN) in 2022. We provided a model-based taxonomy that unified many graph learning methods. In addition, we discovered insights for GNN models from their performance across thousands of graphs with varying structure (shown below). We also proposed a new hybrid architecture to overcome the depth requirements of existing GNNs for solving fundamental graph problems, such as shortest paths and the minimum spanning tree.

| Relative performance results of three GNN variants (GCN, APPNP, FiLM) across 50,000 distinct node classification datasets in GraphWorld. We find that academic GNN benchmark datasets exist in regions where model rankings do not change. GraphWorld can discover previously unexplored graphs that reveal new insights about GNN architectures. |

Furthermore, to bring some of these many advances to the broader community, we had three releases of our flagship modeling library for building graph neural networks in TensorFlow (TF-GNN). Highlights include a model library and model orchestration API to make it easy to compose GNN solutions. Following our NeurIPS’20 workshop on Mining and Learning with Graphs at Scale, we ran a workshop on graph-based learning at ICML’22, and a tutorial for GNNs in TensorFlow at NeurIPS’22.

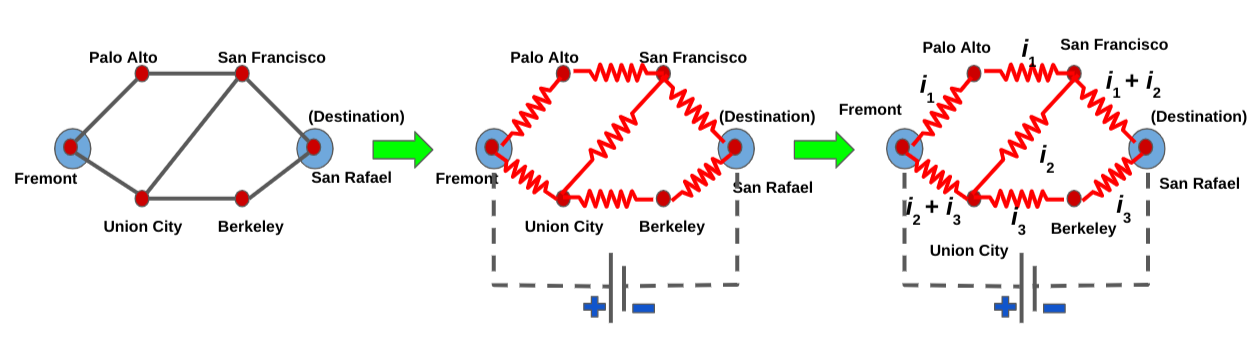

In a recent paper, “Robust Routing Using Electrical Flows”, we proposed a Google Maps solution to efficiently compute alternate paths in road networks that are resistant to failures (e.g., closures, incidents). We demonstrated that it significantly outperforms the state-of-the-art plateau and penalty methods on real-world road networks.

On the optimization front, we open-sourced Vizier, our flagship blackbox optimization and hyperparameter tuning library at Google. We also developed new techniques for linear programming (LP) solvers that address scalability limits caused by their reliance on matrix factorizations, which restricts the opportunity for parallelism and distributed approaches. To this end, we open-sourced a primal-dual hybrid gradient (PDHG) solution for LP called primal-dual linear programming (PDLP), a new first-order solver for large-scale LP problems. PDLP has been used to solve real-world problems with as many as 12B non-zeros (and an internal distributed version scaled to 92B non-zeros). PDLP's effectiveness is due to a combination of theoretical developments and algorithm engineering.
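For readers unfamiliar with PDHG, the following is a minimal sketch of the basic iteration on a standard-form LP (minimize c^T x subject to A x = b, x >= 0). It is a textbook version under stated assumptions, not PDLP: it uses fixed step sizes and dense Python lists, and it omits the enhancements (such as restarts and preconditioning) that a practical solver layers on top. The function name `pdhg_lp` and the example problem are hypothetical.

```python
import math


def pdhg_lp(c, A, b, iterations=2000):
    """Approximately solve  min c^T x  s.t.  A x = b, x >= 0  with PDHG."""
    m, n = len(A), len(c)
    # The Frobenius norm upper-bounds the spectral norm, so equal primal and
    # dual step sizes of 0.9 / ||A||_F satisfy tau * sigma * ||A||_2^2 < 1.
    frob = math.sqrt(sum(a * a for row in A for a in row))
    tau = sigma = 0.9 / frob
    x = [0.0] * n
    y = [0.0] * m
    for _ in range(iterations):
        # Primal step: gradient step on the Lagrangian in x, projected onto x >= 0.
        grad = [c[j] - sum(A[i][j] * y[i] for i in range(m)) for j in range(n)]
        x_new = [max(0.0, x[j] - tau * grad[j]) for j in range(n)]
        # Dual step: gradient ascent in y at the extrapolated point 2 * x_new - x.
        x_bar = [2.0 * x_new[j] - x[j] for j in range(n)]
        y = [y[i] + sigma * (b[i] - sum(A[i][j] * x_bar[j] for j in range(n)))
             for i in range(m)]
        x = x_new
    return x, y


# Example: maximize x1 + 2*x2 subject to x1 + x2 <= 4, with a slack variable.
x, y = pdhg_lp(c=[-1.0, -2.0, 0.0], A=[[1.0, 1.0, 1.0]], b=[4.0])
```

Each iteration needs only matrix-vector products with A and its transpose, and no matrix factorization, which is why this family of methods is easier to parallelize and distribute.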

| With OSS Vizier, multiple clients each send a “Suggest” request to the Service API, which produces Suggestions for the clients using Pythia policies. The clients evaluate these suggestions and return measurements. All transactions are stored to allow fault-tolerance. |

Privacy and federated learning

Respecting user privacy while providing high-quality services remains a top priority for all Google systems. Research in this area spans many products and uses principles from differential privacy (DP) and federated learning.

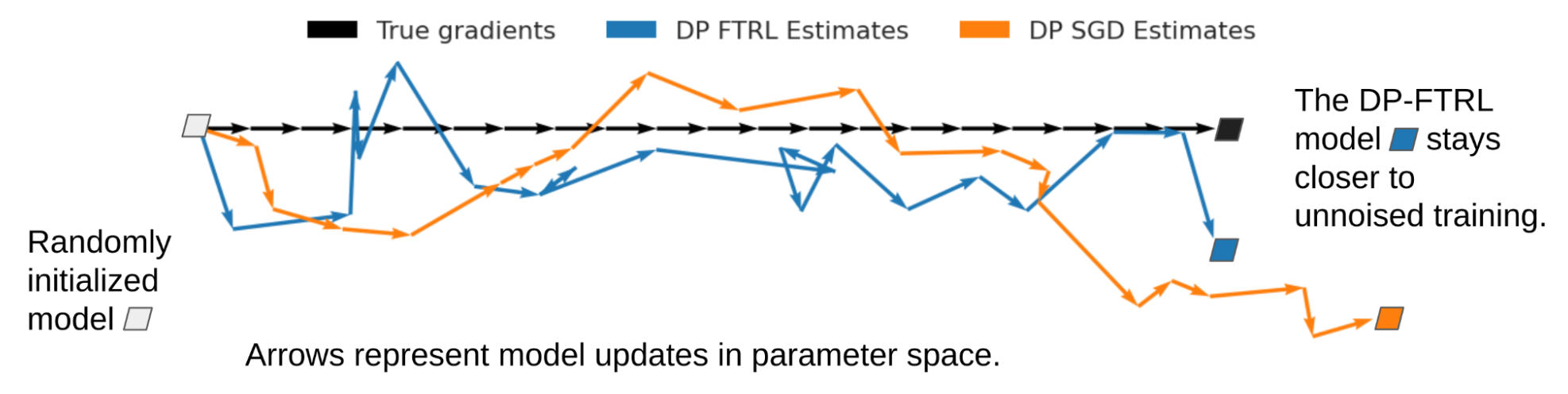

First of all, we have made a variety of algorithmic advances to address the problem of training large neural networks with DP. Building on our earlier work, which enabled us to launch a DP neural network based on the DP-FTRL algorithm, we developed the matrix factorization DP-FTRL approach. This work demonstrates that one can design a mathematical program to optimize over a large set of possible DP mechanisms to find those best suited for specific learning problems. We also establish margin guarantees that are independent of the input feature dimension for DP learning of neural networks and kernel-based methods. We further extend this concept to a broader range of ML tasks, matching baseline performance with 300x less computation. For fine-tuning of large models, we argued that once pre-trained, these models (even with DP) essentially operate over a low-dimensional subspace, hence circumventing the curse of dimensionality that DP imposes.

On the algorithmic front, for estimating the entropy of a high-dimensional distribution, we obtained local DP mechanisms (that work even when as little as one bit per sample is available) and efficient shuffle DP mechanisms. We proposed a more accurate method to simultaneously estimate the top-k most popular items in the database in a private manner, which we employed in the Plume library. Moreover, we showed a near-optimal approximation algorithm for DP clustering in the massively parallel computing (MPC) model, which further improves on our previous work for scalable and distributed settings.
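As a point of reference for private top-k selection, here is a minimal textbook baseline, not the more accurate method mentioned above: add Laplace noise to every count in a fixed domain and report the k largest noisy counts. It assumes each user contributes exactly one item (so the histogram has L1 sensitivity 1), uses hypothetical function names, and ignores floating-point subtleties that a production DP library must handle.

```python
import random
from collections import Counter


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_top_k(items, domain, k, epsilon, seed=None):
    """Return the k elements of `domain` with the largest noisy counts.

    Assumes each user contributes exactly one entry of `items`, so adding or
    removing a user changes a single count by 1. Noise is added to every
    element of the domain, including those with a true count of zero.
    """
    rng = random.Random(seed)
    counts = Counter(items)
    noisy = {d: counts.get(d, 0) + laplace_noise(1.0 / epsilon, rng)
             for d in domain}
    return sorted(domain, key=lambda d: noisy[d], reverse=True)[:k]
```

Releasing the noisy histogram is epsilon-DP under the stated assumption, and taking the top k is post-processing, so the selection inherits the same guarantee.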

Another exciting research direction is the intersection of privacy and streaming. We obtained a near-optimal approximation-space trade-off for the private frequency moments and a new algorithm for privately counting distinct elements in the sliding window streaming model. We also presented a general hybrid framework for studying adversarial streaming.

Addressing applications at the intersection of security and privacy, we developed new algorithms that are secure, private, and communication-efficient, for measuring cross-publisher reach and frequency. The World Federation of Advertisers has adopted these algorithms as part of their measurement system. In subsequent work, we developed new protocols that are secure and private for computing sparse histograms in the two-server model of DP. These protocols are efficient from both computation and communication points of view, are substantially better than what standard methods would yield, and combine tools and techniques from sketching, cryptography and multiparty computation, and DP.

While we have trained BERT and transformers with DP, understanding training example memorization in large language models (LLMs) is a heuristic way to evaluate their privacy. In particular, we investigated when and why LLMs forget (potentially memorized) training examples during training. Our findings suggest that earlier-seen examples may observe privacy benefits at the expense of examples seen later. We also quantified the degree to which LLMs emit memorized training data.

Market algorithms and causal inference

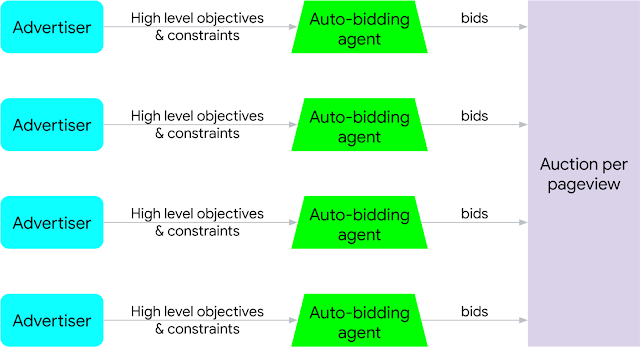

We also continued our research in improving online marketplaces in 2022. For example, an important recent area in ad auction research is the study of auto-bidding in online advertising, where the majority of bidding happens via proxy bidders that optimize higher-level objectives on behalf of advertisers. The complex dynamics of users, advertisers, bidders, and ad platforms lead to non-trivial problems in this space. Following our earlier work in analyzing and improving mechanisms under auto-bidding auctions, we studied these marketplaces in the context of automation while taking different aspects into consideration, such as user experience and advertiser budgets. Our findings suggest that properly incorporating ML advice and randomization techniques, even in non-truthful auctions, can robustly improve the overall welfare at equilibria among auto-bidding algorithms.

| Structure of auto-bidding online ads system. |

Beyond auto-bidding systems, we also studied auction improvements in complex environments, e.g., settings where buyers are represented by intermediaries, and with Rich Ads where each ad can be shown in one of several possible variants. We summarize our work in this area in a recent survey. In addition to auctions, we also investigated the use of contracts in multi-agent and adversarial settings.

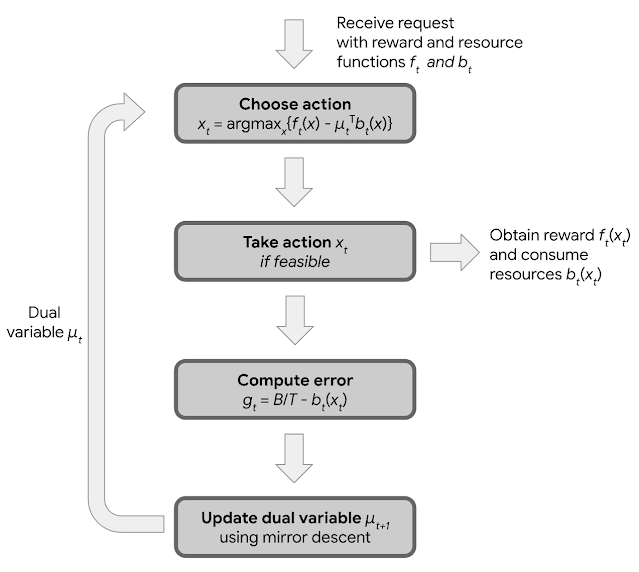

Online stochastic optimization remains an important part of online advertising systems, with applications in optimal bidding and budget pacing. Building on our long-term research in online allocation, we recently blogged about dual mirror descent, a new algorithm for online allocation problems that is simple, robust, and flexible. This state-of-the-art algorithm is robust against a wide range of adversarial and stochastic input distributions and can optimize important objectives beyond economic efficiency, such as fairness. We also show that by tailoring dual mirror descent to the special structure of the increasingly popular return-on-spend constraints, we can optimize advertiser value. Dual mirror descent has a wide range of applications and has been used over time to help advertisers obtain more value through better algorithmic decision making.
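The core loop of dual mirror descent can be sketched for the simplest case: a single budget, accept-or-reject decisions, and the Euclidean mirror map (so the mirror step becomes a projected subgradient step). This is an illustrative simplification under those assumptions, with hypothetical names, and not the production algorithm.

```python
def dual_mirror_descent(requests, budget, step_size):
    """Online allocation with one budget via a dual subgradient update.

    `requests` is a sequence of (value, cost) pairs revealed one at a time.
    Returns (total_value, decisions, final_dual_price).
    """
    if not requests:
        return 0.0, [], 0.0
    rho = budget / len(requests)  # target spend per round
    mu = 0.0                      # dual price of one unit of budget
    remaining = budget
    total_value = 0.0
    decisions = []
    for value, cost in requests:
        # Tentative decision: accept when the price-adjusted reward is positive.
        wants = value - mu * cost > 0
        tentative_spend = cost if wants else 0.0
        # The request is only served if the remaining budget can cover it.
        accept = wants and cost <= remaining
        if accept:
            remaining -= cost
            total_value += value
        decisions.append(accept)
        # Dual update: the price rises when tentative spend exceeds the
        # target rate and falls (down to zero) when it is below it.
        mu = max(0.0, mu + step_size * (tentative_spend - rho))
    return total_value, decisions, mu
```

The dual price acts as a pacing signal: it needs no forecast of future requests, only the per-round comparison between spend and the target rate, which is one reason the approach is simple to deploy.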

| An overview of the dual mirror descent algorithm. |

Furthermore, following our recent work at the interplay of ML, mechanism design and markets, we investigated transformers for asymmetric auction design, designed utility-maximizing strategies for no-regret learning buyers, and developed new learning algorithms to bid or to price in auctions.

| An overview of bipartite experimental design to reduce causal interactions between entities. |

A critical component of any sophisticated online service is the ability to experimentally measure the response of users and other players to new interventions. A major challenge of estimating these causal effects accurately is handling complex interactions — or interference — between the control and treatment units of these experiments. We combined our graph clustering and causal inference expertise to expand the results of our previous work in this area, with improved results under a flexible response model and a new experimental design that is more effective at reducing these interactions when treatment assignments and metric measurements occur on the same side of a bipartite platform. We also showed how synthetic control and optimization techniques can be combined to design more powerful experiments, especially in small data regimes.

Algorithmic foundations and theory

Finally, we continued our fundamental algorithmic research by tackling long-standing open problems. A surprisingly concise paper affirmatively resolved a four-decade-old open question on whether there is a mechanism that guarantees a constant fraction of the gains-from-trade attainable whenever the buyer's value weakly exceeds the seller's cost. Another recent paper obtained the state-of-the-art approximation for the classic and highly studied k-means problem. We also improved the best approximation for correlation clustering, breaking the barrier approximation factor of 2. Finally, our work on dynamic data structures to solve min-cost and other network flow problems has contributed to a breakthrough line of work in adapting continuous optimization techniques to solve classic discrete optimization problems.

Concluding thoughts

Designing effective algorithms and mechanisms is a critical component of many Google systems that need to handle tera-scale data robustly with critical privacy and safety considerations. Our approach is to develop algorithms with solid theoretical foundations that can be deployed effectively in our product systems. In addition, we are bringing many of these advances to the broader community by open-sourcing some of our most novel developments and by publishing the advanced algorithms behind them. In this post, we covered a subset of algorithmic advances in privacy, market algorithms, scalable algorithms, graph-based learning, and optimization. As we move toward an AI-first Google with further automation, developing robust, scalable, and privacy-safe ML algorithms remains a high priority. We are excited about developing new algorithms and deploying them more broadly.

Acknowledgements

This post summarizes research from a large number of teams and benefited from input from several researchers including Gagan Aggarwal, Amr Ahmed, David Applegate, Santiago Balseiro, Vincent Cohen-Addad, Yuan Deng, Alessandro Epasto, Matthew Fahrbach, Badih Ghazi, Sreenivas Gollapudi, Rajesh Jayaram, Ravi Kumar, Sanjiv Kumar, Silvio Lattanzi, Kuba Lacki, Brendan McMahan, Aranyak Mehta, Bryan Perozzi, Daniel Ramage, Ananda Theertha Suresh, Andreas Terzis, Sergei Vassilvitskii, Di Wang, and Song Zuo. Special thanks to Ravi Kumar for his contributions to this post.

Google Research, 2022 & beyond

This was the fifth blog post in the “Google Research, 2022 & Beyond” series. Other posts in this series are listed in the table below:

| Language Models | Computer Vision | Multimodal Models |

| Generative Models | Responsible AI | ML & Computer Systems |

| Efficient Deep Learning | Algorithmic Advances | Robotics* |

| Health | General Science & Quantum | Community Engagement |

| * Articles will be linked as they are released. |