By Samir Hammoudi, Gaming Technical Specialist, Google Cloud

Dragon Ball Legends, a new mobile game from Bandai Namco Entertainment (BNE), is based on its popular Dragon Ball Z franchise, and is rolling out to gamers around the world as we speak. But planning the cloud infrastructure to power the game dates back to February 2017, when BNE approached Google Cloud to talk about the interesting challenges they were facing, and how we could help.

Based on their anticipated demand, BNE had three ambitious requirements for their game:

- Extreme scalability. The game would be launched globally, so it needed a backend that could scale with millions of players and still perform well.

- Global network. Because the game allows real-time player versus player battles, it needs a reliable and low-latency network across regions.

- Real-time data analytics. The game is designed to evolve with players in real-time, so it was critical to have a data analytics pipeline to stream data to a data warehouse. The operations team can then measure and evaluate how people are playing the game and adjust it on the fly.

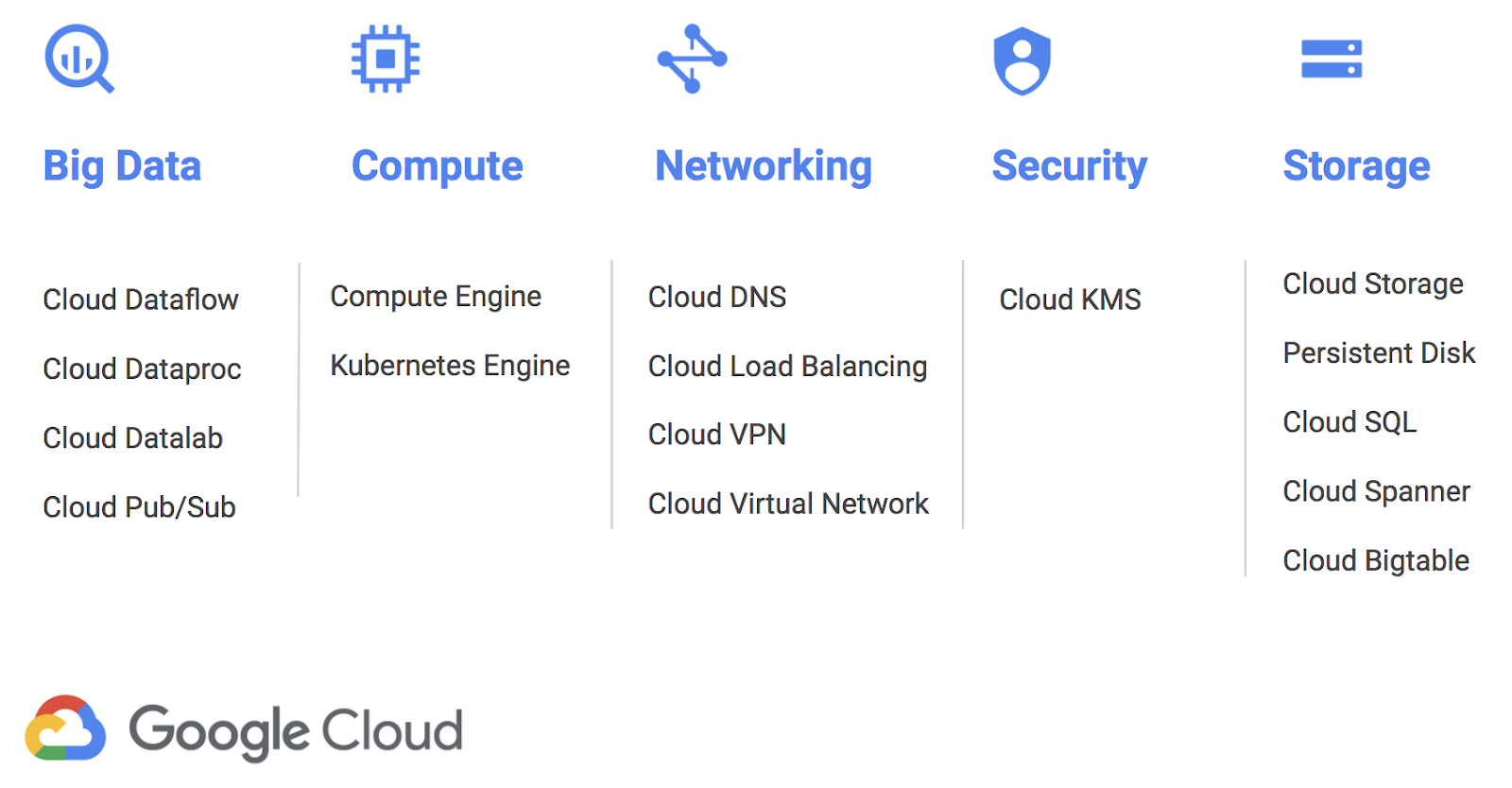

All three of these are areas where we have a lot of experience. Google has multiple global services with more than a billion users, and we use the data those services generate to improve them over time. And because Google Cloud Platform (GCP) runs on the same infrastructure as these Google services, GCP customers can take advantage of the same enabling technologies.

Let’s take a look at how BNE worked with Google Cloud to build the infrastructure for Dragon Ball Legends.

Challenge #1: Extreme scalability

MySQL is extensively used by gaming companies in Japan because engineers are used to working with relational databases that offer schemas, SQL queries and strong consistency. This simplifies the application side considerably, since it doesn’t have to work around database limitations such as eventual consistency or having to enforce a schema itself. MySQL is widely used even outside gaming, and most backend engineers already have strong experience with it.

While MySQL offers many advantages, it has one big limitation: scalability. As a scale-up database, MySQL only gets faster when you add more CPU, RAM or disk. And when a single instance of MySQL can’t handle the load anymore, you can divide the load by sharding: splitting users into groups and assigning them to multiple independent instances of MySQL. Sharding has a number of drawbacks, however. Most game developers calculate the number of shards they’ll need for the database before the game launches, since resharding is labor-intensive and error-prone. As a result, gaming companies tend to overprovision the database so that it can handle more players than they expect. If the game is as popular as expected, everything is fine. But what if the game is a runaway hit and exceeds the anticipated demand? And what about the long tail representing a gradual decrease in active players? What if it’s an out-and-out flop? MySQL sharding is not dynamically scalable, and adjusting its size requires maintenance and carries risk.
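To make the resharding cost concrete, here is a minimal sketch in Python. It assumes a simple hash-and-modulo scheme for mapping players to shards; the article does not say how any particular studio assigns players, so `shard_for`, `moved_fraction` and the shard counts are hypothetical.

```python
import hashlib


def shard_for(user_id: str, num_shards: int) -> int:
    """Map a user to a shard with a stable hash (hypothetical scheme)."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def moved_fraction(user_ids, old_shards: int, new_shards: int) -> float:
    """Fraction of users whose shard changes when the shard count changes."""
    moved = sum(
        1 for u in user_ids
        if shard_for(u, old_shards) != shard_for(u, new_shards)
    )
    return moved / len(user_ids)


if __name__ == "__main__":
    users = [f"player-{i}" for i in range(10_000)]
    fraction = moved_fraction(users, old_shards=8, new_shards=10)
    print(f"{fraction:.0%} of players would have to move from 8 to 10 shards")
```

Under this scheme, growing from 8 to 10 shards relocates most players' data (roughly four in five with a uniform hash), which is why the shard count tends to be fixed, and padded, before launch.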

In an ideal world, databases can scale in and out without downtime while offering the advantages of a relational database. When we first heard that BNE was considering MySQL sharding to handle the massive anticipated traffic for Dragon Ball Legends, we suggested they consider Cloud Spanner instead.

Why Cloud Spanner?

Cloud Spanner is a fully managed relational database that offers horizontal scalability and high availability while keeping strong consistency with a schema that is similar to MySQL’s. Better yet, as a managed service, it’s looked after by Google SREs, removing database maintenance and minimizing the risk of downtime. We thought Cloud Spanner would be able to help BNE make their game global.

Evaluation to implementation

Before adopting a new technology, engineers should always test it to confirm its expected performance in a real-world scenario. Before replacing MySQL, BNE created a new Cloud Spanner instance in GCP, including a few tables with a similar schema to what they used in MySQL. Since their backend developers were writing in Scala, they chose the Java client library for Cloud Spanner and wrote some sample code to load-test Cloud Spanner and see if it could keep up with their queries per second (QPS) requirements for writes, around 30,000 QPS at peak. Working with our customer engineer and the Cloud Spanner engineering team, they met this goal easily. They even developed their own DML (Data Manipulation Language) wrapper to write SQL commands like INSERT, UPDATE and DELETE.
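The idea behind such a load test can be sketched roughly as follows. This is a minimal, single-threaded illustration in Python rather than BNE's Scala code: `measure_write_qps` and `write_once` are hypothetical names, `write_once` stands in for whatever call performs one write, and a real test would drive the database from many concurrent workers.

```python
import time
from typing import Callable


def measure_write_qps(
    write_once: Callable[[int], None],
    total_writes: int,
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Issue `total_writes` writes and return the achieved writes per second.

    `write_once` is a placeholder for one database write; `clock` is
    injectable so the measurement can be checked deterministically.
    """
    start = clock()
    for i in range(total_writes):
        write_once(i)
    elapsed = clock() - start
    return total_writes / elapsed if elapsed > 0 else float("inf")


if __name__ == "__main__":
    # Stub write that does nothing; replace with a real client call.
    qps = measure_write_qps(lambda i: None, total_writes=100_000)
    print(f"achieved {qps:,.0f} writes/s against the stub")
```

Comparing the measured figure with the target (around 30,000 write QPS at peak in BNE's case) tells you whether the instance is sized adequately.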

Game release

With the proof of concept behind them, they could start their implementation. Based on the expected daily active users (DAU), BNE calculated how many Cloud Spanner nodes they needed: enough for the 3 million pre-registered players they were expecting. To prepare for the release, they organized two closed beta tests to validate their backend, and didn’t have a single issue with the database! In the end, over 3 million participants worldwide pre-registered for Dragon Ball Legends, and even with this huge number, the official game release went flawlessly.

Long story short, BNE can focus on improving the game rather than spending time operating their databases.

Challenge #2: Global network

Let’s now talk about BNE’s second challenge: building a global real-time player-vs-player (PvP) game. BNE’s goal for Dragon Ball Legends was to let all its players play against one another, anywhere in the world. If you know anything about networking, you understand the challenge around latency. Round-trip time (RTT) between Tokyo and San Francisco, for example, is on average around 100 ms. To address that, they decided to divide every game second into 250 ms intervals. So while the game looks like it’s real-time to users, it’s actually a really fast turn-based game at its core (you can read more about the architecture here). And while some might say that 250 ms offers plenty of room for latency, it’s extremely hard to predict the latency when communicating across the Internet.
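The 250 ms interval idea can be illustrated with a small Python sketch. The article does not describe how the game actually resolves turns, so the input format and the function names `turn_index` and `group_inputs_by_turn` are assumptions made purely for illustration.

```python
TURN_MS = 250  # interval length mentioned in the article: four turns per second


def turn_index(elapsed_ms: int) -> int:
    """Return the 250 ms turn that a timestamp (ms since battle start) falls into."""
    return elapsed_ms // TURN_MS


def group_inputs_by_turn(inputs):
    """Group (elapsed_ms, player, action) tuples by turn, preserving arrival order."""
    turns = {}
    for elapsed_ms, player, action in inputs:
        turns.setdefault(turn_index(elapsed_ms), []).append((player, action))
    return turns


if __name__ == "__main__":
    received = [
        (40, "player_a", "punch"),
        (190, "player_b", "guard"),
        (260, "player_a", "kick"),
    ]
    for turn, actions in sorted(group_inputs_by_turn(received).items()):
        print(turn, actions)
```

In this sketch, inputs that arrive 150 ms apart still land in the same turn and are resolved together, so moderate differences in latency between the two players do not change the outcome.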

Why Cloud Networking?

Here’s what it looks like for a game client to access the game server on GCP over the Internet. Because the number of hops can vary every time, playing PvP can sometimes feel fast and sometimes slow.

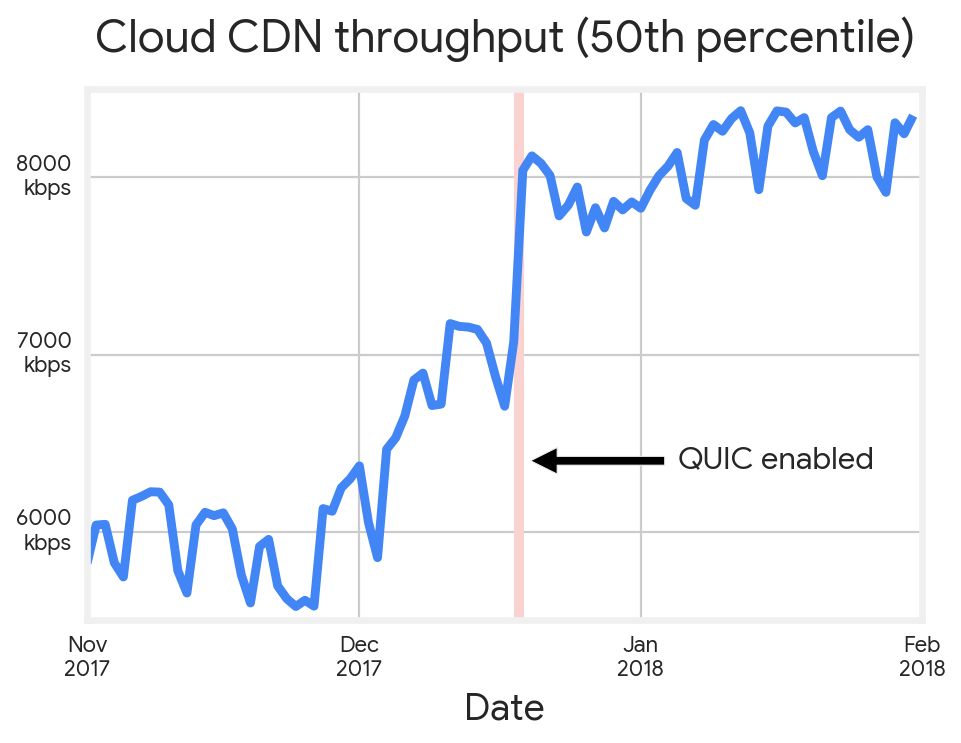

One of the main reasons BNE decided to use GCP for the Dragon Ball Legends backend was the Google dedicated network. As you can see in the picture below, when using GCP, once the game client accesses one of the hundreds of GCP points of presence (POPs) around the world, it’s on the Google dedicated network. That means no unpredictable hops, which makes latency predictable and as low as possible.

Taking advantage of the Google Cloud Network

Usually, gaming companies implement PvP by connecting two players directly or through a dedicated game server. Combat games that require low latency between players usually prefer P2P communication. In general, when two players are geographically close, P2P works very well, but it’s often unreliable when trying to communicate across regions (some carriers even block P2P protocols). For two players from two different continents to communicate through Google’s dedicated network, players first try to communicate through P2P, and if that fails, they fail over to an open source implementation of a STUN/TURN server called coturn, which acts as a relay between the two players. That way, cross-continent battles leverage the low-latency and reliable Google network as much as possible.
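The "P2P first, relay second" behavior described above can be sketched as follows. This is only an outline of the control flow in Python: `Connection`, `try_p2p` and `connect_via_relay` are hypothetical placeholders, not part of coturn or of the game's real networking code.

```python
from typing import Callable, Optional


class Connection:
    """Minimal stand-in for an established link between two players."""

    def __init__(self, route: str):
        self.route = route  # "p2p" or "relay"


def connect_with_fallback(
    try_p2p: Callable[[], Optional[Connection]],
    connect_via_relay: Callable[[], Connection],
) -> Connection:
    """Try a direct P2P link first; fall back to the relay if it fails.

    `try_p2p` may return None or raise when the direct path is blocked;
    `connect_via_relay` represents connecting through a TURN-style relay.
    """
    try:
        conn = try_p2p()
        if conn is not None:
            return conn
    except (ConnectionError, TimeoutError):
        pass  # direct path unavailable, e.g. blocked by a carrier
    return connect_via_relay()


if __name__ == "__main__":
    def blocked_p2p() -> Optional[Connection]:
        raise ConnectionError("P2P blocked")

    conn = connect_with_fallback(blocked_p2p, lambda: Connection("relay"))
    print(conn.route)
```

Keeping the relay as a fallback rather than the default means nearby players keep the shortest path, while cross-region matches still get a connection that works.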

Challenge #3: Real-time data analytics

BNE’s last challenge was around real-time data analytics. BNE wanted to offer the best user experience to their fans and one of the ways to do that is through live game operations, or LiveOps, in which operators make constant changes to the game so it always feels fresh. But to understand players’ needs, they needed data, usually logs of users’ actions. And if they could get this data in near real-time, they could then make decisions on what changes to apply to the game to increase users’ satisfaction and engagement.

To gather this data, BNE used a combination of Cloud Pub/Sub and Cloud Dataflow to transform users’ data in real-time and insert it into BigQuery.

- Cloud Pub/Sub offers a globally reliable messaging system that buffers the logs until they can be handled by Cloud Dataflow.

- Cloud Dataflow is a fully managed parallel processing service that lets you execute ETL in real-time and in parallel.

- BigQuery is the fully managed data warehouse where all the game logs are stored. Since BigQuery offers petabyte-scale storage, scaling was not a concern. Thanks to heavy parallel processing when querying the logs, BNE can get a response to a query that scans terabytes of data in a few seconds.

This system lets a game producer visualize players’ behavior in near real-time and decide what new features to bring to the game or what to change inside the game to satisfy their fans.
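As a rough illustration of the transform step in such a pipeline, the Python sketch below turns one buffered log message into a flat row suitable for a warehouse table. The JSON shape and the field names (`player_id`, `action`, `ts_ms`) are assumptions; the article does not describe BNE's log format, and in practice this logic would run inside a Cloud Dataflow job rather than a plain loop.

```python
import json
from datetime import datetime, timezone


def to_row(message: bytes) -> dict:
    """Transform one raw log message (hypothetical JSON shape) into a flat row."""
    event = json.loads(message.decode("utf-8"))
    event_time = datetime.fromtimestamp(event["ts_ms"] / 1000, tz=timezone.utc)
    return {
        "player_id": str(event["player_id"]),
        "action": event["action"].lower(),
        "event_time": event_time.isoformat(),
    }


if __name__ == "__main__":
    # Stand-in for messages buffered by the messaging layer.
    buffered = [
        b'{"player_id": 42, "action": "BATTLE_START", "ts_ms": 1527811200000}',
        b'{"player_id": 7, "action": "ITEM_USED", "ts_ms": 1527811200250}',
    ]
    for row in (to_row(m) for m in buffered):
        print(row)
```

Normalizing types and timestamps at this stage keeps the warehouse schema stable, so queries over the logs do not need to handle format variations.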

Takeaways

Using Cloud Spanner, BNE could focus on developing an amazing game instead of spending time on database capacity planning and scaling. Operations-wise, by using a fully managed scalable database, they drastically reduced both operational overhead and the risks related to human error.

Using Cloud Networking, they leveraged Google’s dedicated network to offer the best user experience to their fans, even when fighting across regions.

And finally, using Google’s analytics stack (Cloud Pub/Sub, Cloud Dataflow and BigQuery), BNE was able to analyze players’ behaviors in near real-time and make decisions about how to adjust the game to make their fans even happier!

If you want to hear more details about how they evaluated and adopted Cloud Spanner for their game, please join them at their Google Cloud NEXT’18 session in San Francisco.