Kubernetes configuration manifests have become an industry standard for deploying both custom and off-the-shelf applications (as well as for infrastructure). Manifests are combined into bundles to create higher-level deployable systems as well as reusable blueprints (such as a product offering, off the shelf software, or customizable starting point for a new application).

However, most teams lack the expertise or desire to create bespoke bundles of configuration from scratch and instead: 1) either fork them from another bundle, or 2) use some packaging solution which generates manifests from code.

Teams quickly discover they need to customize, validate, audit and re-publish their forked/ generated bundles for their environment. Most packaging solutions to date are tightly coupled to some format written as code (e.g. templates, DSLs, etc). This introduces a number of challenges when trying to extend, build on top of, or integrate them with other systems. For example, how does one update a forked template from upstream, or how does one apply custom validation?

Packaging is the foundation of building reusable components, but it also incurs a productivity tax on the users of those components.Today we’d like to introduce

kpt, an OSS tool for Kubernetes packaging, which uses a standard format to bundle, publish, customize, update, and apply configuration manifests.

Kpt is built around an “as data” architecture bundling

Kubernetes resource configuration, a format for both humans and machines. The ability for tools to read and write the package contents using standardized data structures enables powerful new capabilities:

- Any existing directory in a Git repo with configuration files can be used as a kpt package.

- Packages can be arbitrarily customized and later pull in updates from upstream by merging them.

- Tools and automation can perform high-level operations by transforming and validating package data on behalf of users or systems.

- Organizations can develop their own tools and automation which operate against the package data.

- Existing tools and automation that work with resource configuration “just work” with kpt.

- Existing solutions that generate configuration (e.g. from templates or DSLs) can emit kpt packages which enable the above capabilities for them.

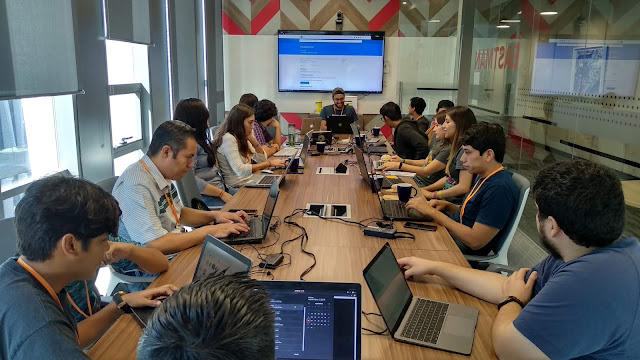

Example workflow with kpt

Now that we’ve established the benefits of using kpt for managing your packages of Kubernetes config, lets walk through how an enterprise might leverage kpt to package, share and use their best practices for Kubernetes across the organization.

First, a team within the organization may build and contribute to a repository of best practices (pictured in blue) for managing a certain type of application, for example a microservice (called “app”). As the best practices are developed within an organization, downstream teams will want to consume and modify configuration blueprints based on them. These blueprints provide a blessed starting point which adheres to organization policies and conventions.

The downstream team will get their own copy of a package by downloading it to their local filesystem (pictured in red) using

kpt pkg get. This clones the git subdirectory, recording upstream metadata so that it can be updated later.

They may decide to update the number of

replicas to fit their scaling requirements or may need to alter part of the

image field to be the image name for their app. They can directly modify the configuration using a text editor (as would be done before). Alternatively, the package may define setters, allowing fields to be set programmatically using

kpt cfg set. Setters streamline workflows by providing user and automation friendly commands to perform common operations.

Once the modifications have been made to the local filesystem, the team will commit and push their package to an app repository owned by them. From there, a CI/CD pipeline will kick off and the deployment process will begin. As a final customization before the package is deployed to the cluster, the CI/CD pipeline will inject the digest of the image it just built into the image field (using

kpt cfg set). When the image digest has been set, the CI/CD pipeline can send the manifests to the cluster using

kpt live apply. Kpt live operates like kubectl apply, providing additional functionality to prune resources deleted from the configuration and block on rollout completion (reporting status of the rollout back to the user).

Now that we’ve walked through how you might use kpt in your organization, we’d love it if you’d

try it out,

read the docs, or

contribute.

One more thing

There’s still a lot to the story we didn’t cover here. Expect to hear more from us about:

- Using kpt with GitOps

- Building custom logic with functions

- Writing effective blueprints with kpt and kustomize

By Phillip Wittrock, Software Engineer and Vic Iglesias, Cloud Solutions Architect

.png)