The latest episode of the Google AI: Release Notes podcast focuses on how Gemini was built from the ground up as a multimodal model — meaning a model that works with tex…

Hear a podcast discussion about Gemini’s multimodal capabilities.

Google Play's Indie Games Fund in Latin America is returning for its fourth year. We're committing $2 million for another 10 indie game studios, bringing our total inves…

We’re announcing a new program, the Google ML and Systems Junior Faculty Awards.

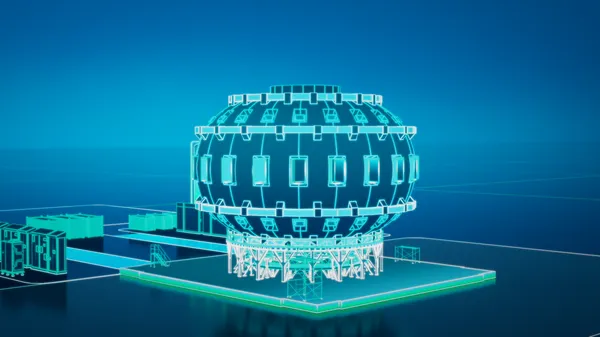

Our new agreement is designed to accelerate the development of fusion power.

An overview of Google’s 2025 Environmental Report.

We love seeing how you’re using Ask Photos in early access, like asking "suggest photos that'd make great phone backgrounds" or "what did I eat on my trip to Barcelona?"…

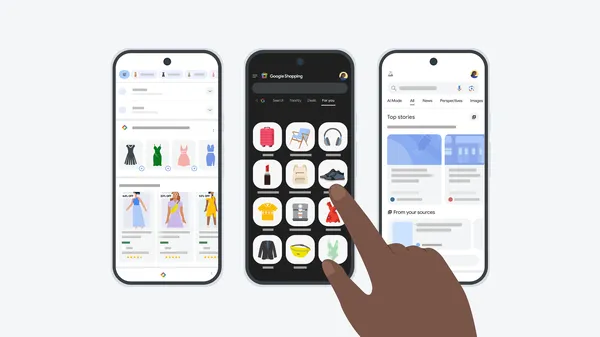

See more content from your favorite sites, pick up where you left off and more with these tips for customizing Google Search.

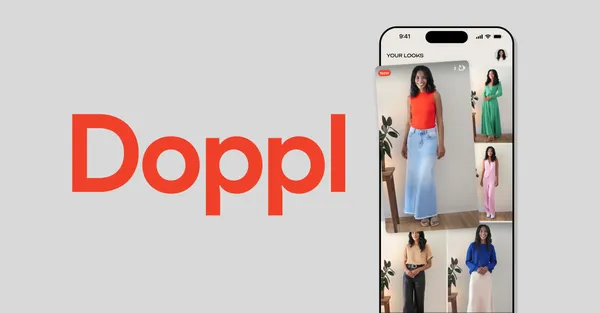

Doppl, a new Google Labs app, uses AI to create personalized outfit try-on images and videos.

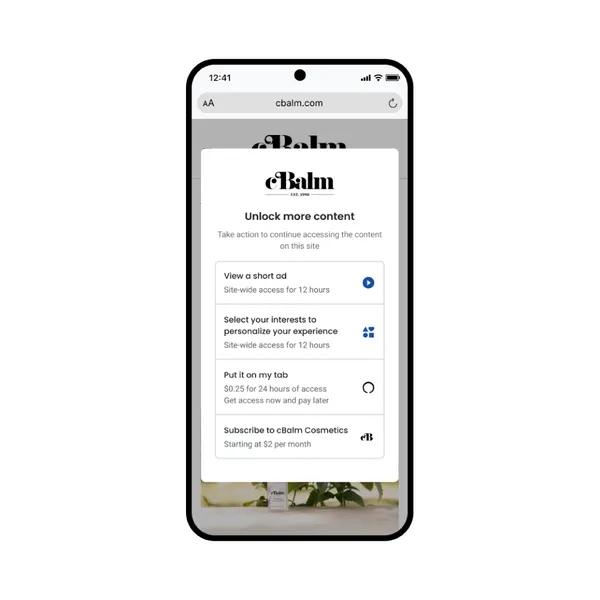

For years, our publishing partners have asked for more ways to monetize their content beyond traditional ads using Google Ad Manager. That’s why we’re launching Offerwal…

Here are five tips for making videos with Flow, Google’s new AI filmmaking tool.