Reinforcement and imitation learning methods in robotics research can enable autonomous environmental navigation and efficient object manipulation, which in turn opens up a breadth of useful real-life applications. Previous work has demonstrated how robots that learn end-to-end using deep neural networks can reliably and safely interact with the unstructured world around us by comprehending camera observations to take actions and solve tasks. However, while end-to-end learning methods can generalize and scale for complicated robot manipulation tasks, they require hundreds of thousands of real-world robot training episodes, which can be difficult to obtain. One can attempt to alleviate this constraint by using a simulation of the environment that allows virtual robots to learn more quickly and at scale, but the simulations’ inability to exactly match the real world presents a challenge commonly referred to as the sim-to-real gap. One important source of the gap is the discrepancy between the images rendered in simulation and the real robot camera observations, which causes the robot to perform poorly in the real world.

To date, work on bridging this gap has employed a technique called pixel-level domain adaptation, which translates synthetic images to realistic ones at the pixel level. One example of this technique is GraspGAN, which employs a generative adversarial network (GAN), a framework that has been very effective at image generation, to model this transformation between simulated and real images given datasets of each domain. These pseudo-real images close part of the sim-to-real gap, so policies learned in simulation execute more successfully on real robots. A limitation of their use in sim-to-real transfer, however, is that because GANs translate images at the pixel level, multi-pixel features or structures that are necessary for robot task learning may be arbitrarily modified or even removed.

To address the above limitation, and in collaboration with the Everyday Robot Project at X, we introduce two works, RL-CycleGAN and RetinaGAN, that train GANs with robot-specific consistencies, so that they do not arbitrarily modify visual features that are specifically necessary for robot task learning, and thus bridge the visual discrepancy between sim and real. We demonstrate how these consistencies preserve features critical to policy learning, eliminating the need for hand-engineered, task-specific tuning, which in turn allows this sim-to-real methodology to work flexibly across tasks, domains, and learning algorithms. With RL-CycleGAN, we describe our sim-to-real transfer methodology and demonstrate state-of-the-art performance on real-world grasping tasks trained with RL. With RetinaGAN, we extend our approach to include imitation learning with a door opening task.

RL-CycleGAN

In “RL-CycleGAN: Reinforcement Learning Aware Simulation-To-Real”, we leverage a variation of CycleGAN for sim-to-real adaptation by ensuring consistency of task-relevant features between real and simulated images. CycleGAN encourages preservation of image contents by ensuring an adapted image transformed back to the original domain is identical to the original image, which is called cycle consistency. To further encourage the adapted images to be useful for robotics, the CycleGAN is jointly trained with a reinforcement learning (RL) robot agent that ensures the robot’s actions are the same given both the original images and those after GAN-adaptation. That is, task-specific features like robot arm or graspable object locations are unaltered, but the GAN may still alter lighting or textural differences between domains that do not affect task-level decisions.
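To make the two consistencies concrete, here is a minimal sketch in plain Python. It is not the implementation from the paper: the toy one-dimensional "images", the hand-written generators (`brighten`, `darken`, `flip`), the toy Q-function `toy_q`, and the squared-difference form of `rl_consistency_loss` are all assumptions chosen for illustration.

```python
from typing import Callable, List, Sequence

Image = List[float]  # flattened toy image; a stand-in for a real image tensor


def mean_abs_diff(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean absolute difference between two equally sized sequences."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def cycle_consistency_loss(
    image: Image,
    forward: Callable[[Image], Image],
    backward: Callable[[Image], Image],
) -> float:
    """Penalize round trips (e.g. sim -> real -> sim) that alter the image."""
    return mean_abs_diff(backward(forward(image)), image)


def rl_consistency_loss(
    image: Image,
    forward: Callable[[Image], Image],
    q_function: Callable[[Image, int], float],
    actions: Sequence[int],
) -> float:
    """Penalize translations that change the agent's Q-values.

    Assumed form: mean squared difference of Q-values before and after
    translation. The loss used in the paper may differ.
    """
    adapted = forward(image)
    squared = [(q_function(image, a) - q_function(adapted, a)) ** 2 for a in actions]
    return sum(squared) / len(squared)


# Hypothetical toy agent: the brightest pixel marks the graspable object,
# and an action is the pixel index the robot reaches for.
def toy_q(image: Image, action: int) -> float:
    target = max(range(len(image)), key=lambda i: image[i])
    return -float(abs(target - action))


# Hypothetical hand-written "generators" standing in for trained networks.
def brighten(image: Image) -> Image:
    return [p + 0.25 for p in image]  # changes lighting only


def darken(image: Image) -> Image:
    return [p - 0.25 for p in image]  # inverse of brighten


def flip(image: Image) -> Image:
    return list(reversed(image))  # moves the object; flip is its own inverse


sim_image = [0.0, 0.5, 0.25, 0.0]
actions = list(range(len(sim_image)))

lighting_cycle = cycle_consistency_loss(sim_image, brighten, darken)
lighting_rl = rl_consistency_loss(sim_image, brighten, toy_q, actions)
flip_cycle = cycle_consistency_loss(sim_image, flip, flip)
flip_rl = rl_consistency_loss(sim_image, flip, toy_q, actions)
```

In this toy setup the lighting change incurs no penalty from either loss. The flip is also perfectly cycle consistent, yet it moves the object, so only the RL-consistency term penalizes it. That is the kind of failure the joint training is meant to catch.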

Evaluating RL-CycleGAN

We evaluated RL-CycleGAN on a robotic indiscriminate grasping task. Trained on 580,000 real trials and simulations adapted with RL-CycleGAN, the robot grasps objects with 94% success, surpassing the 89% success rate of the prior state-of-the-art sim-to-real method GraspGAN and the 87% mark using real-only data without simulation. With only 28,000 trials, the RL-CycleGAN method reaches 86%, comparable to the previous baselines with 20x the data. Some examples of the RL-CycleGAN output alongside the simulation images are shown below.

Figure: Comparison between simulation images of robot grasping before (left) and after RL-CycleGAN translation (right).

RetinaGAN

While RL-CycleGAN reliably transfers from sim to real for the RL domain using task awareness, a natural question arises: can we develop a more flexible sim-to-real transfer technique that applies broadly to different tasks and robot learning techniques?

In “RetinaGAN: An Object-Aware Approach to Sim-to-Real Transfer”, presented at ICRA 2021, we develop such a task-decoupled, algorithm-decoupled GAN approach to sim-to-real transfer by instead focusing on robots’ perception of objects. RetinaGAN enforces strong object-semantic awareness through perception consistency: an object detector predicts bounding box locations for all objects in all images. In an ideal sim-to-real model, we expect the object detector to predict the same box locations before and after GAN translation, as objects should not change structurally. RetinaGAN is trained toward this ideal by backpropagation, such that the perception of objects is consistent both when a) simulated images are transformed from simulation to real and then back to simulation, and when b) real images are transformed from real to simulation and then back to real. We find this object-based consistency to be more widely applicable than the task-specific consistency required by RL-CycleGAN.
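The perception consistency idea can be sketched with a toy detector. Everything below is an illustrative assumption rather than the RetinaGAN implementation: `detect_box` is a simple threshold-based stand-in for a learned object detector, the generators are hand-written functions, and the loss is a plain average of box-coordinate differences.

```python
from typing import Callable, List, Tuple

Image = List[List[float]]        # toy grayscale image as rows of pixel values
Box = Tuple[int, int, int, int]  # (row_min, col_min, row_max, col_max)


def detect_box(image: Image, threshold: float = 0.5) -> Box:
    """Toy detector: tight box around all pixels brighter than the threshold."""
    hits = [(r, c) for r, row in enumerate(image)
            for c, value in enumerate(row) if value > threshold]
    if not hits:
        return (0, 0, 0, 0)  # toy convention for "nothing detected"
    rows = [r for r, _ in hits]
    cols = [c for _, c in hits]
    return (min(rows), min(cols), max(rows), max(cols))


def box_distance(a: Box, b: Box) -> float:
    """Mean absolute difference between box coordinates."""
    return sum(abs(x - y) for x, y in zip(a, b)) / 4


def perception_consistency_loss(
    image: Image,
    forward: Callable[[Image], Image],
    backward: Callable[[Image], Image],
    detector: Callable[[Image], Box] = detect_box,
) -> float:
    """Boxes on the original, translated, and cycled images should agree."""
    translated_image = forward(image)
    original = detector(image)
    translated = detector(translated_image)
    cycled = detector(backward(translated_image))
    return (box_distance(original, translated)
            + box_distance(original, cycled)
            + box_distance(translated, cycled)) / 3


# Hypothetical generators: one changes appearance only, one moves the object.
def add_texture(image: Image) -> Image:
    return [[0.8 * v + 0.1 for v in row] for row in image]


def remove_texture(image: Image) -> Image:
    return [[(v - 0.1) / 0.8 for v in row] for row in image]


def shift_right(image: Image) -> Image:
    return [[row[-1]] + row[:-1] for row in image]


def shift_left(image: Image) -> Image:
    return [row[1:] + [row[0]] for row in image]


sim_image = [
    [0.1, 0.1, 0.1, 0.1],
    [0.1, 0.9, 0.9, 0.1],
    [0.1, 0.9, 0.9, 0.1],
    [0.1, 0.1, 0.1, 0.1],
]

appearance_loss = perception_consistency_loss(sim_image, add_texture, remove_texture)
structure_loss = perception_consistency_loss(sim_image, shift_right, shift_left)
```

Here the appearance-only translation leaves the detected box unchanged and incurs no loss. The shifting translation returns the original image after a full cycle, but the box on the intermediate image disagrees with the other two, so the loss is positive. Note that this loss needs only a detector, not a task policy, which is what makes the approach independent of the task and the learning algorithm.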

Evaluating RetinaGAN on a Real Robot

Given the goal of building a more flexible sim-to-real transfer technique, we evaluate RetinaGAN in multiple ways to understand for which tasks and under what conditions it accomplishes sim-to-real transfer.

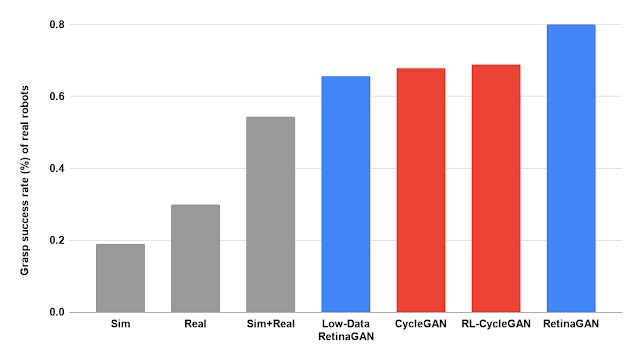

We first apply RetinaGAN to a grasping task. As demonstrated visually below, RetinaGAN emphasizes the translation of realistic object textures, shadows, and lighting, while maintaining the visual quality and saliency of the graspable objects. We couple a pre-trained RetinaGAN model with the distributed reinforcement learning method Q2-Opt to train a vision-based task model for instance grasping. On real robots, this policy grasps object instances with 80% success when trained on a hundred thousand episodes, outperforming both the prior adaptation methods RL-CycleGAN and CycleGAN (each achieving ~68%) and training without domain adaptation (grey bars below: 19% with sim data, 22% with real data, and 54% with mixed data). This gives us confidence that perception consistency is a valuable strategy for sim-to-real transfer. Further, with just 10,000 training episodes (8% of the data), the RL policy with RetinaGAN grasps with 66% success, approaching the performance of prior methods with significantly less data.

Figure: Evaluation performance of RL policies on instance grasping, trained with various datasets and sim-to-real methods. Low-Data RetinaGAN uses 8% of the real dataset.

Figure: The simulated grasping environment (left) is translated to a realistic image (right) using RetinaGAN.

Next, we pair RetinaGAN with a different learning method, behavior cloning, to open conference room doors given demonstrations by human operators. Using images from both simulated and real demonstrations, we train RetinaGAN to translate the synthetic images to look realistic, bridging the sim-to-real gap. We then train a behavior cloning model to imitate the task-solving actions of the human operators within real and RetinaGAN-adapted sim demonstrations. When this model is evaluated by having it predict the actions to take, the robot enters real conference rooms over 93% of the time, surpassing baselines of 75% and below.
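The data flow described above can be sketched as follows. This is a simplified illustration under stated assumptions, not the training code: `sim_to_real` stands in for a trained RetinaGAN generator, observations are tiny feature vectors rather than images, and the mean squared error in `behavior_cloning_loss` is an assumed choice of imitation objective.

```python
from typing import Callable, List, Sequence, Tuple

Observation = List[float]
Action = List[float]
Demo = Tuple[Observation, Action]  # one (observation, operator action) pair


def build_training_set(
    real_demos: Sequence[Demo],
    sim_demos: Sequence[Demo],
    sim_to_real: Callable[[Observation], Observation],
) -> List[Demo]:
    """Use real demos as-is; translate sim observations, keep their actions."""
    adapted = [(sim_to_real(obs), act) for obs, act in sim_demos]
    return list(real_demos) + adapted


def behavior_cloning_loss(
    policy: Callable[[Observation], Action],
    demos: Sequence[Demo],
) -> float:
    """Mean squared error between policy actions and operator actions."""
    total, count = 0.0, 0
    for obs, act in demos:
        predicted = policy(obs)
        total += sum((p - a) ** 2 for p, a in zip(predicted, act))
        count += len(act)
    return total / count


# Hypothetical toy setup: real observations are twice as "bright" as sim ones,
# and the policy below already imitates operators well on real observations.
def toy_sim_to_real(obs: Observation) -> Observation:
    return [2.0 * value for value in obs]


def toy_policy(obs: Observation) -> Action:
    return [2.0 * obs[0]]


real_demos = [([1.0], [2.0])]
sim_demos = [([0.5], [2.0])]

training_set = build_training_set(real_demos, sim_demos, toy_sim_to_real)
adapted_loss = behavior_cloning_loss(toy_policy, training_set)
unadapted_loss = behavior_cloning_loss(toy_policy, list(real_demos) + sim_demos)
```

In this toy case the adapted simulated demonstration agrees with the real one, so a single policy fits both. Mixing in the raw simulated observation leaves a residual error that the policy cannot remove without hurting its fit to real data.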

Figure: Both of the above images show the same simulation, but RetinaGAN translates simulated door opening images (left) to look more like real robot sensor data (right).

Figure: Three examples of the real robot successfully opening conference room doors using the RetinaGAN-trained behavior cloning policy.

Conclusion

This work has demonstrated how additional constraints on GANs may address the visual sim-to-real gap without requiring task-specific tuning; these approaches reach higher real robot success rates with less data collection. RL-CycleGAN translates synthetic images to realistic ones with an RL-consistency loss that automatically preserves task-relevant features. RetinaGAN is an object-aware sim-to-real adaptation technique that transfers robustly across environments and tasks, agnostic to the task learning method. Since RetinaGAN is not trained with any task-specific knowledge, we show how it can be reused for a novel object pushing task. We hope that work on the sim-to-real gap further generalizes toward solving task-agnostic robotic manipulation in unstructured environments.

Acknowledgements

Research into RL-CycleGAN was conducted by Kanishka Rao, Chris Harris, Alex Irpan, Sergey Levine, Julian Ibarz, and Mohi Khansari. Research into RetinaGAN was conducted by Daniel Ho, Kanishka Rao, Zhuo Xu, Eric Jang, Mohi Khansari, and Yunfei Bai. We’d also like to give special thanks to Ivonne Fajardo, Noah Brown, Benjamin Swanson, Christopher Paguyo, Armando Fuentes, and Sphurti More for overseeing the robot operations. We thank Paul Wohlhart, Konstantinos Bousmalis, Daniel Kappler, Alexander Herzog, Anthony Brohan, Yao Lu, Chad Richards, Vincent Vanhoucke, Mrinal Kalakrishnan, Max Braun, and others in the Robotics at Google team and the Everyday Robot Project for valuable discussions and help.