Virtual assistants are increasingly integrated into our daily routines. They can help with everything from setting alarms to giving map directions and can even assist people with disabilities to more easily manage their homes. As we use these assistants, we are also becoming more accustomed to using natural language to accomplish tasks that we once did by hand.

One of the biggest challenges in building a robust virtual assistant is identifying what a user wants and what information is needed to perform the task at hand. In the natural language processing (NLP) literature, this is mainly framed as task-oriented dialogue parsing, where a system parses a given dialogue to understand the user’s intent and carries out the operation that fulfills it. While the academic community has made progress in handling task-oriented dialogue thanks to purpose-built datasets such as MultiWOZ, TOP, and SMCalFlow, progress is limited because these datasets lack the typical speech phenomena that models need to see during training to perform well on real user input. The resulting models often underperform, leading to dissatisfaction with assistant interactions. Relevant speech patterns include revisions, disfluencies, and code-mixing, along with the use of structured context from the user’s environment, such as the user’s notes, smart home devices, and contact lists.

Consider the following dialogue that illustrates a common instance when a user needs to revise their utterance:

A dialogue with a virtual assistant that includes a user revision.

The virtual assistant misunderstands the request and attempts to call the incorrect contact. Hence, the user has to revise their utterance to fix the assistant’s mistake. To parse the last utterance correctly, the assistant would also need to interpret the special context of the user — in this case, it would need to know that the user had a contact list saved in their phone that it should reference.

Another common category of utterance that is challenging for virtual assistants is code-mixing, which occurs when the user switches from one language to another while addressing the assistant. Consider the utterance below:

A dialogue showing code-mixing between English and German.

In this example, the user switches from English to German, where “vier Uhr” means “four o’clock” in German.

In an effort to advance research in parsing such realistic and complex utterances, we are launching PRESTO, a multilingual dataset for parsing realistic task-oriented dialogues that includes roughly half a million conversations between people and virtual assistants. The dataset spans six languages and includes multiple conversational phenomena that users may encounter when using an assistant, including user revisions, disfluencies, and code-mixing. Each example also comes with surrounding structured context, such as the user’s contacts and lists. The explicit tagging of these phenomena in PRESTO allows us to create different test sets and analyze model performance on each phenomenon separately. We find that some of these phenomena are easier to model with few-shot examples, while others require much more training data.

Dataset characteristics

- Conversations by native speakers in six languages

All conversations in our dataset are provided by native speakers of six languages: English, French, German, Hindi, Japanese, and Spanish. This is in contrast to other datasets, such as MTOP and MASSIVE, that translate utterances only from English to other languages, which does not necessarily reflect the speech patterns of native speakers in non-English languages.

- Structured context

Users often rely on the information stored in their devices, such as notes, contacts, and lists, when interacting with virtual assistants. However, this context is often not accessible to the assistant, which can result in parsing errors when processing user utterances. To address this issue, PRESTO includes three types of structured context (notes, lists, and contacts) alongside the user utterances and their parses. The lists, notes, and contacts are authored by native speakers of each language during data collection. Having such context allows us to examine how this information can be used to improve the performance of task-oriented dialogue parsing models.

- User revisions

It is common for a user to revise or correct their own utterances while speaking to a virtual assistant. These revisions happen for a variety of reasons: the assistant could have made a mistake in understanding the utterance, or the user might have changed their mind mid-utterance. One such example is shown in the figure above. Other examples of revisions include canceling one’s request (“Don’t add anything.”) or correcting oneself in the same utterance (“Add bread, no, no wait, add wheat bread to my shopping list.”). Roughly 27% of all examples in PRESTO have some type of user revision that is explicitly labeled in the dataset.

- Code-mixing

As of 2022, roughly 43% of the world’s population is bilingual. As a result, many users switch languages while speaking to virtual assistants. In building PRESTO, we asked bilingual data contributors to annotate code-mixed utterances, which amounted to roughly 14% of all utterances in the dataset.

Examples of Hindi-English, Spanish-English, and German-English code-switched utterances from PRESTO.

- Disfluencies

Disfluencies, like repeated phrases or filler words, are ubiquitous in user utterances due to the spoken nature of the conversations that virtual assistants receive. Datasets such as DISFL-QA note the lack of such phenomena in existing NLP literature and work towards closing that gap. In our work, we include conversations targeting this particular phenomenon across all six languages.

Examples of utterances in English, Japanese, and French with filler words or repetitions.
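To make the characteristics above more concrete, here is a minimal sketch of how a single example with structured context and explicit phenomenon tags might be represented, and how those tags could be used to carve out a targeted test set. The class name, field names, parse string format, and sample utterances are all hypothetical, chosen for illustration only; they are not the released PRESTO schema.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class DialogueExample:
    """One annotated example. Every field name here is hypothetical."""

    utterance: str
    parse: str  # target semantic parse, in a made-up string format
    language: str
    # Structured context that accompanies the utterance.
    contacts: List[str] = field(default_factory=list)
    lists: Dict[str, List[str]] = field(default_factory=dict)
    notes: List[str] = field(default_factory=list)
    # Explicit tags for the targeted phenomena.
    has_revision: bool = False
    has_disfluency: bool = False
    has_code_mixing: bool = False


def select_by_phenomenon(examples, tag):
    """Return the examples whose boolean attribute `tag` is True."""
    return [ex for ex in examples if getattr(ex, tag)]


# Two illustrative examples (invented for this sketch).
examples = [
    DialogueExample(
        utterance="Add bread, no, no wait, add wheat bread to my shopping list.",
        parse="add_to_list(item='wheat bread', list='shopping list')",
        language="en",
        lists={"shopping list": ["milk"]},
        has_revision=True,
    ),
    DialogueExample(
        utterance="Set an alarm for, um, for seven.",
        parse="create_alarm(time='seven')",
        language="en",
        has_disfluency=True,
    ),
]

# A phenomenon-specific test set is just a filter over the tags.
revision_test_set = select_by_phenomenon(examples, "has_revision")
disfluency_test_set = select_by_phenomenon(examples, "has_disfluency")
```

Keeping the tags as plain boolean fields means one example can carry several phenomena at once and still land in each relevant test set.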

Key findings

We performed targeted experiments to focus on each of the phenomena described above. We ran mT5-based models trained using the PRESTO dataset and evaluated them using an exact match between the predicted parse and the human annotated parse. Below we show the relative performance improvements as we scale the training data on each of the targeted phenomena — user revisions, disfluencies, and code-mixing.

K-shot results on various linguistic phenomena and the full test set across increasing training data size.

The k-shot results yield the following takeaways:

- Zero-shot performance on the marked phenomenon is poor, emphasizing the need for such utterances in the dataset to improve performance.

- Disfluencies and code-mixing have much better zero-shot performance than user revisions (a difference of over 40 points in exact-match accuracy).
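The exact-match metric behind these numbers is simple to state: a prediction counts as correct only if it is identical to the human-annotated parse. The sketch below is one possible way to compute it, assuming parses are compared as strings. The function name is hypothetical, and stripping surrounding whitespace before comparing is an assumption of this sketch, not something the post specifies.

```python
def exact_match_accuracy(predictions, references):
    """Fraction of predicted parses identical to the reference parses.

    Assumption of this sketch: parses are plain strings, and only
    leading/trailing whitespace is ignored when comparing them.
    """
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0
    matches = sum(
        pred.strip() == ref.strip()
        for pred, ref in zip(predictions, references)
    )
    return matches / len(references)


# Hypothetical parses: the second prediction gets the item wrong,
# so it earns no credit even though the intent is right.
predicted = [
    "call(contact='Mom')",
    "add_to_list(item='bread', list='shopping list')",
]
annotated = [
    "call(contact='Mom')",
    "add_to_list(item='wheat bread', list='shopping list')",
]
score = exact_match_accuracy(predicted, annotated)
```

Because the metric gives no partial credit, a parse that recovers the intent but misses a single argument scores the same as a completely wrong one, which is part of why phenomena such as revisions can look so costly in zero-shot settings.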

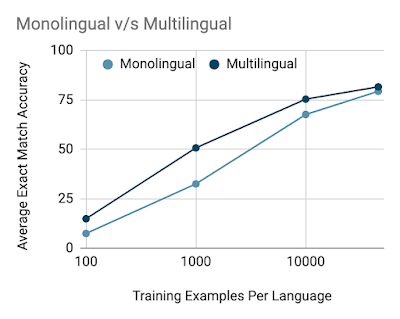

We also investigate the difference between training monolingual and multilingual models on the training set and find that with less data, multilingual models have an advantage over monolingual models, but the gap shrinks as the data size increases.

Additional details on data quality, data collection methodology, and modeling experiments can be found in our paper.

Conclusion

We created PRESTO, a multilingual dataset for parsing task-oriented dialogues. It includes realistic conversations that represent a variety of pain points users often face in their daily conversations with virtual assistants, pain points that existing datasets in the NLP community lack. PRESTO includes roughly half a million utterances contributed by native speakers of six languages: English, French, German, Hindi, Japanese, and Spanish. We created dedicated test sets to focus on each targeted phenomenon: user revisions, disfluencies, code-mixing, and structured context. Our results show that zero-shot performance is poor when the targeted phenomenon is not included in the training set, indicating a need for such utterances to improve performance. We notice that user revisions and disfluencies are easier to model with more data, whereas code-mixed utterances are harder to model, even with a high number of examples. With the release of this dataset, we open more questions than we answer, and we hope the research community makes progress on utterances that are more in line with what users face every day.

Acknowledgements

It was a privilege to collaborate on this work with Waleed Ammar, Siddharth Vashishtha, Motoki Sano, Faiz Surani, Max Chang, HyunJeong Choe, David Greene, Kyle He, Rattima Nitisaroj, Anna Trukhina, Shachi Paul, Pararth Shah, Rushin Shah, and Zhou Yu. We’d also like to thank Tom Small for the animations in this blog post. Finally, a huge thanks to all the expert linguists and data annotators for making this a reality.