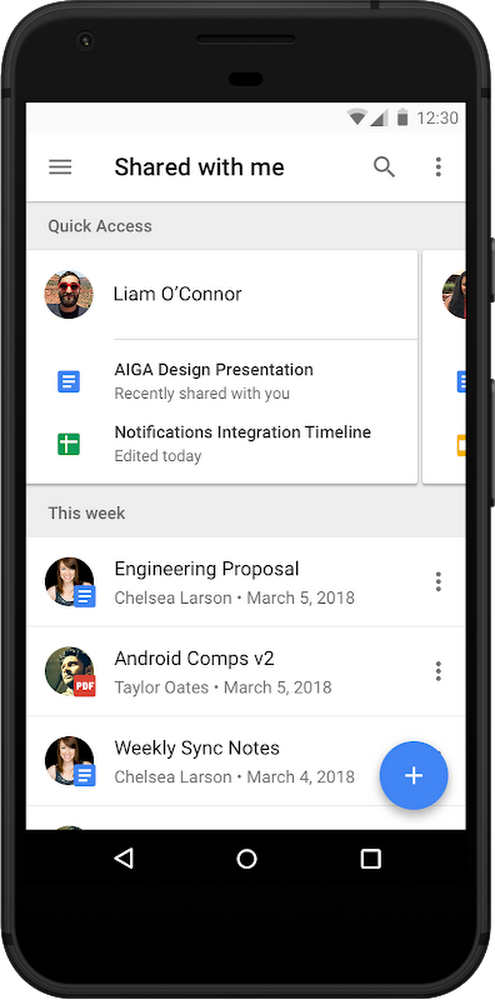

With the launch of Hire last year, we simplified the hiring process by integrating it into the tools where recruiters already spend much of their day: Gmail, Google Calendar and other G Suite apps. Recruiters tell us Hire has fundamentally improved how they work, with less context switching between apps. In fact, when we measured user activity, we found Hire reduced the time spent on common recruiting tasks, such as reviewing applications or scheduling interviews, by up to 84 percent. But we wanted to do more.

The result is our latest release of Hire. By incorporating Google AI, Hire now reduces repetitive, time-consuming tasks to one-click interactions. This means hiring teams can spend less time on logistics and more time connecting with people.

Here’s a little more on what recruiters can do with the new Hire:

Schedule interviews in seconds

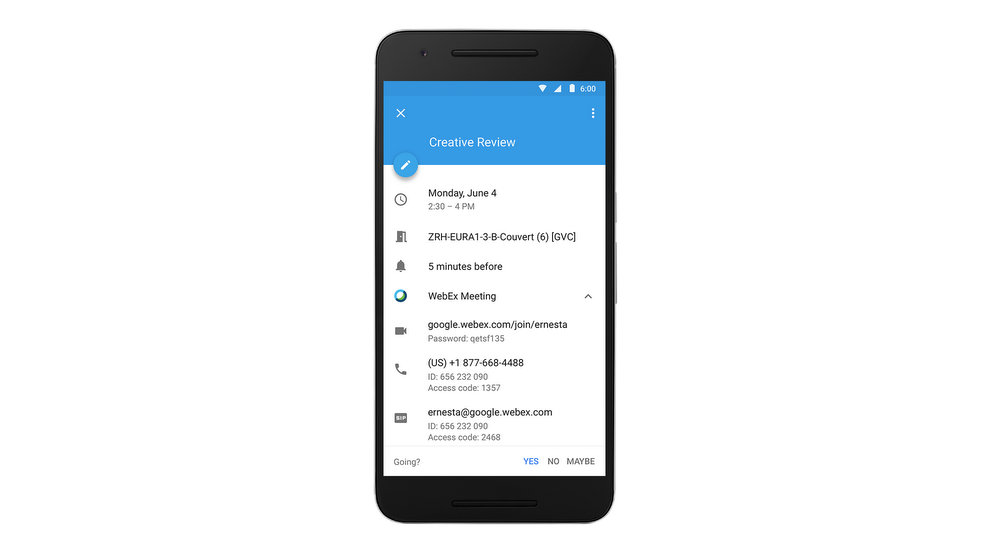

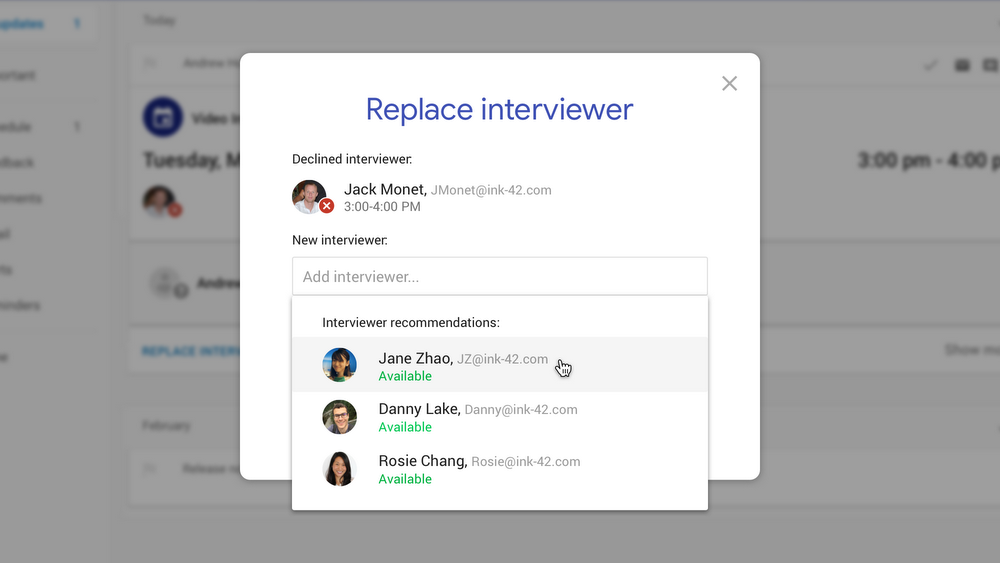

Recruiters and recruiting coordinators spend a lot of time managing interview logistics—finding available time on calendars, booking rooms, and pulling together the right information to prep interviewers. To streamline this process, Hire now uses AI to automatically suggest interviewers and ideal time slots, reducing interview scheduling to a few clicks.

If an interviewer cancels at the last minute, Hire not only alerts you but also recommends available replacement interviewers and makes it easy to invite them. This means hiring teams can invest time in preparing for interviews and building relationships with candidates instead of scheduling rooms and checking calendars.

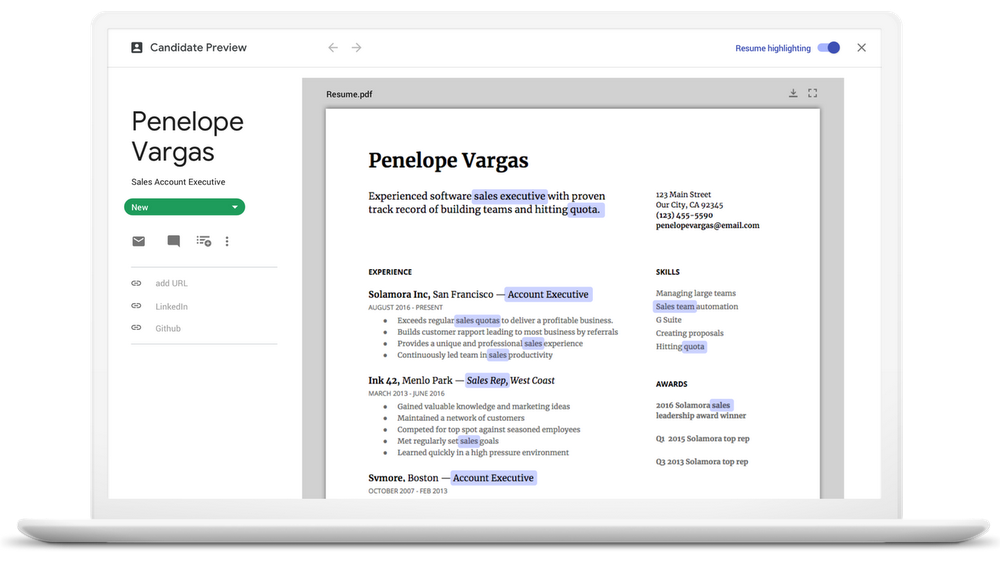

Auto-highlight resumes

A huge portion of recruiters' time is spent reviewing resumes. Watching people interact with Hire, we found that they were frequently using “Ctrl+F” to search for the right skills as they scanned through a resume—a repetitive, manual task that could easily be automated. Using AI, Hire now automatically analyzes the terms in a job description or search query and auto-highlights them on resumes, including synonyms and acronyms.
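
To make the idea concrete, here is a minimal sketch of term highlighting with synonym expansion. It is an illustration only and not how Hire is implemented: the `SYNONYMS` table, the `expand_terms` and `highlight` functions, and the `**` marker are all hypothetical, and a hand-written lookup table stands in for whatever AI-based matching the product uses.

```python
import re

# Hypothetical synonym/acronym table, hand-written for illustration only.
SYNONYMS = {
    "machine learning": ["ml"],
    "javascript": ["js"],
}


def expand_terms(terms):
    """Return the lowercased terms plus any synonyms or acronyms listed for them."""
    expanded = set()
    for term in terms:
        key = term.lower()
        expanded.add(key)
        expanded.update(SYNONYMS.get(key, []))
    return expanded


def highlight(resume_text, terms, marker="**"):
    """Wrap each whole-word, case-insensitive match of an expanded term in marker."""
    expanded = expand_terms(terms)
    if not expanded:
        return resume_text
    # Try longer terms first so multi-word phrases win over shorter ones.
    alternatives = sorted(expanded, key=len, reverse=True)
    pattern = re.compile(
        r"(?<!\w)(" + "|".join(re.escape(t) for t in alternatives) + r")(?!\w)",
        re.IGNORECASE,
    )
    return pattern.sub(lambda m: f"{marker}{m.group(1)}{marker}", resume_text)
```

For example, calling `highlight("Built ML pipelines in JavaScript.", ["machine learning", "javascript"])` marks both "ML" and "JavaScript", even though only one of them appears literally in the search terms.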

Click to call candidates

Whether they’re screening candidates, conducting interviews, or following up on offers, recruiters often have dozens of phone conversations each day. This means spending a lot of time searching for phone numbers or logging notes. Hire now simplifies every phone conversation with click-to-call functionality, and automatically logs calls so team members know who has spoken with a candidate.

“Using Hire by Google has helped streamline the processes that used to take up a lot of my time, which allows me to focus on the next steps to make sure candidates have the best experience.” – Anna McMurray, Chocolate Talent Scout, Dandelion Chocolate

There’s a huge opportunity for technology, and AI specifically, to help people work faster and therefore focus on uniquely human activities. Ultimately, that’s what Hire is all about, and the functionality we’re adding today demonstrates our commitment to helping companies focus on people and build their best teams. Visit hire.google.com to learn more.