The goal of natural language processing (NLP) is to develop computational models that can understand and generate natural language. By capturing the statistical patterns and structures of text-based natural language, language models can predict and generate coherent and meaningful sequences of words. Enabled by the increasing use of the highly successful Transformer model architecture and with training on large amounts of text (with proportionate compute and model size), large language models (LLMs) have demonstrated remarkable success in NLP tasks.

However, modeling spoken human language remains a challenging frontier. Spoken dialog systems have conventionally been built as a cascade of automatic speech recognition (ASR), natural language understanding (NLU), response generation, and text-to-speech (TTS) systems. However, to date there have been few capable end-to-end systems for the modeling of spoken language: i.e., single models that can take speech inputs and generate its continuation as speech outputs.

Today we present a new approach for spoken language modeling, called Spectron, published in “Spoken Question Answering and Speech Continuation Using Spectrogram-Powered LLM.” Spectron is the first spoken language model that is trained end-to-end to directly process spectrograms as both input and output, instead of learning discrete speech representations. Using only a pre-trained text language model, it can be fine-tuned to generate high-quality, semantically accurate spoken language. Furthermore, the proposed model improves upon direct initialization in retaining the knowledge of the original LLM as demonstrated through spoken question answering datasets.

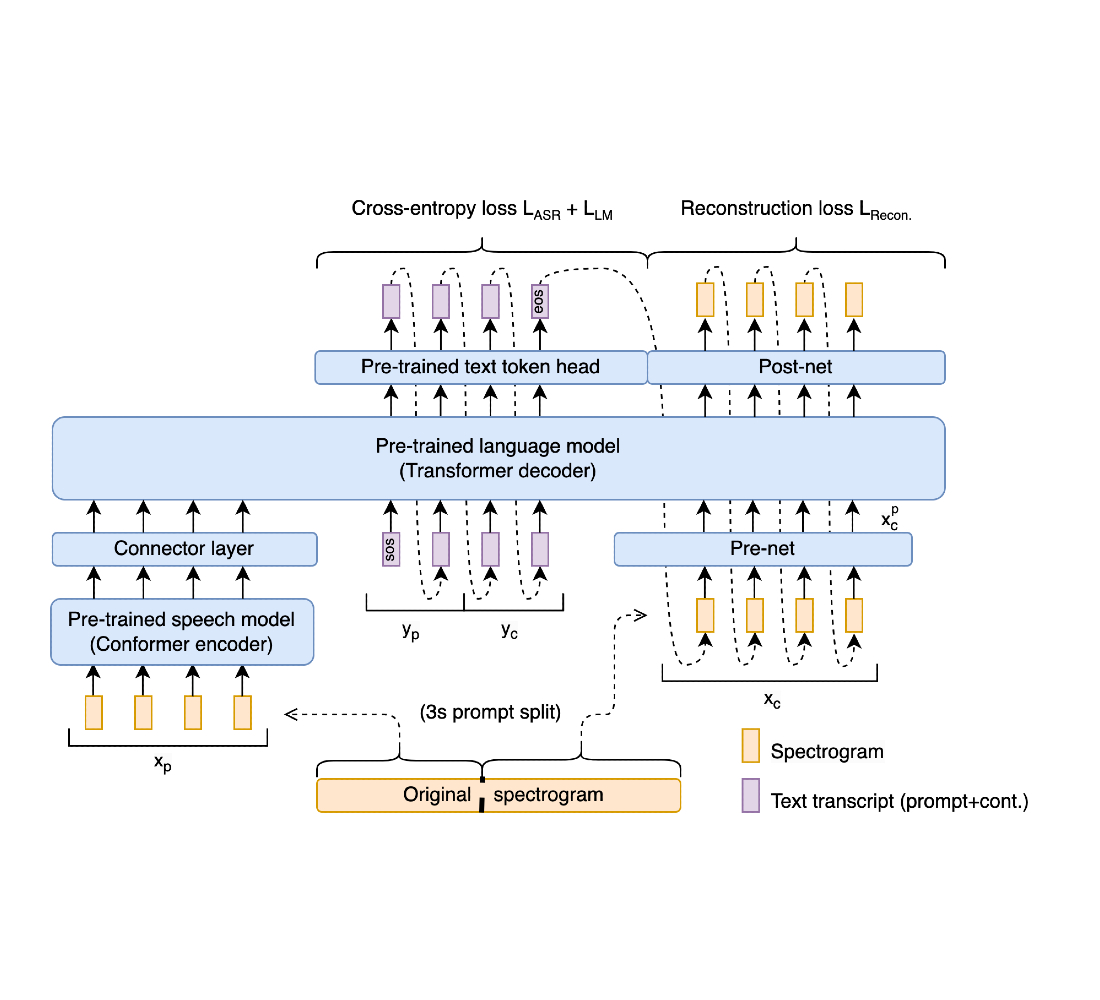

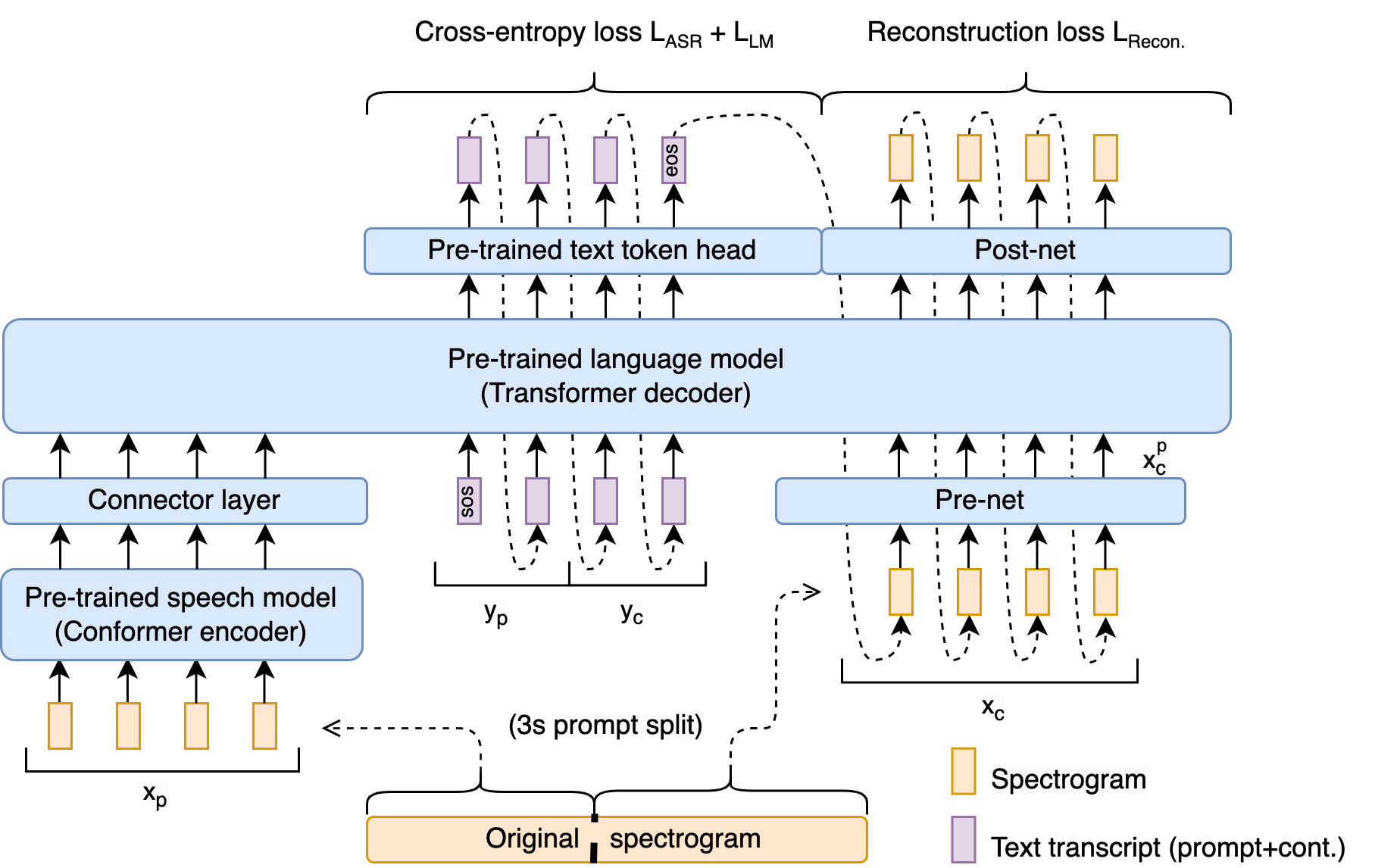

We show that a pre-trained speech encoder and a language model decoder enable end-to-end training and state-of-the-art performance without sacrificing representational fidelity. Key to this is a novel end-to-end training objective that implicitly supervises speech recognition, text continuation, and conditional speech synthesis in a joint manner. A new spectrogram regression loss also supervises the model to match the higher-order derivatives of the spectrogram in the time and frequency domain. These derivatives express information aggregated from multiple frames at once. Thus, they express rich, longer-range information about the shape of the signal. Our overall scheme is summarized in the following figure:

Spectron architecture

The architecture is initialized with a pre-trained speech encoder and a pre-trained decoder language model. The encoder is prompted with a speech utterance as input, which it encodes into continuous linguistic features. These features feed into the decoder as a prefix, and the whole encoder-decoder is optimized to jointly minimize a cross-entropy loss (for speech recognition and transcript continuation) and a novel reconstruction loss (for speech continuation). During inference, one provides a spoken speech prompt, which is encoded and then decoded to give both text and speech continuations.

Speech encoder

The speech encoder is a 600M-parameter conformer encoder pre-trained on large-scale data (12M hours). It takes the spectrogram of the source speech as input, generating a hidden representation that incorporates both linguistic and acoustic information. The input spectrogram is first subsampled using a convolutional layer and then processed by a series of conformer blocks. Each conformer block consists of a feed-forward layer, a self-attention layer, a convolution layer, and a second feed-forward layer. The outputs are passed through a projection layer to match the hidden representations to the embedding dimension of the language model.

Language model

We use a 350M or 1B parameter decoder language model (for the continuation and question-answering tasks, respectively) trained in the manner of PaLM 2. The model receives the encoded features of the prompt as a prefix. Note that this is the only connection between the speech encoder and the LM decoder; i.e., there is no cross-attention between the encoder and the decoder. Unlike most spoken language models, during training, the decoder is teacher-forced to predict the text transcription, text continuation, and speech embeddings. To convert the speech embeddings to and from spectrograms, we introduce lightweight modules pre- and post-network.

By having the same architecture decode the intermediate text and the spectrograms, we gain two benefits. First, the pre-training of the LM in the text domain allows continuation of the prompt in the text domain before synthesizing the speech. Secondly, the predicted text serves as intermediate reasoning, enhancing the quality of the synthesized speech, analogous to improvements in text-based language models when using intermediate scratchpads or chain-of-thought (CoT) reasoning.

Acoustic projection layers

To enable the language model decoder to model spectrogram frames, we employ a multi-layer perceptron “pre-net” to project the ground truth spectrogram speech continuations to the language model dimension. This pre-net compresses the spectrogram input into a lower dimension, creating a bottleneck that aids the decoding process. This bottleneck mechanism prevents the model from repetitively generating the same prediction in the decoding process. To project the LM output from the language model dimension to the spectrogram dimension, the model employs a “post-net”, which is also a multi-layer perceptron. Both pre- and post-networks are two-layer multi-layer perceptrons.

Training objective

The training methodology of Spectron uses two distinct loss functions: (i) cross-entropy loss, employed for both speech recognition and transcript continuation, and (ii) regression loss, employed for speech continuation. During training, all parameters are updated (speech encoder, projection layer, LM, pre-net, and post-net).

Audio samples

Following are examples of speech continuation and question answering from Spectron:

Speech Continuation | |

| Prompt: | |

| Continuation: | |

| Prompt: | |

| Continuation: | |

| Prompt: | |

| Continuation: | |

| Prompt: | |

| Continuation: | |

Question Answering | |

| Question: | |

| Answer: | |

| Question: | |

| Answer: | |

Performance

To empirically evaluate the performance of the proposed approach, we conducted experiments on the Libri-Light dataset. Libri-Light is a 60k hour English dataset consisting of unlabelled speech readings from LibriVox audiobooks. We utilized a frozen neural vocoder called WaveFit to convert the predicted spectrograms into raw audio. We experiment with two tasks, speech continuation and spoken question answering (QA). Speech continuation quality is tested on the LibriSpeech test set. Spoken QA is tested on the Spoken WebQuestions datasets and a new test set named LLama questions, which we created. For all experiments, we use a 3 second audio prompt as input. We compare our method against existing spoken language models: AudioLM, GSLM, TWIST and SpeechGPT. For the speech continuation task, we use the 350M parameter version of LM and the 1B version for the spoken QA task.

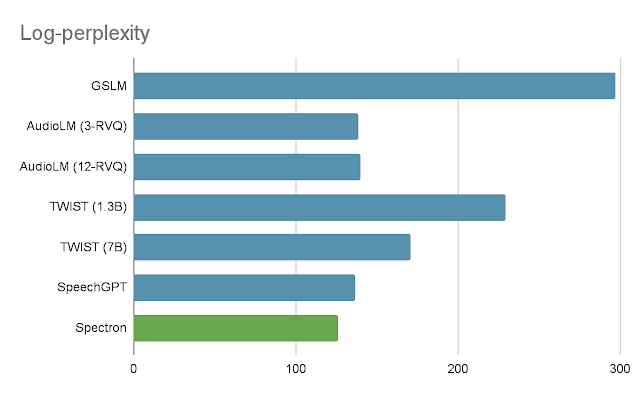

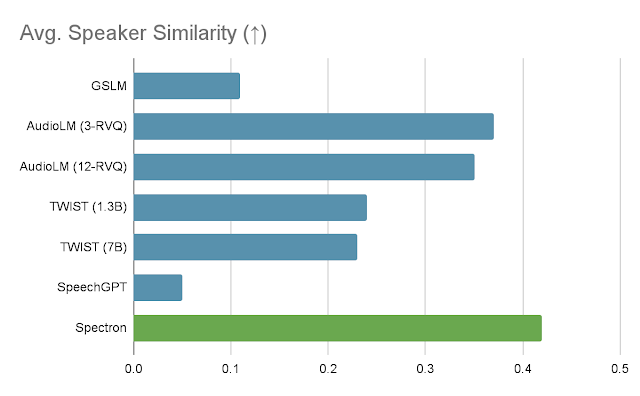

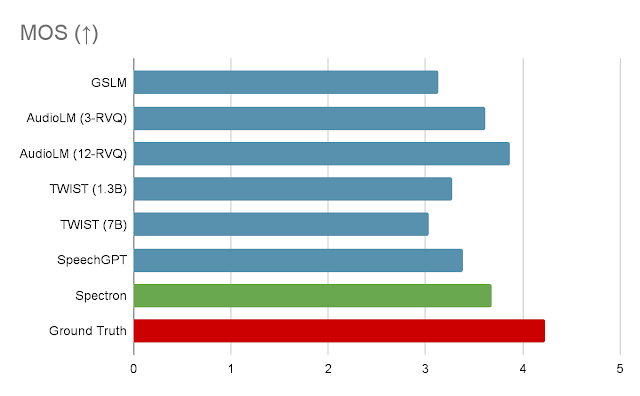

For the speech continuation task, we evaluate our method using three metrics. The first is log-perplexity, which uses an LM to evaluate the cohesion and semantic quality of the generated speech. The second is mean opinion score (MOS), which measures how natural the speech sounds to human evaluators. The third, speaker similarity, uses a speaker encoder to measure how similar the speaker in the output is to the speaker in the input. Performance in all 3 metrics can be seen in the following graphs.

|

| Log-perplexity for completions of LibriSpeech utterances given a 3-second prompt. Lower is better. |

|

| Speaker similarity between the prompt speech and the generated speech using the speaker encoder. Higher is better. |

|

| MOS given by human users on speech naturalness. Raters rate 5-scale subjective mean opinion score (MOS) ranging between 0 - 5 in naturalness given a speech utterance. Higher is better. |

As can be seen in the first graph, our method significantly outperforms GSLM and TWIST on the log-perplexity metric, and does slightly better than state-of-the-art methods AudioLM and SpeechGPT. In terms of MOS, Spectron exceeds the performance of all the other methods except for AudioLM. In terms of speaker similarity, our method outperforms all other methods.

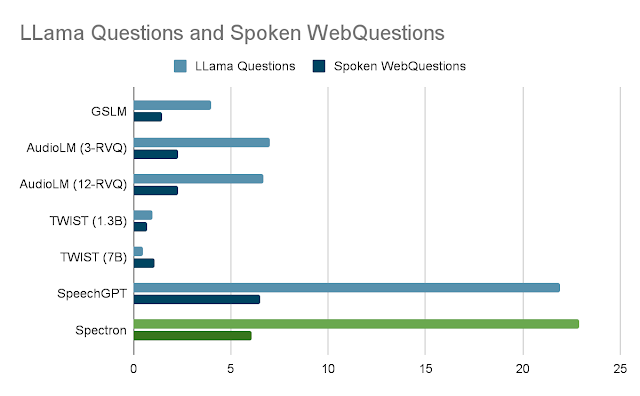

To evaluate the ability of the models to perform question answering, we use two spoken question answering datasets. The first is the LLama Questions dataset, which uses general knowledge questions in different domains generated using the LLama2 70B LLM. The second dataset is the WebQuestions dataset which is a general question answering dataset. For evaluation we use only questions that fit into the 3 second prompt length. To compute accuracy, answers are transcribed and compared to the ground truth answers in text form.

|

| Accuracy for Question Answering on the LLama Questions and Spoken WebQuestions datasets. Accuracy is computed using the ASR transcripts of spoken answers. |

First, we observe that all methods have more difficulty answering questions from the Spoken WebQuestions dataset than from the LLama questions dataset. Second, we observe that methods centered around spoken language modeling such as GSLM, AudioLM and TWIST have a completion-centric behavior rather than direct question answering which hindered their ability to perform QA. On the LLama questions dataset our method outperforms all other methods, while SpeechGPT is very close in performance. On the Spoken WebQuestions dataset, our method outperforms all other methods except for SpeechGPT, which does marginally better.

Acknowledgements

The direct contributors to this work include Eliya Nachmani, Alon Levkovitch, Julian Salazar, Chulayutsh Asawaroengchai, Soroosh Mariooryad, RJ Skerry-Ryan and Michelle Tadmor Ramanovich. We also thank Heiga Zhen, Yifan Ding, Yu Zhang, Yuma Koizumi, Neil Zeghidour, Christian Frank, Marco Tagliasacchi, Nadav Bar, Benny Schlesinger and Blaise Aguera-Arcas.