The human visual system has a remarkable ability to make sense of our 3D world from its 2D projection. Even in complex environments with multiple moving objects, people are able to maintain a feasible interpretation of the objects’ geometry and depth ordering. The field of computer vision has long studied how to achieve similar capabilities by computationally reconstructing a scene’s geometry from 2D image data, but robust reconstruction remains difficult in many cases.

A particularly challenging case occurs when both the camera and the objects in the scene are freely moving. This confuses traditional 3D reconstruction algorithms based on triangulation, which assume that the same object can be observed from at least two different viewpoints at the same time. Satisfying this assumption requires either a multi-camera array (like Google’s Jump) or a scene that remains stationary as a single camera moves through it. As a result, most existing methods either filter out moving objects (assigning them “zero” depth values) or ignore them (resulting in incorrect depth values).
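The failure mode described above can be illustrated with a minimal sketch. It assumes an idealized, rectified two-view setup in which the camera translates sideways between frames; the focal length, baseline, and point coordinates are hypothetical values chosen for illustration, not numbers from this work.

```python
def project(point_x, point_z, cam_x, focal):
    """Horizontal pixel coordinate of a point seen by a pinhole camera at cam_x."""
    return focal * (point_x - cam_x) / point_z


def triangulate_depth(u_first, u_second, baseline, focal):
    """Depth from disparity for two rectified views separated by `baseline`."""
    disparity = u_first - u_second
    return focal * baseline / disparity


FOCAL = 500.0      # focal length in pixels (hypothetical)
BASELINE = 0.2     # sideways camera motion between frames, in metres (hypothetical)
TRUE_DEPTH = 4.0   # actual distance of the observed point, in metres


def estimated_depth(object_shift):
    """Triangulated depth when the point itself moves sideways by `object_shift`."""
    u0 = project(1.0, TRUE_DEPTH, 0.0, FOCAL)
    u1 = project(1.0 + object_shift, TRUE_DEPTH, BASELINE, FOCAL)
    return triangulate_depth(u0, u1, BASELINE, FOCAL)


static_estimate = estimated_depth(0.0)   # point stays put: depth is recovered
moving_estimate = estimated_depth(0.1)   # point moves with the camera: depth is wrong
print(static_estimate, moving_estimate)
```

In this toy setup the static point is recovered at its true depth, while the point that moves in the same direction as the camera shows less disparity and is therefore placed too far away.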

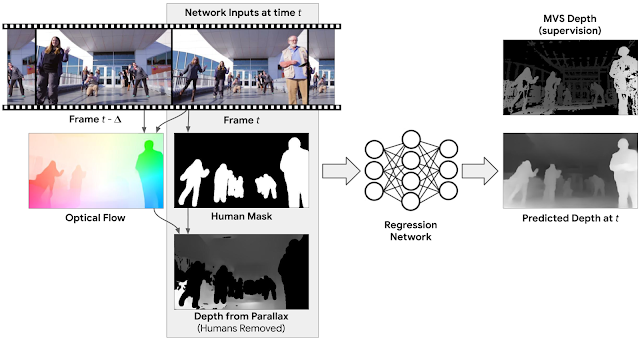

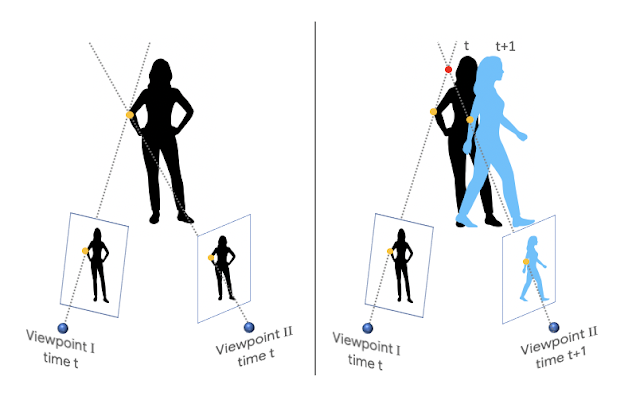

Left: The traditional stereo setup assumes that at least two viewpoints capture the scene at the same time. Right: We consider the setup where both camera and subject are moving.

Our model predicts the depth map (right; brighter=closer to the camera) from a regular video (left), where both the people in the scene and the camera are freely moving.

We train our depth-prediction model in a supervised manner, which requires videos of natural scenes, captured by moving cameras, along with accurate depth maps. The key question is where to get such data. Generating data synthetically requires realistic modeling and rendering of a wide range of scenes and natural human actions, which is challenging. Further, a model trained on such data may have difficulty generalizing to real scenes. Another approach might be to record real scenes with an RGBD sensor (e.g., Microsoft’s Kinect), but depth sensors are typically limited to indoor environments and have their own set of 3D reconstruction issues.

Instead, we make use of an existing source of data for supervision: YouTube videos in which people imitate mannequins by freezing in a wide variety of natural poses, while a hand-held camera tours the scene. Because the entire scene is stationary (only the camera is moving), triangulation-based methods such as multi-view stereo (MVS) work, and we can get accurate depth maps for the entire scene, including the people in it. We gathered approximately 2,000 such videos, spanning a wide range of realistic scenes with people naturally posing in different group configurations.

Videos of people imitating mannequins while a camera tours the scene, which we used for training. We use traditional MVS algorithms to estimate depth, which serves as supervision during training of our depth-prediction model.

The Mannequin Challenge videos provide depth supervision for a moving camera and “frozen” people, but our goal is to handle videos with a moving camera and moving people. To bridge that gap, we need to structure the input to the network carefully.

A possible approach is to infer depth separately for each frame of the video (i.e., the input to the model is just a single frame). While such a model already improves over state-of-the-art single-image methods for depth prediction, we can improve the results further by considering information from multiple frames. For example, motion parallax, i.e., the relative apparent motion of static objects between two different viewpoints, provides strong depth cues. To benefit from such information, we compute the 2D optical flow between each input frame and another frame in the video, which represents the pixel displacement between the two frames. This flow field depends on both the scene’s depth and the relative position of the camera. However, because the camera positions are known, we can factor their contribution out of the flow field, which yields an initial depth map. This initial depth is valid only for static scene regions. To handle moving people at test time, we apply a human-segmentation network to mask out human regions in the initial depth map. The full input to our network then includes the RGB image, the human mask, and the masked depth map from parallax.
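As a rough illustration of how these three inputs could be assembled, here is a minimal sketch rather than the actual implementation. It makes two simplifying assumptions that are not part of the description above: the camera motion between the two frames is a pure sideways translation (so depth follows directly from horizontal flow), and masked-out pixels are filled with 0. All function and parameter names are hypothetical.

```python
def depth_from_parallax(flow_x, focal, baseline, min_flow=1e-6):
    """Initial depth from horizontal flow, assuming a purely sideways camera move.

    Pixels with (near-)zero flow carry no parallax and are set to 0.0.
    """
    return [
        [focal * baseline / abs(f) if abs(f) > min_flow else 0.0 for f in row]
        for row in flow_x
    ]


def mask_humans(depth, human_mask):
    """Set depth to 0.0 wherever the mask marks a person (mask value 1)."""
    return [
        [0.0 if m else d for d, m in zip(depth_row, mask_row)]
        for depth_row, mask_row in zip(depth, human_mask)
    ]


def build_network_input(rgb, human_mask, flow_x, focal, baseline):
    """Bundle the three inputs named in the text: RGB, human mask, masked depth."""
    initial_depth = depth_from_parallax(flow_x, focal, baseline)
    return {
        "rgb": rgb,
        "human_mask": human_mask,
        "masked_depth": mask_humans(initial_depth, human_mask),
    }


# Toy 2x2 frame: the bottom-right pixel belongs to a (moving) person.
flow_x = [[25.0, 12.5], [50.0, 3.0]]   # horizontal flow in pixels (hypothetical)
human_mask = [[0, 0], [0, 1]]          # 1 = human, as a segmentation network might output
rgb = [[(0, 0, 0), (0, 0, 0)], [(0, 0, 0), (0, 0, 0)]]

inputs = build_network_input(rgb, human_mask, flow_x, focal=500.0, baseline=0.2)
print(inputs["masked_depth"])
```

The flow at the person's pixel would have produced a depth value, but it mixes camera motion with the person's own motion, so it is discarded and left for the network to fill in.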

Below are some examples of our depth-prediction model's results on videos, compared with recent state-of-the-art learning-based methods.

Comparison of depth prediction models on a video clip with moving cameras and people. Top: Learning-based monocular depth prediction methods (DORN; Chen et al.). Bottom: Learning-based stereo method (DeMoN), and our result.

Our predicted depth maps can be used to produce a range of 3D-aware video effects. One such effect is synthetic defocus. Below is an example, produced from an ordinary video using our depth map.

Bokeh video effect produced using our estimated depth maps. Video courtesy of Wind Walk Travel Videos.
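To give a sense of how a depth map can drive such an effect, the sketch below computes a per-pixel blur radius that grows as a pixel moves away from a chosen focal plane. This is an assumption-laden illustration, not the method used to produce the video above: it borrows the thin-lens observation that blur size scales with the difference in inverse depth, and the `strength` and `max_radius` parameters are hypothetical tuning knobs. The blur itself (e.g., a variable-radius filter) is not shown.

```python
def blur_radius(depth, focus_depth, strength, max_radius):
    """Blur radius in pixels, growing with inverse-depth distance from the focal plane.

    Depths must be positive; the result is clamped to `max_radius`.
    """
    radius = strength * abs(1.0 / depth - 1.0 / focus_depth)
    return min(radius, max_radius)


def blur_radius_map(depth_map, focus_depth, strength=20.0, max_radius=8.0):
    """Apply `blur_radius` to every pixel of a depth map given as nested lists."""
    return [
        [blur_radius(d, focus_depth, strength, max_radius) for d in row]
        for row in depth_map
    ]


# Toy 2x2 depth map in metres (hypothetical), focused on objects 2 m away.
depth_map = [[2.0, 4.0], [8.0, 1.0]]
radii = blur_radius_map(depth_map, focus_depth=2.0)
print(radii)
```

Pixels at the focus depth stay sharp, while both nearer and farther pixels receive a larger radius, up to the clamp.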

Acknowledgements

The research described in this post was done by Zhengqi Li, Tali Dekel, Forrester Cole, Richard Tucker, Noah Snavely, Ce Liu and Bill Freeman. We would like to thank Miki Rubinstein for his valuable feedback.