As a software engineer and generally analytic type, I like to craft theories for everything. Theories on how to build software, how to stay productive, how to be creative...and even how to dress well. For help with that last one, I decided to hire a personal stylist. As it turned out, I was not my stylist’s first software engineer client. “The problem with you people in tech is that you’re always looking for some sort of theory of fashion,” she told me. “But there is no formula. It’s about taste.”

Unfortunately, my stylist’s taste was a bit outside of my price range (I drew the line at a $300 hoodie). But I knew she was right. It’s true that computers (and maybe the people who program them) are better at solving problems with clear-cut answers than they are at navigating touchy-feely matters like taste. Fashion trends are not set by data-crunching CPUs. They’re made by human tastemakers, fashionistas, and their modern-day equivalents: social media influencers.

I found myself wondering if I could build an app that combined trendsetters’ sense of style with AI’s efficiency to help me out a little. I started getting fashion inspiration from Instagram influencers who matched my style. When I saw an outfit I liked, I’d try to recreate it using items I already owned. It was an effective strategy, so I set out to automate it with AI.

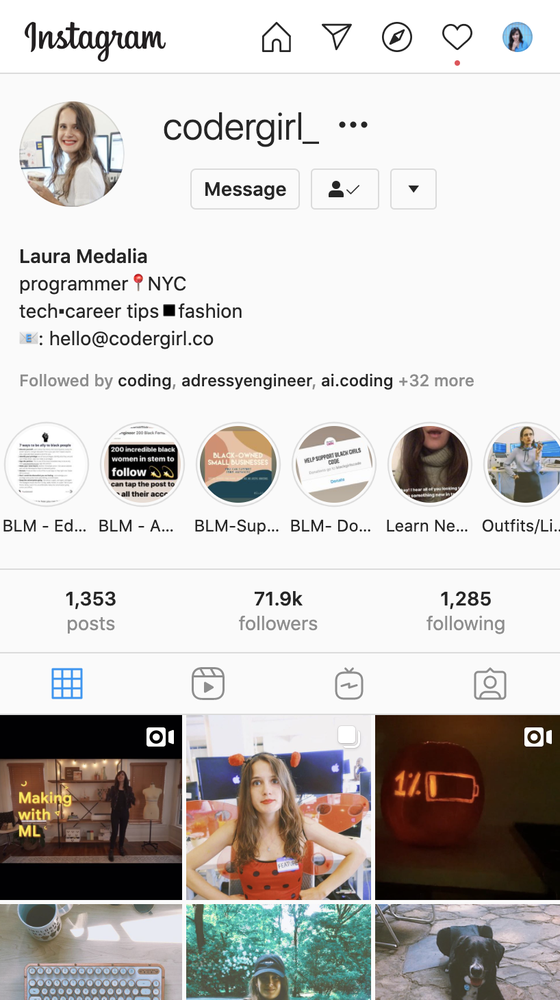

First, I partnered up with one of my favorite programmers, who just so happened to also be an Instagram influencer, Laura Medalia (or codergirl_ on Instagram). With her permission, I uploaded all of Laura’s pictures to Google Cloud to serve as my outfit inspiration.

Next, I painstakingly photographed every single item of clothing I owned, creating a digital archive of my closet.

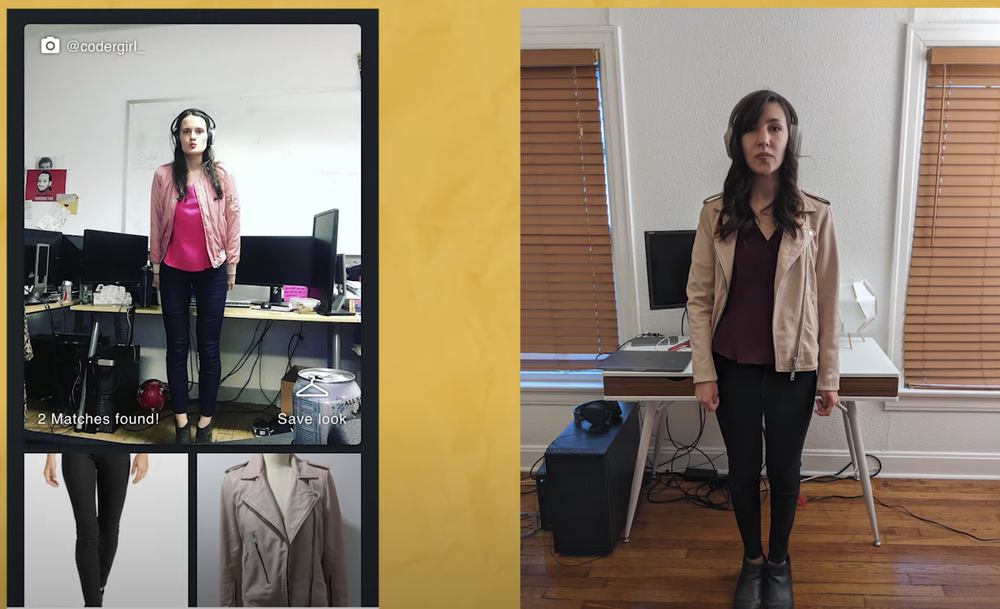

To compare my closet with Laura’s, I used the Google Cloud Vision Product Search API, which uses computer vision to identify similar products. If you’ve ever seen a “See Similar Items” tab while shopping online, it’s probably powered by similar technology. I used this API to look through all of Laura’s outfits and all of my clothes to figure out which looks I could recreate. I bundled all of the recommendations into a web app so that I could browse them on my phone, and voilà: I had my own AI-powered stylist. It looks like this:

Thanks to Laura’s sense of taste, I have lots of new ideas for styling my own wardrobe. Here’s one look I was able to recreate:

If you want to see the rest of my newfound outfits, check out the YouTube video at the top of this post, where I go into all of the details of how I built the app, or read my blog post.
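For a rough feel of the matching step in the meantime, here is a minimal Python sketch of how "which looks can I recreate?" might be decided once similarity results are in hand. It is not the code behind my app: the `Match` class, the `recreatable_outfits` function, the 0.5 threshold, and the sample data are all made up for illustration, and it assumes each item detected in an inspiration photo comes back with a list of closet candidates scored between 0 and 1.

```python
from dataclasses import dataclass
from statistics import mean


# Hypothetical shape for one similarity result. Real API responses carry
# more fields; this sketch only assumes an item name and a score in [0, 1].
@dataclass(frozen=True)
class Match:
    closet_item: str
    score: float


def best_match(matches, min_score):
    """Return the highest-scoring match at or above min_score, else None."""
    good = [m for m in matches if m.score >= min_score]
    return max(good, key=lambda m: m.score) if good else None


def recreatable_outfits(outfits, min_score=0.5):
    """Rank the inspiration photos that can be recreated from the closet.

    `outfits` maps a photo name to {detected item: [Match, ...]}.
    A photo counts as recreatable only if every detected item has a
    closet match at or above min_score (an arbitrary, assumed cutoff).
    """
    results = []
    for photo, items in outfits.items():
        picks = {name: best_match(ms, min_score) for name, ms in items.items()}
        if picks and all(p is not None for p in picks.values()):
            avg = mean(p.score for p in picks.values())
            chosen = {name: p.closet_item for name, p in picks.items()}
            results.append((photo, avg, chosen))
    # Best overall match first.
    return sorted(results, key=lambda r: r[1], reverse=True)


# Invented sample data, standing in for real similarity results.
sample = {
    "look_1.jpg": {
        "top": [Match("white tee", 0.9), Match("grey tee", 0.6)],
        "bottom": [Match("blue jeans", 0.8)],
    },
    "look_2.jpg": {"dress": [Match("black skirt", 0.3)]},
    "look_3.jpg": {"jacket": [Match("denim jacket", 0.7)]},
}

ranked = recreatable_outfits(sample)
for photo, avg, chosen in ranked:
    print(photo, round(avg, 2), chosen)
```

Requiring every item to clear the threshold is a deliberately strict choice. A more forgiving version could allow one missing piece per outfit and flag it as something to shop for.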

No, I didn’t end up with a Grand Unified Theory of Fashion—but at least I have something stylish to wear while I’m figuring it out.