Gemini 2.5 Flash and Pro are now generally available, and we’re introducing 2.5 Flash-Lite, our most cost-efficient and fastest 2.5 model yet.

We’re expanding our Gemini 2.5 family of models

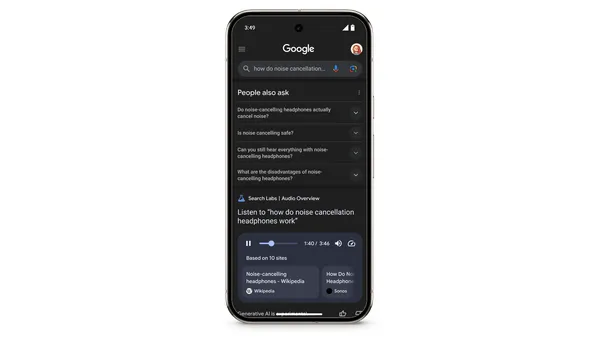

Today, we’re launching a new Search experiment in Labs – Audio Overviews, which uses our latest Gemini models to generate quick, conversational audio overviews for certa…

We partnered with Darren Aronofsky, Eliza McNitt and a team of more than 200 to make ANCESTRA.
