Over the past couple of years, the Creative Lab, in collaboration with the Handwriting Recognition team, has released a few experiments in the realm of “doodle” recognition. First, in 2016, there was Quick, Draw!, which uses a neural network to guess what you’re drawing. Since Quick, Draw! launched, we have collected over 1 billion drawings across 345 categories. In the wake of that popularity, we open sourced a collection of 50 million drawings, giving developers around the world access to the dataset and the ability to conduct research with it.

"The different ways in which people draw are like different notes in some universally human scale" - Ian Johnson, UX Engineer @ Google

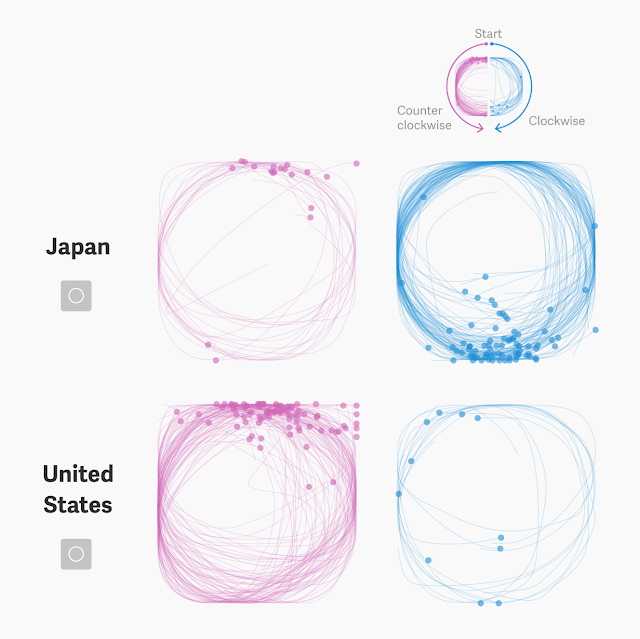

Since the initial dataset was released, it has been incredible to see how graphs, t-SNE clusters, and simple overlays of millions of these doodles have let us infer interesting human behaviors across different cultures. One example, from the Quartz study, is that 86% of Americans (from a sample of 50,000) draw their circles counterclockwise, while 80% of Japanese (from a sample of 800) draw them clockwise. Part of this pattern in behavior can be attributed to the strict stroke order in Japanese writing, from the top left to the bottom right.

It’s also interesting to see how the data looks when it’s overlaid by country, as Kyle McDonald did when he discovered that some countries draw their chairs in perspective while others draw them straight on.

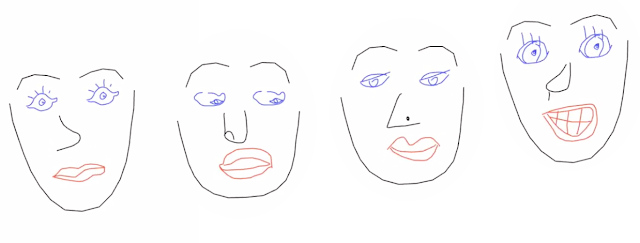

On the more fun, artistic end of the spectrum, there are some simple but clever uses of the data, like Neil Mendoza’s face tracking experiment and Deborah Schmidt’s letter collages.

See the video here of Neil Mendoza mapping Quick, Draw! facial features to your own face in front of a webcam

See the process video here of Deborah Schmidt packing QuickDraw data into letters using OpenFrameworks

The community has also released some handy tools since the data came out. One tool that we’re releasing now is a Polymer component that lets you display a doodle in your web-based project with one line of markup:

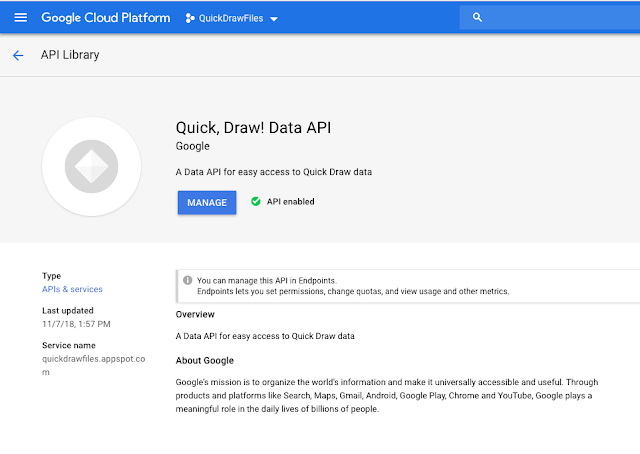

The Polymer component is coupled with a Data API that is layered over a massive file directory (50 million files) and returns a JSON object or an HTML canvas rendering for each drawing. You can start prototyping your ideas right away, without downloading all the data. We’ve also provided instructions for how to host the data and API yourself on Google Cloud Platform (for more serious projects that demand a higher request limit).
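To give a feel for what working with one of those JSON objects might look like, here is a minimal Python sketch that turns a drawing's strokes into an SVG path. It assumes the record follows the stroke layout of the public Quick, Draw! dataset (a `drawing` field holding a list of strokes, each stroke a list of x values followed by a list of y values). The helper names and the sample record are hypothetical, and the exact shape the Data API returns may differ.

```python
from typing import List, Sequence

# Assumed stroke layout: [xs, ys] or [xs, ys, ts]; any timing array is ignored.
Stroke = Sequence[Sequence[float]]


def strokes_to_svg_path(strokes: Sequence[Stroke]) -> str:
    """Build an SVG path 'd' string: one move-to per stroke, then line-tos."""
    parts: List[str] = []
    for stroke in strokes:
        xs, ys = stroke[0], stroke[1]
        for i, (x, y) in enumerate(zip(xs, ys)):
            command = "M" if i == 0 else "L"
            parts.append(f"{command}{x} {y}")
    return " ".join(parts)


def drawing_to_svg(record: dict, size: int = 256) -> str:
    """Wrap the path in a standalone SVG document (size is an assumed canvas size)."""
    d = strokes_to_svg_path(record["drawing"])
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 {size} {size}">'
        f'<path d="{d}" fill="none" stroke="black"/></svg>'
    )


# Hypothetical record, made up for illustration only.
sample = {
    "word": "zigzag",
    "recognized": True,
    "drawing": [[[0, 10, 20], [5, 15, 5]], [[30, 40], [30, 40]]],
}

if __name__ == "__main__":
    print(drawing_to_svg(sample))
```

Keeping the path builder separate from the SVG wrapper means the same stroke data could just as easily be fed to a canvas or plotting library.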

One really handy tool when hosting an API on Google Cloud is Cloud Endpoints. It allowed us to launch a demo API with a quota limit and authentication via an API key.

By defining an OpenAPI specification (here is the Quick, Draw! Data API spec) and adding these three lines to our app.yaml file, an Extensible Service Proxy (ESP) gets deployed with our API backend code (more instructions here):

    endpoints_api_service:
      name: quickdrawfiles.appspot.com
      rollout_strategy: managed

Based on the OpenAPI spec, documentation is also automatically generated for you.

We used a public Google Group as an access control list, so anyone who joins can then have the API available in their API library.

The Google Group used as an Access Control List

After joining the group, you can search for and add the Quick, Draw! Data API in your GCP project

This component and Data API will make it easier for creatives out there to manipulate the data for their own research. Looking to the future, a potential next step for the project could be to store everything in a single database for more complex queries (e.g. “give me a recognized drawing from China in March 2017”). Feedback is always welcome, and we hope this inspires even more types of projects using the data! More details on the project and the incredible research projects done using it can be found on our GitHub repo.
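Until something like that exists, a query of that kind can be approximated by scanning the data yourself. The Python sketch below filters newline-delimited JSON records by country, month, and recognition flag. It assumes the field names used in the public dataset files (`countrycode`, `recognized`, `timestamp`); the function name and sample lines are hypothetical and made up for illustration.

```python
import json
from typing import Iterable, Iterator


def matching_drawings(
    lines: Iterable[str], country: str, month: str, recognized: bool = True
) -> Iterator[dict]:
    """Yield records from NDJSON lines that match country, 'YYYY-MM' month, and flag."""
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        record = json.loads(line)
        if (
            record.get("countrycode") == country
            and record.get("recognized") is recognized
            # Assumes timestamps start with an ISO-style date, e.g. "2017-03-09 ...".
            and str(record.get("timestamp", "")).startswith(month)
        ):
            yield record


# Hypothetical sample lines; real files would be read from disk or a bucket.
sample_lines = [
    '{"key_id": "1", "countrycode": "US", "recognized": true, "timestamp": "2017-03-02 10:00:00 UTC"}',
    '{"key_id": "2", "countrycode": "CN", "recognized": false, "timestamp": "2017-03-05 11:00:00 UTC"}',
    '{"key_id": "3", "countrycode": "CN", "recognized": true, "timestamp": "2017-03-09 00:28:17 UTC"}',
    '{"key_id": "4", "countrycode": "CN", "recognized": true, "timestamp": "2017-04-01 09:00:00 UTC"}',
]

if __name__ == "__main__":
    first = next(matching_drawings(sample_lines, "CN", "2017-03"), None)
    print(first)
```

A linear scan like this is fine for a single category file, but it is exactly the kind of work an indexed database would make unnecessary.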

Editor's Note: Some may notice that this isn’t the only dataset we’ve open sourced recently! You can find many more datasets in our open source project directory.