- Stuffing keyword-rich links to your site in your articles

- Having the articles published across many different sites; alternatively, having a large number of articles on a few large, different sites

- Using or hiring article writers that aren’t knowledgeable about the topics they’re writing on

- Using the same or similar content across these articles; alternatively, duplicating the full content of articles found on your own site (in which case use of rel="canonical", in addition to rel="nofollow", is advised)


How we fought webspam – Webspam Report 2016

With 2017 well underway, we wanted to take a moment and share some of the insights we gathered in 2016 in our fight against webspam. Over the past year, we continued to find new ways of keeping spam from creating a poor quality search experience, and worked with webmasters around the world to make the web better.

We do a lot behind the scenes to make sure that users can make full use of what today’s web has to offer, bringing relevant results to everyone around the globe, while fighting webspam that could potentially harm or simply annoy users.

Webspam trends in 2016

- Website security continues to be a major source of concern. Last year we saw more hacked sites than ever - a 32% increase compared to 2015. Because of this, we continued to invest in improving and creating more resources to help webmasters know what to do when their sites get hacked.

- We continued to see that sites are compromised not just to host webspam. We saw a lot of webmasters affected by social engineering, unwanted software, and unwanted ad injectors. We took a stronger stance in Safe Browsing to protect users from deceptive download buttons, made a strong effort to protect users from repeatedly dangerous sites, and we launched more detailed help text within the Search Console Security Issues Report.

- Since more people are searching on Google using a mobile device, we saw a significant increase in spam targeting mobile users. In particular, we saw a rise in spam that redirects users, without the webmaster’s knowledge, to other sites or pages, inserted into webmaster pages using widgets or via ad units from various advertising networks.

How we fought spam in 2016

- We continued to refine our algorithms to tackle webspam. We made multiple improvements to how we rank sites, including making Penguin (one of our core ranking algorithms) work in real-time.

- The spam that we didn’t identify algorithmically was handled manually. We sent over 9 million messages to webmasters to notify them of webspam issues on their sites. We also started providing more security notifications via Google Analytics.

- We performed algorithmic and manual quality checks to ensure that websites with structured data markup meet quality standards. We took manual action on more than 10,000 sites that did not meet the quality guidelines for inclusion in search features powered by structured data.

Working with users and webmasters for a better web

- In 2016 we received over 180,000 user-submitted spam reports from around the world. After carefully checking their validity, we considered 52% of those reported sites to be spam. Thanks to all who submitted reports and contributed towards a cleaner and safer web ecosystem!

- We conducted more than 170 online office hours and live events around the world to audiences totaling over 150,000 website owners, webmasters and digital marketers.

- We continued to provide support to website owners around the world through our Webmaster Help Forums in 15 languages. Through these forums we saw over 67,000 questions, with a majority of them being identified as having a Best Response by our community of Top contributors, Rising Stars and Googlers.

- We had 119 volunteer Webmaster Top Contributors and Rising Stars, whom we invited to join us at our local Top Contributor Meetups in 11 different locations across 4 continents (Asia, Europe, North America, South America).

We think everybody deserves high quality, spam-free search results. We hope that this report provides a glimpse of what we do to make that happen.

Posted by Michal Wicinski, Search Quality Strategist and Kiyotaka Tanaka, User Education & Outreach Specialist

Source: Google Webmaster Central Blog

Similar items: Rich products feature on Google Image Search

Image Search recently launched “Similar items” on mobile web and the Android Search app. The “Similar items” feature is designed to help users find products they love in photos that inspire them on Google Image Search. Using machine vision technology, the Similar items feature identifies products in lifestyle images and displays matching products to the user. Similar items supports handbags, sunglasses, and shoes and will cover other apparel and home & garden categories in the next few months.

The Similar items feature enables users to browse and shop inspirational fashion photography and find product info about items they’re interested in. Try it out by opening results from queries like [designer handbags].

Finding price and availability information was one of the top Image Search feature requests from our users. The Similar items carousel gets millions of impressions and clicks daily from all over the world.

To make your products eligible for Similar items, make sure to add and maintain schema.org product metadata on your pages. The schema.org/Product markup helps Google find product offerings on the web and give users an at-a-glance summary of product info.

To ensure that your products are eligible to appear in Similar items:

- Ensure that the product offerings on your pages have schema.org product markup, including an image reference. Products with name, image, price and currency, and availability metadata on their host page are eligible for Similar items.

- Test your pages with Google’s Structured Data Testing Tool to verify that the product markup is formatted correctly

- See your images on image search by issuing the query “site:yourdomain.com.” For results with valid product markup, you may see product information appear once you tap on the images from your site. It can take up to a week for Googlebot to recrawl your website.
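As a rough illustration of the markup described above, the sketch below builds a schema.org/Product description with the four pieces of metadata the list names (name, image, price and currency, availability). It assumes JSON-LD as the serialization, which is one of the formats schema.org markup can be written in; the post itself does not prescribe a format. The function names and all product values are made up for the example.

```python
import json


def product_jsonld(name, image_url, price, currency, availability):
    """Build a schema.org/Product description as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "image": image_url,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
            # schema.org expresses availability as an ItemAvailability URL.
            "availability": f"https://schema.org/{availability}",
        },
    }


def as_script_tag(data):
    """Wrap the JSON-LD in the script element that goes into the page's HTML."""
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


if __name__ == "__main__":
    # Hypothetical product; every value here is illustrative only.
    markup = product_jsonld(
        name="Example leather handbag",
        image_url="https://www.example.com/images/handbag.jpg",
        price="129.00",
        currency="USD",
        availability="InStock",
    )
    print(as_script_tag(markup))
```

The generated script element would still need to be checked with the Structured Data Testing Tool, as the list above recommends.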

Right now, Similar items is available on mobile browsers and the Android Google Search App globally, and we plan to expand to more platforms in 2017.

If you have questions, find us in the dedicated Structured data section of our forum, on Twitter, or on Google+. To prevent your images from showing in Similar items, webmasters can opt-out of Google Image Search.

We’re excited to help users find your products on the web by showcasing buyable items. Thanks for partnering with us to make the web more shoppable!


Updates to the Google Safe Browsing’s Site Status Tool

Google Safe Browsing gives users tools to help protect themselves from web-based threats like malware, unwanted software, and social engineering. We are best known for our warnings, which users see when they attempt to navigate to dangerous sites or download dangerous files. We also provide other tools, like the Site Status Tool, where people can check the current safety status of a web page (without having to visit it).

We host this tool within Google’s Safe Browsing Transparency Report. As with other sections in Google’s Transparency Report, we make this data available to give the public more visibility into the security and health of the online ecosystem. Users of the Site Status Tool input a webpage (as a URL, website, or domain) into the tool, and the most recent results of the Safe Browsing analysis for that webpage are returned, plus references to troubleshooting help and educational materials.

We’ve just launched a new version of the Site Status Tool that provides simpler, clearer results and is better designed for the primary users of the page: people who are visiting the tool from a Safe Browsing warning they’ve received, or doing casual research on Google’s malware and phishing detection. The tool now features a cleaner UI, easier-to-interpret language, and more precise results. We’ve also moved some of the more technical data on associated ASes (autonomous systems) over to the malware dashboard section of the report.

While the interface has been streamlined, additional diagnostic information is not gone: researchers who wish to find more details can drill down elsewhere in Safe Browsing’s Transparency Report, while site owners can find additional diagnostic information in Search Console. One of the goals of the Transparency Report is to shed light on complex policy and security issues, so we hope the design adjustments will indeed provide our users with additional clarity.


#NoHacked: A year in review

We wanted to share with you a summary of our 2016 work as we continue our #NoHacked campaign. Let’s start with some trends on hacked sites from the past year.

State of Website Security in 2016

First off, some unfortunate news. We’ve seen an increase in the number of hacked sites by approximately 32% in 2016 compared to 2015. We don’t expect this trend to slow down. As hackers get more aggressive and more sites become outdated, hackers will continue to capitalize by infecting more sites.

On the bright side, 84% of webmasters who do apply for reconsideration are successful in cleaning their sites. However, 61% of webmasters who were hacked never received a notification from Google that their site was infected because their sites weren't verified in Search Console. Remember to register for Search Console if you own or manage a site. It’s the primary channel that Google uses to communicate site health alerts.

More Help for Hacked Webmasters

We’ve been listening to your feedback to better understand how we can help webmasters with security issues. One of the top requests was easier-to-understand documentation about hacked sites. As a result, we’ve been hard at work to make our documentation more useful.

First, we created new documentation to give webmasters more context when their site has been compromised. Here is a list of the new help documentation:

- Top ways websites get hacked by spammers

- Glossary for Hacked Sites

- FAQs for Hacked Sites

- How do I know if my site is hacked?

Gibberish Hack: The gibberish hack automatically creates many pages with nonsensical sentences filled with keywords on the target site. Hackers do this so the hacked pages show up in Google Search. Then, when people try to visit these pages, they’ll be redirected to an unrelated page, like a porn site. Learn more on how to fix this type of hack.

Japanese Keywords Hack: The Japanese keywords hack typically creates new pages with Japanese text on the target site in randomly generated directory names. These pages are monetized using affiliate links to stores selling fake brand merchandise and then shown in Google search. Sometimes the accounts of the hackers get added in Search Console as site owners. Learn more on how to fix this type of hack.

Cloaked Keywords Hack: The cloaked keywords and link hack automatically creates many pages with nonsensical sentences, links, and images. These pages sometimes contain basic template elements from the original site, so at first glance, the pages might look like normal parts of the target site until you read the content. In this type of attack, hackers usually use cloaking techniques to hide the malicious content and make the injected page appear as part of the original site or a 404 error page. Learn more on how to fix this type of hack.

Prevention is Key

As always it’s best to take a preventative approach and secure your site rather than dealing with the aftermath. Remember a chain is only as strong as its weakest link. You can read more about how to identify vulnerabilities on your site in our hacked help guide. We also recommend staying up-to-date on releases and announcements from your Content Management System (CMS) providers and software/hardware vendors.

Looking Forward

Hacking behavior is constantly evolving, and research allows us to stay up to date on and combat the latest trends. You can learn about our latest research publications in the information security research site. Highlighted below are a few studies specific to website compromises:

- Cloak of Visibility: Detecting When Machines Browse a Different Web

- Investigating Commercial Pay-Per-Install and the Distribution of Unwanted Software

- Users Really Do Plug in USB Drives They Find

- Ad Injection at Scale: Assessing Deceptive Advertisement Modifications


Closing down for a day

Even in today's "always-on" world, sometimes businesses want to take a break. There are times when even their online presence needs to be paused. This blog post covers some of the available options so that a site's search presence isn't affected.

Option: Block cart functionality

If a site only needs to block users from buying things, the simplest approach is to disable that specific functionality. In most cases, shopping cart pages can either be blocked from crawling through the robots.txt file, or blocked from indexing with a robots meta tag. Since search engines either won't see or index that content, you can communicate this to users in an appropriate way. For example, you may disable the link to the cart, add a relevant message, or display an informational page instead of the cart.
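To make the robots.txt route concrete, here is a minimal sketch that checks whether a set of rules keeps crawlers away from cart pages while leaving product pages crawlable. The /cart/ path and the example URLs are assumptions for illustration. The check uses Python's standard-library robots.txt parser, which is not Googlebot's own parser, so treat the result as a sanity check rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the /cart/ path is an assumption.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
"""


def is_crawlable(robots_txt, user_agent, url):
    """Return True if robots_txt allows user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in (
        "https://www.example.com/cart/checkout",
        "https://www.example.com/products/handbag",
    ):
        print(url, is_crawlable(ROBOTS_TXT, "Googlebot", url))
```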

Option: Always show interstitial or pop-up

If you need to block the whole site from users, be it with a "temporarily unavailable" message, informational page, or popup, the server should return a 503 HTTP result code ("Service Unavailable"). The 503 result code makes sure that Google doesn't index the temporary content that's shown to users. Without the 503 result code, the interstitial would be indexed as your website's content.

Googlebot will retry pages that return 503 for up to about a week, before treating it as a permanent error that can result in those pages being dropped from the search results. You can also include a "Retry-After" header to indicate how long the site will be unavailable. Blocking a site for longer than a week can have negative effects on the site's search results regardless of the method that you use.
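The following is a minimal sketch of a stand-in server that answers every request with a 503 status, a Retry-After header, and a short informational page. It uses only Python's standard library; the one-hour retry value, the page text, the port, and the names MaintenanceHandler and serve are assumptions chosen for the example, not recommendations from this post.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

RETRY_AFTER_SECONDS = 3600  # assumed downtime of one hour

BODY = (
    b"<html><body><h1>Temporarily unavailable</h1>"
    b"<p>We will be back soon.</p></body></html>"
)


class MaintenanceHandler(BaseHTTPRequestHandler):
    """Answer every GET and HEAD request with 503 Service Unavailable."""

    def _send_503(self, include_body):
        self.send_response(503)
        self.send_header("Retry-After", str(RETRY_AFTER_SECONDS))
        self.send_header("Content-Type", "text/html; charset=utf-8")
        self.send_header("Content-Length", str(len(BODY)))
        self.end_headers()
        if include_body:
            self.wfile.write(BODY)

    def do_GET(self):
        self._send_503(include_body=True)

    def do_HEAD(self):
        self._send_503(include_body=False)


def serve(port=8080):
    """Run the maintenance server until interrupted (port is arbitrary)."""
    HTTPServer(("", port), MaintenanceHandler).serve_forever()
```

Calling serve() starts the server; requesting any path should then return the informational page with a 503 status instead of a 200.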

Option: Switch whole website off

Turning the server off completely is another option. You might also do this if you're physically moving your server to a different data center. For this, have a temporary server available to serve a 503 HTTP result code for all URLs (with an appropriate informational page for users), and switch your DNS to point to that server during that time.

- Set your DNS TTL to a low time (such as 5 minutes) a few days in advance.

- Change the DNS to the temporary server's IP address.

- Take your main server offline once all requests go to the temporary server.

- … your server is now offline ...

- When ready, bring your main server online again.

- Switch DNS back to the main server's IP address.

- Change the DNS TTL back to normal.

We hope these options cover the common situations where you'd need to disable your website temporarily. If you have any questions, feel free to drop by our webmaster help forums!

PS If your business is active locally, make sure to reflect these closures in the opening hours for your local listings too!


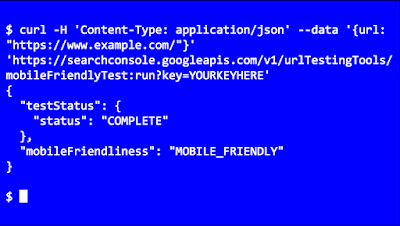

Introducing the Mobile-Friendly Test API

With so many users on mobile devices, having a mobile-friendly web is important to us all. The Mobile-Friendly Test is a great way to check individual pages manually. We're happy to announce that this test is now available via API as well.

The Mobile-Friendly Test API lets you test URLs using automated tools. For example, you could use it to monitor important pages in your website in order to prevent accidental regressions in templates that you use. The API method runs all tests, and returns the same information - including a list of the blocked URLs - as the manual test. The documentation includes simple samples to help get you started quickly.
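A monitoring script along these lines might look like the sketch below. The endpoint URL, the request body, and the response field names (mobileFriendliness, mobileFriendlyIssues, resourceIssues) are assumptions that are not stated in this post; check them against the API documentation before relying on them. The helper names build_request, summarize, and run_test are hypothetical.

```python
import json
import urllib.parse
import urllib.request

# Assumed endpoint; confirm against the API documentation.
ENDPOINT = (
    "https://searchconsole.googleapis.com/v1/"
    "urlTestingTools/mobileFriendlyTest:run"
)


def build_request(page_url, api_key):
    """Create the POST request that asks the API to test page_url."""
    query = urllib.parse.urlencode({"key": api_key})
    payload = json.dumps({"url": page_url}).encode("utf-8")
    return urllib.request.Request(
        f"{ENDPOINT}?{query}",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def summarize(result):
    """Reduce a response dictionary to a verdict, issue names, and blocked URLs."""
    return {
        "mobile_friendly": result.get("mobileFriendliness") == "MOBILE_FRIENDLY",
        "issues": [
            issue.get("rule") for issue in result.get("mobileFriendlyIssues", [])
        ],
        "blocked_urls": [
            item["blockedResource"]["url"]
            for item in result.get("resourceIssues", [])
            if "blockedResource" in item
        ],
    }


def run_test(page_url, api_key):
    """Send the request and return the summarized result (needs network access)."""
    with urllib.request.urlopen(build_request(page_url, api_key)) as response:
        return summarize(json.load(response))
```

Running run_test over a short list of important URLs after each template change is one way to catch the accidental regressions mentioned above.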

We hope this API makes it easier to check your pages for mobile-friendliness and to get any such issues resolved faster. We'd love to hear how you use the API -- leave us a comment here, and feel free to link to any code or implementation that you've set up! As always, if you have any questions, feel free to drop by our webmaster help forum.


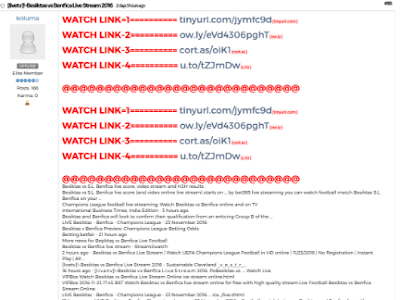

Protect your site from user generated spam

As a website owner, you might have come across some auto-generated content in comments sections or forum threads. When such content is created on your pages, not only does it disrupt those visiting your site, but it also shows some content that you may not want to be associated with your site to Google and other search engines.

In this blog post, we will give you tips to help you deal with this type of spam on your site and forum.

Some spammers abuse sites owned by others by posting deceiving content and links, in an attempt to get more traffic to their sites. Here are a few examples:

Comments and forum threads can be a really good source of information and an efficient way of engaging a site's users in discussions. This valuable content should not be buried by auto-generated keywords and links placed there by spammers.

There are many ways of securing your site’s forums and comment threads and making them unattractive to spammers:

- Keep your forum software updated and patched. Take the time to keep your software up-to-date and pay special attention to important security updates. Spammers take advantage of security issues in older versions of blogs, bulletin boards, and other content management systems.

- Add a CAPTCHA. CAPTCHAs require users to confirm that they are human beings and not automated scripts. One way to do this is to use a service like reCAPTCHA, Securimage, or JCaptcha.

- Block suspicious behavior. Many forums allow you to set time limits between posts, and you can often find plugins to look for excessive traffic from individual IP addresses or proxies and other activity more common to bots than human beings. For example, phpBB, Simple Machines, myBB, and many other forum platforms enable such configurations.

- Check your forum’s top posters on a daily basis. If a user joined recently and has an excessive amount of posts, then you probably should review their profile and make sure that their posts and threads are not spammy.

- Consider disabling some types of comments. For example, it’s a good practice to close some very old forum threads that are unlikely to get legitimate replies.

If you plan on not monitoring your forum going forward and users are no longer interacting with it, turning off posting completely may prevent spammers from abusing it.

- Make good use of moderation capabilities. Consider enabling features in moderation that require users to have a certain reputation before links can be posted or where comments with links require moderation.

If possible, change your settings so that you disallow anonymous posting and make posts from new users require approval before they're publicly visible.

Moderators, together with your friends/colleagues and some other trusted users, can help you review and approve posts while spreading the workload. Keep an eye on your forum's new users by looking at their posts and activities on your forum.

- Consider blacklisting obviously spammy terms. Block obviously inappropriate comments with a blacklist of spammy terms (e.g. illegal streaming or pharma-related terms). Add inappropriate and off-topic terms that are only used by spammers; learn from the spam posts that you often see on your forum or other forums. Built-in features or plugins can delete or mark comments as spam for you.

- Use the "nofollow" attribute for links in the comment field. This will deter spammers from targeting your site. By default, many blogging sites (such as Blogger) automatically add this attribute to any posted comments.

- Use automated systems to defend your site. Comprehensive systems like Akismet, which has plugins for many blogs and forum systems, are easy to install and do most of the work for you.
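For sites that run custom comment code rather than an off-the-shelf forum platform, three of the ideas above (time limits between posts, a list of blocked terms, and nofollow on submitted links) can be sketched in a few lines. This is a simplified illustration, not a replacement for the plugins and services mentioned in the list; the class and function names, the 30-second interval, and the sample terms are all assumptions.

```python
import html
import time


class PostThrottle:
    """Reject posts that arrive too soon after the same user's previous post."""

    def __init__(self, min_interval_seconds=30):
        self.min_interval = min_interval_seconds
        self._last_post = {}  # user id -> time of the last accepted post

    def allow(self, user_id, now=None):
        now = time.monotonic() if now is None else now
        last = self._last_post.get(user_id)
        if last is not None and now - last < self.min_interval:
            return False
        self._last_post[user_id] = now
        return True


def contains_blocked_term(text, blocked_terms):
    """Case-insensitive substring check against a list of spammy terms."""
    lowered = text.lower()
    return any(term.lower() in lowered for term in blocked_terms)


def comment_link(url, label):
    """Render a user-submitted link with rel="nofollow" and escaped values."""
    return '<a href="{}" rel="nofollow">{}</a>'.format(
        html.escape(url, quote=True), html.escape(label)
    )
```

A comment handler could call allow() first, hold the post for moderation if contains_blocked_term() matches, and render any links through comment_link().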

For detailed information about these topics, check out our Help Center document on User Generated Spam and comment spam. You can also visit our Webmaster Central Help Forum if you need any help.


What Crawl Budget Means for Googlebot

First, we'd like to emphasize that crawl budget, as described below, is not something most publishers have to worry about. If new pages tend to be crawled the same day they're published, crawl budget is not something webmasters need to focus on. Likewise, if a site has fewer than a few thousand URLs, most of the time it will be crawled efficiently.

Prioritizing what to crawl, when, and how much resource the server hosting the site can allocate to crawling is more important for bigger sites, or those that auto-generate pages based on URL parameters, for example.

Crawl rate limit

Googlebot is designed to be a good citizen of the web. Crawling is its main priority, while making sure it doesn't degrade the experience of users visiting the site. We call this the "crawl rate limit," which limits the maximum fetching rate for a given site.

Simply put, this represents the number of simultaneous parallel connections Googlebot may use to crawl the site, as well as the time it has to wait between the fetches. The crawl rate can go up and down based on a couple of factors:

- Crawl health: if the site responds really quickly for a while, the limit goes up, meaning more connections can be used to crawl. If the site slows down or responds with server errors, the limit goes down and Googlebot crawls less.

- Limit set in Search Console: website owners can reduce Googlebot's crawling of their site. Note that setting higher limits doesn't automatically increase crawling.

Crawl demand

Even if the crawl rate limit isn't reached, if there's no demand from indexing, there will be low activity from Googlebot. The two factors that play a significant role in determining crawl demand are:

- Popularity: URLs that are more popular on the Internet tend to be crawled more often to keep them fresher in our index.

- Staleness: our systems attempt to prevent URLs from becoming stale in the index.

Taking crawl rate and crawl demand together we define crawl budget as the number of URLs Googlebot can and wants to crawl.

Factors affecting crawl budget

According to our analysis, having many low-value-add URLs can negatively affect a site's crawling and indexing. We found that the low-value-add URLs fall into these categories, in order of significance:

- Faceted navigation and session identifiers

- On-site duplicate content

- Soft error pages

- Hacked pages

- Infinite spaces and proxies

- Low quality and spam content
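To get a feel for how much the first category can inflate a site's URL space, the sketch below groups URLs that differ only in parameters that do not change page content. The parameter names in IGNORED_PARAMS are hypothetical; which parameters are safe to ignore depends entirely on the site, and this is an auditing aid rather than a description of how Googlebot treats such URLs.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters that do not change page content on an imaginary site.
IGNORED_PARAMS = {"sessionid", "sid", "sort", "view"}


def normalize(url):
    """Drop ignored query parameters and sort the rest to get a comparable URL."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def group_duplicates(urls):
    """Map each normalized URL to the variants that collapse onto it."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: variants for key, variants in groups.items() if len(variants) > 1}
```

Feeding group_duplicates() the URLs from a crawl or a server log shows which pages are reachable under many addresses.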

Top questions

Crawling is the entry point for sites into Google's search results. Efficient crawling of a website helps with its indexing in Google Search.

Q: Does site speed affect my crawl budget? How about errors?

A: Making a site faster improves the users' experience while also increasing crawl rate. For Googlebot a speedy site is a sign of healthy servers, so it can get more content over the same number of connections. On the flip side, a significant number of 5xx errors or connection timeouts signal the opposite, and crawling slows down.

We recommend paying attention to the Crawl Errors report in Search Console and keeping the number of server errors low.
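Server logs offer a complementary view to the Crawl Errors report. The sketch below counts how many requests with a Googlebot user agent received a 5xx response. It assumes the common "combined" access log format; the regular expression and the function name are illustrative, and a user-agent match alone does not prove a request really came from Googlebot.

```python
import re

# Matches the request, status, and user-agent fields of the combined log format.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_error_rate(lines):
    """Return (Googlebot requests, 5xx responses, share of 5xx responses)."""
    total = errors = 0
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        total += 1
        if match.group("status").startswith("5"):
            errors += 1
    return total, errors, (errors / total if total else 0.0)
```

Passing an open log file to googlebot_error_rate() works, since the function only iterates over lines.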

Q: Is crawling a ranking factor?

A: An increased crawl rate will not necessarily lead to better positions in Search results. Google uses hundreds of signals to rank the results, and while crawling is necessary for being in the results, it's not a ranking signal.

Q: Do alternate URLs and embedded content count in the crawl budget?

A: Generally, any URL that Googlebot crawls will count towards a site's crawl budget. Alternate URLs, like AMP or hreflang, as well as embedded content, such as CSS and JavaScript, may have to be crawled and will consume a site's crawl budget. Similarly, long redirect chains may have a negative effect on crawling.

Q: Can I control Googlebot with the "crawl-delay" directive?

A: The non-standard "crawl-delay" robots.txt directive is not processed by Googlebot.

Q: Does the nofollow directive affect crawl budget?

A: It depends. Any URL that is crawled affects crawl budget, so even if your page marks a URL as nofollow it can still be crawled if another page on your site, or any page on the web, doesn't label the link as nofollow.

For information on how to optimize crawling of your site, take a look at our blogpost on optimizing crawling from 2009 that is still applicable. If you have questions, ask in the forums!

Posted by Gary, Crawling and Indexing teams