Posted by Kevin Deus, Joel Galenson, Billy Lau and Ivan Lozano, Android Security & Privacy Team

The Android platform team is committed to securing Android for every user across every device. In addition to monthly security updates to patch vulnerabilities reported to us through our Vulnerability Rewards Program (VRP), we also proactively architect Android to protect against undiscovered vulnerabilities through hardening measures such as applying compiler-based mitigations and improving sandboxing. This post focuses on the decision-making process that goes into these proactive measures: in particular, how we choose which hardening techniques to deploy and where they are deployed. As device capabilities vary widely within the Android ecosystem, these decisions must be made carefully, guided by data available to us to maximize the value to the ecosystem as a whole.

The overall approach to Android Security is multi-pronged and leverages several principles and techniques to arrive at data-guided solutions to make future exploitation more difficult. In particular, when it comes to hardening the platform, we try to answer the following questions:

- What data are available and how can they guide security decisions?

- What mitigations are available, how can they be improved, and where should they be enabled?

- What are the deployment challenges of particular mitigations and what tradeoffs are there to consider?

By shedding some light on the process we use to choose security features for Android, we hope to provide a better understanding of Android's overall approach to protecting our users.

Data-driven security decision-making

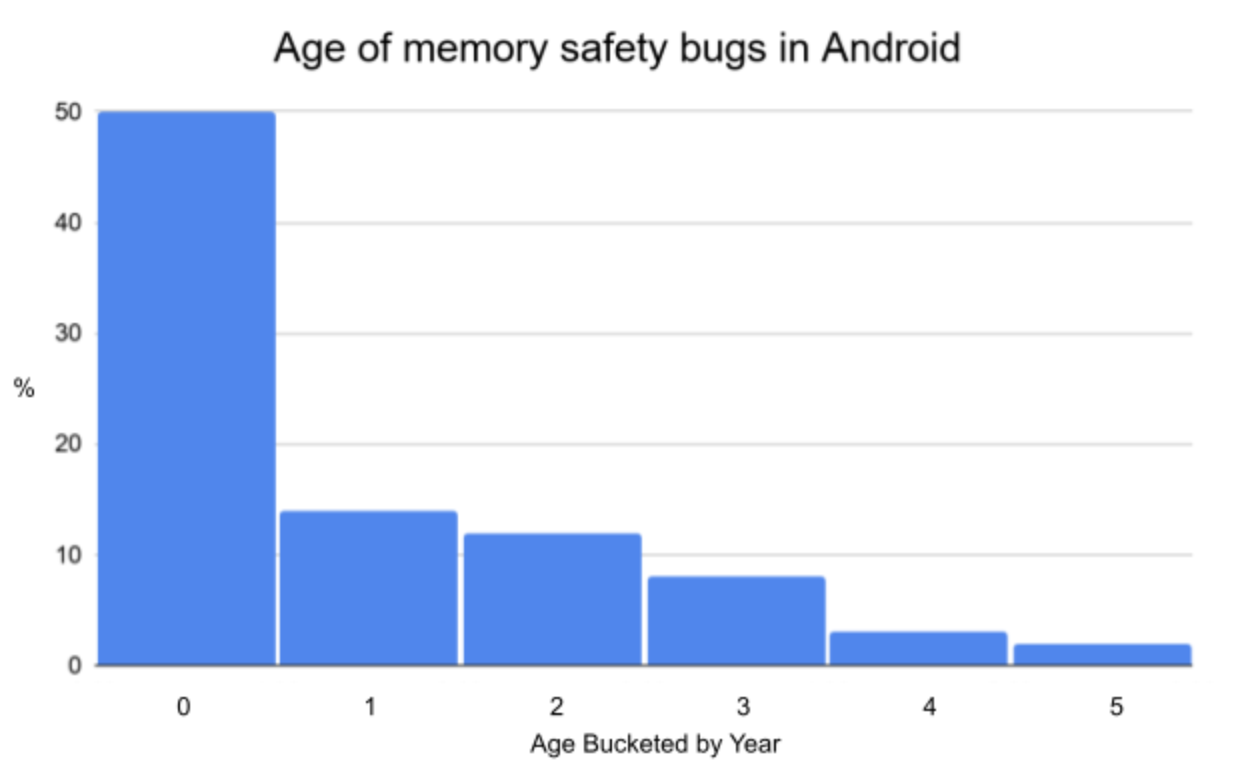

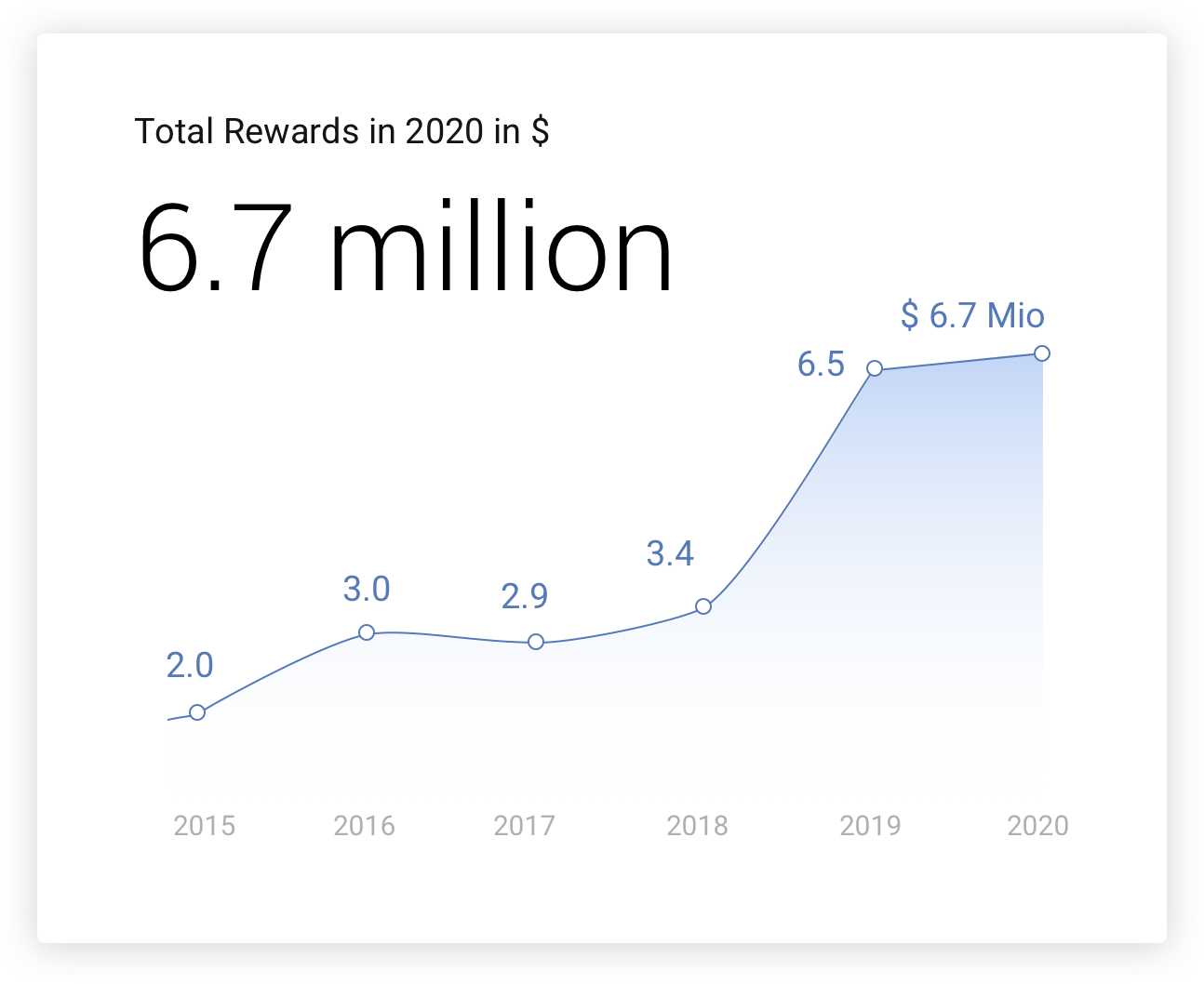

We use a variety of sources to determine what areas of the platform would benefit the most from different types of security mitigations. The Android Vulnerability Rewards Program (VRP) is one very informative source: all vulnerabilities submitted through this program are analyzed by our security engineers to determine the root cause of each vulnerability and its overall severity (based on these guidelines). Other sources are internal and external bug-reports, which identify vulnerable components and reveal coding practices that commonly lead to errors. Knowledge of problematic code patterns combined with the prevalence and severity of the vulnerabilities they cause can help inform decisions about which mitigations are likely to be the most beneficial.

Types of Critical and High severity vulnerabilities fixed in Android Security Bulletins in 2019

Relying purely on vulnerability reports is not sufficient, as the data are inherently biased: security researchers often flock to "hot" areas where other researchers have already found vulnerabilities (e.g. Stagefright), or focus on areas where readily available tools make it easier to find bugs (for instance, if a security research tool is posted to GitHub, other researchers commonly use that tool to explore deeper).

To ensure that mitigation efforts are not biased only toward areas where bugs and vulnerabilities have been reported, internal Red Teams analyze less scrutinized or more complex parts of the platform. In addition, continuous automated fuzzers run at scale on both Android virtual machines and physical devices, which helps find and fix bugs early in the development lifecycle. Any vulnerabilities uncovered through these efforts are also analyzed for root cause and severity, which informs mitigation deployment decisions.

The Android VRP rewards submissions of full exploit-chains that demonstrate a full end-to-end attack. These exploit-chains, which generally utilize multiple vulnerabilities, are very informative in demonstrating techniques that attackers use to chain vulnerabilities together to accomplish their goals. Whenever a researcher submits a full exploit chain, a team of security engineers analyzes and documents the overall approach, each link in the chain, and any innovative attack strategies used. This analysis informs which exploit mitigation strategies could be employed to prevent pivoting directly from one vulnerability to another (some examples include Address Space Layout Randomization and Control-Flow Integrity) and whether the process’s attack surface could be reduced if it has unnecessary access to resources.

There are often several ways to combine a collection of vulnerabilities into an exploit chain. A defense-in-depth approach is therefore beneficial, with the goal of reducing the usefulness of some vulnerabilities and lengthening exploit chains so that successful exploitation requires more vulnerabilities. This increases the cost for an attacker to develop a full exploit chain.

Keeping up with developments in the wider security community helps us understand the current threat landscape, what techniques are currently used for exploitation, and what future trends look like. This involves but is not limited to:

- Close collaboration with the external security research community

- Reading journals and attending conferences

- Monitoring techniques used by malware

- Following security research trends in security communities

- Participating in external efforts and projects such as KSPP, syzbot, LLVM, Rust, and more

All of these data sources provide feedback for the overall security hardening strategy, where new mitigations should be deployed, and what existing security mitigations should be improved.

Reasoning About Security Hardening

Hardening and Mitigations

Analyzing the data reveals areas where broader mitigations can eliminate entire classes of vulnerabilities. For instance, if parts of the platform show a large number of vulnerabilities due to integer overflow bugs, they are good candidates to enable Undefined Behavior Sanitizer (UBSan) mitigations such as the Integer Overflow Sanitizer. When common patterns in memory access vulnerabilities appear, they inform efforts to build hardened memory allocators (enabled by default in Android 11) and to implement mitigations against exploitation techniques (such as CFI), which provide better resilience against memory overflows or Use-After-Free vulnerabilities.
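As a rough illustration of what an integer overflow check does, the sketch below models unsigned 64-bit arithmetic in Python, whose own integers never overflow. The function names, the exception type, and the 64-bit width are assumptions chosen for illustration; in a real sanitized build the compiler inserts the checks and the process aborts instead of raising an exception.

```python
U64_MAX = 2**64 - 1  # assumed width of an unsigned 64-bit type such as size_t


class OverflowAbort(Exception):
    """Stands in for the abort that a sanitized build would trigger."""


def checked_add_u64(a: int, b: int) -> int:
    """Add two unsigned 64-bit values, refusing to wrap past the maximum."""
    result = a + b
    if result > U64_MAX:
        raise OverflowAbort(f"add-overflow: {a} + {b}")
    return result


def checked_sub_u64(a: int, b: int) -> int:
    """Subtract two unsigned 64-bit values, refusing to wrap below zero."""
    if b > a:
        raise OverflowAbort(f"sub-overflow: {a} - {b}")
    return a - b


def wrapping_sub_u64(a: int, b: int) -> int:
    """What unchecked unsigned arithmetic does instead: wrap modulo 2**64."""
    return (a - b) & U64_MAX
```

Without the check, `0 - 1` silently becomes a very large value that could later be used as a length or an index. With the check, execution stops at the faulty operation, which is how a potential memory corruption becomes a crash.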

Before discussing how the data can be used, it is important to understand how we classify our overall efforts in hardening the platform. There are a few broadly defined buckets that hardening techniques and mitigations fit into (though sometimes a particular mitigation may not fit cleanly into any single one):

- Exploit mitigations

  - Deterministic runtime prevention of vulnerabilities detects undefined or unexpected behavior and aborts execution when the behavior is detected. This turns potential memory corruption vulnerabilities into less harmful crashes. Often these mitigations can be enabled selectively and still be effective because they impact individual bugs. Examples include Integer Sanitizer and Bounds Sanitizer.

  - Exploitation technique mitigations target the techniques used to pivot from one vulnerability to another or to gain code execution. These mitigations theoretically may render some vulnerabilities useless, but more often serve to constrain the actions available to attackers seeking to exploit vulnerabilities. This increases the difficulty of exploit development in terms of time and resources. These mitigations may need to be enabled across an entire process's memory space to be effective. Examples include Address Space Layout Randomization, Control Flow Integrity (CFI), Stack Canaries and Memory Tagging.

  - Compiler transformations that change undefined behavior to defined behavior at compile-time. This prevents attackers from taking advantage of undefined behavior such as uninitialized memory. An example of this is stack initialization.

- Architectural decomposition

  - Splits larger, more privileged components into smaller pieces, each of which has fewer privileges than the original. After this decomposition, a vulnerability in one of the smaller components will have reduced severity by providing less access to the system, lengthening exploit chains, and making it harder for an attacker to gain access to sensitive data or additional privilege escalation paths.

- Sandboxing/isolation

  - Related to architectural decomposition, enforces a minimal set of permissions/capabilities that a process needs to correctly function, often through mandatory and/or discretionary access control. Like architectural decomposition, this makes vulnerabilities in these processes less valuable as there are fewer things attackers can do in that execution context, by applying the principle of least privilege. Some examples are Android Permissions, Unix Permissions, Linux Capabilities, SELinux, and Seccomp.

- Migrating to memory-safe languages

  - C and C++ do not provide memory safety the way that languages like Java, Kotlin, and Rust do. Given that the majority of security vulnerabilities reported to Android are memory safety issues, a two-pronged approach is applied: improving the safety of C/C++ while also encouraging the use of memory safe languages.
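To make the effect of architectural decomposition and least privilege concrete, here is a toy model of what an attacker gains by compromising one component before and after a split. The component names and privilege labels are hypothetical and do not describe any actual Android service layout.

```python
# Hypothetical layout: one monolithic process holding every privilege.
MONOLITH = {
    "media_server": {"network", "camera", "audio_out", "file_read", "drm_keys"},
}

# Hypothetical layout after decomposition: each piece keeps only what it needs.
DECOMPOSED = {
    "extractor": {"file_read"},
    "codec": set(),
    "camera_service": {"camera"},
    "audio_service": {"audio_out"},
    "drm_service": {"drm_keys"},
    "network_fetcher": {"network"},
}


def privileges_gained(layout, compromised):
    """Privileges an attacker holds after taking over a single component."""
    return set(layout[compromised])


def worst_case_exposure(layout):
    """Largest number of privileges exposed by any single compromise."""
    return max(len(privileges) for privileges in layout.values())
```

In this model a bug in the monolith exposes all five privileges at once, while a bug in the hypothetical `codec` piece exposes none, so an attacker needs at least one more vulnerability to reach anything sensitive.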

Enabling these mitigations

With the broad arsenal of mitigation techniques available, which of these to employ and where to apply them depends on the type of problem being solved. For instance, a monolithic process that handles a lot of untrusted data and does complex parsing would be a good candidate for all of these. The media frameworks provide an excellent historical example where an architectural decomposition enabled incrementally turning on more exploit mitigations and deprivileging.

Architectural decomposition and isolation of the Media Frameworks over time

Remotely reachable attack surfaces such as NFC, Bluetooth, WiFi, and media components have historically housed the most severe vulnerabilities, and as such these components are also prioritized for hardening. These components often contain some of the most common vulnerability root causes that are reported in the VRP, and we have recently enabled sanitizers in all of them.

Libraries and processes that enforce or sit at security boundaries, such as libbinder, and widely-used core libraries such as libui, libcore, and libcutils are good targets for exploit mitigations since these are not process-specific. However, due to performance and stability sensitivities around these core libraries, mitigations need to be supported by strong evidence of their security impact.

Finally, the kernel’s high level of privilege makes it an important target for hardening as well. Because different codebases have different characteristics and functionality, susceptibility to and prevalence of certain kinds of vulnerabilities will differ. Stability and performance of mitigations here are exceptionally important to avoid negatively impacting the user experience, and some mitigations that make sense to deploy in user space may not be applicable or effective. Therefore our considerations for which hardening strategies to employ in the kernel are based on a separate analysis of the available kernel-specific data.

This data-driven approach has led to tangible and measurable results. Starting in 2015 with Stagefright, a large number of Critical severity vulnerabilities were reported in Android's media framework. These were especially sensitive because many of these vulnerabilities were remotely reachable. This led to a large architectural decomposition effort in Android Nougat, followed by additional efforts to improve our ability to patch media vulnerabilities quickly. Thanks to these changes, in 2020 we had no internet-reachable Critical severity vulnerabilities reported to us in the media frameworks.

Deployment Considerations

Some of these mitigations provide more value than others, so it is important to focus engineering resources where they are most effective. This involves weighing the performance cost of each mitigation as well as how much work is required to deploy it and support it without negatively affecting device stability or user experience.

Performance

Understanding the performance impact of a mitigation is a critical step toward enabling it. Adding too much overhead to some components or the entire system can negatively impact user experience by reducing battery life and making the device less responsive. This is especially true for entry-level devices, which should benefit from hardening as well. We thus want to prioritize engineering efforts on impactful mitigations with acceptable overheads.

When investigating performance, important factors include not just CPU time but also memory increase, code size, battery life, and UI jank. These factors are especially important to consider for more constrained entry-level devices, to ensure that the mitigations perform well across the entire Android ecosystem.

The system-wide performance impact of a mitigation is also dependent on where that mitigation is enabled, as certain components are more performance-sensitive than others. For example, binder is one of the most used paths for interprocess communication, so even small additional overhead could significantly impact user experience on a device. On the other hand, video players only need to ensure that frames are rendered at the source framerate; if frames are rendered much faster than the rate at which they are displayed, additional overhead may be more acceptable.

Benchmarks, if available, can be extremely useful to evaluate the performance impact of a mitigation. If there are no benchmarks for a certain component, new ones should be created, for instance by calling impacted codec code to decode a media file. If this testing reveals unacceptable overhead, there are often a few options to address it:

- Selectively disable the mitigation in performance-sensitive functions identified during benchmarks. A small number of functions are often responsible for a large part of the runtime overhead, so disabling the mitigation in those functions can maximize the security benefit while minimizing the performance cost. Here is an example of this in one of the media codecs. These exempted functions must be manually reviewed for bugs to reduce the risk of disabling the mitigation there.

- Optimize the implementation of the mitigation to improve its performance. This often involves modifying the compiler. For example, our team has upstreamed optimizations to the Integer Overflow Sanitizer and the Bounds Sanitizer.

- Certain mitigations, such as the Scudo allocator’s built-in robustness against heap-based vulnerabilities, have tunable parameters that can be tweaked to improve performance.
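The first option above can be sketched as a small calculation over benchmark results. This is a minimal sketch that assumes per-function overhead has already been measured; the function names, the dictionary format, and the 80% target used in the usage note are illustrative assumptions, not part of any Android tooling.

```python
from typing import Dict, List


def relative_overhead(baseline: float, hardened: float) -> float:
    """Fractional slowdown of the hardened build relative to the baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (hardened - baseline) / baseline


def functions_to_exempt(added_cost: Dict[str, float], target_fraction: float) -> List[str]:
    """Pick the fewest functions whose combined added cost reaches
    target_fraction of the total overhead introduced by the mitigation."""
    total = sum(added_cost.values())
    chosen: List[str] = []
    covered = 0.0
    # Visit the most expensive functions first.
    for name, cost in sorted(added_cost.items(), key=lambda kv: kv[1], reverse=True):
        if total == 0 or covered / total >= target_fraction:
            break
        chosen.append(name)
        covered += cost
    return chosen
```

For example, with a made-up profile of `{"idct": 60, "parse_header": 10, "copy_frame": 30}` milliseconds of added cost, a target of 0.8 selects `idct` and `copy_frame`. Every function selected this way loses the mitigation's protection, which is why the exempted functions need manual review.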

Most of these improvements involve changes or contributions to the LLVM project. Because we work with upstream LLVM, these improvements benefit projects beyond Android. At the same time, Android benefits when others in the LLVM community contribute improvements of their own.

Deployment and Support

There is more to consider when enabling a mitigation than its security benefit and performance cost, such as the cost of short-term deployment and long-term support.

Deployment Stability Considerations

One important issue is whether a mitigation can contain false positives. For example, if the Bounds Sanitizer produces an error, there is definitely an out-of-bounds access (although it might not be exploitable). But the Integer Overflow Sanitizer can produce false positives, as many integer overflows are harmless or even perfectly expected and correct.

It is thus important to consider the impact of a mitigation on the stability of the system. Whether a crash is due to a false positive or a legitimate security issue, it still disrupts the user experience and so is undesirable. This is another reason to carefully consider which components should have which mitigations, as crashes in some components are worse than others. If a mitigation causes a crash in a media codec, the user’s video playback will be stopped, but if netd crashes during an update, the phone could be bricked. For a mitigation like Bounds Sanitizer, where false positives are not an issue, we still need to perform extensive testing to ensure the device remains stable. Off-by-one errors, for example, may not crash during normal operation, but Bounds Sanitizer would abort execution and result in instability.

Another consideration is whether it is possible to enumerate everything a mitigation might break. For example, it is not easy to contain the risk of the Integer Overflow Sanitizer without extensive testing, as it is difficult to determine which overflows are intentional/benign (and thus should be allowed) and which could lead to vulnerabilities.
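Hash functions are a common source of intentional overflow. As an illustration (the choice of hash is ours, not taken from any Android component), the 32-bit FNV-1a hash relies on its multiplication wrapping around. Python integers do not wrap, so the sketch applies a mask to model the `uint32_t` behavior that C code would rely on.

```python
U32_MASK = 0xFFFFFFFF
FNV_OFFSET_BASIS = 0x811C9DC5
FNV_PRIME = 0x01000193


def fnv1a_32(data: bytes) -> int:
    """32-bit FNV-1a hash; correctness depends on wraparound at 2**32."""
    h = FNV_OFFSET_BASIS
    for byte in data:
        h ^= byte
        # In C this multiplication overflows uint32_t and wraps by design;
        # the mask reproduces that wraparound here.
        h = (h * FNV_PRIME) & U32_MASK
    return h


def multiplication_wraps(data: bytes) -> bool:
    """Report whether any step exceeds 32 bits, i.e. whether an unsigned
    overflow check would fire while hashing this input."""
    h = FNV_OFFSET_BASIS
    for byte in data:
        h ^= byte
        product = h * FNV_PRIME
        if product > U32_MASK:
            return True
        h = product & U32_MASK
    return False
```

Hashing even a single byte wraps, so an unsigned overflow check would abort on correct code here. Functions like this have to be identified and exempted, and finding all of them is what makes extensive testing necessary.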

Support

We must consider not just issues caused by deploying mitigations but also how to support them long-term. This includes the developer time to integrate a mitigation into existing systems, enable and debug it, deploy it onto devices, and support it after launch. SELinux is a good example of this; it takes a significant amount of effort to write the policy for a new device, and even once enforcing mode is enabled, the policy must be supported for years as code changes and functionality is added or removed.

We try to make mitigations less disruptive and spread awareness of how they affect developers. This is done by making documentation available on source.android.com and by improving existing algorithms to reduce false positives. Making it easier to debug mitigations when something goes wrong reduces the developer maintenance burden that can accompany mitigations. For example, when developers found it difficult to identify UBSan errors, we enabled support for the UBSan Minimal Runtime by default in the Android build system. The minimal runtime itself was first upstreamed by others at Google specifically for this purpose. When the Integer Overflow Sanitizer crashes a program, the minimal runtime adds the following hint to the generic SIGABRT crash message:

Abort message: 'ubsan: sub-overflow'

Developers who see this message then know to enable diagnostics mode, which prints out details about the crash:

frameworks/native/services/surfaceflinger/SurfaceFlinger.cpp:2188:32: runtime error: unsigned integer overflow: 0 - 1 cannot be represented in type 'size_t' (aka 'unsigned long')

Similarly, upstream SELinux provides a tool called audit2allow that can be used to suggest rules to allow blocked behaviors:

adb logcat -d | audit2allow -p policy

#============= rmt ==============

allow rmt kmem_device:chr_file { read write };

A debugging tool does not need to be perfect to be helpful; audit2allow does not always suggest the correct options, but for developers without detailed knowledge of SELinux it provides a strong starting point.
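Because suggested rules still need human review, it can help to parse them into a structure that is easy to inspect. The sketch below handles only the simple single-line `allow` form shown above, not the full SELinux policy grammar. The `SENSITIVE_TYPES` set and the `needs_review` helper are hypothetical, introduced here for illustration.

```python
import re
from typing import FrozenSet, NamedTuple


class AllowRule(NamedTuple):
    source: str
    target: str
    tclass: str
    permissions: FrozenSet[str]


# Matches "allow <source> <target>:<class> { perm ... };" or a single bare perm.
_RULE = re.compile(
    r"^allow\s+(\w+)\s+(\w+):(\w+)\s+(?:\{\s*([^}]*?)\s*\}|(\w+))\s*;$"
)

# Hypothetical review list: target types that should never be granted blindly.
SENSITIVE_TYPES = frozenset({"kmem_device"})


def parse_allow_rule(line: str) -> AllowRule:
    """Parse one simple allow rule into its four parts."""
    match = _RULE.match(line.strip())
    if match is None:
        raise ValueError(f"not a simple allow rule: {line!r}")
    source, target, tclass, braced, single = match.groups()
    perms = braced.split() if braced is not None else [single]
    return AllowRule(source, target, tclass, frozenset(perms))


def needs_review(rule: AllowRule) -> bool:
    """Flag rules that grant access to a type on the review list."""
    return rule.target in SENSITIVE_TYPES
```

Applied to the suggestion above, `parse_allow_rule` yields source `rmt`, target `kmem_device`, class `chr_file`, and permissions `read` and `write`. A reviewer would likely question this rule rather than copy it into the policy, since it grants a process access to a kernel memory device.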

Conclusion

With every Android release, our team works hard to balance security improvements that benefit the entire ecosystem with performance and stability, drawing heavily from the data that are available to us. We hope that this sheds some light on the particular challenges involved and the overall process that leads to mitigations introduced in each Android release.

Thank you to Jeff Vander Stoep for contributions to this blog post.