Chances are, you’ve seen the work of Paul Debevec, Xueming Yu and Wan-Chun Alex Ma on the big screen—but you probably don’t know it. And that’s by design. Just this weekend, these Googlers took home an Academy Award for their face-digitizing technology, which has changed the way movies and video games use visual effects. And their goal is often to make sure you can just enjoy the characters, without thinking of them as computer-generated effects at all.

The three, alongside their former colleague Timothy Hawkins, won Scientific and Technical Achievement Awards for their work at the Institute for Creative Technologies at the University of Southern California, where they worked before heading to Google in 2016. (Debevec remains an adjunct professor there.) Along with a larger team, they created the Light Stage and its accompanying software, which capture 3D models of an actor’s face to be used for visual effects.

Actress Zoe Saldana is scanned in 2006 in Light Stage 5 at the USC Institute for Creative Technologies for her character Neytiri in "Avatar"

Here’s how it works, in a nutshell: An actor performs scenes inside a dome, which features thousands of lights that hit the actor’s face at different angles. Multiple cameras capture different poses, and software converts the footage into a 3D model. Visual effects artists can use that model to create characters that look like anything, from fantastical creatures to realistic recreations of real people.
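For a rough sense of how photos lit from different angles can be turned into shape, here is a minimal sketch of classical photometric stereo. It is a textbook technique, not the Light Stage software itself, and it assumes a matte surface, known light directions and no shadows. The function name `estimate_normals` and the sample numbers are made up for illustration.

```python
import numpy as np

def estimate_normals(intensities, light_dirs):
    """Recover surface normals and albedo from images under known lights.

    intensities: (k, n) array, brightness of n pixels under k lights.
    light_dirs:  (k, 3) array, unit vector pointing toward each light.
    Assumes a matte (Lambertian) surface: brightness = albedo * (light . normal).
    """
    # Least-squares solve of light_dirs @ g = intensities, where each
    # column of g is albedo * normal for one pixel.
    g, _, _, _ = np.linalg.lstsq(light_dirs, intensities, rcond=None)
    albedo = np.linalg.norm(g, axis=0)            # length of g is the albedo
    normals = (g / np.maximum(albedo, 1e-12)).T   # direction of g is the normal
    return normals, albedo

# Hypothetical data: one pixel, four lights, a surface tilted toward +y.
light_dirs = np.array([
    [0.0, 0.0, 1.0],
    [0.6, 0.0, 0.8],
    [0.0, 0.6, 0.8],
    [-0.6, 0.0, 0.8],
])
true_normal = np.array([0.0, 0.6, 0.8])
true_albedo = 0.5
intensities = true_albedo * (light_dirs @ true_normal).reshape(-1, 1)

normals, albedo = estimate_normals(intensities, light_dirs)
```

With clean synthetic data like this, the recovered normal and albedo should match the values used to generate the brightness readings. Real faces are shiny, translucent and self-shadowing, which is part of why a production system needs far more lights, cameras and software than this sketch suggests.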

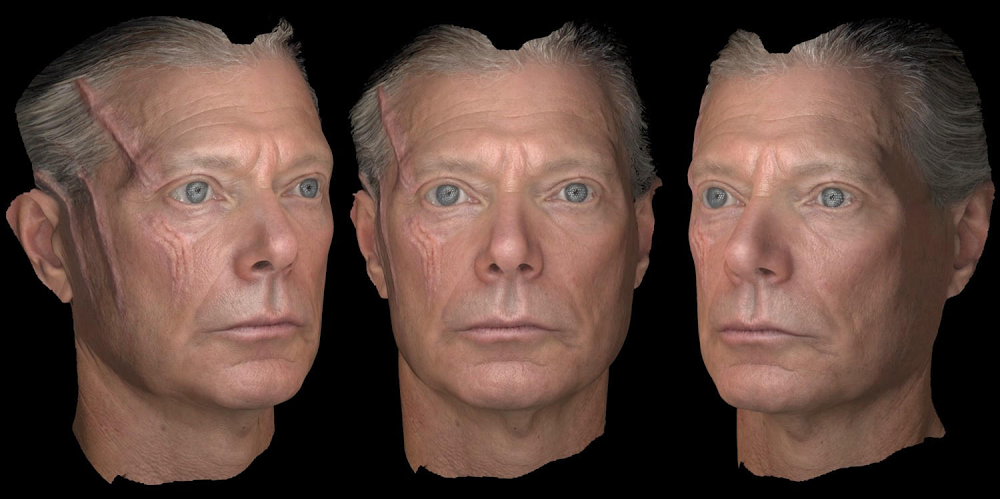

The 3D model the team scanned of Stephen Lang's face for his photoreal digital stunt double as Col. Miles Quaritch in "Avatar"

The first movie to use this facial scanning technology was 2009’s “Avatar,” for which the team performed the first facial scans of Sam Worthington and Zoe Saldana in 2006. Among many other movies, the team also worked on 2014's “Maleficent,” creating a digital double of Angelina Jolie's character by scanning the actress in her full Disney-villain makeup.

Just prior to heading to Google, they worked on “Blade Runner 2049,” which took home the Oscar for Best Visual Effects last year and brought back the character Rachael from the original “Blade Runner” movie. The new Rachael was constructed with facial features from the original actress, Sean Young, and another actress, Loren Peta, to make the character appear to be the same age she was in the first film.

Nowadays, Debevec, Yu and Ma are applying the technology they developed for the film industry to the fields of augmented reality (AR) and virtual reality (VR). “We try to bring our knowledge and background to try to make better Google products,” Ma says. “We’re working on improving the realism of VR and AR experiences.”

One of their projects involves a new Light Stage that can capture 3D models of both faces and bodies in motion, to create more realistic visual effects on screen, whether that screen is at a movie theater or on your smartphone. “We’re trying to make it so your cell phone has the same digital effects pipeline that movie studios employ scores of artists to do,” Debevec says. “It blows me away what we’re even trying to do, not to mention we’re having some success with it.”

Ma, Hawkins, Yu and Debevec on stage at the Academy Sci-Tech Awards.

They picked up their Academy Awards, which come in the form of certificates emblazoned with the gold Oscar logo, at a scientific and technical ceremony two weeks before the televised Oscars. One of the final films the team worked on, “Ready Player One,” is nominated for the Best Visual Effects Oscar this year, and the team says they’re especially happy to help others take home statuettes. Google also partners with the Academy Software Foundation, which helps the movie industry use open-source software more effectively.

During the Scientific and Technical Awards presentation, the three brought fellow Googlers who work on Augmented Perception and Google Cloud to share a table with them and celebrate. They accepted their prize after an introduction from actor David Oyelowo, who hosted the ceremony. As for “Ready Player One”? We’ll have to wait until the Oscars ceremony on February 24 to find out.