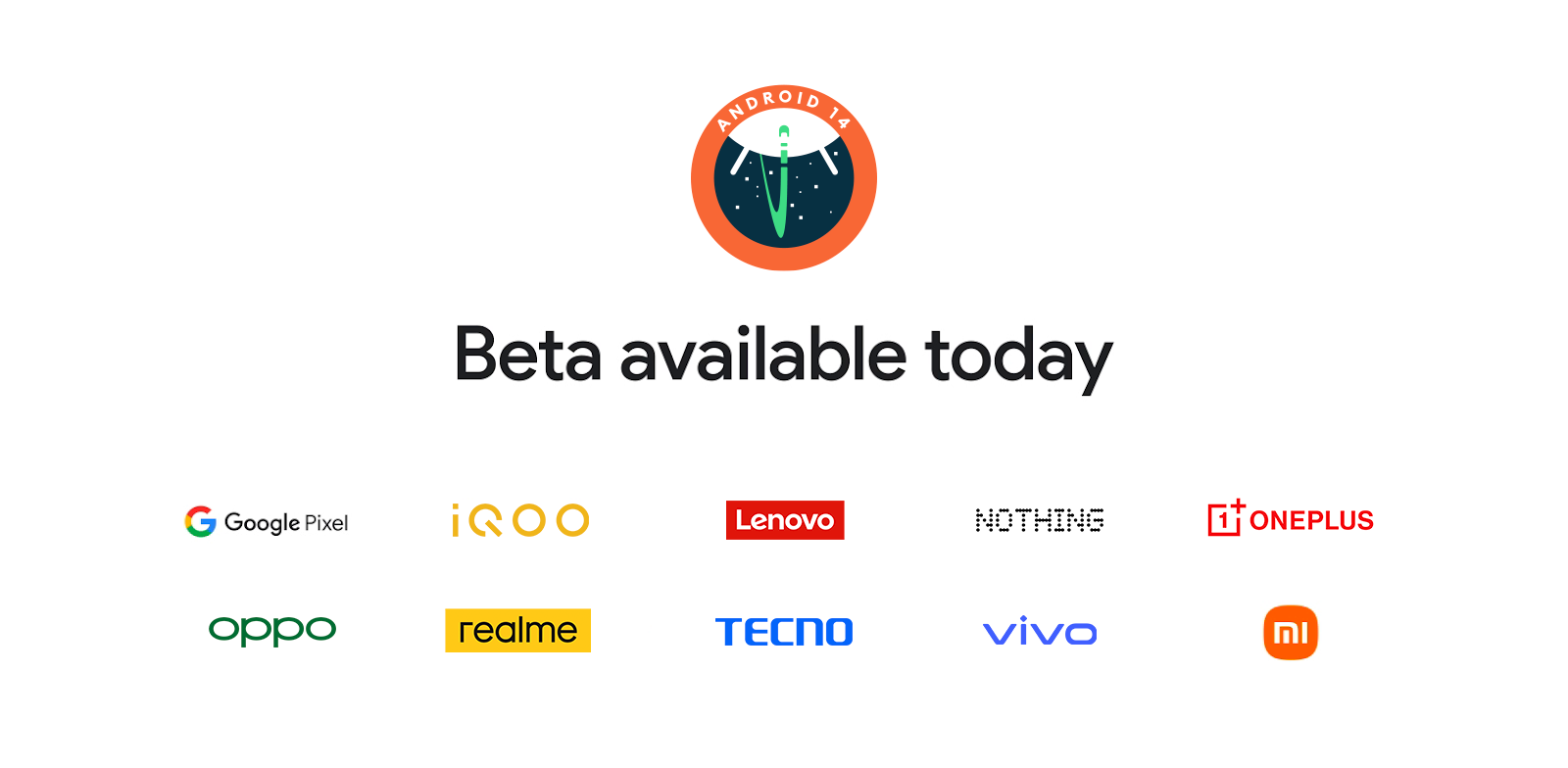

Today, coinciding with Google I/O, we're releasing the second Beta of Android 14. Google I/O includes sessions covering many of Android 14's new features in detail, and Beta 2 includes enhancements around camera and media, privacy and security, system UI, and developer productivity. We're continuing to improve the large-screen device experience, and the Android 14 beta program is now available for the first time on select partner phones, tablets, and foldables.

Android delivers enhancements and new features year-round, and your feedback on the Android beta program plays a key role in helping Android continuously improve. The Android 14 developer site has lots more information about the beta, including downloads for Pixel and the release timeline. We’re looking forward to hearing what you think, and thank you in advance for your continued help in making Android a platform that works for everyone.

Now available on more devices


The Android 14 beta is now available from partners including iQOO, Lenovo, Nothing, OnePlus, OPPO, Realme, Tecno, vivo, and Xiaomi.

Premium camera and media experiences

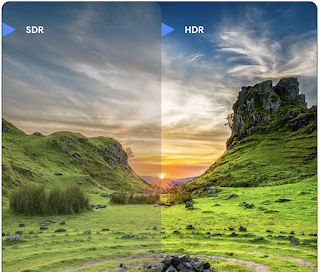

Android devices are known for premium cameras, and Android 13 added support for recording vivid high dynamic range (HDR) video supporting billions of colors, camera extensions that device manufacturers use to expose capabilities such as night mode and bokeh, stream use cases for optimized camera streams, and more. Android 14 builds on these capabilities.

Ultra HDR for images

Android adds support for 10-bit high dynamic range (HDR) images, retaining more of the information from the sensor when taking a photo, enabling vibrant colors and greater contrast. The Ultra HDR format Android uses is fully backwards compatible with JPEG, allowing apps to seamlessly interoperate with HDR images, displaying them in standard dynamic range as needed. The framework renders these images in HDR automatically when your app opts in to using HDR UI for its Activity Window, either through a manifest entry or at runtime by calling Window.setColorMode. You can also capture 10-bit compressed still images on supported devices. With more colors recovered from the sensor, editing in post can be more flexible. The Gainmap associated with Ultra HDR images can be used to render them using OpenGL or Vulkan.

Zoom, Focus, Postview, and more in Camera Extensions

Android 14 upgrades and improves Camera Extensions, allowing apps to handle longer processing times, enabling improved images using compute-intensive algorithms like low-light photography on supported devices. This will give users an even more robust experience when using Camera Extension capabilities. Examples of these improvements include:

- Dynamic still capture processing latency estimation provides much more accurate still capture latency estimates based on the current scene and environment conditions. Call CameraExtensionSession.getRealtimeStillCaptureLatency() to get a StillCaptureLatency object, which has two latency estimation methods. The getCaptureLatency() method returns the estimated latency between onCaptureStarted() and onCaptureProcessStarted(), and the getProcessingLatency() method returns the estimated latency between onCaptureProcessStarted() and the final processed frame being available.

- Support for capture progress callbacks so that apps can display the current progress of long running still capture processing operations. You can check if this feature is available with CameraExtensionCharacteristics.isCaptureProcessProgressAvailable(), and if it is, you implement the onCaptureProcessProgressed() callback, which has the progress (from 0 to 100) passed in as a parameter.

- Extension specific metadata, such as CaptureRequest.EXTENSION_STRENGTH for dialing in the amount of an extension effect, such as the amount of background blur with EXTENSION_BOKEH.

- Postview Feature for Still Capture in Camera Extensions, which provides a less-processed image more quickly than the final image. If an extension has increased processing latency, a postview image could be provided as a placeholder to improve UX and switched out later for the final image. You can check if this feature is available with CameraExtensionCharacteristics.isPostviewAvailable(). Then you can pass an OutputConfiguration to ExtensionSessionConfiguration.setPostviewOutputConfiguration().

- Support for SurfaceView allowing for a more optimized and power efficient preview render path.

- Support for tap to focus and zoom during Extension usage.
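The latency estimates, progress callback, and postview check described above usually feed a small piece of UI logic. The following is a minimal plain-Java sketch of that logic only; it does not call the camera APIs. The class name, the threshold value, the label text, and the assumption that latencies are expressed in milliseconds are all illustrative and not taken from the platform.

```java
// Plain-Java sketch of UI decisions built on the values the extension APIs report.
// Everything here is hypothetical glue code; no camera API is invoked.
final class StillCaptureUiSketch {
    // Hypothetical cutoff: above this estimated processing time, show a postview placeholder.
    static final long POSTVIEW_THRESHOLD_MS = 1_000;

    // Total expected wait: capture latency plus processing latency
    // (the two estimates a StillCaptureLatency object is described as exposing).
    static long totalLatencyMs(long captureLatencyMs, long processingLatencyMs) {
        return captureLatencyMs + processingLatencyMs;
    }

    // Use a postview placeholder only when the device reports the feature
    // and the estimated processing time is long enough to be noticeable.
    static boolean shouldShowPostview(boolean postviewAvailable, long processingLatencyMs) {
        return postviewAvailable && processingLatencyMs > POSTVIEW_THRESHOLD_MS;
    }

    // Turn the 0 to 100 progress value into a label, clamping out-of-range input.
    static String progressLabel(int progress) {
        int clamped = Math.max(0, Math.min(100, progress));
        return "Processing " + clamped + "%";
    }

    public static void main(String[] args) {
        System.out.println(totalLatencyMs(200, 1500));
        System.out.println(shouldShowPostview(true, 1500));
        System.out.println(progressLabel(42));
    }
}
```

In a real app, the inputs would come from getRealtimeStillCaptureLatency(), isPostviewAvailable(), and onCaptureProcessProgressed() respectively.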

In-sensor zoom

When REQUEST_AVAILABLE_CAPABILITIES_STREAM_USE_CASE in CameraCharacteristics contains SCALER_AVAILABLE_STREAM_USE_CASES_CROPPED_RAW, your app can use advanced sensor capabilities to get a cropped RAW stream with the same pixels as the full field of view. To do so, use a CaptureRequest with a RAW target whose stream use case is set to CameraMetadata.SCALER_AVAILABLE_STREAM_USE_CASES_CROPPED_RAW. By implementing the request override controls, the updated camera gives users zoom control even before other camera controls are ready.

Lossless USB audio

Android 14 gains support for lossless audio formats for audiophile-level experiences over USB wired headsets. You can query a USB device for its preferred mixer attributes, register a listener for changes in preferred mixer attributes, and configure mixer attributes using a new AudioMixerAttributes class. The class represents the audio mixer's format (such as channel mask and sample rate) and its behavior. It allows audio to be sent directly, without mixing, volume adjustment, or processing effects. We are working with our OEM partners to enable this feature in devices later this year.

More graphics capabilities

Android 14 adds advanced graphics features that can be used to take advantage of sophisticated GPU capabilities from within the Canvas layer.

Custom meshes with vertex and fragment shaders

Android has long supported drawing triangle meshes with custom shading, but the input mesh format has been limited to a few predefined attribute combinations. Android 14 adds support for custom meshes, which can be defined as triangles or triangle strips, and can, optionally, be indexed. These meshes are specified with custom attributes, vertex strides, varying, and vertex/fragment shaders written in AGSL. The vertex shader defines the varyings, such as position and color, while the fragment shader can optionally define the color for the pixel, typically by using the varyings created by the vertex shader. If color is provided by the fragment shader, it is then blended with the current Paint color using the blend mode selected when drawing the mesh. Uniforms can be passed into the fragment and vertex shaders for additional flexibility.
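To make the terms "custom attributes" and "vertex stride" concrete, here is a minimal plain-Java sketch of an interleaved vertex buffer. It assumes a hypothetical layout of a two-float position followed by a four-float color per vertex; the class and method names are illustrative, and the platform's mesh classes are not used.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;

// Sketch of an interleaved vertex layout: [x, y, r, g, b, a] per vertex.
// The layout and names are assumptions for illustration only.
final class MeshLayoutSketch {
    static final int POSITION_OFFSET = 0;               // position starts each vertex
    static final int COLOR_OFFSET = 2 * Float.BYTES;    // color follows the 2 position floats
    static final int STRIDE = 6 * Float.BYTES;          // bytes from one vertex to the next

    // Writes each vertex's attributes at (index * STRIDE + attribute offset).
    static ByteBuffer packVertices(float[][] positions, float[][] colors) {
        if (positions.length != colors.length) {
            throw new IllegalArgumentException("one color per position is required");
        }
        ByteBuffer buffer = ByteBuffer.allocateDirect(positions.length * STRIDE)
                .order(ByteOrder.nativeOrder());
        for (int i = 0; i < positions.length; i++) {
            int base = i * STRIDE;
            buffer.putFloat(base + POSITION_OFFSET, positions[i][0]);
            buffer.putFloat(base + POSITION_OFFSET + Float.BYTES, positions[i][1]);
            for (int c = 0; c < 4; c++) {
                buffer.putFloat(base + COLOR_OFFSET + c * Float.BYTES, colors[i][c]);
            }
        }
        return buffer;
    }

    // Number of triangles drawn from a given count of vertices (or indices, if indexed).
    static int triangleCount(int elementCount, boolean strip) {
        if (elementCount < 3) {
            return 0;
        }
        return strip ? elementCount - 2 : elementCount / 3;
    }
}
```

A quad illustrates the difference between the two modes: as a triangle strip it needs 4 vertices for 2 triangles, while as plain triangles it needs 6 vertices, or 4 vertices plus 6 indices when indexed.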

Hardware buffer renderer for Canvas

To assist in using Android's Canvas API to draw with hardware acceleration into a HardwareBuffer, Android 14 introduces HardwareBufferRenderer. It is particularly useful when your use case involves communication with the system compositor through SurfaceControl for low-latency drawing.

Privacy

Android 14 continues to focus on privacy, with new functionality that gives users more control and visibility over their data and how it's shared.

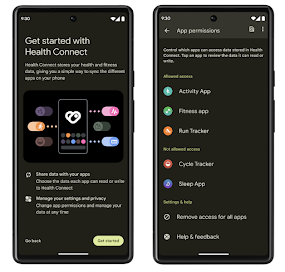

Health Connect

Health Connect is an on-device repository for user health and fitness data. It allows users to share data between their favorite apps, with a single place to control what data they want to share with these apps.

Health Connect is currently available to download as an app on the Google Play store. Starting with Android 14, Health Connect is part of the platform and receives updates via Google Play system updates without requiring a separate download. With this, Health Connect can be updated frequently, and your apps can rely on Health Connect being available on devices running Android 14+. Users can access Health Connect from the Settings in their device, with privacy controls integrated into the system settings.

We're launching support for exercise routes in Health Connect, allowing users to share a route of their workout which can be visualized on a map. A route is defined as a list of locations saved within a window of time, and your app can insert routes into exercise sessions, tying them together. To ensure that users have complete control over this sensitive data, users must allow sharing individual routes with other apps.
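The route definition above (a list of locations saved within a window of time, attached to an exercise session) can be pictured with a small data model. This is a plain-Java sketch with hypothetical types; it is not the Health Connect API, and treating the session's start and end as inclusive bounds is an assumption.

```java
import java.time.Instant;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical model of a route tied to an exercise session; not Health Connect types.
final class ExerciseRouteSketch {
    // One recorded point on the route.
    static final class Location {
        final Instant time;
        final double latitude;
        final double longitude;

        Location(Instant time, double latitude, double longitude) {
            this.time = time;
            this.latitude = latitude;
            this.longitude = longitude;
        }
    }

    // A session owns the time window that every route location must fall inside.
    static final class Session {
        final Instant start;
        final Instant end;
        private final List<Location> route = new ArrayList<>();

        Session(Instant start, Instant end) {
            if (end.isBefore(start)) {
                throw new IllegalArgumentException("session ends before it starts");
            }
            this.start = start;
            this.end = end;
        }

        // Rejects the whole route if any location lies outside the session window
        // (bounds assumed inclusive), so a partial route is never stored.
        void addRoute(List<Location> locations) {
            for (Location location : locations) {
                if (location.time.isBefore(start) || location.time.isAfter(end)) {
                    throw new IllegalArgumentException("location outside session window");
                }
            }
            route.addAll(locations);
        }

        List<Location> route() {
            return Collections.unmodifiableList(route);
        }
    }
}
```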

That's not all that's new! We have a separate blog post with more detail on Health Connect and more in What's new in Android Health.

Data Sharing Updates

Users will see a new section in the location runtime permission dialog that highlights when an app shares location data with third parties, where they can get more information and control the app’s data access. This information is from the Data safety form of the Google Play Console. Other app stores will be able to provide a mechanism to pass along this information as well. We encourage you to review your apps’ location data sharing policies and make any applicable updates to your apps' data safety information to ensure that they are up to date. This change will be rolling out shortly.

In addition, users will get a periodic notification if any of their apps with the location permission change their data sharing practices to start sharing location data with third parties.

The new location data sharing updates page will be accessible from within device settings.

Secure full screen Intent notifications

With Android 11 (API level 30), it was possible for any app to use Notification.Builder#setFullScreenIntent to send full-screen intents while the phone is locked. You could have this auto-granted on app install by declaring the USE_FULL_SCREEN_INTENT permission in the AndroidManifest.

Full-screen intent notifications are designed for extremely high-priority notifications demanding the user's immediate attention, such as an incoming phone call or user-configured alarm clock settings. Starting with Android 14, we are limiting the apps granted this permission on app install to those that provide calling and alarms only.

This permission remains enabled for apps installed on the phone before the user updates to Android 14. Users can turn this permission on and off.

You can use the new API NotificationManager.canUseFullScreenIntent to check if your app has the permission; if not, your app can use the new intent ACTION_MANAGE_APP_USE_FULL_SCREEN_INTENT to launch the settings page where users can grant the permission.

System UI

Predictive Back

With the Android 14 Beta 2 release, we've added multiple improvements and new guidance for developers to have more seamless animation when moving between activities within an app.

- You can set android:enableOnBackInvokedCallback=true to opt in to predictive back system animations per-Activity instead of for the entire app.

- We’ve added new Material Component animations for Bottom sheets, Side sheets, and Search.

- We've created design guidance for creating custom in-app animations and transitions.

- We've added new APIs to support custom in-app transition animations:

  - handleOnBackStarted, handleOnBackProgressed, handleOnBackCancelled in OnBackPressedCallback

  - onBackStarted, onBackProgressed, onBackCancelled in OnBackAnimationCallback

- Use overrideActivityTransition instead of overridePendingTransition for transitions that respond as the user swipes back.
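The started, progressed, and cancelled callbacks listed above typically drive a small piece of animation state. Below is a minimal plain-Java sketch of that state, assuming the gesture progress arrives as a float from 0 to 1. The class, the minimum scale of 0.9, and the linear mapping are hypothetical choices for illustration; the sketch does not use the platform callback classes.

```java
// Hypothetical animation state driven by back-gesture callbacks.
// Method names mirror the callbacks for readability; this is not a platform class.
final class BackProgressSketch {
    static final float MIN_SCALE = 0.9f; // assumed scale when the gesture is fully committed

    private boolean inProgress;
    private float scale = 1f;

    // Gesture began: start from the resting state.
    void onBackStarted() {
        inProgress = true;
        scale = 1f;
    }

    // Gesture moved: map progress (clamped to 0..1) linearly onto 1.0..MIN_SCALE.
    void onBackProgressed(float progress) {
        if (!inProgress) {
            return; // ignore stray updates outside a gesture
        }
        float clamped = Math.max(0f, Math.min(1f, progress));
        scale = 1f - (1f - MIN_SCALE) * clamped;
    }

    // Gesture abandoned: restore the resting state.
    void onBackCancelled() {
        inProgress = false;
        scale = 1f;
    }

    float scale() {
        return scale;
    }

    boolean isInProgress() {
        return inProgress;
    }
}
```

An app would apply scale() to the view being dismissed on each progress update, and reset it when the gesture is cancelled.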

With Android 14 Beta 2, all features of Predictive Back remain behind a developer option. See the developer guide to migrate your app to predictive back, as well as the developer guide to creating custom in-app transitions.

App compatibility

With Beta 2, we're just a step away from platform stability in June 2023, when we'll have the final Android 14 SDK and NDK APIs and final app-facing system behaviors. Now that more devices are running the Android 14 beta, you can expect more users to try your app on Android 14 in the weeks ahead and to raise the issues they find.

To test for compatibility, install your published app on a device or emulator running the Android 14 Beta and work through all of the app’s flows. Review behavior changes to focus your testing. After you’ve resolved any issues, publish an update as soon as possible.


It’s also a good time to start getting ready for your app to target Android 14, by testing with the app compatibility changes toggles in Developer Options.

Figure: App compatibility toggles in Developer Options

Get started with Android 14

Today's Beta 2 release has everything you need to try the Android 14 features, test your apps, and give us feedback. For testing your app with tablets and foldables, you can test with devices from our partners, but the easiest way to get started is using the 64-bit Android Emulator system images for the Pixel Tablet or Pixel Fold configurations found in the latest preview of the Android Studio SDK Manager. You can also enroll any supported Pixel device to get this and future Android 14 Beta and feature drop Beta updates over-the-air.

For the best development experience with Android 14, we recommend that you use the latest release of Android Studio Hedgehog. Once you’re set up, here are some of the things you should do:

- Try the new features and APIs – your feedback is critical as we finalize the APIs. Report issues in our tracker on the feedback page.

- Test your current app for compatibility – learn whether your app is affected by default behavior changes in Android 14. Install your app onto a device or emulator running Android 14 and extensively test it.

- Test your app with opt-in changes – Android 14 has opt-in behavior changes that only affect your app when it’s targeting the new platform. It’s important to understand and assess these changes early. To make it easier to test, you can toggle the changes on and off individually.

We’ll update the beta system images and SDK regularly throughout the Android 14 release cycle.

For complete information on how to get the Beta, visit the Android 14 developer site.

Posted by Dave Burke, VP of Engineering
