The last year saw tremendous breakthroughs in artificial intelligence (AI), particularly in large language models (LLMs) and text-to-image models. These technological advances require that we be thoughtful and intentional in how they are developed and deployed. In this blog post, we share ways we have approached Responsible AI across our research in the past year and where we’re headed in 2023. We highlight four primary themes covering foundational and socio-technical research, applied research, and product solutions, as part of our commitment to build AI products in a responsible and ethical manner, in alignment with our AI Principles.

- Theme 1: Responsible AI Research Advancements

- Theme 2: Responsible AI Research in Products

- Theme 3: Tools and Techniques

- Theme 4: Demonstrating AI’s Societal Benefit

Theme 1: Responsible AI Research Advancements

Machine Learning Research

When machine learning (ML) systems are used in real world contexts, they can fail to behave in expected ways, which reduces their realized benefit. Our research identifies situations in which unexpected behavior may arise, so that we can mitigate undesired outcomes.

Across several types of ML applications, we showed that models are often underspecified, which means they perform well in exactly the situation in which they are trained, but may not be robust or fair in new situations, because the models rely on “spurious correlations” — specific side effects that are not generalizable. This poses a risk to ML system developers, and demands new model evaluation practices.

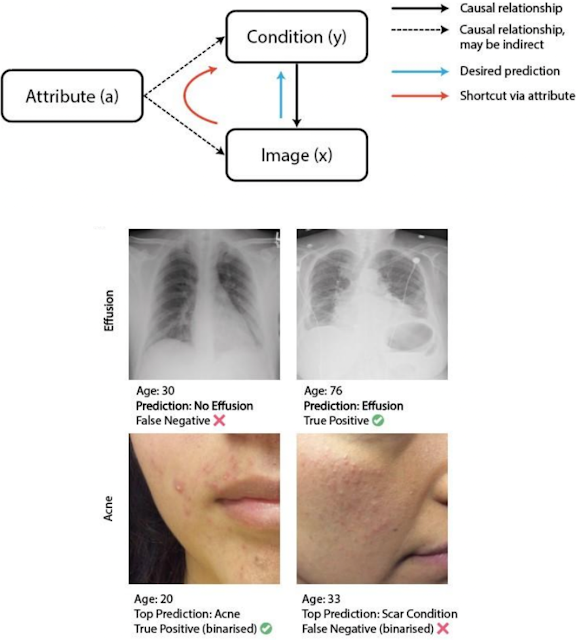

We surveyed evaluation practices currently used by ML researchers and introduced improved evaluation standards in work addressing common ML pitfalls. We identified and demonstrated techniques to mitigate causal “shortcuts”, which lead to a lack of ML system robustness and dependency on sensitive attributes, such as age or gender.

Shortcut learning: Age impacts correct medical diagnosis.

To better understand the causes of and mitigations for robustness issues, we decided to dig deeper into model design in specific domains. In computer vision, we studied the robustness of new vision transformer models and developed new negative data augmentation techniques to improve their robustness. For natural language tasks, we similarly investigated how different data distributions improve generalization across different groups and how ensembles and pre-trained models can help.

Another key part of our ML work involves developing techniques to build models that are more inclusive. For example, we look to external communities to guide understanding of when and why our evaluations fall short using participatory systems, which explicitly enable joint ownership of predictions and allow people to choose whether to disclose on sensitive topics.

Sociotechnical Research

In our quest to include a diverse range of cultural contexts and voices in AI development and evaluation, we have strengthened community-based research efforts, focusing on particular communities who are less represented or may experience unfair outcomes of AI. We specifically looked at evaluations of unfair gender bias, both in natural language and in contexts such as gender-inclusive health. This work is advancing more accurate evaluations of unfair gender bias so that our technologies evaluate and mitigate harms for people with queer and non-binary identities.

Alongside our fairness advancements, we also reached key milestones in our larger efforts to develop culturally-inclusive AI. We championed the importance of cross-cultural considerations in AI (in particular, cultural differences in user attitudes towards AI and mechanisms for accountability) and built data and techniques that enable culturally-situated evaluations, with a focus on the Global South. We also described user experiences of machine translation in a variety of contexts, and suggested human-centered opportunities for their improvement.

Human-Centered Research

At Google, we focus on advancing human-centered research and design. Recently, our work showed how LLMs can be used to rapidly prototype new AI-based interactions. We also published five new interactive explorable visualizations that introduce key ideas and guidance to the research community, including how to use saliency to detect unintended biases in ML models, and how federated learning can be used to collaboratively train a model with data from multiple users without any raw data leaving their devices.
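To make the federated learning idea concrete, here is a minimal sketch of one common scheme, federated averaging, assuming a toy one-parameter linear model. The function names (`local_update`, `federated_round`) and the model are hypothetical and chosen only for illustration; they are not taken from the explorable or from any Google library. The point to notice is that each client returns only an updated weight and an example count, never its raw data.

```python
from typing import List, Sequence, Tuple

Example = Tuple[float, float]  # (x, y) pair held privately on one device


def local_update(weight: float, data: Sequence[Example], lr: float = 0.05) -> float:
    """Run one gradient step on-device for the toy model y ~ weight * x."""
    if not data:
        raise ValueError("each client needs at least one example")
    # Gradient of mean squared error with respect to the single weight.
    grad = sum(2.0 * (weight * x - y) * x for x, y in data) / len(data)
    return weight - lr * grad


def federated_round(
    global_weight: float,
    client_datasets: Sequence[Sequence[Example]],
    lr: float = 0.05,
) -> float:
    """One round: clients train locally, the server averages their weights."""
    # Only (updated weight, example count) leaves each client.
    updates: List[Tuple[float, int]] = [
        (local_update(global_weight, data, lr), len(data))
        for data in client_datasets
    ]
    total_examples = sum(count for _, count in updates)
    # Average weighted by how many examples each client trained on.
    return sum(w * count for w, count in updates) / total_examples


if __name__ == "__main__":
    # Two hypothetical devices whose private data follow y = 2x.
    clients = [[(1.0, 2.0), (2.0, 4.0)], [(3.0, 6.0)]]
    weight = 0.0
    for _ in range(50):
        weight = federated_round(weight, clients)
    print(f"learned weight: {weight:.4f}")
```

Real deployments add pieces this sketch omits, such as client sampling, secure aggregation, and multiple local steps per round.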

Our interpretability research explored how we can trace the behavior of language models back to the training data itself and suggested new ways to compare differences in what models pay attention to, to explain emergent behavior, and to identify human-understandable concepts learned by models. We also proposed a new approach for recommender systems that uses natural language explanations to make it easier for people to understand and control their recommendations.

Creativity and AI Research

We initiated conversations with creative teams on the rapidly changing relationship between AI technology and creativity. In the creative writing space, Google’s PAIR and Magenta teams developed a novel prototype for creative writing, and facilitated a writers' workshop to explore the potential and limits of AI to assist creative writing. The stories from a diverse set of creative writers were published as a collection, along with workshop insights. In the fashion space, we explored the relationship between fashion design and cultural representation, and in the music space, we started examining the risks and opportunities of AI tools for music.

Theme 2: Responsible AI Research in Products

The ability to see yourself reflected in the world around you is important, yet image-based technologies often lack equitable representation, leaving people of color feeling overlooked and misrepresented. In addition to efforts to improve representation of diverse skin tones across Google products, we introduced a new skin tone scale designed to be more inclusive of the range of skin tones worldwide. Partnering with Harvard professor and sociologist, Dr. Ellis Monk, we released the Monk Skin Tone (MST) Scale, a 10-shade scale that is available for the research community and industry professionals for research and product development. Further, this scale is being incorporated into features on our products, continuing a long line of our work to improve diversity and skin tone representation on Image Search and filters in Google Photos.

The 10 shades of the Monk Skin Tone Scale.

This is one of many examples of how Responsible AI in Research works closely with products across the company to inform research and develop new techniques. In another example, we leveraged our past research on counterfactual data augmentation in natural language to improve SafeSearch, reducing unexpected shocking Search results by 30%, especially on searches related to ethnicity, sexual orientation, and gender. To improve video content moderation, we developed new approaches for helping human raters focus their attention on segments of long videos that are more likely to contain policy violations. And, we’ve continued our research on developing more precise ways of evaluating equal treatment in recommender systems, accounting for the broad diversity of users and use cases.

In the area of large models, we incorporated Responsible AI best practices as part of the development process, creating Model Cards and Data Cards (more details below), Responsible AI benchmarks, and societal impact analysis for models such as GLaM, PaLM, Imagen, and Parti. We also showed that instruction fine-tuning results in many improvements for Responsible AI benchmarks. Because generative models are often trained and evaluated on human-annotated data, we focused on human-centric considerations like rater disagreement and rater diversity. We also presented new capabilities using large models for improving responsibility in other systems. For example, we have explored how language models can generate more complex counterfactuals for counterfactual fairness probing. We will continue to focus on these areas in 2023, while also working to understand the implications for downstream applications.

Theme 3: Tools and Techniques

Responsible Data

Data Documentation:

Extending our earlier work on Model Cards and the Model Card Toolkit, we released Data Cards and the Data Cards Playbook, providing developers with methods and tools to document appropriate uses and essential facts related to a model or dataset. We have also advanced research on best practices for data documentation, such as accounting for a dataset’s origins, annotation processes, intended use cases, ethical considerations, and evolution. We also applied this to healthcare, creating “healthsheets” that underpin our international Standing Together collaboration, which brings together patients, health professionals, and policy-makers to develop standards that ensure datasets are diverse and inclusive and to democratize AI.

New Datasets:

Fairness: We released a new dataset to assist in ML fairness and adversarial testing tasks, primarily for generative text datasets. The dataset contains 590 words and phrases that show interactions between adjectives, words, and phrases that have been shown to have stereotypical associations with specific individuals and groups based on their sensitive or protected characteristics.

A partial list of the sensitive characteristics in the dataset denoting their associations with adjectives and stereotypical associations.

Toxicity: We constructed and publicly released a dataset of 10,000 posts to help identify when a comment's toxicity depends on the comment it's replying to. This improves the quality of moderation-assistance models and supports the research community working on better ways to remedy online toxicity.

Societal Context Data: We used our experimental societal context repository (SCR) to supply the Perspective team with auxiliary identity and connotation context data for terms relating to categories such as ethnicity, religion, age, gender, or sexual orientation — in multiple languages. This auxiliary societal context data can help augment and balance datasets to significantly reduce unintended biases, and was applied to the widely used Perspective API toxicity models.

Learning Interpretability Tool (LIT)

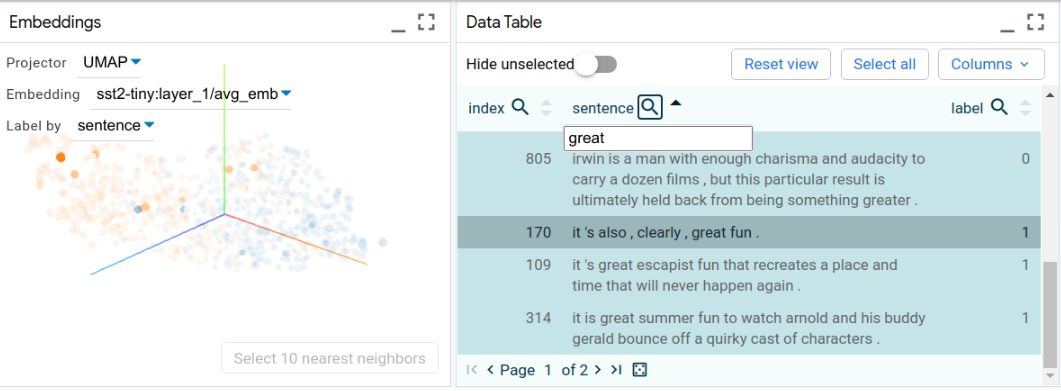

An important part of developing safer models is having the tools to help debug and understand them. To support this, we released a major update to the Learning Interpretability Tool (LIT), an open-source platform for visualization and understanding of ML models, which now supports images and tabular data. The tool has been widely used in Google to debug models, review model releases, identify fairness issues, and clean up datasets. It also now lets you visualize 10x more data than before, supporting up to hundreds of thousands of data points at once.

A screenshot of the Learning Interpretability Tool displaying generated sentences on a data table.

Counterfactual Logit Pairing

ML models are sometimes susceptible to flipping their prediction when a sensitive attribute referenced in an input is either removed or replaced. For example, in a toxicity classifier, examples such as "I am a man" and "I am a lesbian" may incorrectly produce different outputs. To enable users in the Open Source community to address unintended bias in their ML models, we launched a new library, Counterfactual Logit Pairing (CLP), which improves a model’s robustness to such perturbations, and can positively influence a model’s stability, fairness, and safety.

Illustration of fairness predictions that can be mitigated using counterfactual logit pairing.
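As a rough illustration of the idea behind counterfactual logit pairing, the sketch below computes a penalty equal to the average absolute gap between a model's logit on an input and its logit on a counterfactual version of that input, then adds it to the ordinary task loss. This is a simplified sketch under stated assumptions, not the API of the released library: the function names, the `pairing_weight` parameter, and the deliberately biased toy scorer are all hypothetical.

```python
from typing import Callable, Sequence


def pairing_penalty(
    logit_fn: Callable[[str], float],
    originals: Sequence[str],
    counterfactuals: Sequence[str],
) -> float:
    """Mean absolute logit gap between each input and its counterfactual."""
    if len(originals) != len(counterfactuals):
        raise ValueError("each original needs exactly one counterfactual")
    if not originals:
        return 0.0
    gaps = [
        abs(logit_fn(original) - logit_fn(counterfactual))
        for original, counterfactual in zip(originals, counterfactuals)
    ]
    return sum(gaps) / len(gaps)


def total_loss(task_loss: float, penalty: float, pairing_weight: float = 1.0) -> float:
    """Combine the usual task loss with the weighted pairing penalty."""
    return task_loss + pairing_weight * penalty


# Hypothetical, deliberately biased scorer used only to exercise the penalty:
# it raises the "toxicity" logit whenever an identity term appears.
TOY_TOKEN_WEIGHTS = {"lesbian": 2.0}


def toy_biased_logit(text: str) -> float:
    return sum(TOY_TOKEN_WEIGHTS.get(token, 0.0) for token in text.lower().split())


if __name__ == "__main__":
    penalty = pairing_penalty(toy_biased_logit, ["I am a man"], ["I am a lesbian"])
    print("pairing penalty:", penalty)
    print("total loss:", total_loss(task_loss=0.5, penalty=penalty, pairing_weight=0.25))
```

During training, minimizing the combined loss pushes the model toward giving the same score to both sentences, so the penalty shrinks as the model stops relying on the identity term.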

Theme 4: Demonstrating AI’s Societal Benefit

We believe that AI can be used to explore and address hard, unanswered questions around humanitarian and environmental issues. Our research and engineering efforts span many areas, including accessibility, health, and media representation, with the end goal of promoting inclusion and meaningfully improving people’s lives.

Accessibility

Following many years of research, we launched Project Relate, an Android app that uses a personalized AI-based speech recognition model to enable people with non-standard speech to communicate more easily with others. The app is available to English speakers 18+ in Australia, Canada, Ghana, India, New Zealand, the UK, and the US.

To help catalyze advances in AI to benefit people with disabilities, we also launched the Speech Accessibility Project. This project represents the culmination of a collaborative, multi-year effort between researchers at Google, Amazon, Apple, Meta, Microsoft, and the University of Illinois Urbana-Champaign. This program will build a large dataset of impaired speech that is available to developers to empower research and product development for accessibility applications. This work also complements our efforts to assist people with severe motor and speech impairments through improvements to techniques that make use of a user’s eye gaze.

Health

We’re also focused on building technology to better the lives of people affected by chronic health conditions, while addressing systemic inequities, and allowing for transparent data collection. As consumer technologies — such as fitness trackers and mobile phones — become central in data collection for health, we’ve explored use of technology to improve interpretability of clinical risk scores and to better predict disability scores in chronic diseases, leading to earlier treatment and care. And, we advocated for the importance of infrastructure and engineering in this space.

Many health applications use algorithms that are designed to calculate biometrics and benchmarks, and generate recommendations based on variables that include sex at birth, but might not account for users’ current gender identity. To address this issue, we completed a large, international study of trans and non-binary users of consumer technologies and digital health applications to learn how data collection and algorithms used in these technologies can evolve to achieve fairness.

Media

We partnered with the Geena Davis Institute on Gender in Media (GDI) and the Signal Analysis and Interpretation Laboratory (SAIL) at the University of Southern California (USC) to study 12 years of representation in TV. Based on an analysis of over 440 hours of TV programming, the report highlights findings and brings attention to significant disparities in screen and speaking time for light- and dark-skinned characters, male and female characters, and younger and older characters. This first-of-its-kind collaboration uses advanced AI models to understand how people-oriented stories are portrayed in media, with the ultimate goal of inspiring equitable representation in mainstream media.

Plans for 2023 and Beyond

We’re committed to creating research and products that exemplify positive, inclusive, and safe experiences for everyone. This begins by understanding the many aspects of AI risks and safety inherent in the innovative work that we do, and including diverse sets of voices in coming to this understanding.

- Responsible AI Research Advancements: We will strive to understand the implications of the technology that we create, through improved metrics and evaluations, and devise methodology to enable people to use technology to become better world citizens.

- Responsible AI Research in Products: As products leverage new AI capabilities for new user experiences, we will continue to collaborate closely with product teams to understand and measure their societal impacts and to develop new modeling techniques that enable the products to uphold Google’s AI Principles.

- Tools and Techniques: We will develop novel techniques to advance our ability to discover unknown failures, explain model behaviors, and to improve model output through training, responsible generation, and failure mitigation.

- Demonstrating AI’s Societal Benefit: We plan to expand our efforts on AI for the Global Goals, bringing together research, technology, and funding to accelerate progress on the Sustainable Development Goals. This commitment will include $25 million to support NGOs and social enterprises. We will further our work on inclusion and equity by forming more collaborations with community-based experts and impacted communities. This includes continuing the Equitable AI Research Roundtables (EARR), focused on the potential impacts and downstream harms of AI, with community-based experts from the Othering and Belonging Institute at UC Berkeley, PolicyLink, and Emory University School of Law.

Building ML models and products in a responsible and ethical manner is both our core focus and core commitment.

Acknowledgements

This work reflects the efforts from across the Responsible AI and Human-Centered Technology community, from researchers and engineers to product and program managers, all of whom contribute to bringing our work to the AI community.

Google Research, 2022 & Beyond

This was the second blog post in the “Google Research, 2022 & Beyond” series. Other posts in this series are listed in the table below:

| Language Models | Computer Vision | Multimodal Models |

| Generative Models | Responsible AI | Algorithms* |

| ML & Computer Systems | Robotics | Health |

| General Science & Quantum | Community Engagement |

| * Articles will be linked as they are released. |