Today, we gave a keynote presentation at the Open Networking Summit, where we shared details about Espresso, Google’s peering edge architecture and the latest offering in our Software Defined Networking (SDN) strategy. Espresso has been in production for over two years and routes 20 percent of our total traffic to the internet, a share that is still growing. It is changing the way traffic is directed at the peering edge, delivering unprecedented scale, flexibility and efficiency.

We view our network as more than just a way to connect computers to one another. Building the right network infrastructure enables new application capabilities that simply would not otherwise be possible. This is especially powerful when the capability is exposed to higher-level applications running in our data centers.

For example, consider real-time voice search. Answering the question “What’s the latest news?” with Google Assistant requires a fast, low-latency connection from a user’s device to the edge of Google’s network, and from the edge of our network to one of our data centers. Once inside a data center, hundreds — or even thousands — of individual servers must consult vast amounts of data to score the mapping of an audio recording to possible phrases in one of many languages and dialects. The resulting phrase is then passed to another cluster to perform a web search, consulting a real-time index of internet content. The results are then gathered, scored and returned to the edge of Google’s network back to the end user.

Answering queries in real time involves coordinating dozens of internet routers and thousands of computers across the globe, often in less than a second. Further, the system must scale to a worldwide audience that generates thousands of queries every second.

Early on, we realized that the network we needed to support our services did not exist and could not be bought. Hence, over the past 10+ years, we set out to fill in the required pieces in-house. Our fundamental design philosophy is that the network should be treated as a large-scale distributed system and leverage the same control infrastructure we developed for Google’s compute and storage systems.

We defined and employed SDN principles to build Jupiter, a data center interconnect capable of supporting more than 100,000 servers and 1 Pb/s of total bandwidth to host our services. We also constructed B4 to connect our data centers to one another with bandwidth and latency that allowed our engineers to access and replicate data in real time between individual campuses. We then deployed Andromeda, a Network Function Virtualization stack that delivers the same capabilities available to Google-native applications all the way to containers and virtual machines running on Google Cloud Platform.

Introducing Espresso

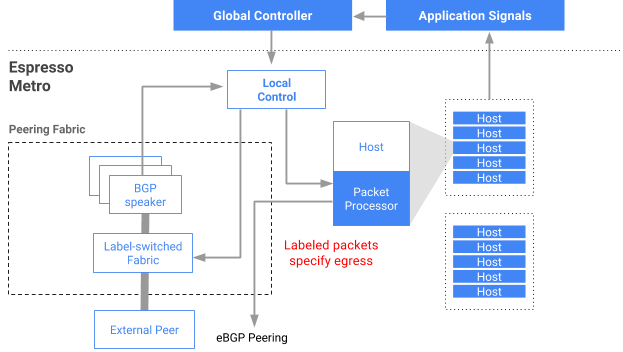

Espresso is the fourth, and in some ways the most challenging, pillar of our SDN strategy, extending our approach all the way to the peering edge of our network, where Google connects to other networks across the planet.

Google has one of the largest peering surfaces in the world, exchanging data with Internet Service Providers (ISPs) at 70 metros and generating more than 25 percent of all Internet traffic. However, we found that existing Internet protocols cannot use all of the connectivity options offered by our ISP partners, and therefore aren’t able to deliver the best availability and user experience to our end users.

Espresso delivers two key pieces of innovation. First, it allows us to dynamically choose where to serve individual users from, based on measurements of how end-to-end network connections are performing in real time. Rather than pick a static point to connect users simply based on their IP address (or worse, the IP address of their DNS resolver), we dynamically choose the best point and rebalance our traffic based on actual performance data. Similarly, we are able to react in real time to failures and congestion both within our network and in the public Internet.
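To make the idea of measurement-driven selection concrete, here is a minimal sketch in Python. It is not Espresso's implementation, whose internals are not described in this post. The names `PathSample`, `choose_serving_points` and `static_default`, the use of mean latency as the only metric, and the rule that any failed sample disqualifies a serving point are all assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class PathSample:
    """One hypothetical end-to-end measurement for a client prefix via one serving point."""
    client_prefix: str
    serving_point: str
    latency_ms: float
    healthy: bool = True


def choose_serving_points(samples, static_default):
    """For each client prefix, pick the serving point with the lowest mean latency.

    Assumption: a serving point with any failed sample for a prefix is skipped
    for that prefix. If nothing usable remains, fall back to the static default.
    """
    latencies = defaultdict(list)  # (prefix, point) -> healthy latency samples
    failed = set()                 # (prefix, point) pairs that reported a failure
    prefixes = set()

    for s in samples:
        key = (s.client_prefix, s.serving_point)
        prefixes.add(s.client_prefix)
        if s.healthy:
            latencies[key].append(s.latency_ms)
        else:
            failed.add(key)

    choice = {}
    for prefix in prefixes:
        candidates = {
            point: mean(values)
            for (p, point), values in latencies.items()
            if p == prefix and (p, point) not in failed
        }
        if candidates:
            choice[prefix] = min(candidates, key=candidates.get)
        else:
            choice[prefix] = static_default
    return choice


samples = [
    PathSample("203.0.113.0/24", "metro-a", 40.0),
    PathSample("203.0.113.0/24", "metro-a", 60.0),
    PathSample("203.0.113.0/24", "metro-b", 30.0),
    PathSample("198.51.100.0/24", "metro-a", 20.0),
    PathSample("198.51.100.0/24", "metro-a", 0.0, healthy=False),
    PathSample("198.51.100.0/24", "metro-b", 80.0),
    PathSample("192.0.2.0/24", "metro-a", 0.0, healthy=False),
]
choice = choose_serving_points(samples, static_default="metro-default")
```

In this toy data, the second prefix is moved away from `metro-a` despite its low latency because one of its samples failed, which mirrors the post's point about reacting to failures as well as to performance. A real system would also have to weigh capacity, cost and measurement freshness, none of which are modeled here.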

Espresso allows us to maintain performance and availability in a way that is not possible with existing router-centric Internet protocols. This translates to higher availability and better performance through Google Cloud than is available through the Internet at large.

Second, we separate the logic and control of traffic management from the confines of individual router “boxes.” Rather than relying on thousands of individual routers to manage and learn from packet streams, we push the functionality to a distributed system that extracts the aggregate information. We leverage our large-scale computing infrastructure and signals from the application itself to learn how individual flows are performing, as determined by the end user’s perception of quality.
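One way to picture moving this logic off individual routers is a set of servers that each summarize the flows they see, with a central process merging those summaries. The Python sketch below illustrates that pattern only. `FlowSummary`, `merge_reports`, the goodput figure and the "poor quality" flag are hypothetical names and signals chosen for the example, not details taken from Espresso.

```python
from dataclasses import dataclass


@dataclass
class FlowSummary:
    """Mergeable per-path summary of application-reported flow quality (hypothetical)."""
    flows: int = 0
    total_goodput_mbps: float = 0.0
    poor_quality_flows: int = 0

    def add(self, goodput_mbps, poor_quality):
        """Record one flow observed by a single reporting server."""
        self.flows += 1
        self.total_goodput_mbps += goodput_mbps
        self.poor_quality_flows += int(poor_quality)

    def merge(self, other):
        """Combine two summaries; all fields are plain sums, so order does not matter."""
        return FlowSummary(
            self.flows + other.flows,
            self.total_goodput_mbps + other.total_goodput_mbps,
            self.poor_quality_flows + other.poor_quality_flows,
        )

    @property
    def poor_fraction(self):
        """Share of flows that the application judged to be poor quality."""
        return self.poor_quality_flows / self.flows if self.flows else 0.0


def merge_reports(reports):
    """Merge per-server reports, each a dict keyed by (client prefix, peer link)."""
    merged = {}
    for report in reports:
        for key, summary in report.items():
            merged[key] = merged.get(key, FlowSummary()).merge(summary)
    return merged


key = ("203.0.113.0/24", "peer-1")

server_a = {key: FlowSummary()}
server_a[key].add(10.0, poor_quality=False)
server_a[key].add(2.0, poor_quality=True)

server_b = {key: FlowSummary()}
server_b[key].add(6.0, poor_quality=False)
server_b[key].add(2.0, poor_quality=True)

aggregate = merge_reports([server_a, server_b])
```

Because each field is a simple sum, summaries can be merged in any order and at any level of a hierarchy, which is what lets the aggregation run on general-purpose servers rather than inside each router.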

Google’s network is a critical part of our infrastructure, enabling us to process tremendous amounts of information in real time and to host some of the world’s most demanding services, all while delivering content with the highest levels of availability and efficiency to a global population. Our network continues to be a key opportunity and differentiator for Google, ensuring that Google Cloud services and customers enjoy the same levels of availability, performance, and efficiency available to “Google native” services such as Google Search, YouTube, Gmail and more.

Note: Ankur Jain, Principal Engineer, and Mahesh Kallahalla, Principal Engineer, also contributed to this post.