Posted by Jon Bottarini and Hamzeh Zawawy, Android Security

Fuzzing is an effective technique for finding software vulnerabilities. Over the past few years Android has been focused on improving the effectiveness, scope, and convenience of fuzzing across the organization. This effort has directly resulted in improved test coverage, fewer security/stability bugs, and higher code quality. Our implementation of continuous fuzzing allows software teams to find new bugs/vulnerabilities, and prevent regressions automatically without having to manually initiate fuzzing runs themselves. This post recounts a brief history of fuzzing on Android, shares how Google performs fuzzing at scale, and documents our experience, challenges, and success in building an infrastructure for automating fuzzing across Android. If you’re interested in contributing to fuzzing on Android, we’ve included instructions on how to get started, and information on how Android’s VRP rewards fuzzing contributions that find vulnerabilities.

A Brief History of Android Fuzzing

Fuzzing has been around for many years, and Android was among the early large software projects to automate fuzzing and prioritize it similarly to unit testing as part of the broader goal to make Android the most secure and stable operating system. In 2019 Android kicked off the fuzzing project, with the goal to help institutionalize fuzzing by making it seamless and part of code submission. The Android fuzzing project resulted in an infrastructure consisting of Pixel phones and Google cloud based virtual devices that enabled scalable fuzzing capabilities across the entire Android ecosystem. This project has since grown to become the official internal fuzzing infrastructure for Android and performs thousands of fuzzing hours per day across hundreds of fuzzers.

Under the Hood: How Is Android Fuzzed

Step 1: Define and find all the fuzzers in Android repo

The first step is to integrate fuzzing into the Android build system (Soong) to enable build fuzzer binaries. While developers are busy adding features to their codebase, they can include a fuzzer to fuzz their code and submit the fuzzer alongside the code they have developed. Android Fuzzing uses a build rule called cc_fuzz (see example below). cc_fuzz (we also support rust_fuzz and java_fuzz) defines a Soong module with source file(s) and dependencies that can be built into a binary.

cc_fuzz {

name: "fuzzer_foo",

srcs: [

"fuzzer_foo.cpp",

],

static_libs: [

"libfoo",

],

host_supported: true,

}

A packaging rule in Soong finds all of these cc_fuzz definitions and builds them automatically. The actual fuzzer structure itself is very simple and consists of one main method (LLVMTestOneInput):

#include <stddef.h>

#include <stdint.h>

extern "C" int LLVMFuzzerTestOneInput(

const uint8_t *data,

size_t size) {

// Here you invoke the code to be fuzzed.

return 0;

}

This fuzzer gets automatically built into a binary and along with its static/dynamic dependencies (as specified in the Android build file) are packaged into a zip file which gets added to the main zip containing all fuzzers as shown in the example below.

Step 2: Ingest all fuzzers into Android builds

Once the fuzzers are found in the Android repository and they are built into binaries, the next step is to upload them to the cloud storage in preparation to run them on our backend. This process is run multiple times daily. The Android fuzzing infrastructure uses an open source continuous fuzzing framework (Clusterfuzz) to run fuzzers continuously on Android devices and emulators. In order to run the fuzzers on clusterfuzz, the fuzzers zip files are renamed after the build and the latest build gets to run (see diagram below):

The fuzzer zip file contains the fuzzer binary, corresponding dictionary as well as a subfolder containing its dependencies and the git revision numbers (sourcemap) corresponding to the build. Sourcemaps are used to enhance stack traces and produce crash reports.

Step 3: Run fuzzers continuously and find bugs

Running fuzzers continuously is done through scheduled jobs where each job is associated with a set of physical devices or emulators. A job is also backed by a queue that represents the fuzzing tasks that need to be run. These tasks are a combination of running a fuzzer, reproducing a crash found in an earlier fuzzing run, or minimizing the corpus, among other tasks.

Each fuzzer is run for multiple hours, or until they find a crash. After the run, Haiku takes all of the interesting input discovered during the run and adds it to the fuzzer corpus. This corpus is then shared across fuzzer runs and grows over time. The fuzzer is then prioritized in subsequent runs according to the growth of new coverage and crashes found (if any). This ensures we provide the most effective fuzzers more time to run and find interesting crashes.

Step 4: Generate fuzzers line coverage

What good is a fuzzer if it’s not fuzzing the code you care about? To improve the quality of the fuzzer and to monitor the overall progress of Android fuzzing, two types of coverage metrics are calculated and available to Android developers. The first metric is for edge coverage which refers to edges in the Control Flow Graph (CFG). By instrumenting the fuzzer and the code being fuzzed, the fuzzing engine can track small snippets of code that get triggered every time execution flow reaches them. That way, fuzzing engines know exactly how many (and how many times) each of these instrumentation points got hit on every run so they can aggregate them and calculate the coverage.

INFO: Seed: 2859304549

INFO: Loaded 1 modules (773 inline 8-bit counters): 773 [0x5610921000, 0x5610921305),

INFO: Loaded 1 PC tables (773 PCs): 773 [0x5610921308,0x5610924358),

INFO: -max_len is not provided; libFuzzer will not generate inputs larger than 4096 bytes

INFO: A corpus is not provided, starting from an empty corpus

#2 INITED cov: 2 ft: 2 corp: 1/1b lim: 4 exec/s: 0 rss: 24Mb

#413 NEW cov: 3 ft: 3 corp: 2/9b lim: 8 exec/s: 0 rss: 24Mb L: 8/8 MS: 1 InsertRepeatedBytes-

#3829 NEW cov: 4 ft: 4 corp: 3/17b lim: 38 exec/s: 0 rss: 24Mb L: 8/8 MS: 1 ChangeBinInt-

...

Line coverage inserts instrumentation points specifying lines in the source code. Line coverage is very useful for developers as they can pinpoint areas in the code that are not covered and update their fuzzers accordingly to hit those areas in future fuzzing runs.

Drilling into any of the folders can show the stats per file:

Further clicking on one of the files shows the lines that were touched and lines that never got coverage. In the example below, the first line has been fuzzed ~5 million times, but the fuzzer never makes it into lines 3 and 4, indicating a gap in the coverage for this fuzzer.

We have dashboards internally that measure our fuzzing coverage across our entire codebase. In order to generate these coverage dashboards yourself, you follow these steps.

Another measurement of the quality of the fuzzers is how many fuzzing iterations can be done in one second. It has a direct relationship with the computation power and the complexity of the fuzz target. However, this parameter alone can not measure how good or effective the fuzzing is.

How we handle fuzzer bugs

Android fuzzing utilizes the Clusterfuzz fuzzing infrastructure to handle any found crashes and file a ticket to the Android security team. Android security makes an assessment of the crash based on the Android Severity Guidelines and then routes the vulnerability to the proper team for remediation. This entire process of finding the reproducible crash, routing to Android Security, and then assigning the issue to a team responsible can take as little as two hours, and up to a week depending on the type of crash and the severity of the vulnerability.

One example of a recent fuzzer success is (CVE 2022-20473), where an internal team wrote a 20-line fuzzer and submitted it to run on Android fuzzing infra. Within a day, the fuzzer was ingested and pushed to our fuzzing infrastructure to begin fuzzing, and shortly found a critical severity vulnerability! A patch for this CVE has been applied by the service team.

Why Android Continues to Invest in Fuzzing

Protection Against Code Regressions

The Android Open Source Project (AOSP) is a large and complex project with many contributors. As a result, there are thousands of changes made to the project every day. These changes can be anything from small bug fixes to large feature additions, and fuzzing helps to find vulnerabilities that may be inadvertently introduced and not caught during code review.

Continuous fuzzing has helped to find these vulnerabilities before they are introduced in production and exploited by attackers. One real-life example is (CVE-2023-21041), a vulnerability discovered by a fuzzer written three years ago. This vulnerability affected Android firmware and could have led to local escalation of privilege with no additional execution privileges needed. This fuzzer was running for many years with limited findings until a code regression led to the introduction of this vulnerability. This CVE has since been patched.

Protection against unsafe memory language pitfalls

Android has been a huge proponent of Rust, with Android 13 being the first Android release with the majority of new code in a memory safe language. The amount of new memory-unsafe code entering Android has decreased, but there are still millions of lines of code that remain, hence the need for fuzzing persists.

No One Code is Safe: Fuzzing code in memory-safe languages

Our work does not stop with non-memory unsafe languages, and we encourage fuzzer development in languages like Rust as well. While fuzzing won’t find common vulnerabilities that you would expect to see memory unsafe languages like C/C++, there have been numerous non-security issues discovered and remediated which contribute to the overall stability of Android.

Fuzzing Challenges

In addition to generic C/C++ binaries issues such as missing dependencies, fuzzers can have their own classes of problems:

Low executions per second: in order to fuzz efficiently, the number of mutations has to be in the order of hundreds per second otherwise the fuzzing will take a very long time to cover the code. We addressed this issue by adding a set of alerts that continuously monitor the health of the fuzzers as well as any sudden drop in coverage. Once a fuzzer is identified as underperforming, an automated email is sent to the fuzzer author with details to help them improve the fuzzer.

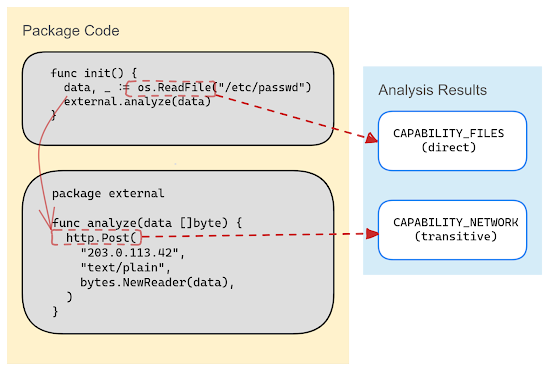

Fuzzing the wrong code: Like all resources, fuzzing resources are limited. We want to ensure that those resources give us the highest return, and that generally means devoting them towards fuzzing code that processes untrusted (i.e. potentially attacker controlled) inputs. This can cover any way that the phone can receive input including Bluetooth, NFC, USB, web, etc. Parsing structured input is particularly interesting since there is room for programming errors due to specs complexity. Code that generates output is not particularly interesting to fuzz. Similarly internal code that is not exposed publicly is also less of a security concern. We addressed this issue by identifying the most vulnerable code (see the following section).

What to fuzz

In order to fuzz the most important components of the Android source code, we focus on libraries that have:

- A history of vulnerabilities: the history should not be the distant history since context change but more focus on the last 12 months.

- Recent code changes: research indicates that more vulnerabilities are found in recently changed code than code that is more stable.

- Remote access: vulnerabilities in code that are reachable remotely can be critical.

- Privileged: Similarly to #3, vulnerabilities in code that runs in privileged processes can be critical.

How to submit a fuzzer to AOSP

We’re constantly writing and improving fuzzers internally to cover some of the most sensitive areas of Android, but there is always room for improvement. If you’d like to get started writing your own fuzzer for an area of AOSP, you’re welcome to do so to make Android more secure (example CL)::

- Get Android source code

- Have a testing phone?

- Write a fuzz target (follow guidelines in ‘What to fuzz’ section)

- Upload your fuzzer to AOSP.

Get started by reading our documentation on Fuzzing with libFuzzer and check your fuzzer into the Android Open Source project. If your fuzzer finds a bug, you can submit it to the Android Bug Bounty Program and could be eligible for a reward!