In the last few years, research in natural language generation, used for tasks like text summarization, has made tremendous progress. Yet, despite achieving high levels of fluency, neural systems can still be prone to hallucination (i.e.generating text that is understandable, but not faithful to the source), which can prohibit these systems from being used in many applications that require high degrees of accuracy. Consider an example from the Wikibio dataset, where the neural baseline model tasked with summarizing a Wikipedia infobox entry for Belgian football player Constant Vanden Stock summarizes incorrectly that he is an American figure skater.

While the process of assessing the faithfulness of generated text to the source content can be challenging, it is often easier when the source content is structured (e.g., in tabular format). Moreover, structured data can also test a model’s ability for reasoning and numerical inference. However, existing large scale structured datasets are often noisy (i.e., the reference sentence cannot be fully inferred from the tabular data), making them unreliable for the measurement of hallucination in model development.

In “ToTTo: A Controlled Table-To-Text Generation Dataset”, we present an open domain table-to-text generation dataset created using a novel annotation process (via sentence revision) along with a controlled text generation task that can be used to assess model hallucination. ToTTo (shorthand for “Table-To-Text”) consists of 121,000 training examples, along with 7,500 examples each for development and test. Due to the accuracy of annotations, this dataset is suitable as a challenging benchmark for research in high precision text generation. The dataset and code are open-sourced on our GitHub repo.

Table-to-Text Generation

ToTTo introduces a controlled generation task in which a given Wikipedia table with a set of selected cells is used as the source material for the task of producing a single sentence description that summarizes the cell contents in the context of the table. The example below demonstrates some of the many challenges posed by the task, such as numerical reasoning, a large open-domain vocabulary, and varied table structure.

Annotation Process

Designing an annotation process to obtain natural but also clean target sentences from tabular data is a significant challenge. Many datasets like Wikibio and RotoWire pair naturally occurring text heuristically with tables, a noisy process that makes it difficult to disentangle whether hallucination is primarily caused by data noise or model shortcomings. On the other hand, one can elicit annotators to write sentence targets from scratch, which are faithful to the table, but the resulting targets often lack variety in terms of structure and style.

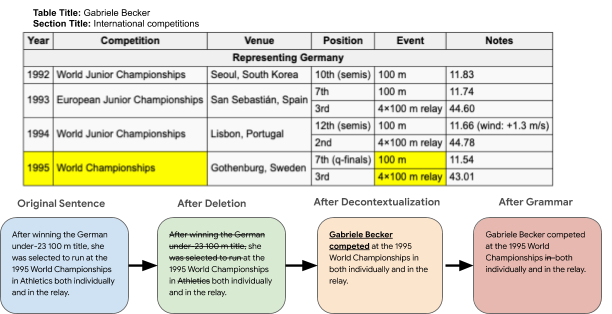

In contrast, ToTTo is constructed using a novel data annotation strategy in which annotators revise existing Wikipedia sentences in stages. This results in target sentences that are clean, as well as natural, containing interesting and varied linguistic properties. The data collection and annotation process begins by collecting tables from Wikipedia, where a given table is paired with a summary sentence collected from the supporting page context according to heuristics, such as word overlap between the page text and the table and hyperlinks referencing tabular data. This summary sentence may contain information not supported by the table and may contain pronouns with antecedents found in the table only, not the sentence itself.

The annotator then highlights the cells in the table that support the sentence and deletes phrases in the sentence that are not supported by the table. They also decontextualize the sentence so that it is standalone (e.g., with correct pronoun resolution) and correct grammar, where necessary.

We show that annotators obtain high agreement on the above task: 0.856 Fleiss Kappa for cell highlighting, and 67.0 BLEU for the final target sentence.

Dataset Analysis

We conducted a topic analysis on the ToTTo dataset over 44 categories and found that the Sports and Countries topics, each of which consists of a range of fine-grained topics, e.g., football/olympics for sports and population/buildings for countries, together comprise 56.4% of the dataset. The other 44% is composed of a much more broad set of topics, including Performing Arts, Transportation, and Entertainment.

Furthermore, we conducted a manual analysis of the different types of linguistic phenomena in the dataset over 100 randomly chosen examples. The table below summarizes the fraction of examples that require reference to the page and section titles, as well as some of the linguistic phenomena in the dataset that potentially pose new challenges to current systems.

| Linguistic Phenomena | Percentage |

| Require reference to page title | 82% |

| Require reference to section title | 19% |

| Require reference to table description | 3% |

| Reasoning (logical, numerical, temporal etc.) | 21% |

| Comparison across rows/columns/cells | 13% |

| Require background information | 12% |

Baseline Results

We present some baseline results of three state-of-the-art models from the literature (BERT-to-BERT, Pointer Generator, and the Puduppully 2019 model) on two evaluation metrics, BLEU and PARENT. In addition to reporting the score on the overall test set, we also evaluate each model on a more challenging subset consisting of out-of-domain examples. As the table below shows, the BERT-to-BERT model performs best in terms of both BLEU and PARENT. Moreover, all models achieve considerably lower performance on the challenge set indicating the challenge of out-of-domain generalization.

| BLEU | PARENT | BLEU | PARENT | |

| Model | (overall) | (overall) | (challenge) | (challenge) |

| BERT-to-BERT | 43.9 | 52.6 | 34.8 | 46.7 |

| Pointer Generator | 41.6 | 51.6 | 32.2 | 45.2 |

| Puduppully et al. 2019 | 19.2 | 29.2 | 13.9 | 25.8 |

While automatic metrics can give some indication of performance, they are not currently sufficient for evaluating hallucination in text generation systems. To better understand hallucination, we manually evaluate the top performing baseline, to determine how faithful it is to the content in the source table, under the assumption that discrepancies indicate hallucination. To compute the “Expert” performance, for each example in our multi-reference test set, we held out one reference and asked annotators to compare it with the other references for faithfulness. As the results show, the top performing baseline appears to hallucinate information ~20% of the time.

| Faithfulness | Faithfulness | |

| Model | (overall) | (challenge) |

| Expert | 93.6 | 91.4 |

| BERT-to-BERT | 76.2 | 74.2 |

Model Errors and Challenges

In the table below, we present a selection of the observed model errors to highlight some of the more challenging aspects of the ToTTo dataset. We find that state-of-the-art models struggle with hallucination, numerical reasoning, and rare topics, even when using cleaned references (errors in red). The last example shows that even when the model output is correct it is sometimes not as informative as the original reference which contains more reasoning about the table (shown in blue).

| Reference | Model Prediction |

| in the 1939 currie cup, western province lost to transvaal by 17–6 in cape town. | the first currie cup was played in 1939 in transvaal1 at new- lands, with western province winning 17–6. |

| a second generation of micro- drive was announced by ibm in 2000 with increased capacities at 512 mb and 1 gb. | there were 512 microdrive models in 2000: 1 gigabyte. |

| the 1956 grand prix motorcy- cle racing season consisted of six grand prix races in five classes: 500cc, 350cc, 250cc, 125cc and sidecars 500cc. | the 1956 grand prix motorcycle racing season consisted of eight grand prix races in five classes: 500cc, 350cc, 250cc, 125cc and sidecars 500cc. |

| in travis kelce’s last collegiate season, he set personal career highs in receptions (45), re- ceiving yards (722), yards per receptions (16.0) and receiving touchdowns (8). | travis kelce finished the 2012 season with 45 receptions for 722 yards (16.0 avg.) and eight touchdowns. |

Conclusion

In this work, we presented ToTTo, a large, English table-to-text dataset that presents both a controlled generation task and a data annotation process based on iterative sentence revision. We also provided several state-of-the-art baselines, and demonstrated ToTTo could be a useful dataset for modeling research as well as for developing evaluation metrics that can better detect model improvements.

In addition to the proposed task, we hope our dataset can also be helpful for other tasks such as table understanding and sentence revision. ToTTo is available at our GitHub repo.

Acknowledgements

The authors wish to thank Ming-Wei Chang, Jonathan H. Clark, Kenton Lee, and Jennimaria Palomaki for their insightful discussions and support. Many thanks also to Ashwin Kakarla and his team for help with the annotations.