Computers give easy access to vast amounts of information across multiple languages and modalities (from text to images to video), which has made them highly influential tools for learning and research. However, when researching a topic or writing a term paper, gathering all the information you need from a variety of sources on the Internet can be time-consuming and, at times, a distraction from the writing process.

That’s why we developed algorithms for Explore in Docs, a collaboration between the Coauthor and Apps teams that uses powerful Google infrastructure, best-in-class information retrieval, machine learning, and machine translation technologies to assemble the relevant information and sources for a research paper, all within the document. Explore in Docs suggests relevant content (topics, images, and snippets) based on the content of the document, allowing the user to focus on critical thinking and idea development.

More than just a Search

Suggesting material that is relevant to the content in a Google Doc is a difficult problem. A naive approach would be to treat the content of the document as a search query. However, search engines are not designed to accept large blocks of text as queries, so they might truncate the query or focus on the wrong words. The challenge is therefore not only to identify relevant search terms based on the overall content of the document, but also to provide related topics that may be useful.

To tackle this, the Coauthor team built algorithms that associate external content with the topics in a document (entities and abstract concepts) and assign a relative importance to each of them. This is accomplished by creating a “target” in a topic vector space that incorporates not only the topics you are writing about but also related topics, which yields a variety of search terms that include both. Then, each returned search result (piece of text, image, etc.) is embedded in the same vector space, and the items closest to the target in that space are suggested to the user.
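The ranking step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the Coauthor team's implementation: the three-dimensional vectors, the topic weights, the use of a weighted sum for the target, the choice of cosine similarity as the closeness measure, and the names `build_target` and `rank_results` are all hypothetical.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

def build_target(topic_weights, topic_vectors):
    """Combine weighted topic vectors into one target vector (assumed: weighted sum)."""
    dim = len(next(iter(topic_vectors.values())))
    target = [0.0] * dim
    for topic, weight in topic_weights.items():
        for i, x in enumerate(topic_vectors[topic]):
            target[i] += weight * x
    return target

def rank_results(target, results, top_k=2):
    """Return the names of the top_k results closest to the target."""
    scored = sorted(
        ((cosine(target, vec), name) for name, vec in results.items()),
        reverse=True,
    )
    return [name for _, name in scored[:top_k]]

# Toy, hand-made embeddings (hypothetical values, for illustration only).
TOPIC_VECTORS = {
    "monarch butterfly": [1.0, 0.0, 0.0],
    "milkweed": [0.0, 1.0, 0.0],
}
# Document topic plus a related topic, each with an assumed relative importance.
doc_topics = {"monarch butterfly": 0.7, "milkweed": 0.3}

RESULTS = {
    "snippet on monarch migration": [0.9, 0.2, 0.0],
    "image of milkweed pods": [0.1, 0.9, 0.1],
    "article on index funds": [0.0, 0.1, 0.9],
}

target = build_target(doc_topics, TOPIC_VECTORS)
suggestions = rank_results(target, RESULTS)
print(suggestions)
```

Because the target mixes the document topic with a related one, the milkweed image outranks the unrelated article even though the document itself is about butterflies.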

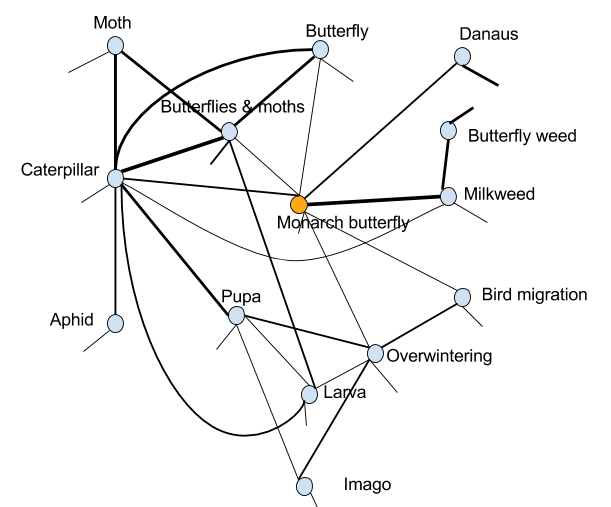

For example, if you’re writing about monarch butterflies, our algorithms find that monarch butterfly and milkweed plant are related to each other. This is done by analyzing the statistics of discourse on the web, collected from hundreds of billions of sentences from billions of webpages across dozens of languages. Note that these two are not semantically close (an insect versus a plant). An example of a set of learned relations is below:

The information you need, in multiple languages

Cross-lingual predictive search is another key aspect of what we have designed and built. If the relevant material is likely to be in languages other than that of the document, Google searches the web in those languages and translates the selected nuggets into the language of the document.

In the example pictured below, the user begins to type an essay in Docs about Claudia Neto and clicks on the “Explore” button to learn more about her. Explore returns relevant “Topics” and “Images” as well as “Related Research” sourced from multiple websites. Also, Explore suggests Dolores Silva as a related topic since she and Claudia have high mutual information in multilingual web text (statistics collected from more than 10 billion webpages).
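To make the idea of relatedness from co-occurrence statistics concrete, here is a minimal sketch that scores topic pairs with pointwise mutual information (PMI). PMI is a simple stand-in chosen for illustration; the post does not say which mutual-information estimator is used in production. The toy "sentences" and the function name `pmi_table` are assumptions.

```python
import math
from collections import Counter
from itertools import combinations

def pmi_table(sentences):
    """Score each co-occurring topic pair with PMI.

    `sentences` is a list of sets, each holding the topics mentioned
    in one sentence. PMI(a, b) = log2(p(a, b) / (p(a) * p(b))).
    """
    n = len(sentences)
    single, pair = Counter(), Counter()
    for topics in sentences:
        single.update(topics)
        # Sort so each pair has one canonical (alphabetical) key.
        pair.update(combinations(sorted(topics), 2))
    return {
        (a, b): math.log2((count / n) / ((single[a] / n) * (single[b] / n)))
        for (a, b), count in pair.items()
    }

# Hypothetical toy corpus: topic mentions per sentence.
SENTENCES = [
    {"monarch butterfly", "milkweed"},
    {"monarch butterfly", "milkweed"},
    {"monarch butterfly", "weather"},
    {"weather"},
    {"weather"},
    {"milkweed"},
]

scores = pmi_table(SENTENCES)
for pair_key, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(pair_key, round(score, 3))
```

A positive score means the two topics appear together more often than chance would predict, which is the kind of signal that can link an insect to a plant, or one person to another, without any similarity in meaning.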

Because Swedish ranks high among languages that have significant discourse on Claudia Neto, our algorithms search Swedish content on the Internet for any additional information about her that might not be available on English websites. Before returning information obtained from the Swedish websites, we use Google Translate to render the nugget in the user’s preferred language (in this case, English). Related Research is currently available in 10 languages with more to come in the future.
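The flow described above (pick the languages with significant discourse on a topic, search in each, translate the results back) might be sketched as follows. Everything here is hypothetical: the `discourse_share` numbers, the thresholds, and the `search` and `translate` callables are stand-ins supplied by the caller, not real Google APIs.

```python
def pick_search_languages(discourse_share, doc_lang, max_extra=2, min_share=0.1):
    """Return the document language plus the top other languages by discourse share."""
    ranked = sorted(discourse_share.items(), key=lambda kv: kv[1], reverse=True)
    extra = [lang for lang, share in ranked if lang != doc_lang and share >= min_share]
    return [doc_lang] + extra[:max_extra]

def cross_lingual_snippets(topic, doc_lang, discourse_share, search, translate):
    """Search in each selected language and translate foreign snippets to doc_lang."""
    snippets = []
    for lang in pick_search_languages(discourse_share, doc_lang):
        for text in search(topic, lang):
            if lang != doc_lang:
                text = translate(text, lang, doc_lang)
            snippets.append(text)
    return snippets

# Stub backends so the sketch runs on its own (assumed interfaces).
def fake_search(topic, lang):
    return [f"<{lang} snippet about {topic}>"]

def fake_translate(text, src, dst):
    return f"[{src}->{dst}] {text}"

# Hypothetical share of web discourse about the topic, per language code.
share = {"en": 0.30, "sv": 0.40, "pt": 0.25, "de": 0.05}

languages = pick_search_languages(share, "en")
snippets = cross_lingual_snippets("example topic", "en", share, fake_search, fake_translate)
print(languages)
print(snippets)
```

Passing the search and translation backends in as arguments keeps the language-selection logic testable on its own and avoids implying any particular service interface.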

Explore in Docs is a useful tool that can be used worldwide, in all forms of industry and at all levels of education. Try out the Explore feature the next time you create a document, and check back for more exciting progress from the Coauthor team!