The computational understanding of user interfaces (UI) is a key step towards achieving intelligent UI behaviors. Previously, we investigated various UI modeling tasks, including widget captioning, screen summarization, and command grounding, that address diverse interaction scenarios such as automation and accessibility. We also demonstrated how machine learning can help user experience practitioners improve UI quality by diagnosing tappability confusion and providing insights for improving UI design. These works, along with those developed by others in the field, have showcased how deep neural networks can potentially transform end user experiences and interaction design practice.

With these successes in addressing individual UI tasks, a natural question is whether we can obtain a foundational understanding of UIs that can benefit specific UI tasks. As our first attempt to answer this question, we developed a multi-task model to address a range of UI tasks simultaneously. Although the work made some progress, a few challenges remain. Previous UI models rely heavily on UI view hierarchies (i.e., the structure or metadata of a mobile UI screen, like the Document Object Model for a webpage) that allow a model to directly acquire detailed information about UI objects on the screen (e.g., their types, text content and positions). This metadata has given previous models advantages over their vision-only counterparts. However, view hierarchies are not always accessible, and are often corrupted with missing object descriptions or misaligned structure information. As a result, despite the short-term gains from using view hierarchies, relying on them may ultimately hamper model performance and applicability. In addition, previous models had to deal with heterogeneous information across datasets and UI tasks, which often resulted in complex model architectures that were difficult to scale or generalize across tasks.

In “Spotlight: Mobile UI Understanding using Vision-Language Models with a Focus”, accepted for publication at ICLR 2023, we present a vision-only approach that aims to achieve general UI understanding completely from raw pixels. We introduce a unified approach to represent diverse UI tasks, the information for which can be universally represented by two core modalities: vision and language. The vision modality captures what a person would see from a UI screen, and the language modality can be natural language or any token sequences related to the task. We demonstrate that Spotlight substantially improves accuracy on a range of UI tasks, including widget captioning, screen summarization, command grounding and tappability prediction.

Spotlight Model

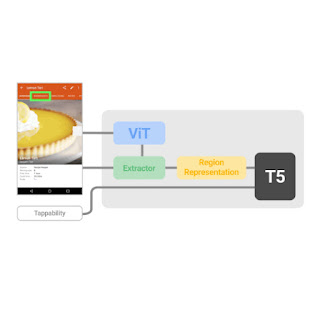

The Spotlight model input is a tuple of three items: the screenshot, the region of interest on the screen, and the text description of the task. The output is a text description or response about the region of interest. This simple input and output representation is expressive enough to capture various UI tasks and allows scalable model architectures. It also supports a spectrum of learning strategies and setups, from task-specific fine-tuning to multi-task learning and few-shot learning. The Spotlight model, as illustrated in the above figure, leverages existing architecture building blocks such as ViT and T5 that are pre-trained in the high-resourced, general vision-language domain, which allows us to build on top of the success of these general domain models.
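To make the input and output representation concrete, here is a minimal sketch of how two of the tasks could be packed into the same (screenshot, region, task text) tuple with a text target. The class name, the file names, the prompt wording, the box coordinates and the choice of normalized coordinates are all hypothetical and are not taken from the paper; only the two target strings come from the examples discussed later in this post.

```python
from dataclasses import dataclass
from typing import Tuple

# (left, top, right, bottom). Normalizing to [0, 1] is an assumption.
BoundingBox = Tuple[float, float, float, float]

# For screen-level tasks the region of interest is the whole screen.
FULL_SCREEN: BoundingBox = (0.0, 0.0, 1.0, 1.0)


@dataclass(frozen=True)
class SpotlightExample:
    """One training or inference example in the unified format (hypothetical)."""
    screenshot_path: str   # stand-in for the raw screenshot pixels
    region: BoundingBox    # region of interest on the screen
    task_text: str         # text describing the task (hypothetical wording)
    target_text: str       # text the model is expected to produce


def widget_captioning(screenshot_path: str, region: BoundingBox,
                      caption: str) -> SpotlightExample:
    return SpotlightExample(screenshot_path, region, "caption the widget", caption)


def screen_summarization(screenshot_path: str, summary: str) -> SpotlightExample:
    return SpotlightExample(screenshot_path, FULL_SCREEN, "summarize the screen", summary)


# Illustrative values: file names and box coordinates are made up.
caption_example = widget_captioning(
    "teams.png", (0.05, 0.30, 0.15, 0.36), "select Chelsea team")
summary_example = screen_summarization(
    "tutorial.png", "page displaying the tutorial of a learning app")
```

Because every task shares one record type, a single model and a single data pipeline can in principle serve all of them; only the region and the text fields change.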

UI tasks are often concerned with a specific object or area on the screen, so a model must be able to focus on that object or area. We therefore add a Focus Region Extractor to a vision-language model, which enables the model to concentrate on the region in light of the screen context.

In particular, we design a Region Summarizer that acquires a latent representation of a screen region based on ViT encodings by using attention queries generated from the bounding box of the region (see paper for more details). Specifically, each coordinate (a scalar value, i.e., the left, top, right or bottom) of the bounding box, denoted as a yellow box on the screenshot, is first embedded via a multilayer perceptron (MLP) as a collection of dense vectors, and then fed to a Transformer model along with its coordinate-type embedding. The dense vectors and their corresponding coordinate-type embeddings are color coded to indicate their affiliation with each coordinate value. Coordinate queries then attend to screen encodings output by ViT via cross attention, and the final attention output of the Transformer is used as the region representation for the downstream decoding by T5.

A target region on the screen is summarized by using its bounding box to query into screen encodings from ViT via attentional mechanisms.
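The Region Summarizer described above can be sketched in a few lines of NumPy. This is a simplified illustration under several assumptions, not the paper's implementation: the weights are random stand-ins for learned parameters, the coordinate-type embedding is added to (rather than concatenated with) the dense vectors, the Transformer is reduced to one single-head cross-attention step without learned projections, and the sizes `HIDDEN` and `VECS_PER_COORD` are hypothetical.

```python
import numpy as np

HIDDEN = 16          # model width (hypothetical)
VECS_PER_COORD = 2   # dense vectors produced per coordinate (hypothetical)
COORD_NAMES = ("left", "top", "right", "bottom")


def init_params(rng: np.random.Generator) -> dict:
    """Random stand-ins for weights that would normally be learned."""
    return {
        "w1": rng.normal(0.0, 0.1, size=(1, HIDDEN)),
        "b1": np.zeros(HIDDEN),
        "w2": rng.normal(0.0, 0.1, size=(HIDDEN, VECS_PER_COORD * HIDDEN)),
        "b2": np.zeros(VECS_PER_COORD * HIDDEN),
        "coord_type": rng.normal(0.0, 0.1, size=(len(COORD_NAMES), HIDDEN)),
    }


def coordinate_queries(bbox, params: dict) -> np.ndarray:
    """Embed each scalar coordinate with an MLP and tag it with its type."""
    coords = np.asarray(bbox, dtype=np.float64).reshape(4, 1)
    hidden = np.tanh(coords @ params["w1"] + params["b1"])   # (4, HIDDEN)
    dense = hidden @ params["w2"] + params["b2"]             # (4, V * HIDDEN)
    dense = dense.reshape(4, VECS_PER_COORD, HIDDEN)
    # Assumption: the coordinate-type embedding is added to every dense vector.
    dense = dense + params["coord_type"][:, None, :]
    return dense.reshape(4 * VECS_PER_COORD, HIDDEN)


def cross_attention(queries: np.ndarray, screen_encodings: np.ndarray):
    """Single-head scaled dot-product attention from queries to screen patches."""
    scores = queries @ screen_encodings.T / np.sqrt(queries.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)   # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ screen_encodings, weights


rng = np.random.default_rng(0)
params = init_params(rng)
screen_encodings = rng.normal(size=(9, HIDDEN))   # stand-in for ViT output, 9 patches
queries = coordinate_queries((0.1, 0.2, 0.5, 0.4), params)
region_repr, attn = cross_attention(queries, screen_encodings)
```

In this sketch `region_repr` plays the role of the region representation handed to the text decoder, and `attn` holds one attention distribution over screen patches per coordinate query.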

Results

We pre-train the Spotlight model using two unlabeled datasets: an internal dataset based on the C4 corpus, with 80 million web pages, and an internal mobile dataset, with 2.5 million mobile UI screens. We then separately fine-tune the pre-trained model for each of the four downstream tasks (captioning, summarization, grounding, and tappability). For widget captioning and screen summarization tasks, we report CIDEr scores, which measure how similar a model text description is to a set of references created by human raters. For command grounding, we report accuracy, which measures the percentage of times the model successfully locates a target object in response to a user command. For tappability prediction, we report F1 scores, which measure the model’s ability to tell tappable objects from untappable ones.
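As a reminder of what two of these metrics compute, here is a minimal sketch of F1 for a binary tappable/untappable decision and of grounding accuracy as a percentage. It assumes that each grounding prediction has already been reduced to the identifier of the selected object, which the post does not specify; the function names and the toy labels are illustrative only. CIDEr is omitted because it requires n-gram statistics over reference sets.

```python
from typing import Sequence


def f1_score(y_true: Sequence[bool], y_pred: Sequence[bool]) -> float:
    """F1 for the positive (tappable) class, in the range [0, 1]."""
    tp = sum(t and p for t, p in zip(y_true, y_pred))
    fp = sum((not t) and p for t, p in zip(y_true, y_pred))
    fn = sum(t and (not p) for t, p in zip(y_true, y_pred))
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def grounding_accuracy(target_ids: Sequence[int],
                       predicted_ids: Sequence[int]) -> float:
    """Percentage of commands for which the predicted object is the target."""
    if len(target_ids) != len(predicted_ids) or not target_ids:
        raise ValueError("need two non-empty sequences of equal length")
    hits = sum(t == p for t, p in zip(target_ids, predicted_ids))
    return 100.0 * hits / len(target_ids)


# Toy labels: 2 true positives, 1 false positive, 1 false negative.
tappable_true = [True, True, False, False, True]
tappable_pred = [True, False, False, True, True]
f1 = f1_score(tappable_true, tappable_pred)

# Toy grounding run: 3 of 4 commands resolved to the right object.
accuracy = grounding_accuracy([3, 1, 4, 1], [3, 1, 2, 1])
```

With these toy inputs precision and recall are both 2/3, so F1 is also 2/3, and the grounding accuracy is 75%.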

In this experiment, we compare Spotlight with several benchmark models. Widget Caption uses view hierarchy and the image of each UI object to generate a text description for the object. Similarly, Screen2Words uses view hierarchy and the screenshot as well as auxiliary features (e.g., app description) to generate a summary for the screen. In the same vein, VUT combines screenshots and view hierarchies for performing multiple tasks. Finally, the original Tappability model leverages object metadata from view hierarchy and the screenshot to predict object tappability. Taperception, a follow-up model of Tappability, uses a vision-only tappability prediction approach. We examine two Spotlight variants that differ in the size of their ViT building block: B/16 and L/16. Spotlight L/16 exceeds the previous best result on all four UI modeling tasks, by a wide margin on captioning, summarization and grounding and by a narrow one on tappability.

| | Model | Captioning | Summarization | Grounding | Tappability |
|---|---|---|---|---|---|
| Baselines | Widget Caption | 97 | - | - | - |
| | Screen2Words | - | 61.3 | - | - |
| | VUT | 99.3 | 65.6 | 82.1 | - |
| | Taperception | - | - | - | 85.5 |
| | Tappability | - | - | - | 87.9 |
| Spotlight | B/16 | 136.6 | 103.5 | 95.7 | 86.9 |
| | L/16 | 141.8 | 106.7 | 95.8 | 88.4 |
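To read the margins off this table, the short script below recomputes, for each task, the gap between a Spotlight variant and the strongest baseline that reports that task. The numbers are copied from the table; the dictionary layout and function names are just one convenient way to organize them.

```python
TASKS = ("captioning", "summarization", "grounding", "tappability")

# Scores copied from the single-task results table; missing entries are omitted.
BASELINES = {
    "Widget Caption": {"captioning": 97.0},
    "Screen2Words": {"summarization": 61.3},
    "VUT": {"captioning": 99.3, "summarization": 65.6, "grounding": 82.1},
    "Taperception": {"tappability": 85.5},
    "Tappability": {"tappability": 87.9},
}
SPOTLIGHT = {
    "B/16": {"captioning": 136.6, "summarization": 103.5,
             "grounding": 95.7, "tappability": 86.9},
    "L/16": {"captioning": 141.8, "summarization": 106.7,
             "grounding": 95.8, "tappability": 88.4},
}


def best_baseline(task: str):
    """Return (model name, score) of the strongest baseline reporting `task`."""
    candidates = [(name, scores[task])
                  for name, scores in BASELINES.items() if task in scores]
    return max(candidates, key=lambda item: item[1])


def margins(variant: str) -> dict:
    """Spotlight score minus best baseline score, per task, to one decimal."""
    return {task: round(SPOTLIGHT[variant][task] - best_baseline(task)[1], 1)
            for task in TASKS}


for variant in SPOTLIGHT:
    print(variant, margins(variant))
```

For L/16 the margins are 42.5, 41.1, 13.7 and 0.5 points respectively, while B/16 trails the original Tappability model by 1.0 point on tappability prediction.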

We then pursue a more challenging setup in which the model learns multiple tasks simultaneously, because a multi-task model can substantially reduce the model footprint. As shown in the table below, our model still performs competitively.

| Model | Captioning | Summarization | Grounding | Tappability |
|---|---|---|---|---|
| VUT multi-task | 99.3 | 65.1 | 80.8 | - |
| Spotlight B/16 | 140 | 102.7 | 90.8 | 89.4 |
| Spotlight L/16 | 141.3 | 99.2 | 94.2 | 89.5 |

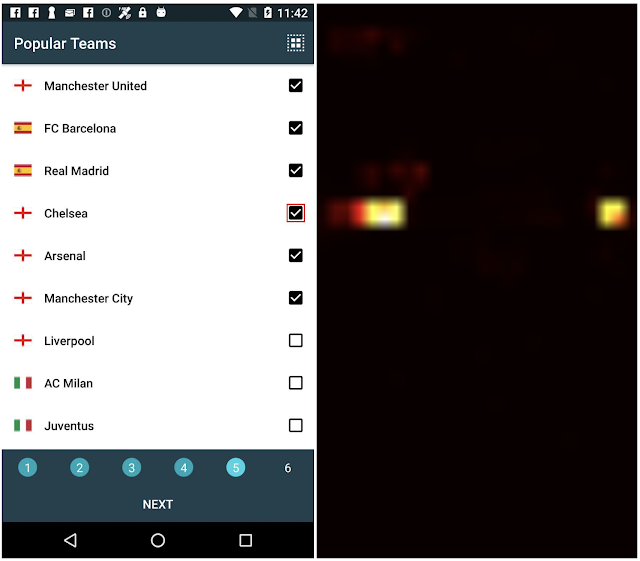

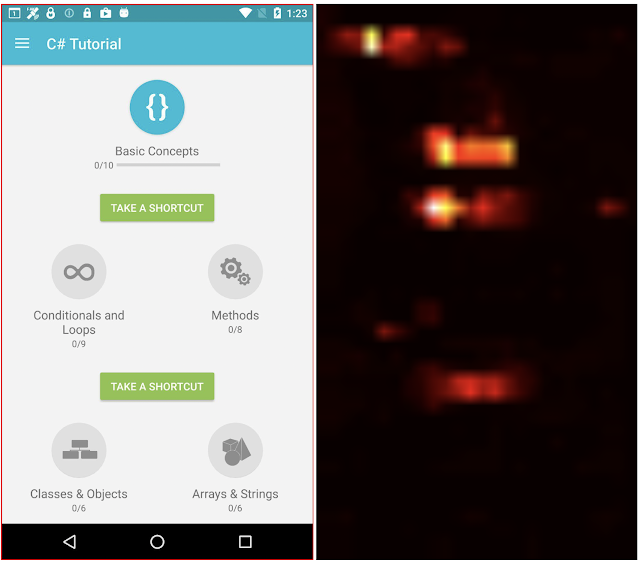

To understand how the Region Summarizer enables Spotlight to focus on a target region and relevant areas on the screen, we analyze the attention weights (which indicate where the model attention is on the screenshot) for both widget captioning and screen summarization tasks. In the widget captioning example, the model predicts “select Chelsea team” for the checkbox on the left side, highlighted with a red bounding box. We can see from its attention heatmap (which illustrates the distribution of attention weights) on the right that the model learns to attend not only to the target region of the checkbox, but also to the text “Chelsea” on the far left to generate the caption. For the screen summarization example, the model predicts “page displaying the tutorial of a learning app” given the screenshot on the left. In this example, the target region is the entire screen, and the model learns to attend to important parts of the screen for summarization.
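One plausible way to turn attention weights into a heatmap like the ones discussed above is sketched here. It is an assumption about the visualization, not the authors' procedure: the weights of all queries are averaged, the per-patch values are laid out in row-major order on the patch grid, and the grid is upsampled to the screenshot size by simple block replication. The function name and the toy sizes are hypothetical.

```python
import numpy as np


def attention_heatmap(weights: np.ndarray, grid_hw, image_hw) -> np.ndarray:
    """Average per-query attention and upsample it to image resolution.

    weights:  (num_queries, num_patches) attention weights.
    grid_hw:  (rows, cols) of the patch grid, assumed row-major.
    image_hw: (height, width) of the output, divisible by the grid size.
    """
    rows, cols = grid_hw
    height, width = image_hw
    if weights.shape[1] != rows * cols:
        raise ValueError("number of patches does not match the grid")
    if height % rows or width % cols:
        raise ValueError("image size must be a multiple of the grid size")
    per_patch = weights.mean(axis=0)           # average over queries
    per_patch = per_patch / per_patch.sum()    # renormalize to sum to 1
    grid = per_patch.reshape(rows, cols)
    # Block replication: every patch value fills its own tile of pixels.
    return np.kron(grid, np.ones((height // rows, width // cols)))


# Toy case: two queries over a 2 x 2 patch grid, rendered as a 4 x 4 image.
toy_weights = np.array([[0.1, 0.7, 0.1, 0.1],
                        [0.2, 0.6, 0.1, 0.1]])
heatmap = attention_heatmap(toy_weights, grid_hw=(2, 2), image_hw=(4, 4))
```

In the toy case the top-right patch receives the most attention, so the top-right 2 x 2 tile of `heatmap` holds the largest values. Overlaying such an array on the screenshot with a color map gives a picture of where the model is looking.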

Conclusion

We demonstrate that Spotlight outperforms previous methods that use both screenshots and view hierarchies as the input, and establishes state-of-the-art results on multiple representative UI tasks. These tasks range from accessibility and automation to interaction design and evaluation. Our vision-only approach for mobile UI understanding alleviates the need to use view hierarchy, allows the architecture to easily scale and benefits from the success of large vision-language models pre-trained for the general domain. Compared to recent large vision-language model efforts such as Flamingo and PaLI, Spotlight is relatively small, and our experiments show a trend that larger models yield better performance. Spotlight can be easily applied to more UI tasks and can potentially advance many interaction and user experience tasks.

Acknowledgment

We thank Mandar Joshi and Tao Li for their help in processing the web pre-training dataset, and Chin-Yi Cheng and Forrest Huang for their feedback and for proofreading the paper. Thanks to Tom Small for his help in creating animated figures in this post.