Professional football player. Organizational psychologist. Mechanical engineer. Ice cream factory worker.

These careers may seem random, but they have something in common: they’re all part of the personal and professional histories of people who now work at Google data centers. These individuals, and their stories, take center stage in Season 2 of Where the Internet Lives, a podcast about the hidden world of data centers.

In Season 1, we pulled back the curtain to share how data centers work, what they mean to the communities that host them and our goal to run them on 24/7 carbon-free energy. In Season 2, we’re focusing on the lives and career journeys of ten people who help keep the internet running.

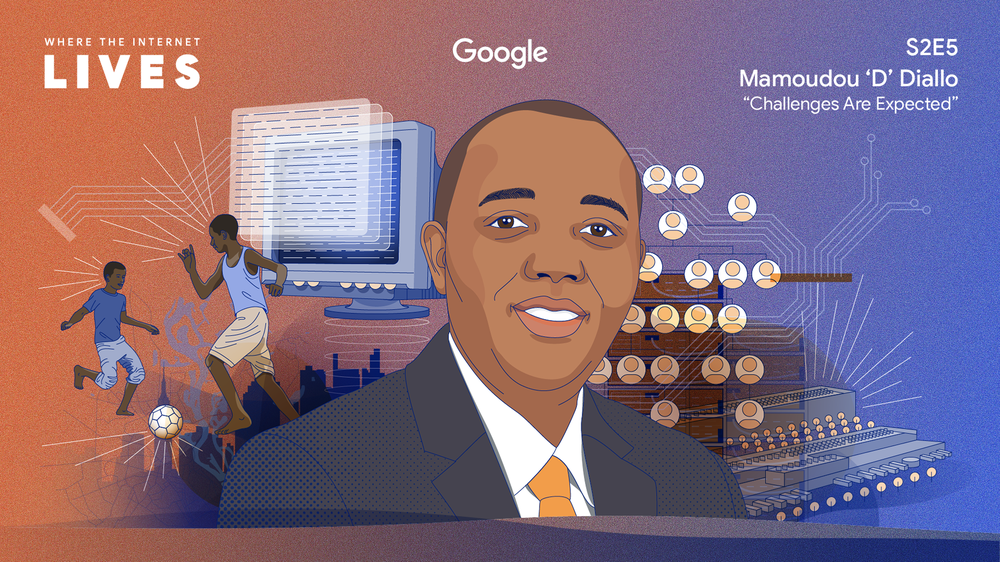

You’ll hear from folks like Mamoudou “D” Diallo, who grew up in Guinea-Conakry in West Africa. After scoring exceptionally well on a standardized test in high school, he traveled to Ukraine for college to study computer engineering — a subject that, up until that point, he had only read about in books. He later moved to Ohio for graduate school and spent 20 years working on technology in the financial sector. He has since shifted to the tech industry, and is now the site manager for Google’s data center in New Albany, Ohio.
