Welcome to #IamaGDE - a series of spotlights presenting Google Developer Experts (GDEs) from across the globe. Discover their stories, passions, and highlights of their community work.

Leigh Johnson turned her childhood love of Geocities and Neopets into a web development career, and then trained her focus on Machine Learning. Now, she’s a staff software engineer at Slack, a Google Developer Expert in Web and Machine Learning, and founder of Print Nanny, an automated failure detection and monitoring system for 3D printers.

Meet Leigh Johnson, Google Developer Expert in Web and Machine Learning.

The early days

Leigh Johnson grew up in the Bronx, NY, and got an early start in web development when she became captivated by Geocities and Neopets in elementary school.

“I loved the power of being able to put something online that other people could see, using just HTML and CSS,” she says.

She started college and studied Latin, but it wasn’t the right fit for her, so she dropped out and launched her own business building WordPress sites for small businesses, like local restaurants putting their menus online for the first time or taking orders through a form.

“I was 18, running around a data center trying to rack servers and teaching myself DNS to serve my customer base, which was small business owners,” she says. “I ran my business for five years, until companies like Squarespace and Wix started to edge me out of the market a little bit.”

Leigh went on to chase her dream of working in the video game industry, where she got exposed to low-level C++ programming, graphics engines, and basic statistics, which led her to machine learning.

Machine learning

At the video game studio where she worked, Leigh got into Bayesian inference.

“It’s old school machine learning, where you try to predict things based on the probability of previous events,” she explains. “You look at past events and try to predict the probability of future events, and I did this for marketing offers—what’s the likelihood you’d purchase a yellow hat to match your yellow pants?”
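
As a rough illustration of the idea Leigh describes (not her actual model, which the article does not detail), the likelihood of a purchase can be estimated from past events by counting and then smoothing with a Beta prior. The function name `purchase_probability`, the prior values, and the sample purchase history below are all hypothetical.

```python
def purchase_probability(history, owned_item, offered_item,
                         prior_yes=1.0, prior_no=1.0):
    """Posterior mean of P(buys offered_item | owns owned_item).

    Assumes a Beta(prior_yes, prior_no) prior updated with counts
    from past baskets. Hypothetical helper for illustration only.
    """
    bought = 0
    total = 0
    for basket in history:
        if owned_item in basket:
            total += 1
            if offered_item in basket:
                bought += 1
    return (bought + prior_yes) / (total + prior_yes + prior_no)


# Made-up past events: each set is one player's purchases.
history = [
    {"yellow pants", "yellow hat"},
    {"yellow pants"},
    {"yellow pants", "yellow hat"},
    {"red shirt"},
]

p = purchase_probability(history, "yellow pants", "yellow hat")
print(round(p, 2))  # 0.6
```

With no relevant history at all, the estimate falls back to the prior (0.5 here), which is one reason a Bayesian approach can be convenient when data is sparse.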

In the first month or two of trying email offers, the company made more small dollar sales than they typically made in a year.

“I realized, this is powerful dark magic; I must learn more,” Leigh says.

She continued working for tech startups like Ansible, which was acquired by Red Hat, and Dave.com, doing heavy data lifting.

“Everything about machine learning is powered by being able to manipulate and get data from point A to point B,” she says.

Today, Leigh works on machine learning and infrastructure at Slack and is a Google Developer Expert in machine learning. She also runs a side project: Print Nanny.

Print Nanny: Monitoring 3D printers

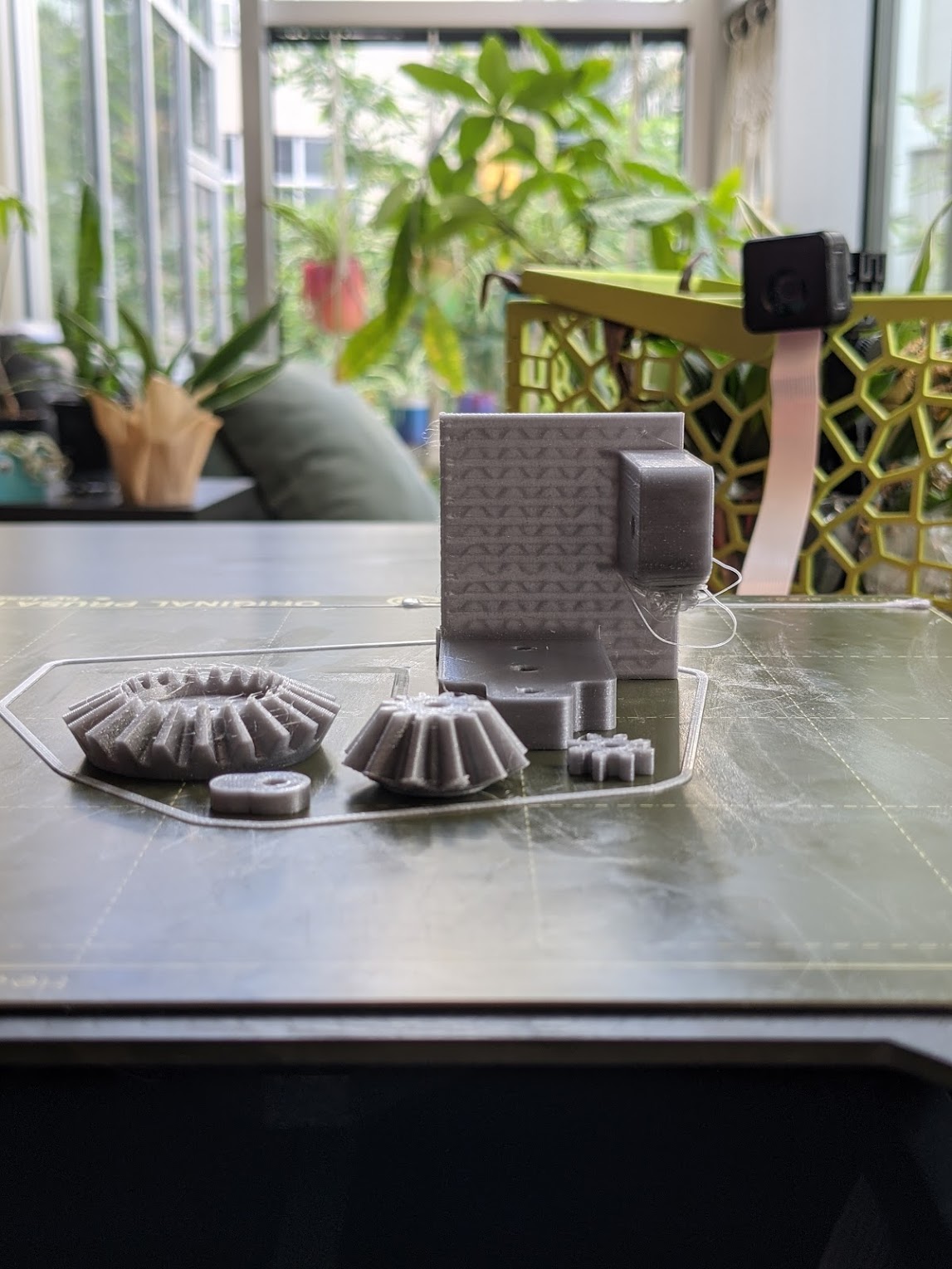

When Leigh got into 3D printing as a hobby during the COVID-19 shutdown, she discovered that 3D printers can be unreliable and lack sophisticated monitoring programs.

“When I assembled my 3D printer myself, I realized that over time, the calibration is going to change,” she says. “It's a very finicky process, and it didn't necessarily guarantee the quality of these traditional large batch manufacturing processes.”

She installed a nanny cam to watch her 3D printer and researched solutions, knowing from her machine learning experience that because 3D printers build a print up layer by layer, there’s no single point of failure: failure happens layer by layer, over time. So she wrote an algorithm to detect it.

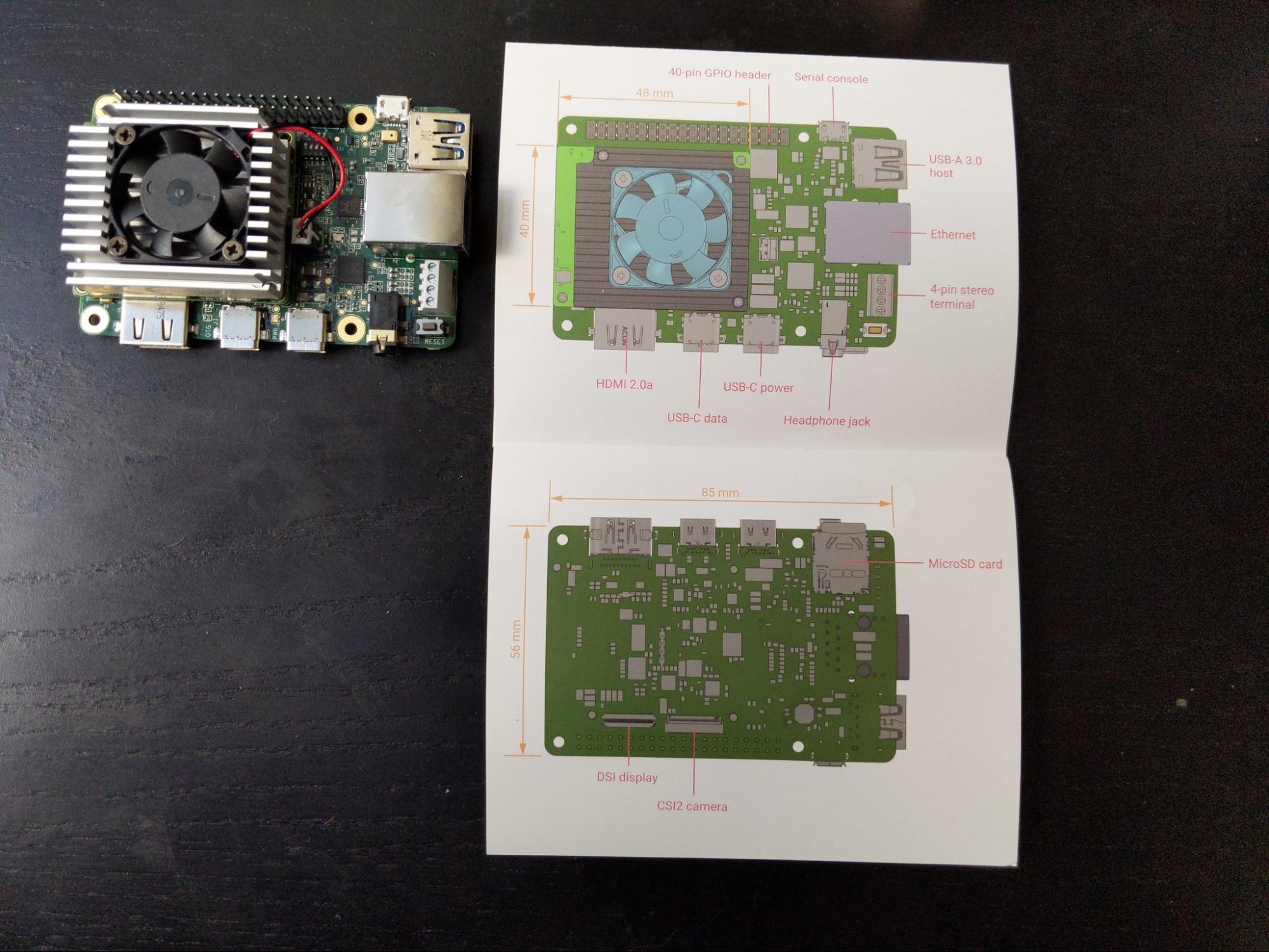

“I saw an opportunity to take some of the traditional machine intelligence strategies used by large manufacturers to ensure there’s a certain consistency and quality to the things they produce, and I made Print Nanny,” she says. “It uses a Raspberry Pi, a credit card-sized computer that costs $30. You can stick a computer vision model on one and do offline inference, which are basically predictions about what the camera sees. You can make predictions about whether a print will fail, help score calculations, and attenuate the print.”

Leigh built Print Nanny with Google Cloud Platform AutoML Vision, Google Cloud Platform IoT Core, TensorFlow Model Garden, and TensorFlow.js, using GCP credits provided by Google to develop and improve it.

When Print Nanny detects that a print is failing, the user receives a notification and can remotely pause or stop the printer.
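
Because failure builds up over time rather than at a single moment, one simple way to turn per-frame predictions into an alert is to average recent scores and notify only when the average stays high. The sketch below assumes a vision model that emits a failure score between 0 and 1 for each camera frame; the `FailureMonitor` class, window size, and threshold are hypothetical and are not taken from Print Nanny's code.

```python
from collections import deque


class FailureMonitor:
    """Rolling-mean alert over per-frame failure scores (illustrative only)."""

    def __init__(self, window=5, threshold=0.7):
        self.scores = deque(maxlen=window)  # keeps only the latest scores
        self.threshold = threshold

    def update(self, score):
        """Record one score in [0, 1]; return True when an alert should fire.

        An alert fires only once the window is full and its mean
        reaches the threshold, so a single noisy frame is ignored.
        """
        self.scores.append(score)
        window_full = len(self.scores) == self.scores.maxlen
        mean = sum(self.scores) / len(self.scores)
        return window_full and mean >= self.threshold


monitor = FailureMonitor(window=3, threshold=0.7)
frame_scores = [0.1, 0.9, 0.2, 0.8, 0.9, 0.9]  # made-up model outputs
alerts = [monitor.update(s) for s in frame_scores]
print(alerts)  # [False, False, False, False, False, True]
```

The isolated 0.9 on the second frame does not trigger anything; only the sustained run of high scores at the end does, at which point a real system could notify the user and offer to pause or stop the print.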

“Print Nanny is an automated failure detection system and monitoring system for 3D printers, which uses computer vision to detect defects and alert you to potential quality or safety hazards,” Leigh says.

Leigh has hired team members who are interested in machine learning to help her with the technical aspects of Print Nanny. Print Nanny currently has 2,100 users signed up for a closed beta, with 200 people actively using the beta version. Of that group, 80% are hobbyists and 20% are small business owners. Print Nanny is 100% open source.

Becoming a GDE

Leigh got involved with the GDE program about four years ago, when she began putting machine learning models on Raspberry Pis and building robots. She began writing tutorials about what she was learning.

“The things I was doing were quite hard: TensorFlow Lite, the mobile device [version] of TensorFlow. There was a missing documentation opportunity there, and my target platform, the Raspberry Pi, is a hobbyist platform, so there was a little bit of missing documentation there,” Leigh says. “For a hobbyist who wanted to pick up a Raspberry Pi and do a computer vision project for the first time, there was a missing tutorial there, so I started writing about what I was doing, and the response was tremendous.”

Leigh’s work caught the eye of Google staff research engineer Pete Warden, the technical lead of the TensorFlow Mobile team, who encouraged her, and she leveraged the GDE program to connect to Google experts on TensorFlow and machine learning. Google provides a machine learning course for developers and supports TensorFlow, in addition to its many AI products.

“I had no knowledge of graph programming or what it meant to adapt the low-level kernel operations that would run on a Raspberry Pi, or compiling software, and I learned all that through the GDE program,” Leigh says. “This program changed my life.”

Leigh’s favorite part of the GDE program is going to events like TensorFlow World, which she last attended in 2019, and GDE summits. She hadn’t travelled internationally until she was in her 20s, so the GDE program has connected her to the international community.

“It’s been life-changing,” she says. “I never would have had access to that many perspectives. It’s changed the way I view the world, my life, and myself. It’s very powerful.”

Leigh’s advice to future developers

Leigh recommends that people find the best environment for themselves and adopt a growth mindset.

“The best advice that I can give is to find your motivation and find the environment where you can be successful,” she says. “Surround yourself with people who are lifelong learners. When you cultivate an environment of learning around you, it's this positive, self-perpetuating process.”