More and more industries are beginning to recognize the value of local AI, where the speed of local inference allows considerable savings on bandwidth and cloud compute costs, and keeping data local preserves user privacy.

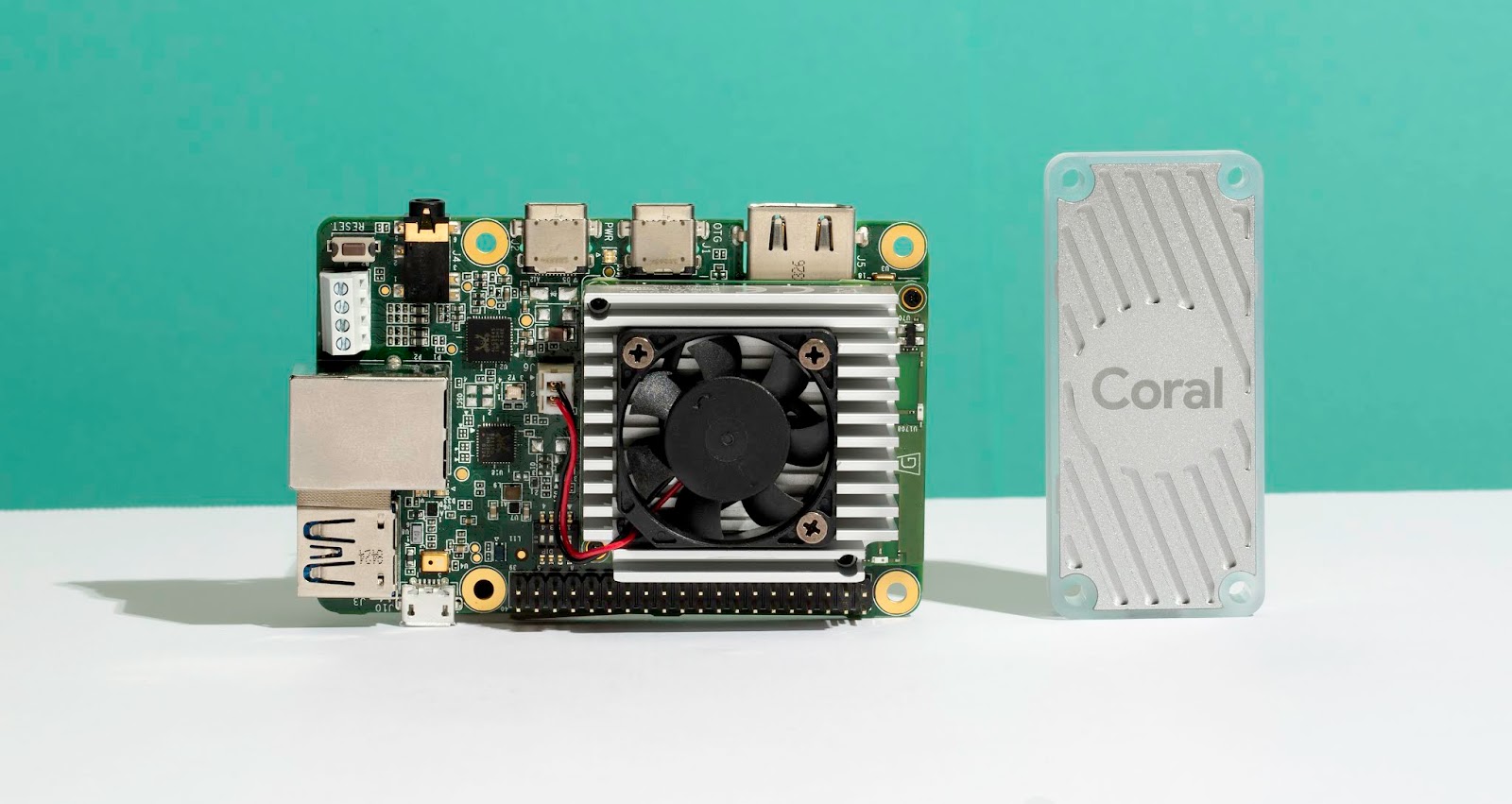

Last year, we launched Coral, our platform of hardware components and software tools that make it easy to prototype and scale local AI products. Our product portfolio includes the Coral Dev Board, USB Accelerator, and PCIe Accelerators, all now available in 36 countries.

Since our release, we’ve been excited by the diverse range of applications already built on Coral across a broad set of industries that range from healthcare to agriculture to smart cities. And for 2020, we’re excited to announce new additions to the Coral platform that will expand the possibilities even further.

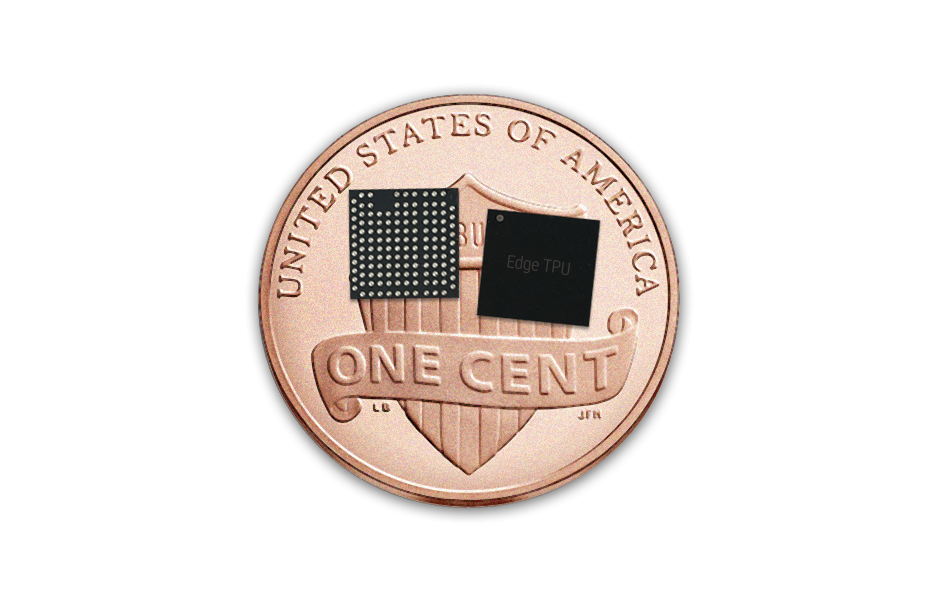

First up is the Coral Accelerator Module, an easy to integrate multi-chip package that encapsulates the Edge TPU ASIC. The module exposes both PCIe and USB interfaces and can easily integrate into custom PCB designs. We’ve been working closely with Murata to produce the module and you can see a demo at CES 2020 by visiting their booth at the Las Vegas Convention Center, Tech East, Central Plaza, CP-18. The Coral Accelerator Module will be available in the first half of 2020.

Coral Accelerator Module, a new multi-chip module with Google Edge TPU

Next, we’re announcing the Coral Dev Board Mini, which provides a smaller form-factor, lower-power, and lower-cost alternative to the Coral Dev Board. The Mini combines the new Coral Accelerator Module with the MediaTek 8167s SoC to create a board that excels at 720P video encoding/decoding and computer vision use cases. The board will be on display during CES 2020 at the MediaTek showcase located in the Venetian, Tech West, Level 3. The Coral Dev Board Mini will be available in the first half of 2020.

We're also offering new variations to the Coral System-on-Module, now available with 2GB and 4GB LPDDR4 RAM in addition to the original 1GB LPDDR4 configuration. We’ll be showcasing how the SoM can be used in smart city, manufacturing, and healthcare applications, as well as a few new SoC and MCU explorations we’ve been working on with the NXP team at CES 2020 in their pavilion located at the Las Vegas Convention Center, Tech East, Central Plaza, CP-18.

Finally, Asus has chosen the Coral SOM as the base to their Tinker Edge T product, a maker friendly single-board computer that features a rich set of I/O interfaces, multiple camera connectors, programmable LEDs, and color-coded GPIO header. The Tinker Edge T board will be available soon -- more details can be found here from Asus.

Come visit Coral at CES Jan 7-10 in Las Vegas:

- NXP exhibit (LVCC, Tech East, Central Plaza, CP-18)

- Mediatek exhibit (Venetian, Tech West, Level 3)

- Murata exhibit (LVCC, South Hall 2, MP26061)

And, as always, we are always looking for ways to improve the platform, so keep reaching out to us at [email protected].