The Stable channel has been updated to 124.0.6367.78/.79 for Windows and Mac and 124.0.6367.78 for Linux, which will roll out over the coming days/weeks. A full list of changes in this build is available in the Log.

The Extended Stable channel has been updated to 124.0.6367.78/.79 for Windows and Mac, which will roll out over the coming days/weeks.

Security Fixes and Rewards

Note: Access to bug details and links may be kept restricted until a majority of users are updated with a fix. We will also retain restrictions if the bug exists in a third-party library that other projects similarly depend on but haven’t yet fixed.

This update includes 4 security fixes. Below, we highlight fixes that were contributed by external researchers. Please see the Chrome Security Page for more information.

[$16000][332546345] Critical CVE-2024-4058: Type Confusion in ANGLE. Reported by Toan (suto) Pham and Bao (zx) Pham of Qrious Secure on 2024-04-02

[TBD][333182464] High CVE-2024-4059: Out of bounds read in V8 API. Reported by Eirik on 2024-04-08

[TBD][333420620] High CVE-2024-4060: Use after free in Dawn. Reported by wgslfuzz on 2024-04-09

We would also like to thank all security researchers who worked with us during the development cycle to prevent security bugs from ever reaching the stable channel.

As usual, our ongoing internal security work was responsible for a wide range of fixes:

[336329431] Various fixes from internal audits, fuzzing and other initiatives

Many of our security bugs are detected using AddressSanitizer, MemorySanitizer, UndefinedBehaviorSanitizer, Control Flow Integrity, libFuzzer, or AFL.

Interested in switching release channels? Find out how here. If you find a new issue, please let us know by filing a bug. The community help forum is also a great place to reach out for help or learn about common issues.

Daniel Yip

Google Chrome

Google launches new Chromebook devices and announces features for schools globally.

Google launches new Chromebook devices and announces features for schools globally.

Learn more about Google's AI-powered tools that can help you explore the outdoors more.

Learn more about Google's AI-powered tools that can help you explore the outdoors more.

For Earth Day, we’re sharing how Chromebooks can help support sustainability goals at your school by building a repair program and more.

For Earth Day, we’re sharing how Chromebooks can help support sustainability goals at your school by building a repair program and more.

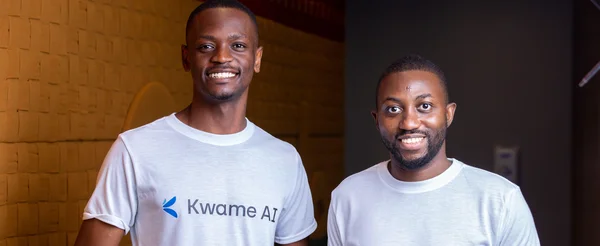

Google and startups collaborating to bring AI solutions to sustainability problems

Google and startups collaborating to bring AI solutions to sustainability problems

The new Growth Academy: AI for Education program provides edtech startups with hands-on Google mentorship and technical support.

The new Growth Academy: AI for Education program provides edtech startups with hands-on Google mentorship and technical support.